AI in Healthcare Moves From Hype to Hospital Workflows

AI in healthcare is shifting rapidly from glossy demos to tools that touch real patients, clinicians and operating rooms. Over the past few weeks, a wave of announcements has highlighted that shift: a precision oncology engine that aims to compress cancer treatment planning from as long as four weeks to a single day, the world’s first open-source medical video LLM specialized for surgical and nursing footage, and a clinician-tuned version of ChatGPT offered free to verified medical professionals. At the same time, new research on clinical AI safety, hallucinations and ideological bias underscores how fragile trust remains. Together, these developments mark a turning point: AI is increasingly embedded in the cognitive workflow of medicine (triage, reasoning, documentation and imaging interpretation) rather than sitting on the sidelines as a novelty. The challenge now is less about what models can do and more about how safely, fairly and controllably they can do it at scale.

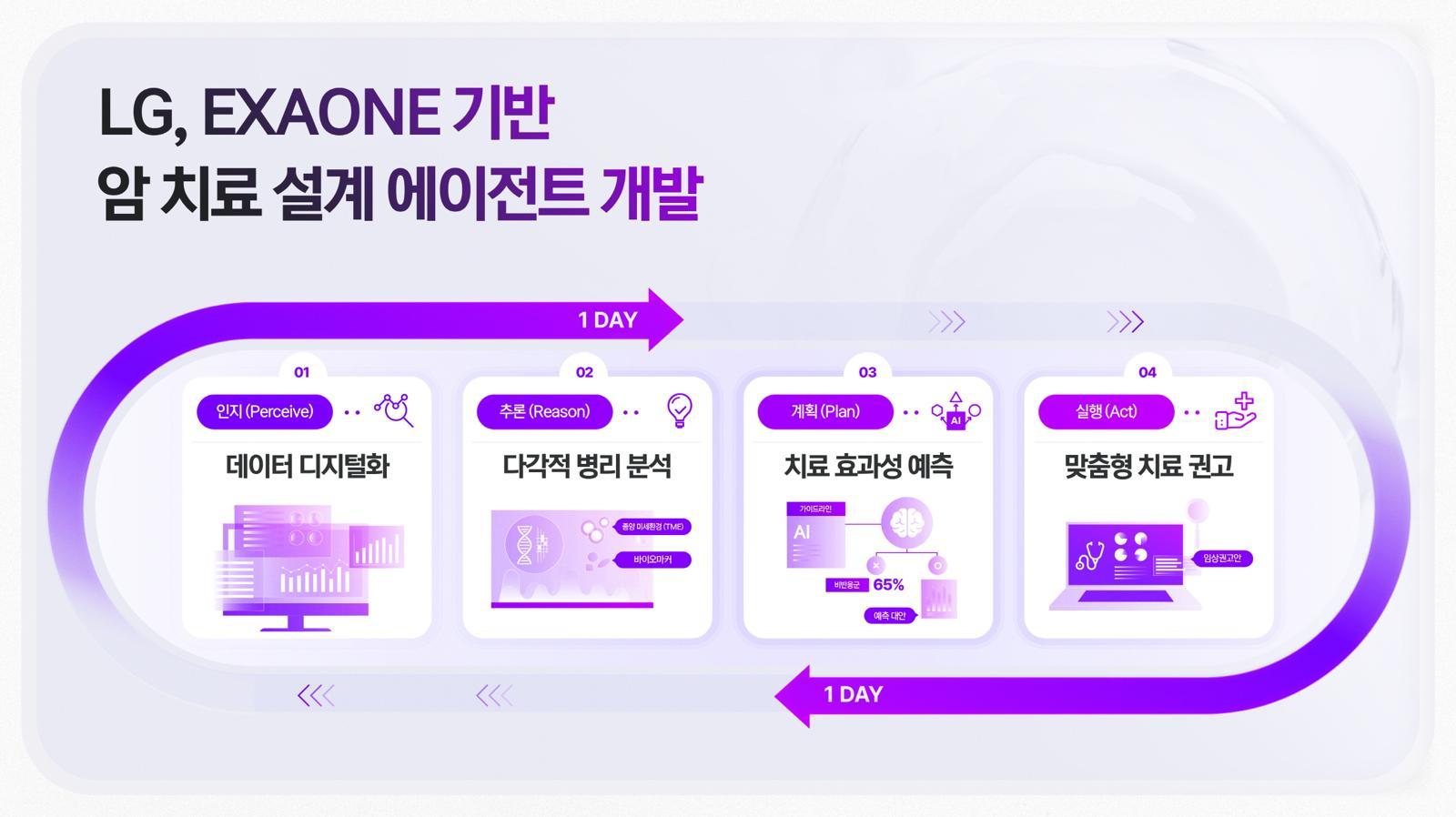

One-Day AI Cancer Treatment Planning, With Humans Still in Charge

LG’s new agentic AI for cancer care, built on its EXAONE platform and co-developed with Vanderbilt University Medical Center, aims to be a “brain” for precision oncology. The system links a pathology model, EXAONE Path, which predicts oncogene activity from a single histopathology image in under a minute, to downstream agents that help design a treatment strategy within a single day, a process that can traditionally take up to four weeks. By inferring oncogene activity at what is described as “world-class” accuracy, the platform could reduce unnecessary tests and quickly identify patients who may benefit from targeted therapies. Crucially, this is not an autonomous oncologist. The AI handles pattern recognition and scenario generation, while medical specialists review tissue findings, validate biomarker predictions, choose the actual therapies and discuss options with patients. The promise here is not to replace tumor boards but to give them a tighter, data-rich starting point so they can move from information gathering to decision-making much faster.

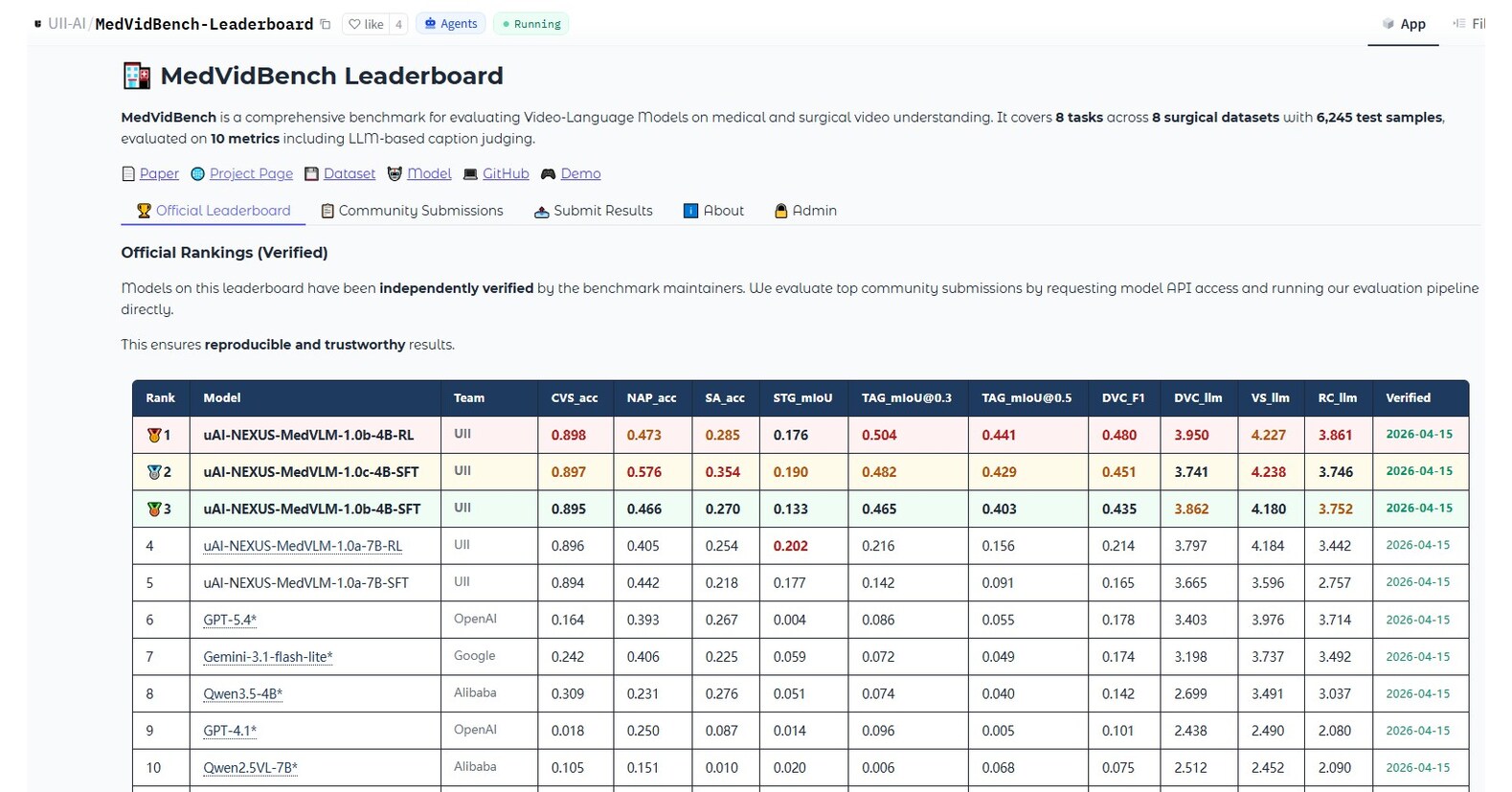

The First Open-Source Medical Video LLM and a Free ChatGPT for Clinicians

While oncology AI targets pathology slides, a new medical video LLM is tackling the flood of moving images in modern care. United Imaging Intelligence’s uAI NEXUS MedVLM is trained on 531,850 video–instruction pairs across eight scenarios, from robotic and laparoscopic surgery to endoscopy, open surgery and nursing care. Although it comes in only 4B- and 7B-parameter versions, it is reported to outperform much larger general-purpose models such as GPT-5.4 and Gemini 3.1 by a wide margin on tasks such as surgical safety assessment, spatio-temporal action localization and video report generation, and it is fully open-source. That openness, plus a public benchmark and leaderboard, matters for hospitals that need transparency and local validation of clinical tools. In parallel, OpenAI is making ChatGPT for clinicians available at no cost to verified medical professionals, aiming to support documentation, clinical reasoning and patient communication. Together, these moves push AI deeper into day-to-day workflows, from operating rooms to clinic notes, while enabling broader research and scrutiny.

Clinical AI Safety, Hallucinations and Ideological Bias

As these tools reach real patients, clinical AI safety is under the microscope. A recent evaluation of large language models on real-world clinician chat transcripts highlights that performance in controlled tests does not guarantee reliability in authentic triage, documentation and patient communication. Hospital leaders cite hallucination management and AI agent reliability as core bottlenecks for deployment. Fundamental research shows hallucinations are baked into how language models learn: models are statistically prone to produce confident falsehoods about rare facts and one-off details, and standard accuracy-focused evaluation can even reward this behavior, because a guess sometimes earns credit while an honest “I don’t know” never does. Fairness is another emerging fault line. A University of Queensland study found that political personas assigned to LLMs shifted how they moderated hateful content, revealing ideological bias in moderation behavior. Meanwhile, EPFL’s work using a multimodal AI “digital twin” to mimic dyslexia shows both the power and risk of modeling neurodiversity: systems may need tailoring to cognitive profiles, but they can also misrepresent or overlook diverse users if not carefully validated.
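The point about accuracy-focused evaluation rewarding confident guesses can be illustrated with a little arithmetic. The sketch below is a simplified illustration, not the method of any study mentioned here: the function names, the one-point reward for a correct answer and the `wrong_penalty` parameter are all assumptions chosen for clarity. It compares the expected score of answering against the zero score of abstaining.

```python
def expected_score(p_correct: float, wrong_penalty: float = 0.0) -> float:
    """Expected score of answering when the model is right with probability p_correct.

    Assumed scoring scheme (hypothetical): a correct answer earns 1 point,
    a wrong answer costs `wrong_penalty` points, and abstaining earns 0.
    """
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def best_policy(p_correct: float, wrong_penalty: float = 0.0) -> str:
    """Return 'guess' if answering beats abstaining in expectation, else 'abstain'."""
    return "guess" if expected_score(p_correct, wrong_penalty) > 0.0 else "abstain"


if __name__ == "__main__":
    # Compare plain accuracy (no penalty) with a scheme that penalizes wrong answers.
    for p in (0.05, 0.25, 0.60):
        print(f"p={p:.2f}  accuracy-only: {best_policy(p, 0.0)}  "
              f"penalized: {best_policy(p, 1.0)}")
```

Under plain accuracy scoring, guessing is the better policy even when the model is right only 5% of the time, since a wrong answer costs nothing. Once a wrong answer carries a one-point penalty, guessing pays off only when the model is more likely right than wrong, which is one reason evaluation design matters for clinical deployments.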

What Patients Should Expect Next: Augmented, Not Automated, Care

In the near term, patients are likely to feel AI’s presence less as a talking robot and more as a quieter, pervasive assistant. Cancer teams might arrive at personalized treatment plans faster because pathology AI has pre-screened slides and prioritized likely biomarkers. Surgeons and nurses could benefit from a medical video LLM that flags safety issues, auto-generates structured reports, or supports training and simulation. Clinicians may lean on tools like ChatGPT for clinicians to draft letters, summarize complex histories and explore guideline-consistent options. Yet the fundamental dynamics of care will not flip. Clinical AI safety concerns—hallucinations, biased behavior, lack of transparency and control—mean that human clinicians must remain the final decision-makers, especially for diagnosis, consent and complex trade-offs. In practice, the best-case scenario is a division of labor: AI handles much of the cognitive heavy lifting and administrative grind, while humans focus on judgment, empathy and accountability.