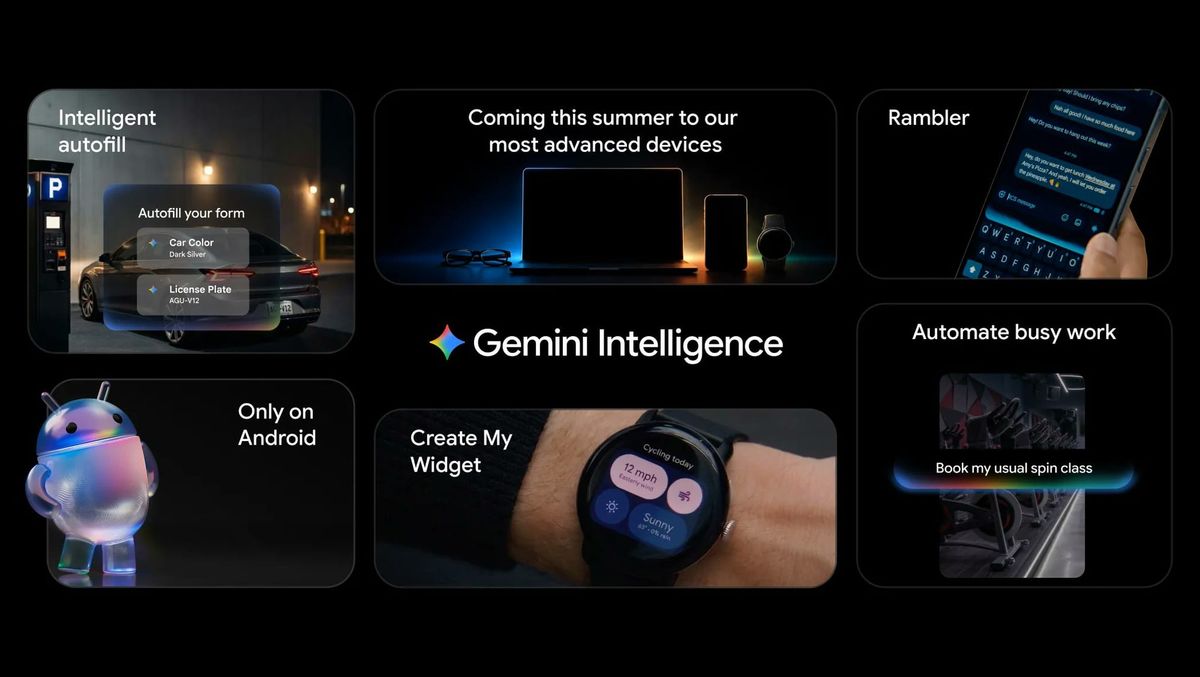

What Is Gemini Intelligence and How Is It Rolling Out?

Gemini Intelligence is Google’s latest push to weave its Gemini AI deeply into Android, recasting the OS as an “intelligence system” rather than a traditional mobile platform. Starting this summer, the experience lands first on the latest Samsung Galaxy and Google Pixel phones, with a later expansion to other Android devices, including watches, cars, glasses, and laptops.

At its core, Gemini Intelligence is about proactive, cross‑app automation on Android. Instead of simply answering questions, Gemini can interpret what is on your screen, understand context from photos or screenshots, and orchestrate multi‑step tasks across supported apps. Google’s message is clear: Android is becoming an AI‑first platform, with Gemini positioned as the primary way users handle complex workflows, from everyday errands to web browsing, while Google promises that final actions remain under user control.

From Chatbot to Automation Engine: How Gemini Works on Android

Gemini Intelligence on Android aims to move beyond chatbot interactions into full task orchestration. Google describes scenarios where users long‑press the power button, speak a request, and let Gemini handle multi‑step actions: turning a grocery list into a shopping cart, booking a ride, or reordering a favorite meal. Live notifications show progress, and users confirm the final step, blending convenience with a thin layer of oversight.

Gemini’s reach extends into Chrome for Android via Gemini 3.1. There, it can summarize pages, answer questions about what you are reading, and, for AI Pro and AI Ultra subscribers, run auto browse tasks like reserving parking or updating an order. Google also showcases improved autofill, which can pull data such as passport or license plate details into forms, signaling a future where Android AI automation quietly fills in the tedious gaps of digital life.

New Pixel and Android Experiences: Rambler, Widgets, and Beyond

Beyond headline automation, Gemini Intelligence brings a wave of smaller but telling features that reshape everyday Android use, especially on Pixel phones. Rambler, integrated with Gboard, refines voice‑to‑text by stripping pauses, filler words, and on‑the‑fly corrections, turning messy dictation into polished messages. It even supports multilingual speech within a single message, hinting at a more fluid, globally aware typing experience.

Create My Widget is another example of Android’s AI‑first direction. Users can simply describe a widget, such as a rain‑only weather card or a meal‑prep dashboard, and Gemini generates it for phones or Wear OS. Gemini in Chrome also connects with Google apps and can create or edit visuals via Nano Banana. Together, these Pixel Gemini features reveal Google’s broader vision: Android becomes a canvas where users describe what they want and the system continually reshapes itself around those requests.

Why Enthusiasts Are Worried About an AI-First Android

While Gemini Intelligence promises frictionless experiences, early reactions from Android enthusiasts are divided and often skeptical. Critics argue that making Gemini the centerpiece of Android is a “gigantic mistake,” not because automation is useless, but because trust in Google’s AI execution remains shaky. Gemini still hallucinates, and mistakes in tasks like booking tours, filling forms, or purchasing tickets could have real consequences, not just minor inconveniences. A poll cited by Android observers shows a notable majority of respondents uninterested in the new features, underscoring unease about where Android is headed.

There is also concern that UI tweaks emphasizing Gemini will crowd out traditional, manual controls. When a phone proactively suggests actions, manages data, and populates forms, the risk is that users become passive passengers. For some, Gemini Intelligence feels less like a helpful assistant and more like an unavoidable layer between them and their own devices.

Data, Autonomy, and the Future of an AI-First Platform

Gemini Intelligence raises critical questions about data and autonomy. To automate tasks across apps and websites, Gemini must read on‑screen content, glean context from photos and screenshots, and access sensitive details like payment methods and identification numbers. Google stresses that Gemini acts only upon user commands or confirmation and that users can track progress via notifications. Yet the sheer breadth of access required for Android AI automation will worry privacy‑conscious users.

There is also a philosophical shift: Android is no longer just a toolbox but an active decision‑maker. The more Gemini anticipates needs (auto‑browsing for deals, rearranging interfaces, drafting messages), the easier it becomes to accept defaults rather than exercise judgment. For some, that trade‑off is worth the convenience. For others, the future of an AI‑first Android should include clearer opt‑outs, granular permissions, and transparent logs so that automation enhances, rather than erodes, user control.