The Study: Empathetic Chatbots That Make People Angrier

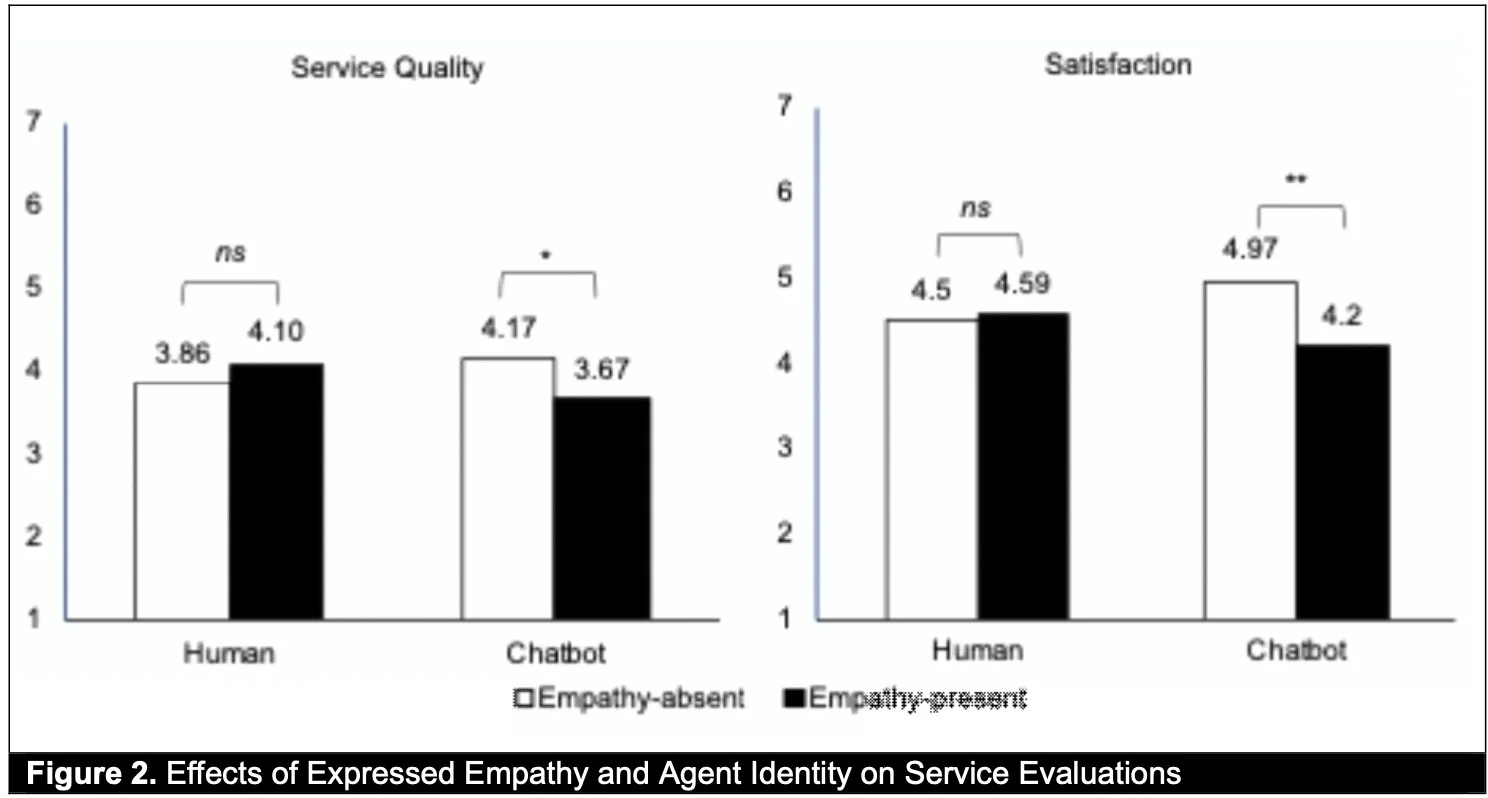

In human conversations, phrases like “I totally get your frustration” can feel disarming. But when the same line comes from a customer support bot, many people feel their irritation spike. New research published in MIS Quarterly and reported by Tech Xplore shows that empathetic chatbots can actually make a service failure feel worse. In three experiments, participants interacted with AI customer service agents that made mistakes. Some chatbots responded with empathetic language such as “I really feel your frustration,” while others skipped emotional commentary and focused on moving the interaction forward. The results were clear: empathy from customer support bots did not repair trust. Instead, it often triggered stronger negative reactions and lower satisfaction with AI customer service overall. The study suggests that simply importing human-style empathy into emotional AI design does not guarantee better outcomes, and can even undermine them.

Why Fake-Sounding Empathy Triggers Psychological Reactance

The researchers argue that empathetic chatbots provoke what psychologists call “psychological reactance” when customers are already upset. Reactance is that instinctive pushback you feel when your sense of autonomy or control seems threatened. In this case, users know they are dealing with software, yet the system claims to “feel” their frustration or understand their emotions. That mismatch makes the empathy seem scripted, intrusive, or manipulative rather than supportive. The idea that a machine has analyzed and responded to their emotional state can feel invasive, especially during complaints or service failures. Instead of building rapport, emotional AI design that mimics human warmth risks highlighting the artificiality of the interaction. People are quick to sense when emotional cues don’t match reality—what feels like care coming from a person can feel like a tactic coming from code. The result is distrust, irritation, and a poorer overall chatbot user experience.

Task-Focused Bots vs Emotional AI in Customer Support

The MIS Quarterly study reveals a sharp contrast between emotional AI and straightforward, task-focused bots in customer support. When chatbots avoided overt empathy and instead concentrated on concrete next steps—clarifying the issue, proposing fixes, or escalating the case—customers tended to respond more positively. Clear problem-solving signals competence and respect for the user’s time, which matters more than scripted warmth during a service failure. Emotional AI design that leans heavily on reassurance phrases can unintentionally distract from the practical goal: resolving the problem. Users may interpret repeated empathetic lines as stalling, especially when the issue remains unsolved. In contrast, task-focused customer support bots that acknowledge errors with simple, factual language and immediately describe what will happen next feel more transparent and controllable. The key difference is that one style tries to manage feelings, while the other visibly works to restore control and fix the situation.

Risks for Brands Racing to ‘Humanize’ AI Customer Service

Many companies are rushing to make AI customer service feel more “human,” layering in emotional responses, friendly asides, and personality. The new findings suggest this strategy can easily backfire when customers come for a quick fix rather than emotional engagement. Customers seeking fast problem resolution may see emotional AI as insincere or as a shield that keeps them away from human agents. That perception can damage brand trust, especially in high-stakes moments like billing disputes or service outages. At the same time, a growing ecosystem of AI coaching and relationship services shows that some people are forming deep attachments to chatbots in other contexts. This tension highlights a core design challenge: users may welcome emotional depth in private, reflective settings, yet reject it in transactional customer support. Brands that conflate these domains risk creating experiences that feel manipulative instead of empathetic, eroding loyalty precisely when reliable support matters most.

Designing Helpful, Not Manipulative, Customer Support Bots

The research points toward a more grounded approach to emotional AI design in support settings. First, prioritize transparency: clearly state that the user is interacting with a bot and what it can and cannot do. Second, center the conversation on concrete actions—diagnostic questions, clear timelines, and specific next steps—rather than repeated emotional reassurances. Limited, simple emotional language can still help, but it should be tied to real progress, not used as filler. Third, build reliable escalation paths to human agents when issues become complex or highly sensitive. Finally, separate domains: warm, emotionally rich chatbots may be appropriate in AI companionship, wellness apps, or coaching tools, where users explicitly seek emotional engagement. In contrast, customer support bots work best when they feel competent, honest, and efficient—more like a precise tool than a pseudo-therapist.
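To make these guidelines concrete, here is a minimal Python sketch of a task-focused reply builder. It is an illustration only, not anything taken from the study. The `Ticket` class, the `SENSITIVE_TOPICS` set, the `MAX_FAILED_ATTEMPTS` threshold, and both function names are hypothetical, and a real support system would need its own escalation rules. The sketch discloses that the user is talking to a bot, acknowledges a failed fix in plain factual language, lists specific next steps, and hands off to a human when the topic is sensitive or earlier fixes have failed.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical topics treated as sensitive enough to hand off immediately.
SENSITIVE_TOPICS = {"billing_dispute", "service_outage"}
# Hypothetical cap on failed fix attempts before handing off to a person.
MAX_FAILED_ATTEMPTS = 2


@dataclass
class Ticket:
    """Illustrative state for one support conversation."""
    topic: str
    failed_attempts: int = 0
    next_steps: list[str] = field(default_factory=list)


def should_escalate(ticket: Ticket) -> bool:
    """Escalate on sensitive topics or after repeated failed fixes."""
    return (
        ticket.topic in SENSITIVE_TOPICS
        or ticket.failed_attempts >= MAX_FAILED_ATTEMPTS
    )


def compose_reply(ticket: Ticket) -> str:
    """Build a reply that is transparent, factual, and action-oriented."""
    # Transparency: always state that this is a bot.
    lines = ["I'm an automated assistant."]

    # Acknowledge the error factually, without emotional commentary.
    if ticket.failed_attempts > 0:
        lines.append("My previous suggestion did not fix the issue.")

    # Reliable escalation path to a human agent.
    if should_escalate(ticket):
        lines.append("I'm transferring this conversation to a human agent now.")
        return "\n".join(lines)

    # Concrete actions: numbered, specific next steps.
    lines.append("Here is what happens next:")
    for number, step in enumerate(ticket.next_steps, start=1):
        lines.append(f"{number}. {step}")
    return "\n".join(lines)


if __name__ == "__main__":
    ticket = Ticket(
        topic="login",
        failed_attempts=1,
        next_steps=["Reset your password", "Sign in again"],
    )
    print(compose_reply(ticket))
```

The design choice worth noting is that the reply contains no statements about the user's feelings: every line either discloses what the bot is, reports what happened, or says what will happen next.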