The New Gap: Code Output Without Real Understanding

AI coding tools have turned software development into a high-speed production line. Junior engineers are reportedly completing tasks up to 55% faster with AI assistance, and tools like Claude Code are spreading rapidly across teams. Yet this speed hides a structural problem: generating code has been decoupled from understanding it. Senior engineers still bring years of system context to evaluating AI suggestions. For many newer developers, though, the AI is effectively the author. Clean, test-passing pull requests can conceal subtle race conditions or security flaws that the submitter cannot explain, because they never reasoned through the solution themselves. This is where the loss of debugging skill begins. When developers skip the struggle of tracing logic, probing edge cases, and systematically narrowing down failures, they stop building the mental models that later make debugging instinctive.

Code Review Problems and the Rise of the ‘AI Expert Beginner’

Engineering leaders describe a new kind of code review problem: pull requests that look polished but reveal a comprehension void when questioned. The “expert beginner” concept has been updated for the AI era. Where the original expert beginner stagnated out of ego, today’s version is fast, conscientious, and AI-augmented, yet unable to articulate why the code works. Reviewers find that juniors, encouraged to lean on AI coding tools, are less equipped to challenge or validate the generated logic. They paste in large changes, adjust until tests are green, and move on. The imbalance is stark: AI accelerates code generation, but the experience required to validate that code safely has not kept pace. Seniors who avoid AI risk falling behind on evolving patterns, while those who adopt it must work harder in review to catch issues the original developer cannot meaningfully discuss. Both dynamics amplify the perception that developer skills are deteriorating across teams.

Under Pressure, Developers Skip Audits and Let Skills Atrophy

Workload and headcount pressure are pushing developers to trust AI coding tools more than they should. Interviews describe teams being nudged, or outright ordered, to use AI agents for broad, sweeping changes across codebases. The volume of modifications is so high that careful audits become unrealistic, and developers admit that unaudited code is moving into production simply to keep pace. Instead of strengthening their debugging habits, they are outsourcing both the implementation and much of the reasoning. Over time, their debugging skills erode: people report forgetting frameworks they once mastered, likening it to no longer remembering phone numbers after smartphones arrived. Prompting the model replaces deliberate problem-solving, and the cognitive load shifts from understanding systems to wrangling AI output. What was sold as freeing humans for “higher-level” work is, for many, producing a sense of de-skilling and a growing anxiety that their hard-won technical abilities are quietly fading.

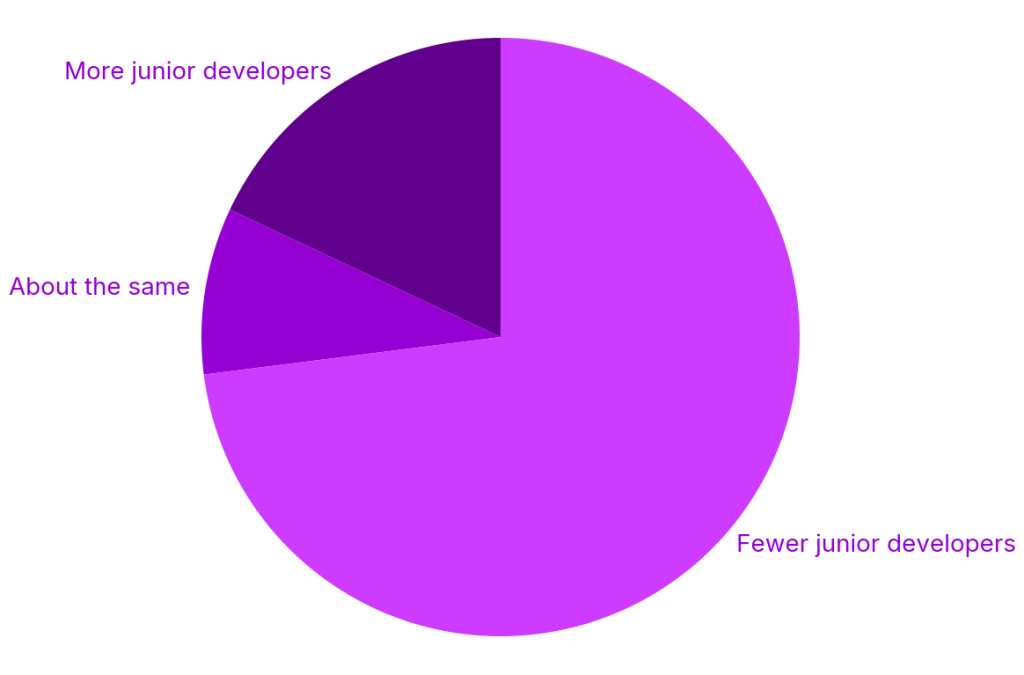

Stalled Talent Pipelines and Mounting Technical Debt

The organizational context compounds the problem. Entry-level roles are shrinking, internships are declining, and many companies are leaning on a “seniors with AI” model instead of growing junior talent. With fewer structured learning opportunities, juniors rely even more heavily on AI coding tools, reinforcing the cycle of shallow understanding. At the same time, leaders and developers warn of a creeping rat’s nest of technical debt. Large AI-generated changes are hard to reason about, let alone test thoroughly. Security, performance, and maintainability problems may surface only when systems need significant updates, and by then the original authors may not truly understand the code they merged. This combination of deteriorating developer skills and opaque, AI-shaped codebases points toward a future of brittle systems and too few engineers who can methodically debug, refactor, and rescue them from their own automated past.