From Autocomplete to Agent Fleets in Enterprise AI Coding

Enterprise AI coding is moving from single-user helpers to orchestrated fleets of agents that behave like virtual development teams. Traditional AI developer tools, such as Copilot-style autocomplete and chat-based code helpers, focus on suggesting snippets or answering questions in the IDE. They live inside a developer’s workflow but rarely act beyond the local context of a file or session. In contrast, the new wave of coding automation bots is built to operate at organisational scale. These systems can write, run, and maintain code across multiple repositories, integrate with CI/CD and ticketing tools, and coordinate multi-step workflows that continue after a human leaves their desk. Instead of just assisting keystrokes, they manage tasks, state, and permissions centrally. This shift is reshaping what “AI pair programming” means: from inline typing suggestions to fully managed dev bots that participate in planning, implementation, and operations alongside human engineers.

Inside Google’s Gemini Agent Platform for Enterprise Dev Bots

Google’s revamped Gemini Enterprise Agent Platform shows how far agentic AI has moved toward enterprise infrastructure. Built on Vertex AI, it combines model selection, tuning, and deployment with new capabilities for agent integration, security, DevOps, and orchestration. Organisations can manage an agent’s entire lifecycle: structuring agents into sub-networks with the Agent Development Kit, applying deeper reasoning to complex, multi-step work, and using Memory Bank so agents can share context and delegate efficiently. Critically, the platform focuses on governing fleets of agents, not just single assistants. Agent Identity assigns each agent a cryptographic ID, and a single control plane standardises identity, security, and auditing for both no-code and pro-code agents. Engineering leaders can stress-test agents with Agent Simulation before rollout, then publish them to the Gemini Enterprise app, where employees can run multiple agents in parallel on tasks such as inventory analysis or marketing projects, drawing on data from Workspace and other enterprise systems.

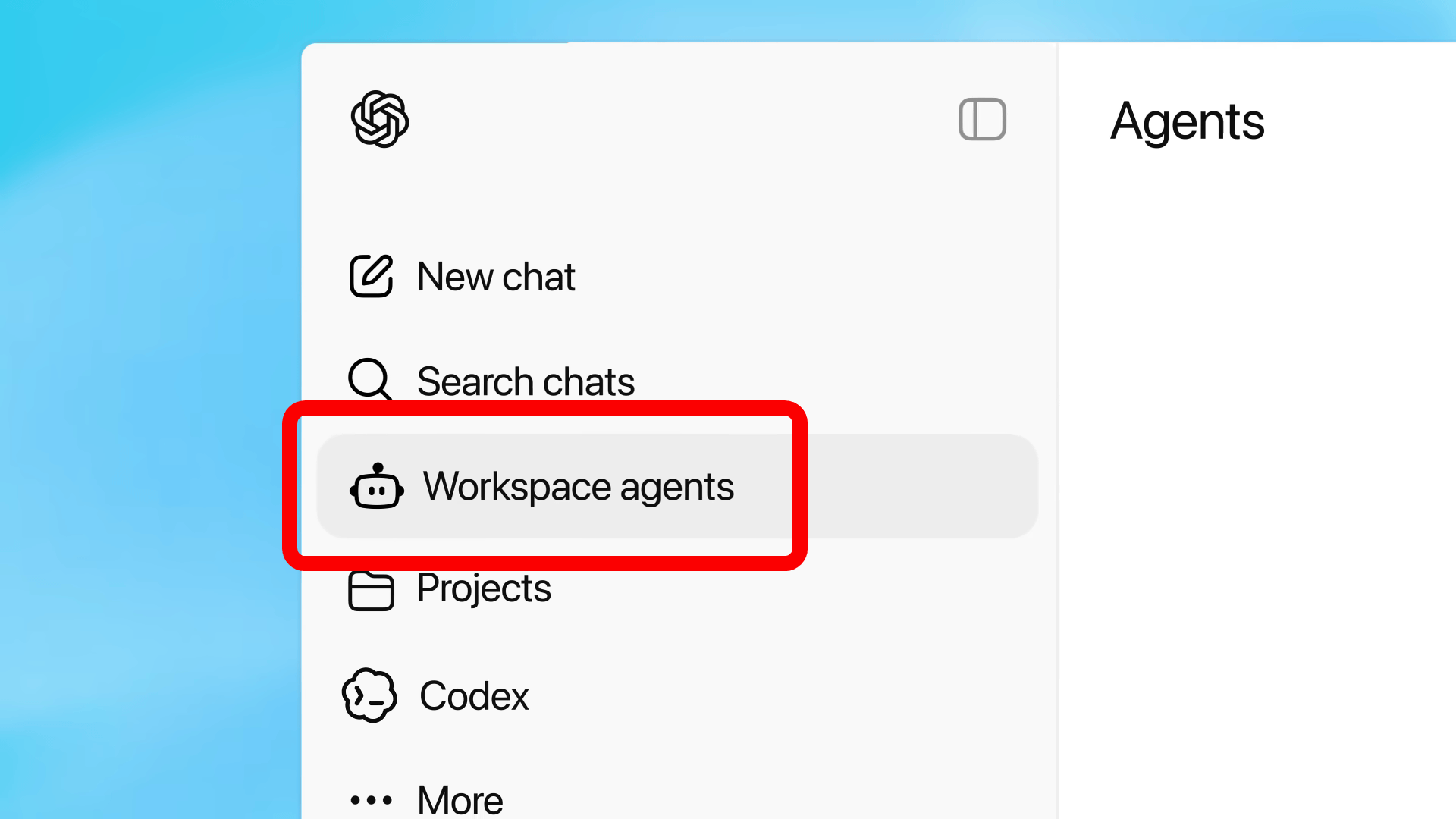

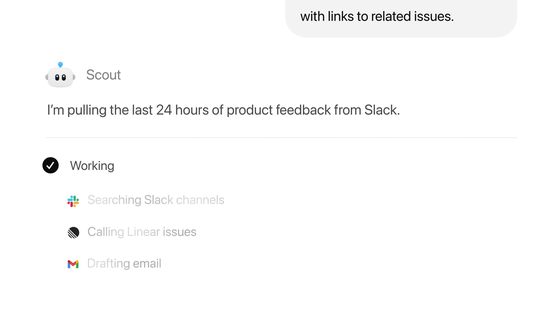

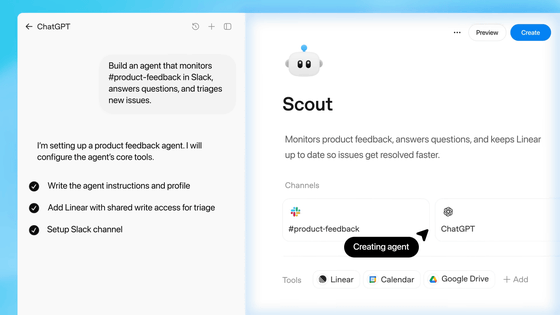

OpenAI Workspace Agents: From Code Generation to Always-On Workflows

OpenAI’s workspace agents extend ChatGPT from a conversational tool into an always-on operations layer for the enterprise. Evolved from GPTs and powered by Codex, OpenAI’s code generation engine, these agents automate recurring work such as creating reports, writing code, and replying to emails. They can follow team processes, request approvals, and orchestrate multi-step workflows that span documents, inboxes, and codebases. Unlike ad hoc chat sessions, workspace agents run in a persistent cloud environment with access to files, code, tools, and memory. They can write and execute code, use connected applications, and continue tasks while users are offline. OpenAI emphasises quick setup: a team describes a recurring workflow or uploads a file, and ChatGPT guides it through defining procedures, connecting tools, and adding skills. Fine-grained permissions let admins specify which tools and data each agent can use and require approval for sensitive operations such as editing spreadsheets or sending emails.
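To make that permission model concrete, here is a minimal Python sketch of a per-agent tool allowlist with an approval gate. It is not OpenAI’s configuration format or API: `AgentPolicy`, `authorize`, the `Decision` values, and the tool names are all hypothetical. Tools that were never granted are denied, and granted tools marked as sensitive pause until a named human approves.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, Optional


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical admin-defined policy for one agent (not any vendor's schema)."""
    agent_id: str
    allowed_tools: FrozenSet[str]                    # tools the agent may call at all
    approval_required: FrozenSet[str] = frozenset()  # subset that needs a human sign-off


def authorize(policy: AgentPolicy, tool: str, approved_by: Optional[str] = None) -> Decision:
    """Decide whether a single tool call may run right now."""
    if tool not in policy.allowed_tools:
        return Decision.DENY            # deny by default: tool was never granted
    if tool in policy.approval_required and approved_by is None:
        return Decision.NEEDS_APPROVAL  # sensitive tool: wait for a human
    return Decision.ALLOW


# Hypothetical agent that builds a weekly report from files and emails it out.
report_agent = AgentPolicy(
    agent_id="weekly-report-agent",
    allowed_tools=frozenset({"read_files", "edit_spreadsheet", "send_email"}),
    approval_required=frozenset({"edit_spreadsheet", "send_email"}),
)

print(authorize(report_agent, "read_files").value)                     # allow
print(authorize(report_agent, "send_email").value)                     # needs_approval
print(authorize(report_agent, "send_email", approved_by="ana").value)  # allow
print(authorize(report_agent, "merge_pull_request").value)             # deny
```

Denying anything not on the list means a misconfigured agent cannot quietly gain capabilities; widening its access becomes an explicit, reviewable policy change.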

Benefits, Risks, and Early Use Cases for Enterprise Dev Teams

For engineering organisations, these platforms promise productivity gains well beyond autocomplete. Enterprise AI coding agents can triage bugs, summarise large legacy codebases, and propose low-risk refactors, freeing developers to focus on architecture and critical paths. By centralising logic in managed agents rather than scattered scripts, teams can enforce consistent coding patterns, dependency policies, and documentation standards across projects. This power, however, introduces governance challenges. Autonomous agents with access to code, tools, and communication channels raise security and access-control questions: who defines guardrails, who approves risky operations, and how are changes logged? A misconfigured agent could create or merge problematic changes, expose data, or trigger unexpected workflows. Successful adopters are likely to start with constrained, well-audited use cases, such as internal tooling, test generation, or documentation updates, and expand access, approvals, and monitoring only as their processes mature.
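One way to enforce a constrained start is to scope which files an agent may change at each rollout stage and send anything outside that scope back to ordinary human review. The sketch below assumes hypothetical stage names and path patterns (`STAGE_SCOPES`, `out_of_scope`) and uses Python’s standard `fnmatch.fnmatchcase`; it illustrates the idea rather than any vendor feature.

```python
from fnmatch import fnmatchcase
from typing import Dict, List

# Hypothetical rollout stages; each later stage widens the paths an agent may touch.
# In fnmatch patterns "*" also matches "/", so "docs/*" covers nested files too.
STAGE_SCOPES: Dict[str, List[str]] = {
    "pilot": ["docs/*", "tests/*"],
    "internal_tooling": ["docs/*", "tests/*", "tools/*"],
}


def out_of_scope(stage: str, changed_paths: List[str]) -> List[str]:
    """Return the changed files that fall outside the agent's current stage."""
    patterns = STAGE_SCOPES[stage]
    return [
        path for path in changed_paths
        if not any(fnmatchcase(path, pattern) for pattern in patterns)
    ]


changed = ["docs/setup.md", "tests/test_api.py", "src/payments/api.py"]
violations = out_of_scope("pilot", changed)
if violations:
    # A CI job could fail here so the change falls back to normal human review.
    print("blocked:", violations)  # blocked: ['src/payments/api.py']
```

Promoting an agent to a wider stage is then a one-line, reviewable change to the scope table rather than an informal grant of access.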

What Engineering Leaders Should Demand from AI Agent Roadmaps

As AI developer tools evolve into coding automation bots, engineering leaders need to scrutinise vendor roadmaps through a governance lens. At minimum, platforms should offer detailed logging and audit trails showing which agent executed which action, against which repositories or systems, and under whose authority. Google’s single governance control plane and Agent Identity, and OpenAI’s approval workflows for sensitive operations, are early signals of this direction. Integration is equally critical: agent platforms should plug into existing CI/CD pipelines, issue trackers, and observability stacks so that agent-initiated changes follow the same review and deployment paths as human ones. Leaders should also look for role-based access control, configuration-as-code for agent policies, sandboxed testing environments, and a clear separation between experimentation and production. The long-term winners are likely to be platforms that balance raw automation power with enterprise-grade safety, transparency, and interoperability across the full software development lifecycle.
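The audit-trail requirement can be illustrated with an append-only log in which every entry records the agent, the action, the target system, and the authority it ran under. This is a minimal sketch assuming a JSON Lines format and hypothetical names (`AuditRecord`, `record`, `append`, `query`, and identifiers such as `github:acme/payments`); real platforms define their own schemas and storage.

```python
import io
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Iterable, List, Optional, TextIO


@dataclass(frozen=True)
class AuditRecord:
    """One agent action. Field names are illustrative, not any vendor's schema."""
    agent_id: str       # which agent executed the action
    action: str         # what it did, e.g. "open_pull_request"
    target: str         # repository or system it acted against
    authorized_by: str  # human or policy under whose authority it ran
    timestamp: str      # UTC time in ISO 8601


def record(agent_id: str, action: str, target: str, authorized_by: str) -> AuditRecord:
    """Build a record stamped with the current UTC time."""
    now = datetime.now(timezone.utc).isoformat()
    return AuditRecord(agent_id, action, target, authorized_by, now)


def append(log: TextIO, entry: AuditRecord) -> None:
    """Write one record as a JSON line; earlier lines are never rewritten."""
    log.write(json.dumps(asdict(entry), sort_keys=True) + "\n")


def query(lines: Iterable[str], agent_id: str, target: Optional[str] = None) -> List[AuditRecord]:
    """Return one agent's records, optionally limited to a single target."""
    results = []
    for line in lines:
        if not line.strip():
            continue
        entry = AuditRecord(**json.loads(line))
        if entry.agent_id == agent_id and (target is None or entry.target == target):
            results.append(entry)
    return results


# In-memory stream for the example; a real deployment would use durable storage.
log = io.StringIO()
append(log, record("triage-bot", "label_issue", "github:acme/payments", "policy:triage-v2"))
append(log, record("refactor-bot", "open_pull_request", "github:acme/payments", "user:ana"))
append(log, record("triage-bot", "comment_issue", "github:acme/web", "policy:triage-v2"))

log.seek(0)
for entry in query(log, "triage-bot", target="github:acme/payments"):
    print(entry.action, entry.authorized_by)  # label_issue policy:triage-v2
```

Recording both policy-driven authority (`policy:triage-v2`) and human approvers (`user:ana`) in the same field lets a reviewer answer “under whose authority” for every action with a single query.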