From Operating System to Intelligence System

Google is reframing Android 17 as an “intelligence system” rather than a traditional operating system, and the Gemini AI assistant sits at the center of that shift. Instead of you managing every tap and swipe, Android is being redesigned so Gemini-powered agents can quietly take on more of the work. This is the clearest move yet toward AI-first smartphone design, where intelligence is built into the core experience rather than sprinkled on top as a few clever features. Google describes Gemini Intelligence as a system that “learns and works for you,” signaling a future where your phone behaves less like a toolbox and more like a capable helper. The goal is consistency: one assistant that understands you across devices and apps, so its features feel unified whether you’re on your phone, in the car, or using wearables.

Gemini Takes Direct Control of Your Apps

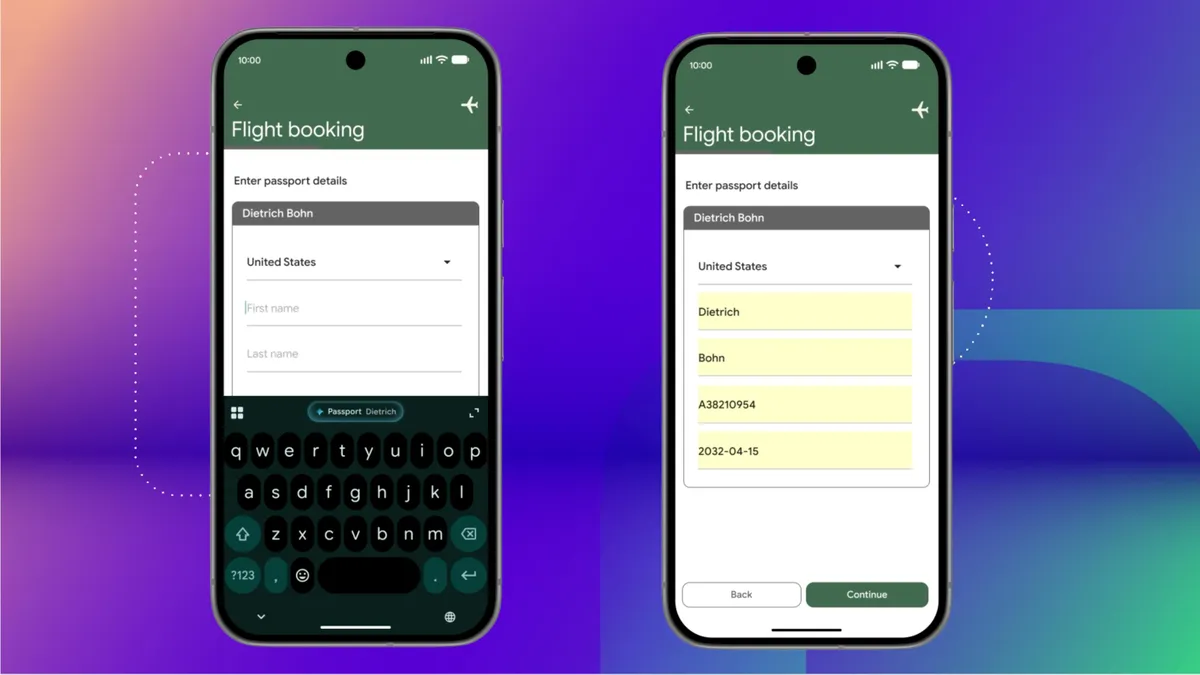

The most important change isn’t what Gemini can answer, but what it can now do inside your apps. With Android app control baked into Android 17, Gemini Intelligence can manage everyday tasks across services you already use. You can ask it to turn a grocery list in your notes app into a shopping order, or to schedule an appointment with a highly rated dentist without manually hopping between search, maps, and your calendar. It can autofill complex forms using data from connected apps like Google Drive, pulling in details such as your driver’s license or passport number with a tap. You can even snap a brochure and have Gemini find a tour that fits your group. Instead of serving up instructions or links, the assistant begins to act like a trusted delegate that carries out the steps for you.

Natural Language Becomes the New Universal Remote

As Gemini gets deeper hooks into Android, natural language starts to feel like a universal remote for your phone. Voice commands are no longer limited to setting alarms or sending quick messages. You can ask Gemini to plan a party, book appointments, or hunt down hard-to-find items online, and it will use tools like Chrome Auto Browse to work through the details on your behalf. The same intelligence upgrades classic features you already know: Intelligent Autofill now uses AI to handle sensitive, multi-field forms, while Create My Widget lets you describe the home-screen widget you want, such as one showing temperatures in two units, and have Gemini build it. Across these experiences, the Gemini AI assistant is shifting from reactive Q&A to proactive task execution, letting you offload multistep workflows with simple, conversational commands.

An AI-First Phone You Mostly Don’t See

For an AI-first smartphone to work, it can’t constantly get in your way. Google’s Material Expressive updates for Android 17 are designed so Gemini “melts into the background” and appears only when needed. Subtle visual cues show when the assistant is listening, thinking, or acting on your behalf, but the interface avoids flashy animations that distract from the task at hand. This understated design is critical to building trust; if Gemini is going to fill forms, place orders, and manage apps, users need clear, calm feedback about what it’s doing. At the same time, Gemini Intelligence is extending across Android Auto, Wear OS, and even smart glasses, hinting at an ecosystem where a single assistant quietly follows you from screen to screen. The net effect: less visible AI, more invisible help.

What This Means for Your Daily Phone Use

For everyday users, the impact of Gemini’s expanded control over Android apps will show up as friction that simply disappears. Routine chores such as booking services, filling in repetitive details, and generating useful widgets shift from manual tapping to quick requests. Over time, your phone becomes less of a grid of icons and more of a conversational gateway to actions. This also clarifies Google’s broader strategy: make AI the default way you interact with your device, not an optional extra. As Gemini Intelligence rolls out first to premium Android phones like upcoming Pixel and Galaxy models, expectations around what an AI smartphone should do are likely to change. If Google executes well, you may find yourself spending less time thinking about which app to open and more time simply telling your phone what you want done and letting Gemini quietly handle the rest.