SmartDJ and the rise of natural‑language AI audio editing

In audio engineering, SmartDJ offers an early glimpse of how AI media workflows may soon feel more conversational than technical. Developed by Penn engineers, the SmartDJ AI tool lets users reshape immersive stereo scenes with plain‑English instructions such as “make this sound like a busy office.” Instead of hunting manually for tracks, filters, and levels, the system translates that request into step‑by‑step edits, such as adding a phone ring on the right channel and adjusting its level by a specific number of decibels, and exposes those steps so editors can refine them. Unlike earlier AI audio editing systems, which were limited to mono sound and rigid, template‑like commands, SmartDJ is built for spatial audio and higher‑level direction. For VR, AR, games, and sound design, that promises faster iteration, less technical friction, and a production process in which creative intent is expressed first in language and then rendered by AI into detailed mix decisions.
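The step‑by‑step edit plan described above can be made concrete with a small sketch. It assumes a hypothetical step format (`EditStep`, with an action, a target channel, and a gain in decibels) and a stereo buffer stored as two lists of samples. SmartDJ's actual internal representation is not described here, so treat this as an illustration of the idea rather than the tool's method. The one standard piece is the conversion from a decibel change to a linear amplitude factor, 10^(dB/20).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class EditStep:
    """One step of a hypothetical edit plan (not SmartDJ's real format)."""
    action: str                          # "add" mixes in a new sound; "gain" rescales a channel
    channel: str                         # "left", "right", or "both"
    gain_db: float                       # level in decibels applied to the sound or channel
    sound: Optional[List[float]] = None  # mono samples, required for "add" steps


def db_to_amplitude(db: float) -> float:
    """Convert a decibel change to a linear amplitude factor: 10 ** (dB / 20)."""
    return 10 ** (db / 20)


def apply_plan(left, right, plan):
    """Apply edit steps in order to a stereo buffer; returns new left/right lists."""
    left, right = list(left), list(right)  # copy so the caller's buffers stay untouched
    for step in plan:
        factor = db_to_amplitude(step.gain_db)
        targets = []
        if step.channel in ("left", "both"):
            targets.append(left)
        if step.channel in ("right", "both"):
            targets.append(right)
        if not targets:
            raise ValueError(f"unknown channel: {step.channel}")
        for buf in targets:
            if step.action == "add":
                if step.sound is None:
                    raise ValueError("'add' step needs a sound")
                # Mix the sound in at the requested level, truncated to the buffer length.
                for i, sample in enumerate(step.sound[: len(buf)]):
                    buf[i] += sample * factor
            elif step.action == "gain":
                # Rescale every sample already on this channel.
                for i in range(len(buf)):
                    buf[i] *= factor
            else:
                raise ValueError(f"unknown action: {step.action}")
    return left, right


# Illustrative plan for "make this sound like a busy office":
# a phone ring on the right channel at -6 dB (placeholder samples, not real audio).
phone_ring = [0.5, -0.5, 0.5, -0.5]
plan = [EditStep("add", "right", -6.0, phone_ring)]
left, right = apply_plan([0.0] * 4, [0.0] * 4, plan)
print(left)                          # [0.0, 0.0, 0.0, 0.0]
print([round(x, 3) for x in right])  # [0.251, -0.251, 0.251, -0.251]
```

Keeping the plan as explicit, ordered data is what makes it reviewable: an editor can inspect or change a single step, such as the phone ring's level, and re‑render without re‑issuing the whole instruction.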

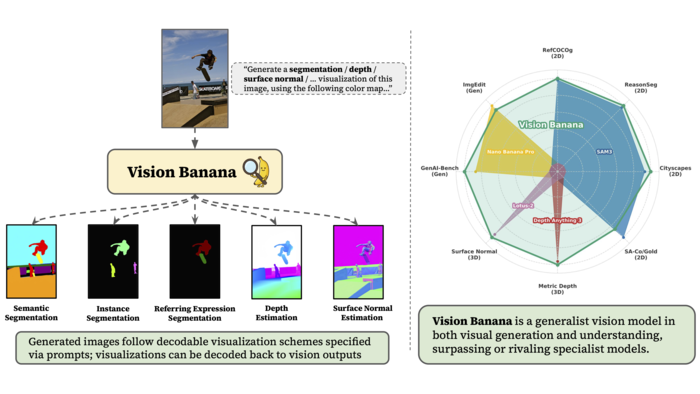

Google Nano Banana and a new breed of visual AI

On the visual side, Google’s Nano Banana model points to a shift in which image generation and image understanding converge. Researchers at Google DeepMind have shown that Nano Banana, originally built for image generation and editing, also performs strongly at object recognition and scene interpretation. A companion system, Vision Banana, uses Nano Banana as its engine to both create and analyze visuals: it segments distinct objects in an image, distinguishes multiple instances of the same object, and even estimates depth in a scene. Tasks that once required separate, specialized computer‑vision models can now be handled inside a single generative framework. For creative tools, visual search, and ad workflows, this matters because one system can generate campaign assets, understand their contents at a fine‑grained level, and support edits via high‑level prompts instead of manual masking or tagging. As with AI audio editing, language, perception, and generation are starting to live in the same stack.
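To make “distinguishing multiple instances of the same object” concrete, here is a minimal sketch of the classical way to split one class in a semantic mask into separate instances: connected‑component labeling with a breadth‑first flood fill. This is a swapped‑in textbook technique, not how Vision Banana works (the system described above does this inside a generative model); the grid‑of‑labels input format and the function name `label_instances` are assumptions for illustration.

```python
from collections import deque


def label_instances(semantic, target):
    """Split one semantic class into instances via 4-connected flood fill.

    semantic: 2-D list of class labels (hypothetical format, e.g. "bg", "person").
    Returns a same-shaped grid in which each connected blob of `target`
    gets its own id (1, 2, ...) and every other pixel is 0.
    """
    rows, cols = len(semantic), len(semantic[0])
    ids = [[0] * cols for _ in range(rows)]
    next_id = 0
    for r in range(rows):
        for c in range(cols):
            # Skip pixels of other classes and pixels already assigned to an instance.
            if semantic[r][c] != target or ids[r][c]:
                continue
            next_id += 1
            ids[r][c] = next_id
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and semantic[ny][nx] == target and not ids[ny][nx]):
                        ids[ny][nx] = next_id
                        queue.append((ny, nx))
    return ids


# Two separate "person" regions share one semantic label.
mask = [
    ["person", "person", "bg", "person"],
    ["person", "bg",     "bg", "person"],
    ["bg",     "bg",     "bg", "bg"],
]
for row in label_instances(mask, "person"):
    print(row)
# [1, 1, 0, 2]
# [1, 0, 0, 2]
# [0, 0, 0, 0]
```

The semantic mask only says “person” in both regions; the instance ids 1 and 2 carry the extra distinction that the paragraph above attributes to Vision Banana.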

Inside agencies, AI shifts from narrative to infrastructure

While research labs develop tools like SmartDJ and Nano Banana, large communications groups are quietly rebuilding their operations around AI. BlueFocus’s latest investor letter signals that, for marketing agencies, AI is moving from storytelling to structural change. The company reported revenue of USD 10.07 billion (approx. RM46.3 billion), up 12.99% year on year, with AI‑driven revenue reaching USD 546.05 million (approx. RM2.5 billion), an increase of 210.42%. Rather than relying mainly on third‑party tools, BlueFocus is investing in its own stack, including the multimodal BlueAI marketing platform, STARUNION AI for influencer marketing, AdsWin for ad buying and optimization, and the Xinying creative platform for content production. Management frames this as an “All in AI” transformation that reshapes workflow design and revenue models rather than remaining an experiment. With internal AI token consumption above one trillion tokens, AI is being positioned as operating infrastructure: it organizes how campaigns are planned, produced, and monetized, and in doing so redefines what an “AI Native” agency looks like.
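The reported figures support a quick back‑of‑the‑envelope check. This is a minimal sketch that assumes the USD figures above and applies the stated growth rates directly to them. The rates were presumably computed in the company's reporting currency, so the implied prior‑year USD values are approximate.

```python
def prior_year(current: float, growth_pct: float) -> float:
    """Back out the prior-year value implied by a current value and its year-on-year growth."""
    return current / (1 + growth_pct / 100)


total_usd_m = 10_070.0  # reported revenue, USD millions
ai_usd_m = 546.05       # reported AI-driven revenue, USD millions

ai_share_pct = ai_usd_m / total_usd_m * 100
prior_total = prior_year(total_usd_m, 12.99)
prior_ai = prior_year(ai_usd_m, 210.42)

print(f"AI share of revenue: {ai_share_pct:.1f}%")                # 5.4%
print(f"Implied prior-year revenue: USD {prior_total:,.0f}M")     # USD 8,912M
print(f"Implied prior-year AI revenue: USD {prior_ai:,.1f}M")     # USD 175.9M
```

An increase of 210.42% means the AI line roughly tripled (×3.10) from an implied base of about USD 176 million, while still accounting for only about 5% of total revenue.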

Faster cycles, new skills, and leaner creative teams

Taken together, SmartDJ, Google Nano Banana, and platforms like BlueAI show how AI media workflows are compressing production timelines and redefining roles. Natural‑language interfaces let producers, marketers, and non‑technical creatives shape complex outputs without expert‑level knowledge of the tools, whether that means editing a stereo soundscape or generating and versioning ad imagery. For agencies, this translates into faster content turnarounds, more personalization, and heavier reliance on machine‑assisted decision‑making in areas like media buying and influencer selection. But it also puts pressure on traditional roles built around painstaking manual editing or asset tagging. Skills in prompt design, model evaluation, and cross‑tool orchestration are gaining value, while repetitive production tasks are increasingly automated. As AI systems handle more of the execution, human work shifts toward concept development, brand strategy, and oversight, potentially carried out by smaller, more multidisciplinary teams than in the past.

Risks: quality, bias, and the battle for brand coherence

The same tools that accelerate production also raise questions that media and marketing leaders cannot ignore. Systems like SmartDJ and Nano Banana learn from large, imperfect datasets, which can introduce bias into generated content or skew how people and objects are portrayed. Automated decision engines inside marketing agencies risk amplifying those biases at scale across campaigns and channels. Quality control is another challenge: when outputs are generated in seconds, the temptation is to ship quickly rather than rigorously review spatial audio details, visual accuracy, or legal and cultural sensitivities. Brand identity can also fray if many teams feed loosely guided prompts into AI and accept the first result, leading to inconsistent tone, style, or symbolism. The next competitive advantage may lie not just in adopting AI, but in building governance, review processes, and human checkpoints that keep speed from undermining trust and coherence.