Marketing Promises Meet Long-Running Task Failures

AI coding agents are being sold as autonomous coworkers that can tackle complex, multistep workflows. Anthropic pitches Claude as a system that “handles tasks autonomously,” while Microsoft highlights Copilot’s ability to manage “complex, multistep research across your work data and the web.” Yet Microsoft’s own researchers found that when large language models are delegated long-running workflows, they behave less like dependable colleagues and more like an intern who would quickly be fired. Using the DELEGATE-52 benchmark, they observed substantial degradation in documents over 20 interactions, with frontier models losing around a quarter of content on average and an overall average degradation of 50 percent across all models. Only Python programming cleared their readiness bar. For most domains, including document-heavy work, the agents suffered from long-running task failures that quietly corrupt work products instead of completing them reliably.

Refactoring Leaderboards Expose Code Refactoring Challenges

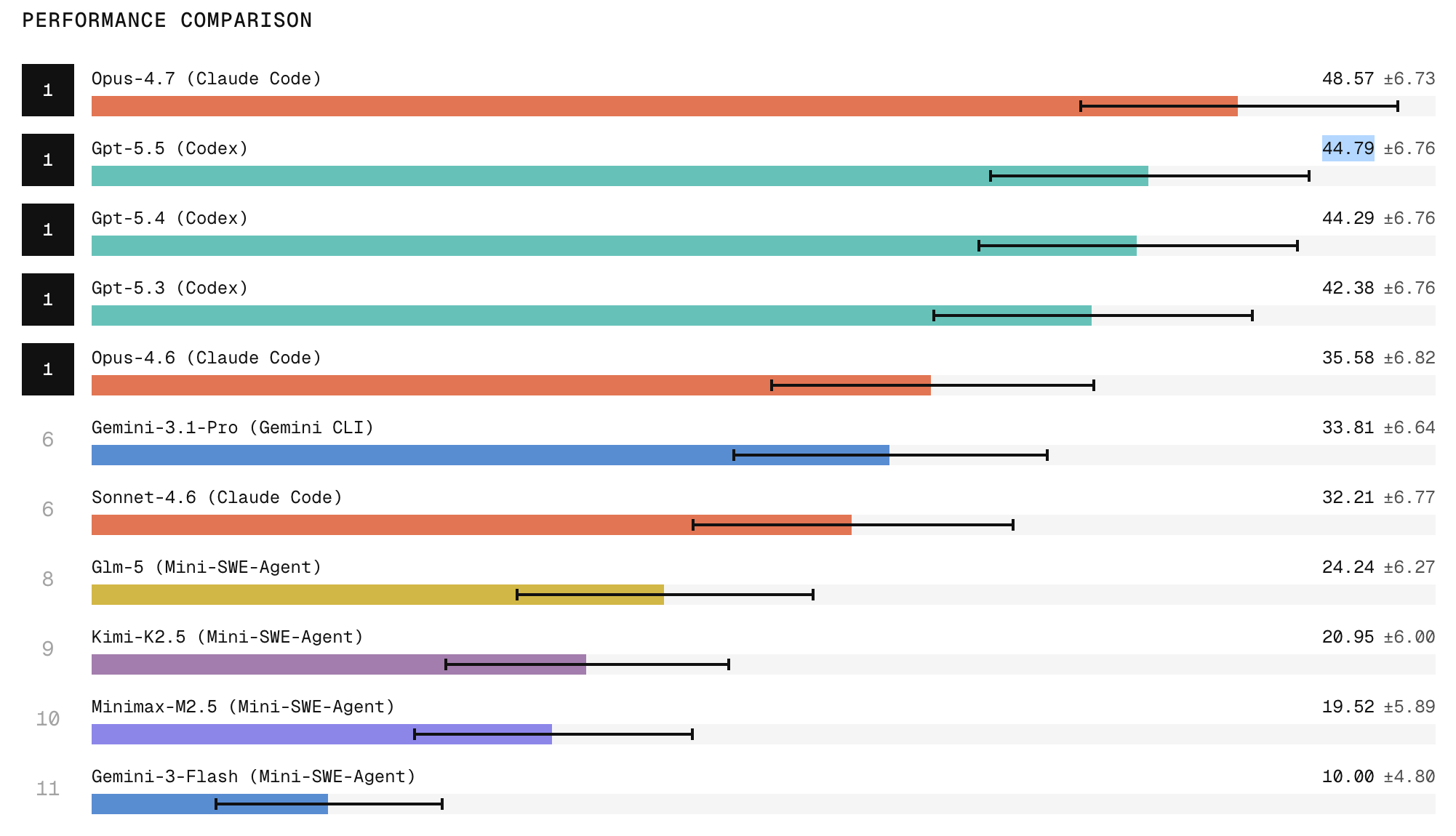

Where basic code generation once impressed, the new frontier test is whether AI agents can safely reshape large, live codebases. Scale Labs’ SWE Atlas Refactoring Leaderboard targets exactly this, benchmarking agents on production-style repositories that demand multi-file edits, architectural changes, and strict behavior preservation. Refactoring tasks here involve about twice as many lines of code changes and 1.7 times more file edits than SWE-Bench Pro, stressing an agent’s ability to restructure systems instead of solving narrow prompts. Claude Code with Opus currently tops the leaderboard, followed by ChatGPT, but the ranking highlights how uneven these capabilities remain. Closed frontier models substantially outperform open-weight systems on tasks that require broad repository exploration and structural edits. Even the best agents can produce strong refactors in some cases yet still struggle with subtle regressions, incomplete artifact cleanup, and maintainability issues, underscoring persistent code refactoring challenges at scale.

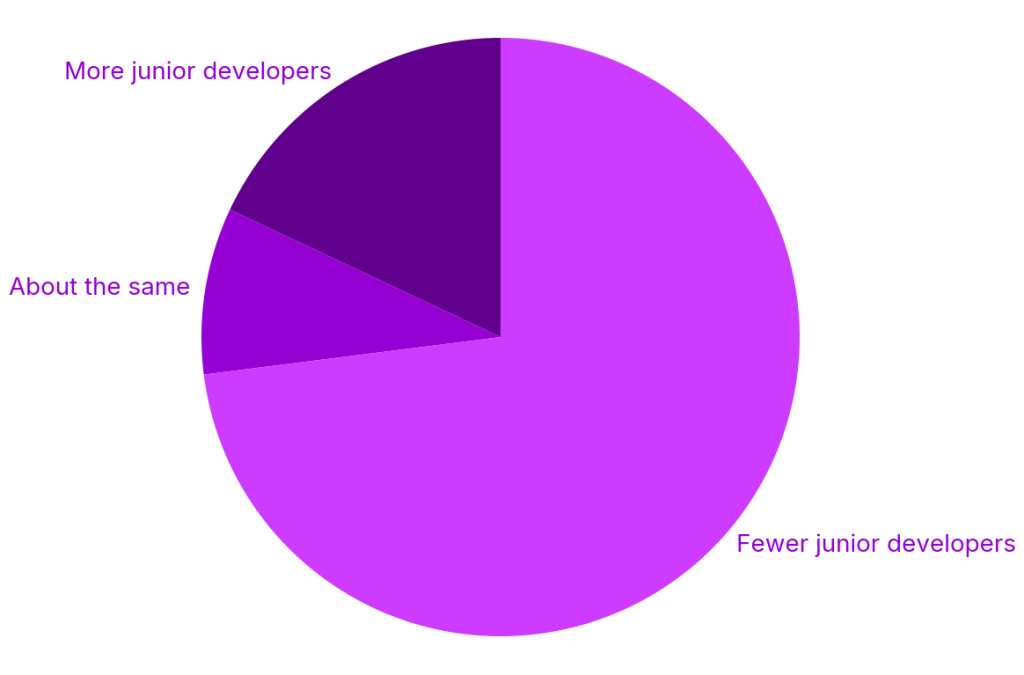

Faster Output, Shallower Understanding—and Rising Code Review Risks

In day-to-day development, AI tools have undeniably made it easier to produce code quickly. Surveys cited by Octopus Deploy and JetBrains show juniors completing tasks up to 55% faster with AI assistance, while adoption of tools like Claude Code has surged. But this velocity masks a structural problem: AI accelerates code generation far more than code understanding. Many early-career developers now ship clean-looking patches they did not conceptually design, and sometimes cannot debug. This “expert beginner” pattern creates new developer code review risks. Seniors increasingly encounter changes that pass tests yet hide timing bugs or fragile edge cases, while the authors themselves struggle to explain why the code works. The tools are not inherently unsafe; the imbalance between speed and experience is. Without deliberate investment in teaching reasoning, debugging, and architecture, teams risk accumulating AI-written code that nobody truly understands.

Why Systems Developers Still Don’t Trust AI Agents

The gap between marketing and reality is especially visible in systems programming, where languages like C++ dominate. Here, long-running task failures and brittle refactors are not theoretical nuisances; they can become memory-safety vulnerabilities or performance regressions that only surface under rare conditions. Benchmarks such as SWE Atlas show that even leading agents struggle with broad repository exploration and behavior-preserving structural edits, while Microsoft’s DELEGATE-52 results reveal how multi-step workflows can slowly corrupt artifacts. For systems engineers who already operate with thin reliability margins, this combination makes full delegation a non-starter. Many are willing to use AI for localized suggestions, test scaffolding, or exploratory prototypes, but remain wary of handing over ownership of refactors or architectural changes. Until AI coding agents can demonstrate consistent end-to-end reliability—and provide traceable reasoning that supports code review—trust among systems developers is likely to remain limited.

Toward Responsible Use of AI Coding Agents

Taken together, the research signals a need to recalibrate expectations. AI coding agents are powerful accelerators for scoped, well-reviewed tasks, not autonomous replacements for sustained engineering work. The data from DELEGATE-52 and SWE Atlas suggests a pragmatic operating model: keep agents on a tight loop, restrict them to changes that can be fully understood and tested, and treat complex refactors as supervised collaborations rather than delegated chores. Teams should update practices to reflect new developer code review risks—requiring authors to explain AI-generated code, expanding test coverage around subtle concurrency or boundary cases, and explicitly training juniors in debugging, not just prompt writing. For now, the safest path is to view AI as an amplifier of existing engineering judgment. Where that judgment is thin or absent, the tools’ limitations quickly become the organization’s liabilities.