The AI Productivity Hype Problem

Engineering leaders are hearing bold claims about AI development tools: faster coding, shorter cycles, and dramatic productivity gains. Yet most teams lack rigorous AI productivity metrics to verify whether these improvements are real, sustained, and visible in business outcomes. Industry figures are often cited together as if they described the same reality: controlled trials report significantly faster task completion, forecasts project future percentage gains, and surveys show that only a minority of teams report high productivity improvements today. These numbers cover different scopes, time horizons, and populations, yet finance and leadership teams are still expected to budget based on them. Without a consistent approach to measuring engineering ROI, it becomes easy to attribute any change, good or bad, to AI. This “AI washing” obscures which investments truly help teams deliver better software and which simply shift work or introduce new instability into the delivery pipeline.

What the DORA Framework Adds to AI ROI Measurement

Google Cloud’s DORA team addresses this gap with a structured framework that connects AI-assisted development to software delivery performance and, ultimately, to financial outcomes. The model starts from the premise that AI is an amplifier, not a standalone solution. Value flows through a chain of capabilities: internal platforms, version control practices, and AI-accessible internal data. These capabilities shape classic DORA metrics such as throughput and stability, which in turn affect developer experience, user experience, and finally financial value. The report proposes a standard ROI formula (value minus investment, divided by investment) while stressing that the results should be treated as high-uncertainty estimates that guide discussion, not as precise calculations. By grounding AI productivity metrics in an explicit value model, the DORA framework helps organisations move beyond anecdotal reports and evaluate AI development tools with the same discipline applied to other large-scale engineering investments.
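As a rough illustration, the ROI formula described above can be written as a small Python sketch. The function names, the `RoiEstimate` type, and all monetary figures are hypothetical and are not taken from the DORA report; the three-point range (low, expected, high) is one simple way, assumed here, to keep the uncertainty visible instead of reporting a single number.

```python
from dataclasses import dataclass


@dataclass
class RoiEstimate:
    """ROI under pessimistic, expected, and optimistic value assumptions."""
    low: float
    expected: float
    high: float


def roi(value: float, investment: float) -> float:
    """Standard ROI: (value - investment) / investment."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (value - investment) / investment


def roi_range(value_low: float, value_expected: float,
              value_high: float, investment: float) -> RoiEstimate:
    """Apply the same formula to three value scenarios (an assumed approach)."""
    return RoiEstimate(
        low=roi(value_low, investment),
        expected=roi(value_expected, investment),
        high=roi(value_high, investment),
    )


if __name__ == "__main__":
    # Hypothetical figures: 100,000 invested, estimated value between 80,000 and 260,000.
    estimate = roi_range(80_000, 150_000, 260_000, investment=100_000)
    print(f"ROI range: {estimate.low:.0%} to {estimate.high:.0%} "
          f"(expected {estimate.expected:.0%})")
```

Presenting the result as a range makes it harder to mistake the estimate for a precise calculation, which is in line with the report's caution.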

Strong Engineering Foundations Before AI Acceleration

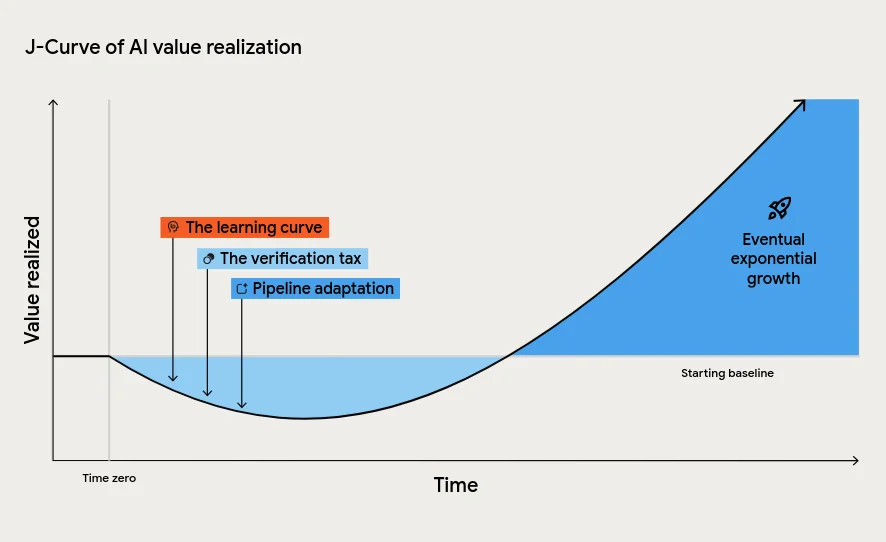

A central finding from the DORA research is that AI magnifies whatever already exists in the organisation. High-performing teams with clear workflows and robust platforms see their strengths amplified; struggling teams see greater chaos. The framework describes this with the J-curve of value realisation: productivity may dip initially because of the learning curve, the verification tax of reviewing AI-generated code, and the need to rework downstream processes such as testing and approvals. Leaders who interpret this temporary dip as failure risk cancelling initiatives just before long-term gains appear. The instability tax is another critical concept: increased throughput without matching improvements in automation and batch size can raise change failure rates and downtime costs. DORA’s guidance is clear: invest in continuous integration, automated testing, and small, frequent changes so that AI-driven speed does not overwhelm pipelines. In other words, strong engineering foundations are prerequisites for measurable AI ROI.
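To make the instability tax concrete, the following Python sketch compares the expected cost of failed changes before and after a throughput increase. The cost model (deployments multiplied by change failure rate multiplied by an average cost per failure) is a simplifying assumption made for this example, not a formula from the DORA report, and every number is hypothetical.

```python
def failure_cost(deployments: int, change_failure_rate: float,
                 cost_per_failure: float) -> float:
    """Expected cost of failed changes in a period (assumed simple model)."""
    if not 0.0 <= change_failure_rate <= 1.0:
        raise ValueError("change_failure_rate must be between 0 and 1")
    if deployments < 0 or cost_per_failure < 0:
        raise ValueError("deployments and cost_per_failure must be non-negative")
    return deployments * change_failure_rate * cost_per_failure


def instability_tax(before_cost: float, after_cost: float) -> float:
    """Extra failure cost incurred after the change in throughput."""
    return after_cost - before_cost


if __name__ == "__main__":
    # Hypothetical quarter before AI adoption.
    before = failure_cost(deployments=100, change_failure_rate=0.10,
                          cost_per_failure=2_000)
    # Hypothetical quarter after: more deployments, but a higher failure rate.
    after = failure_cost(deployments=150, change_failure_rate=0.15,
                         cost_per_failure=2_000)
    print(f"Failure cost before: {before:,.0f}")
    print(f"Failure cost after:  {after:,.0f}")
    print(f"Instability tax:     {instability_tax(before, after):,.0f}")
```

In this sketch throughput rises by half, yet the failure cost more than doubles because the failure rate rose with it. That extra cost would need to be set against any value gained from the higher throughput.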

Separating Real Gains from Perception Bias

Even when engineers feel more productive with AI tools, perception can diverge from reality. Some studies associate AI adoption with higher throughput but lower delivery stability; others show experienced developers taking longer to complete tasks when using large language models. Meanwhile, platforms like Navigara highlight how commit-level metrics can expose whether AI-era productivity claims actually appear in the codebase over time. The DORA framework complements such granular metrics by situating them in a broader value chain, from software delivery performance to business results. Together, these approaches help teams identify which AI investments genuinely clear bottlenecks and which merely shift effort or add review burden. By tracking AI productivity metrics continuously and comparing them against a pre-AI baseline, organisations can distinguish short-lived spikes from sustained improvement and ensure that AI development tools are judged by measured outcomes, not by optimistic narratives or short-term experiments.
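One way to compare against a pre-AI baseline is sketched below in Python. It assumes a weekly metric where higher is better (for example, deployments per week) and uses hypothetical defaults: a four-week window and a 10% uplift threshold. The function name and labels are invented for illustration, and a real analysis would also need to account for noise and seasonality.

```python
from statistics import mean
from typing import Sequence


def classify_trend(baseline: Sequence[float], post: Sequence[float],
                   window: int = 4, min_uplift: float = 0.10) -> str:
    """Label post-adoption data relative to the pre-AI baseline mean.

    Compares the first and last `window` observations after adoption
    against the baseline mean raised by `min_uplift`.
    """
    if not baseline:
        raise ValueError("baseline must not be empty")
    if window < 1 or len(post) < 2 * window:
        raise ValueError("post must contain at least two full windows")
    base = mean(baseline)
    if base <= 0:
        raise ValueError("baseline mean must be positive")

    threshold = base * (1 + min_uplift)
    early = mean(post[:window])
    recent = mean(post[-window:])

    if recent >= threshold:
        # Still above baseline in the latest window, even if early weeks
        # dipped (as a J-curve would suggest).
        return "sustained"
    if early >= threshold:
        # Gains appeared at first but faded back toward baseline.
        return "short-lived spike"
    return "no improvement"


if __name__ == "__main__":
    pre_ai = [10, 10, 10, 10]                  # hypothetical weekly values
    faded = [13, 13, 12, 12, 10, 10, 10, 10]   # early gain that disappears
    held = [12, 12, 12, 12, 12, 12, 12, 12]    # gain that persists
    print(classify_trend(pre_ai, faded))
    print(classify_trend(pre_ai, held))
```

Looking at the most recent window separately from the first weeks is what lets the check tell a fading spike from a lasting gain; a single average over the whole post-adoption period would blur the two.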