The Measurement Gap in AI-Assisted Development

Engineering leaders are hearing bold claims about AI coding tools: faster cycle times, higher throughput, and dramatic productivity jumps. GitHub reports 55% faster task completion with Copilot in controlled trials. Gartner forecasts 25–30% productivity gains by 2028 for teams that fully apply AI, while its current estimate for code-generation tools is 10%, and only 34% of surveyed teams report high productivity gains today. These numbers describe different things (narrow task speed, future forecasts, and self-reported outcomes), yet they are often treated as interchangeable. Meanwhile, the 2025 DORA research links AI use to higher throughput but lower delivery stability, and other studies show some experienced engineers actually slowing down when using large language models. Without a consistent approach to engineering performance measurement, finance and leadership teams are asked to budget on anecdotes. To justify the ROI of AI coding tools, organisations need software development metrics that tie code-level changes to long-term delivery performance, not just short-term speed stories.

Using DORA Metrics to Track Real AI Productivity

DORA metrics give teams a common language for engineering performance measurement: deployment frequency, lead time for changes, change failure rate, and mean time to restore. Google Cloud’s DORA group treats AI as an amplifier whose effects flow through these delivery metrics into business outcomes. Instead of asking, “How much code did the AI write?”, the group asks, “Which bottlenecks did it remove?” When AI coding tools are introduced, teams can observe whether deployment frequency rises without inflating change failure rate, and whether lead times shorten without increasing mean time to restore. The 2025 DORA research warns that AI adoption often increases individual effectiveness while destabilising delivery, as more code moves faster through pipelines that were never designed for this volume. By grounding the ROI of AI coding tools in DORA metrics, teams can distinguish between temporary spikes in activity and sustainable improvements that actually reach production reliably.
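The four metrics above can be derived from a simple log of production deployments. The following is a minimal sketch, not DORA's own tooling: the `Deployment` record, its field names, and the choice of median for lead time are assumptions made for illustration, and the sample data is invented.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when the change reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def _hours(delta: timedelta) -> float:
    return delta.total_seconds() / 3600


def dora_metrics(deployments: list[Deployment], period_days: int) -> dict[str, float]:
    """Compute the four DORA metrics over an observation window."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_times = [_hours(d.deployed_at - d.committed_at) for d in deployments]
    failures = [d for d in deployments if d.failed]
    restore_times = [
        _hours(d.restored_at - d.deployed_at)
        for d in failures
        if d.restored_at is not None
    ]
    return {
        "deployments_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        # Reported as 0.0 when no failure has been restored in the window.
        "mean_time_to_restore_hours": (
            sum(restore_times) / len(restore_times) if restore_times else 0.0
        ),
    }


# Invented sample: four deployments over a four-day window, one of which failed.
t0 = datetime(2025, 1, 6, 9, 0)
day = timedelta(days=1)
sample = [
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0, t0 + timedelta(hours=8), failed=True,
               restored_at=t0 + timedelta(hours=10)),
    Deployment(t0 + day, t0 + day + timedelta(hours=6)),
    Deployment(t0 + 2 * day, t0 + 2 * day + timedelta(hours=2)),
]
metrics = dora_metrics(sample, period_days=4)
print(metrics)
```

Running the same function over the window before and after an AI rollout gives two comparable snapshots, which is what makes it possible to tell a throughput gain from a stability loss.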

Foundations First: Why Systems Beat Individual Speed

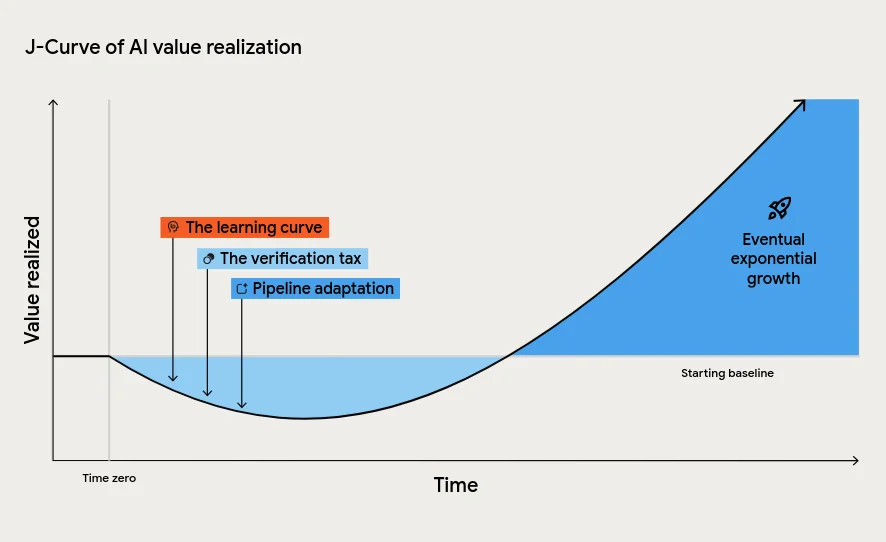

DORA’s latest report, “ROI of AI-Assisted Software Development”, argues that the biggest gains from AI come from strengthening the organisational system, not just giving developers new tools. A quality internal platform, clear workflows, strong version control practices, and AI-accessible internal data form the foundation. AI then amplifies what is already there, making high-performing organisations even more effective and magnifying dysfunction in struggling ones. The report also describes a J-curve of value realisation: most teams experience a temporary dip in productivity as they learn new workflows, pay a “verification tax” for reviewing AI-generated code, and adapt downstream processes such as testing and approvals. Leaders who expect instant returns may misinterpret this tuition cost of transformation as failure. To achieve a sustainable return on AI coding tools, teams must invest in engineering foundations, automated testing, and continuous integration so that any throughput gains registered by DORA metrics are durable rather than chaotic.

Measuring the ROI of AI Coding Tools with Value Models

Google Cloud’s DORA framework extends beyond raw software development metrics by linking engineering changes to financial outcomes through a value model. Value flows from seven capabilities, including internal platform quality, version control, and data accessibility, into improved DORA metrics, then into non-financial outcomes like developer and user experience, and finally into cost savings and revenue growth. The ROI model uses the standard formula: value minus investment, divided by investment. In a worked example for a 500-person engineering organisation with a fully loaded salary of USD 176,000 (approx. RM809,600) per head, the authors estimate a first-year return of USD 11.6 million (approx. RM53.4 million) against an investment of USD 8.4 million (approx. RM38.7 million), yielding a 39% ROI and payback in about eight months. The authors stress that these figures carry high uncertainty and should spark conversation, not serve as rigid targets, especially as AI inference costs fall and governance, verification, and upskilling become the dominant expenses.
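The ROI formula is simple enough to check by hand or in a few lines of code. The sketch below applies it to the rounded figures quoted above. The function names are illustrative, and the payback calculation assumes value accrues evenly across the year, which the report's J-curve suggests is a simplification.

```python
def roi(value: float, investment: float) -> float:
    """Standard ROI: (value - investment) / investment."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (value - investment) / investment


def payback_months(annual_value: float, investment: float) -> float:
    """Months until cumulative value covers the investment.

    Assumes value arrives in equal monthly instalments (a simplification).
    """
    if annual_value <= 0:
        raise ValueError("annual value must be positive")
    return investment / (annual_value / 12)


first_year_value = 11.6e6  # USD, rounded figure from the worked example
investment = 8.4e6         # USD, rounded figure from the worked example

print(f"ROI: {roi(first_year_value, investment):.1%}")
print(f"Payback: {payback_months(first_year_value, investment):.1f} months")
```

With these rounded inputs the formula gives roughly 38% and a payback of a little under nine months, close to the 39% and "about eight months" quoted. The small gap presumably comes from rounding in the published figures, which is one more reason to treat the result as a conversation starter, not a target.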

From Hype to Evidence: A Practical Measurement Plan

To move beyond AI hype, start with a clear pre-AI baseline of your DORA metrics and complementary measures like Engineering Throughput Value (ETV), a per-commit metric designed to test AI-era productivity claims directly against your codebase. Tools such as Navigara score each team’s commits against their own history, highlighting real changes in throughput once AI is introduced. Then pilot AI coding tools in a limited scope, tracking deployment frequency, lead time, change failure rate, and mean time to restore, while also monitoring instability costs. Expect the J-curve: productivity may dip as verification practices and pipelines adapt. Use this period to strengthen automated tests, CI/CD, and small-batch delivery so increased code volume does not overwhelm your system. Finally, translate observed metric shifts into value using a structured ROI model, ensuring that claims of AI-driven performance improvements are backed by measurable, repeatable evidence rather than opinion.
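The baseline-versus-pilot comparison in this plan can be sketched as a small guardrail check: throughput should improve without stability getting worse. Everything here is an assumption for illustration, including the metric key names, the 10% tolerance, and the sample numbers. It is not part of DORA's or any vendor's tooling.

```python
def relative_change(baseline: dict[str, float], pilot: dict[str, float]) -> dict[str, float]:
    """Fractional change of each metric from baseline to pilot."""
    changes = {}
    for name, base in baseline.items():
        if base == 0:
            raise ValueError(f"baseline for {name!r} is zero; cannot compute change")
        changes[name] = (pilot[name] - base) / base
    return changes


def assess(changes: dict[str, float], tolerance: float = 0.10) -> str:
    """Classify a pilot using throughput and stability guardrails.

    `tolerance` is a hypothetical threshold for how much stability
    metrics may worsen before the gain is considered unsustainable.
    """
    throughput_up = (
        changes["deployment_frequency"] > 0 and changes["lead_time_hours"] < 0
    )
    stability_held = (
        changes["change_failure_rate"] <= tolerance
        and changes["time_to_restore_hours"] <= tolerance
    )
    if throughput_up and stability_held:
        return "sustainable gain"
    if throughput_up:
        return "faster but less stable"
    return "no throughput gain yet"


# Invented numbers: a pre-AI baseline and a pilot team's first quarter.
baseline = {"deployment_frequency": 2.0, "lead_time_hours": 20.0,
            "change_failure_rate": 0.10, "time_to_restore_hours": 4.0}
pilot = {"deployment_frequency": 2.6, "lead_time_hours": 16.0,
         "change_failure_rate": 0.14, "time_to_restore_hours": 4.2}

changes = relative_change(baseline, pilot)
verdict = assess(changes)
print(changes)
print(verdict)
```

In this invented case deployments are up and lead time is down, but change failure rate has risen by about 40%, so the check reports a faster but less stable pipeline. That pattern matches the instability the DORA research warns about, and it signals that testing and CI need attention before the tools are rolled out more widely.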