A Low-Latency AI Model Built for Scale

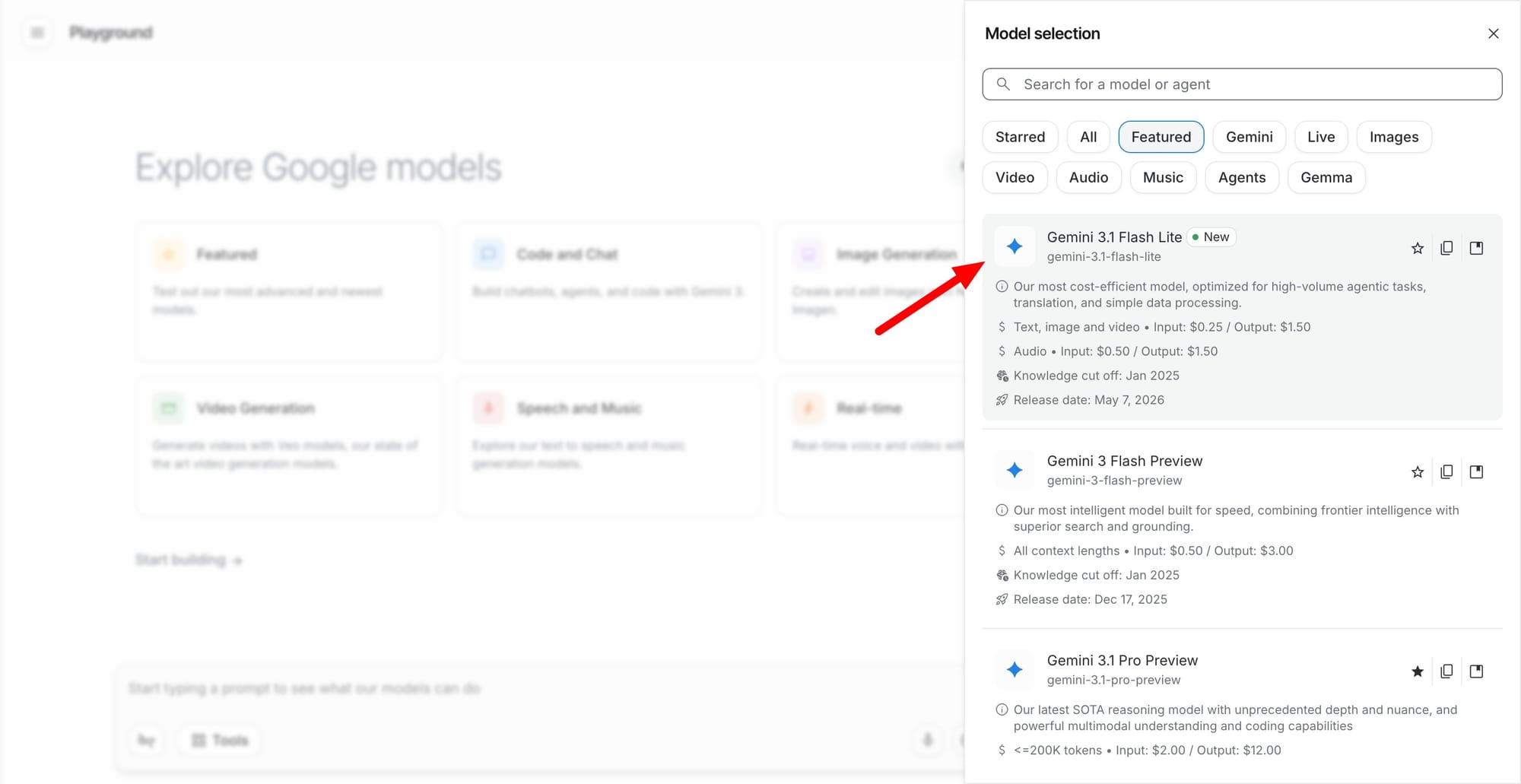

Gemini 3.1 Flash-Lite is the newest member of Google’s Gemini 3 series, now generally available through Google Cloud AI. The model is explicitly tuned for low-latency, high-volume processing, targeting engineering teams, customer support platforms, creative tools, and financial services that depend on rapid decision-making. Google positions Flash-Lite as its fastest and most cost-efficient Gemini 3 model to date, emphasizing reduced response times even under heavy concurrent loads. For lightweight tasks such as classification, responses arrive in well under a second, while full reply generation maintains a p95 latency of around 1.8 seconds. This performance profile is designed for applications that must serve thousands or millions of requests without bottlenecks. By combining speed and efficiency, Gemini 3.1 Flash-Lite aims to give developers and enterprises a practical alternative to larger, more resource-intensive models when throughput and responsiveness are the top priorities.
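
For readers unfamiliar with the metric, a p95 latency of 1.8 seconds means that 95% of requests finish in 1.8 seconds or less, so a handful of slow outliers does not move the number. The sketch below shows one common way to compute it, the nearest-rank method. The `percentile` helper and the latency samples are illustrative assumptions, not Google's measurement methodology or published data.

```python
import math

def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]

# Hypothetical reply-generation latencies in seconds (made up for illustration).
latencies = [0.9, 1.1, 1.0, 1.2, 0.8, 1.3, 1.1, 1.0, 1.4, 1.2,
             1.0, 0.9, 1.5, 1.1, 1.6, 1.2, 1.0, 1.7, 1.8, 3.2]

p95 = percentile(latencies, 95)
slowest = max(latencies)
print(f"p95 = {p95} s, slowest request = {slowest} s")
```

In this made-up sample, the single 3.2-second outlier sits above the p95 value, which is why teams serving high request volumes tend to track tail percentiles rather than averages or maximums.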

Multimodal Processing and Tool Calling Capabilities

Beyond raw speed, Gemini 3.1 Flash-Lite brings multimodal intelligence to high-volume processing environments. The model can handle both text and image input, allowing developers to build workflows that span natural language understanding, visual analysis, and content generation within a single low-latency AI model. Early adopters are using Flash-Lite for agentic tasks such as tool calling, orchestration, and automation of repetitive processes. In practice, this means an AI agent can receive a user request, call external tools or APIs, process images or documents, and generate a coherent answer, all while keeping response times tight. These capabilities are particularly attractive for real-time developer tools, automated quality checks, and customer service chat systems that must interpret mixed media inputs. By consolidating text and image processing with robust tool integration, Flash-Lite simplifies architecture and reduces the need for multiple specialized models.
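
The request, tool call, answer flow described above can be sketched as a small loop. This is a minimal illustration under stated assumptions, not the Gemini API: `stub_model` stands in for a real model call, and `lookup_order`, `ModelTurn`, `TOOLS`, and `run_agent` are hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

@dataclass
class ModelTurn:
    """One step from the (stubbed) model: a tool request or a final answer."""
    tool_name: Optional[str] = None
    tool_args: dict = field(default_factory=dict)
    answer: Optional[str] = None

def lookup_order(order_id: str) -> dict:
    """Hypothetical external tool; a real one would call an API or database."""
    return {"order_id": order_id, "status": "shipped"}

# Registry mapping tool names the model may request to Python callables.
TOOLS: dict[str, Callable[..., dict]] = {"lookup_order": lookup_order}

def stub_model(history: list[dict]) -> ModelTurn:
    """Stand-in for a model call (assumption): asks for one tool, then answers."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        return ModelTurn(tool_name="lookup_order", tool_args={"order_id": "A123"})
    status = tool_results[-1]["content"]["status"]
    return ModelTurn(answer=f"Order A123 is {status}.")

def run_agent(user_request: str, model=stub_model, max_steps: int = 5) -> str:
    """Loop until the model returns an answer, running requested tools in between."""
    history = [{"role": "user", "content": user_request}]
    for _ in range(max_steps):
        turn = model(history)
        if turn.answer is not None:
            return turn.answer
        tool = TOOLS.get(turn.tool_name)
        if tool is None:
            raise KeyError(f"unknown tool: {turn.tool_name}")
        result = tool(**turn.tool_args)
        # Feed the tool result back so the next model step can use it.
        history.append({"role": "tool", "name": turn.tool_name, "content": result})
    raise RuntimeError("agent did not finish within max_steps")

reply = run_agent("Where is my order A123?")
print(reply)
```

Each pass through the loop costs one model round trip, so per-call latency is multiplied by the number of steps. That is the practical reason a fast model matters for agentic workloads.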

Balancing Speed, Cost, and Cognitive Performance

Gemini 3.1 Flash-Lite is designed around a sharper trade-off between speed, cost, and cognitive performance compared with previous Gemini iterations. While it is lighter than flagship models, it delivers sufficient reasoning and language capabilities for a wide range of enterprise use cases, particularly those where milliseconds matter more than maximal depth of understanding. Industry users such as JetBrains, Gladly, and Ramp report that Flash-Lite enables them to operate at scale without sacrificing reliability or quality of output. The model’s efficient architecture helps reduce computational overhead, which can translate into lower infrastructure requirements on Google Cloud AI. This makes it attractive for teams that need to serve continuous streams of requests—such as code suggestions, content moderation, or transactional support—while keeping operational complexity in check. Flash-Lite effectively targets the middle ground where practical performance and budget-conscious deployment intersect.

Enabling Faster AI Agents and Enterprise Automation

For developers building AI agents and automated workflows, Gemini 3.1 Flash-Lite offers a foundation optimized for responsiveness. Its sub-second classification and consistent p95 latency of around 1.8 seconds for full reply generation under high concurrency allow agents to feel more interactive and reliable, even when serving large user bases. This responsiveness is critical for customer service bots, intelligent routing systems, real-time analytics, and developer assistants that must respond quickly to maintain user trust. Tool calling support further enhances automation, enabling agents to coordinate multiple services and datasets without adding significant delay. Because the model is available across Google Cloud, organizations can integrate it into existing pipelines and managed services, accelerating deployment. By combining low latency, high-volume processing, and multimodal intelligence, Gemini 3.1 Flash-Lite positions itself as a core building block for next-generation AI applications that demand both speed and scale.