Real-World GPT-5.5 Pricing: When Efficiency Claims Meet Billing Reality

OpenRouter’s April 2026 analysis has turned GPT-5.5 pricing from a theoretical concern into a concrete line item. After customers migrated from GPT-5.4, their effective costs on GPT-5.5 rose by 49 to 92 percent, depending on the prompt-length band. That is far more than a mild pricing tweak, and it directly affects production AI expenses for teams whose budgets assumed the promised efficiency gains would soften the higher list prices. For prompts under 2,000 tokens, average cost per million tokens climbed from USD 4.89 (approx. RM22.50) to USD 9.37 (approx. RM43.10), and longer bands rose by 49 to 85 percent. These figures cut against OpenAI’s efficiency messaging, which emphasises shorter answers and per-token latency on par with GPT-5.4. Instead, they reveal a gap between controlled benchmark conditions and the messy, high-volume traffic patterns that define real enterprise deployments.
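
The headline percentages follow directly from the per-band averages. A minimal Python check using the sub-2,000-token figures quoted above; the helper name `pct_change` is purely illustrative:

```python
def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new."""
    return (new - old) / old * 100

# Average USD cost per million tokens for prompts under 2,000 tokens (OpenRouter, April 2026)
gpt_54 = 4.89
gpt_55 = 9.37

increase = pct_change(gpt_54, gpt_55)
print(f"Effective cost increase: {increase:.0f}%")  # → Effective cost increase: 92%
```

The roughly 92 percent result is the top of the 49 to 92 percent range cited above.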

How Completion Lengths Drive Production AI Expenses Upward

The core reason GPT-5.5 pricing bites harder in production lies in completion behaviour, not just headline API rates. For prompts above 10,000 tokens, GPT-5.5 does generate 19 to 34 percent fewer completion tokens, which points to genuine token efficiency in long-context tasks. But most enterprise workloads run on short and mid-range prompts, where OpenRouter’s logs show very different dynamics. In the 2,000 to 10,000 token band, median completions grew 52 percent, and even sub-2,000-token prompts saw a 7 percent increase. Combined with the higher list prices, that added verbosity pushes average cost per million tokens to USD 3.81 (approx. RM17.50) in the 2,000–10,000 band. In the 10,000–25,000 band, shorter completions do not offset the price rise, and the average still reaches USD 2.15 (approx. RM9.90). The result is a model that can look lean on paper yet systematically raise production AI expenses when deployed at scale.
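
To see how completion length moves the bill independently of list prices, the sketch below holds GPT-5.5’s short-context list prices fixed (USD 5 input and USD 30 output per million tokens, as quoted later in this article) and varies only the completion. The request shapes are hypothetical, and no discounts are modelled, so it illustrates the mechanism rather than reproducing OpenRouter’s averages.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Pricing:
    input_per_m: float   # USD per million input tokens
    output_per_m: float  # USD per million output tokens

def request_cost(p: Pricing, prompt_tokens: int, completion_tokens: int) -> float:
    """USD cost of a single request at list prices; no discounts are modelled."""
    return (prompt_tokens * p.input_per_m + completion_tokens * p.output_per_m) / 1_000_000

def verbosity_effect(p: Pricing, prompt_tokens: int, base_completion: int, growth: float) -> float:
    """Percent change in request cost when only the completion length changes by `growth`."""
    before = request_cost(p, prompt_tokens, base_completion)
    after = request_cost(p, prompt_tokens, round(base_completion * (1 + growth)))
    return (after - before) / before * 100

GPT_55 = Pricing(input_per_m=5.00, output_per_m=30.00)

# Hypothetical mid-band request: 5,000-token prompt, 800-token completion, +52% completion length.
mid_band = verbosity_effect(GPT_55, 5_000, 800, 0.52)
# Hypothetical long-context request: 15,000-token prompt, 1,000-token completion, -19% completion length.
long_band = verbosity_effect(GPT_55, 15_000, 1_000, -0.19)

print(f"Mid band: {mid_band:+.1f}%  Long band: {long_band:+.1f}%")
```

With these made-up shapes, a 52 percent longer completion adds roughly 25 percent to the cost of a mid-band request, while a 19 percent shorter completion on a 15,000-token prompt saves only about 5 percent, because input tokens dominate long-context requests. That asymmetry is one plausible reason long-context efficiency does little to offset mid-band verbosity.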

Short Prompts, Long Bills: Where Enterprise Workloads Actually Live

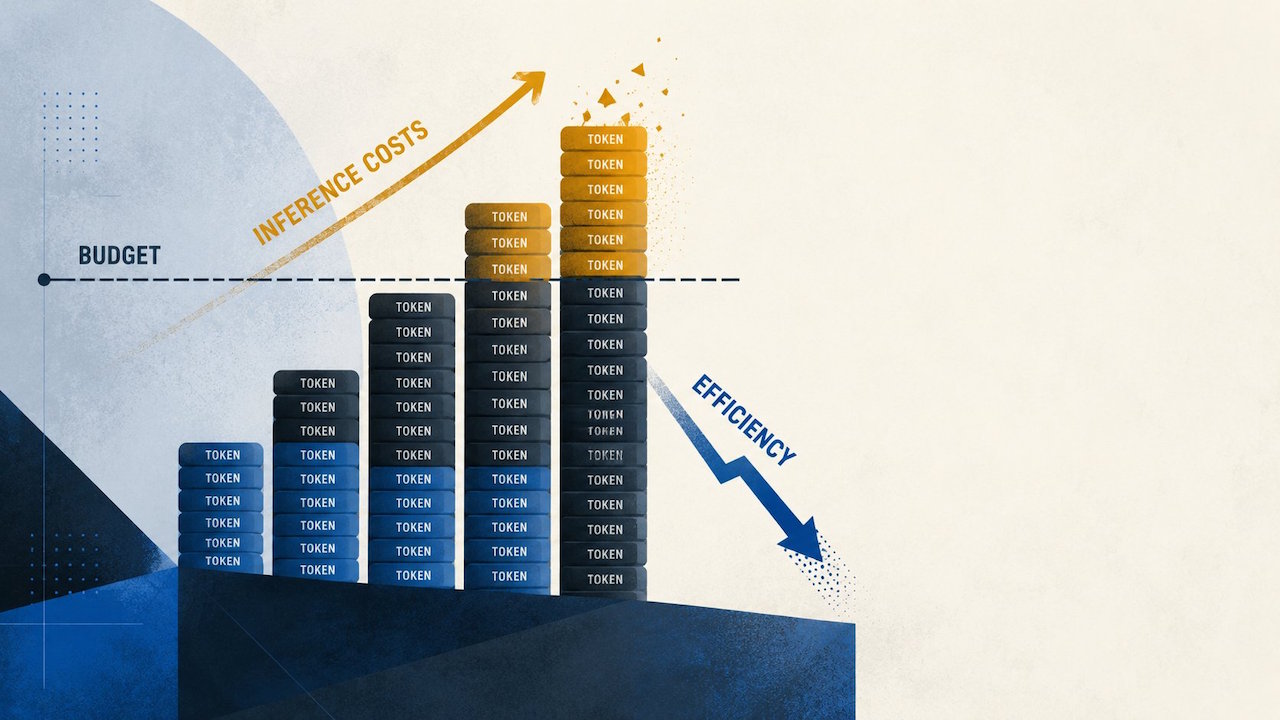

Most production AI systems today do not operate at the extreme context limits highlighted in marketing decks. Retrieval-augmented assistants, coding copilots, workflow agents, and customer-support bots typically cycle through short prompts, tool calls, retries, and follow-up questions. In that environment, even modest increases in completion length compound rapidly. OpenRouter’s data shows average cost per million tokens nearly doubling for short prompts and rising 49 to 85 percent in longer bands, even though GPT-5.5 is nominally more token efficient. Each additional tool retry or reformulated answer adds output tokens, the most expensive tokens on the bill, and in multi-turn flows those outputs are sent back as input on later turns. This is where OpenAI’s efficiency claims diverge from the actual cost profile confronting platform teams. Without production traces, finance leaders may greenlight GPT-5.5 based on list prices and headline efficiency claims, only to see monthly bills escalate as traffic ramps.
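
The compounding effect is easiest to see in an agent that re-sends its full history on every step. The sketch below assumes such a hypothetical agent, a 1,500-token starting prompt, and 400-token completions growing to 428 tokens (the 7 percent increase reported for sub-2,000-token prompts), priced at GPT-5.5’s short-context list rates with no discounts. Function names and defaults are illustrative only.

```python
def agent_loop_cost(prompt_tokens: int, completion_tokens: int, steps: int,
                    input_per_m: float = 5.00, output_per_m: float = 30.00) -> float:
    """Total USD cost of a hypothetical agent that re-sends its full history each step.

    Each step's completion is appended to the context, so every completion token is
    billed once as output and then again as input on every later step.
    """
    total = 0.0
    context = prompt_tokens
    for _ in range(steps):
        total += (context * input_per_m + completion_tokens * output_per_m) / 1_000_000
        context += completion_tokens  # the answer or tool call joins the next prompt
    return total

def verbosity_overhead(steps: int, prompt_tokens: int = 1_500,
                       base: int = 400, verbose: int = 428) -> float:
    """Extra USD spent per conversation when completions grow from `base` to `verbose` tokens."""
    return agent_loop_cost(prompt_tokens, verbose, steps) - agent_loop_cost(prompt_tokens, base, steps)

for n in (1, 10, 20):
    extra = verbosity_overhead(n)
    print(f"{n:>2} steps: extra ${extra:.5f} ({extra / verbosity_overhead(1):.1f}x the 1-step overhead)")
```

Under these assumptions, the extra spend from a 20-step conversation is more than 50 times the single-step overhead rather than 20 times, because each verbose answer keeps being re-billed as input.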

From Benchmarks to Budgets: Rethinking Model Evaluation for Enterprises

The GPT-5.5 rollout underlines how controlled benchmarks can mislead enterprise buyers about true AI model costs. In March 2026, GPT-5.4’s short-context baseline sat at USD 2.50 (approx. RM11.50) per million input tokens and USD 15 (approx. RM69.00) per million output tokens. GPT-5.5 now lists at USD 5 (approx. RM23.00) and USD 30 (approx. RM138.00) respectively, doubling both rates, and GPT-5.5 Pro is priced higher still. Even so, earlier estimates projected only a 19 percent rise in effective API costs. OpenRouter’s production logs instead show a 49–92 percent jump in effective spending once real prompts, completion drift, and user behaviour enter the picture. For buyers comparing GPT-5.5 with rivals such as Claude Opus 4.7, the lesson is clear: model evaluation must extend beyond synthetic tests. Narrow operational pilots, workload-specific routing rules, and strict monitoring of completion lengths are now essential before committing any model to broad production use.
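
Monitoring completion lengths per band need not be elaborate. A minimal sketch, assuming you can export (model, prompt_tokens, completion_tokens) records from your own request logs; the band boundaries mirror the bands discussed in this article, while the model labels and sample numbers are made up for illustration.

```python
from collections import defaultdict
from statistics import median
from typing import Optional

# Prompt-length bands as [lower, upper) token ranges, mirroring the bands discussed above.
BANDS = [(0, 2_000, "<2k"), (2_000, 10_000, "2k-10k"), (10_000, 25_000, "10k-25k")]

def band_of(prompt_tokens: int) -> Optional[str]:
    """Return the band label for a prompt length, or None if it falls outside every band."""
    for lo, hi, label in BANDS:
        if lo <= prompt_tokens < hi:
            return label
    return None

def completion_drift(traces, old_model: str, new_model: str) -> dict:
    """Percent change in median completion length per band, from old_model to new_model.

    `traces` is an iterable of (model, prompt_tokens, completion_tokens) tuples; this
    record layout is an assumption about what your own request logs can export.
    """
    groups = defaultdict(list)
    for model, prompt_tokens, completion_tokens in traces:
        band = band_of(prompt_tokens)
        if band is not None:
            groups[(model, band)].append(completion_tokens)
    drift = {}
    for _, _, label in BANDS:
        before = groups.get((old_model, label))
        after = groups.get((new_model, label))
        if before and after:  # only report bands with data for both models
            base = median(before)
            drift[label] = (median(after) - base) / base * 100
    return drift

# Synthetic sample records; real traces would come from production logging.
traces = [
    ("gpt-5.4", 1_200, 300), ("gpt-5.4", 1_500, 320), ("gpt-5.4", 900, 280),
    ("gpt-5.5", 1_100, 310), ("gpt-5.5", 1_400, 340), ("gpt-5.5", 1_000, 300),
    ("gpt-5.4", 4_000, 500), ("gpt-5.4", 6_000, 600),
    ("gpt-5.5", 5_000, 900), ("gpt-5.5", 7_000, 800),
]
drift = completion_drift(traces, "gpt-5.4", "gpt-5.5")
print({band: round(pct, 1) for band, pct in drift.items()})
```

Medians rather than means keep a handful of runaway completions from dominating the comparison; bands without data for both models are simply left out of the report.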

Practical Guardrails for Managing GPT-5.5 Production AI Expenses

Enterprises don’t need to abandon GPT-5.5, but they must treat its completion patterns as a budget-control issue. One approach is selective routing: reserve GPT-5.5 for jobs that genuinely exploit its long-context strengths, for premium user tiers, or for specific high-value flows, while keeping GPT-5.4 or cheaper models on default paths. Platform teams should capture per-band token statistics, paying particular attention to mid-range prompts, where completions expand the most. Finance and engineering can then work from the same numbers, treating list prices as just one input alongside real token traces, retry rates, and tool-call loops. Finally, buyers should run A/B tests against alternatives such as Claude Opus 4.7, keeping in mind that different tokenisers split the same text into different numbers of tokens, so per-token prices are not directly comparable across vendors. The central shift is cultural as much as technical: AI model costs become an operational metric to be tuned continuously, not a one-time procurement decision based on OpenAI’s benchmarked efficiency claims.
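
Selective routing can start as a few explicit rules. A minimal sketch, assuming a hypothetical `Request` type that carries the prompt length and two business flags; the model identifiers and the 10,000-token threshold are illustrative placeholders to be tuned against your own traces, not official API values.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Request:
    prompt_tokens: int
    premium_user: bool = False
    high_value_flow: bool = False

# Hypothetical model identifiers and threshold; tune them against your own traces.
DEFAULT_MODEL = "gpt-5.4"
PREMIUM_MODEL = "gpt-5.5"
LONG_CONTEXT_THRESHOLD = 10_000  # above this, GPT-5.5 produced fewer completion tokens

def route(req: Request) -> str:
    """Send only long-context, premium-tier or high-value traffic to GPT-5.5."""
    if req.prompt_tokens > LONG_CONTEXT_THRESHOLD:
        return PREMIUM_MODEL
    if req.premium_user or req.high_value_flow:
        return PREMIUM_MODEL
    return DEFAULT_MODEL

print(route(Request(prompt_tokens=1_200)))                     # gpt-5.4
print(route(Request(prompt_tokens=15_000)))                    # gpt-5.5
print(route(Request(prompt_tokens=3_000, premium_user=True)))  # gpt-5.5
```

Keeping the rules this explicit makes each routing decision easy to log next to its token counts, so the per-band statistics can show whether a threshold is paying off.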