GPT-5.5 Instant Becomes the New ChatGPT Default

OpenAI has promoted the GPT-5.5 Instant model to become the new ChatGPT default, replacing GPT-5.3 Instant for most users. This is more than a routine version bump: for the majority of people, the default model is their entire experience of ChatGPT. OpenAI says the upgrade is aimed at clearer, more accurate answers across everyday tasks, from STEM questions and image analysis to deciding when to pull in web search. Rather than piling on new features, GPT-5.5 Instant focuses on doing the basics better—reducing mistakes, structuring replies more helpfully, and tailoring responses to individual users. Paid customers can still access GPT-5.3 Instant for a limited transition period, but the new model is rolling out broadly, including via the chat-latest API endpoint. In practical terms, anyone opening ChatGPT now is testing GPT-5.5 Instant’s promise of higher reliability by default.

Hallucination Reduction and Real AI Accuracy Improvements

The headline claim for GPT-5.5 Instant is fewer hallucinations. OpenAI’s internal evaluations report 52.5% fewer hallucinated claims compared with GPT-5.3 Instant on high-stakes prompts in domains such as medicine, law, and finance. The company also cites a 37.3% drop in inaccurate statements on difficult, previously flagged conversations. Independent reviewers have seen progress, though not as dramatic across every scenario. Tests comparing GPT-5.5 with older Instant models found more careful reasoning, better error correction, and a stronger tendency to revisit earlier steps when something looks off. However, accuracy gains were uneven, and not every benchmark mirrored the improvements OpenAI reported. Still, for students checking math, professionals validating financial reasoning, or users asking health-adjacent questions, the direction is clear: the ChatGPT default model is more likely than its predecessors to stay grounded, acknowledge uncertainty, and deliver answers that hold up under scrutiny.
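
One caveat when reading these figures: 52.5% and 37.3% are relative reductions, so what they mean in absolute terms depends on a baseline rate that is not given here. The short sketch below illustrates the arithmetic with a purely hypothetical baseline of 40 hallucinated claims per 1,000; that number is an assumption chosen for illustration, not a figure from OpenAI or any reviewer.

```python
def apply_relative_reduction(baseline_count: float, reduction_pct: float) -> float:
    """Return the count that remains after a relative reduction of reduction_pct percent."""
    return baseline_count * (1 - reduction_pct / 100)


# Hypothetical baseline (an assumption, not a reported figure):
# 40 hallucinated claims per 1,000 claims made by the older model.
HYPOTHETICAL_BASELINE_PER_1000 = 40.0

# Relative reductions quoted in the article.
HIGH_STAKES_REDUCTION_PCT = 52.5
FLAGGED_CONVERSATIONS_REDUCTION_PCT = 37.3

remaining_high_stakes = apply_relative_reduction(
    HYPOTHETICAL_BASELINE_PER_1000, HIGH_STAKES_REDUCTION_PCT
)
remaining_flagged = apply_relative_reduction(
    HYPOTHETICAL_BASELINE_PER_1000, FLAGGED_CONVERSATIONS_REDUCTION_PCT
)

print(f"High-stakes prompts: about {remaining_high_stakes:.1f} per 1,000 remain")
print(f"Flagged conversations: about {remaining_flagged:.1f} per 1,000 remain")
```

Under that assumed baseline, roughly half of the errors would remain on high-stakes prompts, which is why a large relative improvement still leaves room for the uneven results independent testers describe.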

Conciseness, Clarity, and Everyday Usefulness

OpenAI positions GPT-5.5 Instant as both more concise and more conversational, claiming responses that are around one-third shorter on average. Independent testing, however, paints a more nuanced picture. In side-by-side prompts about REST vs GraphQL, salary negotiation, and first-time home buying, GPT-5.2 often produced shorter, more scannable answers, while GPT-5.5 Instant leaned into fuller prose, extra context, and more sub-bullets. For some users, that added explanation makes responses feel more like a human conversation and less like a rigid outline. For others, especially those skimming for quick decisions, GPT-5.2’s tighter formatting can still feel clearer. What does change in GPT-5.5 Instant is how it manages user time: it is better at staying on-topic, explaining reasoning, and avoiding unnecessary digressions, even if it occasionally uses more words. The trade-off is conversational richness over strict brevity—a shift that may benefit everyday chats more than structured technical summaries.

Personalization, Memory Sources, and Context Handling

Alongside the GPT-5.5 Instant model, OpenAI is rolling out memory sources across all ChatGPT models, deepening personalization. Users can now see which saved memories, past chats, or uploaded files were used to shape a response and can correct or delete outdated information. GPT-5.5 Instant is tuned to draw more effectively on this context, including connected Gmail accounts when enabled, reducing the need to repeat preferences or project details. Reviewers found that while personalization is indeed stronger—showing better continuity across sessions and follow-up tasks—the experience is still evolving. GPT-5.5 Instant is more likely to remember ongoing work and adapt tone or examples to a user’s prior questions, but it does not always balance that context with the need for fresh, unbiased reasoning. Crucially, OpenAI says memory sources remain private when chats are shared, giving users more visibility into personalization without exposing personal context to others.

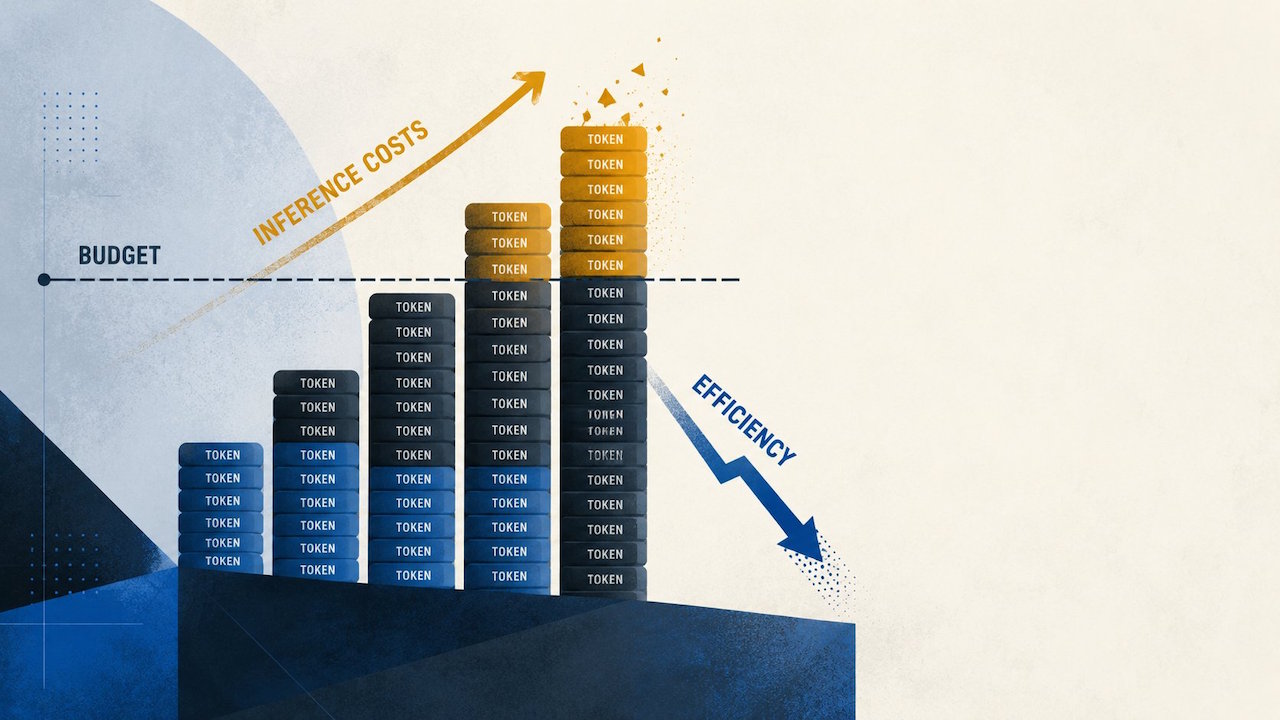

The Hidden Cost: GPT-5.5’s Performance-versus-Cost Trade-off

Improved reliability from the GPT-5.5 Instant model comes with a notable cost shift, especially for heavy API users. An analysis of real-world usage logs by OpenRouter found that after teams switched from GPT-5.4 to GPT-5.5, effective costs rose between 49% and 92%. This jump was not just about list prices; it reflected how the model behaves under production workloads. For very long prompts above 10,000 tokens, GPT-5.5 produced 19–34% fewer completion tokens, making some long-context tasks cheaper. But in the 2,000–10,000 token band, where many practical workloads live, completions were about 52% longer, inflating bills. Enterprises weighing GPT-5.5 against rivals like Claude Opus 4.7 are being warned to rely on production traces, not only benchmarks, before committing spend. For everyday ChatGPT users, the trade-off is simpler: better accuracy and fewer hallucinations. For teams paying per token, the higher infrastructure costs may mean being more selective about when and how they call the new default model.
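
To see why completion length matters independently of list price, the following minimal sketch holds per-token prices constant and changes only the number of completion tokens by the percentages quoted above. The prices, the `Pricing` class, and the example token counts are all hypothetical values chosen for illustration; they are not OpenAI's or OpenRouter's actual rates, and the sketch does not attempt to reproduce the 49–92% figure, which also reflects list-price differences.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical per-million-token prices (assumed, not real list prices)."""
    input_per_million: float
    output_per_million: float


def request_cost(prompt_tokens: float, completion_tokens: float, pricing: Pricing) -> float:
    """Cost of one request: input and output tokens are billed at separate rates."""
    return (
        prompt_tokens * pricing.input_per_million
        + completion_tokens * pricing.output_per_million
    ) / 1_000_000


def cost_change_pct(
    prompt_tokens: float,
    completion_tokens: float,
    completion_change_pct: float,
    pricing: Pricing,
) -> float:
    """Percent change in request cost when only completion length changes."""
    before = request_cost(prompt_tokens, completion_tokens, pricing)
    scaled_completion = completion_tokens * (1 + completion_change_pct / 100)
    after = request_cost(prompt_tokens, scaled_completion, pricing)
    return (after - before) / before * 100


# Assumed prices: output tokens cost 10x input tokens. Purely illustrative.
HYPOTHETICAL_PRICING = Pricing(input_per_million=1.0, output_per_million=10.0)

# Mid-size prompt (inside the 2,000-10,000 token band): completions ~52% longer.
mid_band = cost_change_pct(5_000, 1_000, 52.0, HYPOTHETICAL_PRICING)

# Long prompt (above 10,000 tokens): completions 19% shorter, the low end of 19-34%.
long_band = cost_change_pct(20_000, 1_000, -19.0, HYPOTHETICAL_PRICING)

print(f"Mid-band request cost change: {mid_band:+.1f}%")
print(f"Long-prompt request cost change: {long_band:+.1f}%")
```

With these assumed numbers, the longer completions in the mid band raise the per-request cost by roughly a third before any price difference is applied, while the savings on long prompts are much smaller because input tokens dominate the bill. Actual results depend on a team's own prompt mix, which is why the advice to check production traces applies.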