Open-Source AI Tools Converge on Production-Grade Structure

A new wave of open-source AI tools is changing how teams design, test, and ship AI-powered software. Anthropic’s Petri alignment-testing framework, GitHub’s Spec-Kit for spec-driven coding, and OpenAI’s Symphony orchestration spec target different layers of the stack, yet they converge on the same idea: AI needs structured, production-grade workflows. Instead of one-off prompts and ad hoc guardrails, these projects formalize how models are evaluated for safety, how agents plan code changes, and how autonomous systems carry work from ticket to merge. For developers, this signals a shift away from experimental playgrounds toward systems that can be audited, governed, and integrated into existing engineering pipelines. Together, they move open-source AI tools from novelty toward critical infrastructure, narrowing the gap between powerful models and the operational discipline needed to run them in real products.

Petri 3.0 and Meridian Labs: Alignment Testing Grows Up

Anthropic has handed stewardship of Petri, its open-source AI alignment testing tool, to the nonprofit Meridian Labs while shipping Petri 3.0, the framework’s largest overhaul since launch. Petri has been central to Anthropic’s internal alignment testing and is used by external evaluation pipelines to probe frontier models for dangerous or deceptive behavior. Version 3.0 restructures Petri so that the auditor model and the target model are cleanly separated, letting researchers swap in a new auditor, a new target, or both without refactoring interleaved code. A new extension called Dish, currently in research preview, tackles a key challenge in alignment testing: models often realize they are being evaluated and behave differently than they would in real-world deployments. Dish embeds audits inside real agent scaffolds such as coding assistants, which brings evaluations closer to the production conditions that matter for safety-critical AI deployments.
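To make the auditor/target separation concrete, here is a minimal Python sketch of the general pattern. It is not Petri’s API: the `Auditor` and `Target` interfaces, the stand-in classes, and `run_audit` are all hypothetical names chosen for illustration. The point is only that the audit loop depends on two narrow interfaces, so either side can be replaced without touching the other.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Protocol

Transcript = list[tuple[str, str]]  # (probe, reply) pairs


class Target(Protocol):
    """Hypothetical interface: anything that can answer a prompt."""

    def respond(self, prompt: str) -> str: ...


class Auditor(Protocol):
    """Hypothetical interface: chooses the next probe and judges the result."""

    def next_probe(self, transcript: Transcript) -> str | None: ...

    def flag(self, transcript: Transcript) -> bool: ...


class EchoTarget:
    """Stand-in target that repeats the prompt; a real one would call a model."""

    def respond(self, prompt: str) -> str:
        return f"echo: {prompt}"


@dataclass
class ScriptedAuditor:
    """Stand-in auditor: walks a fixed probe list and flags a keyword in replies."""

    probes: list[str]
    keyword: str = "secret"

    def next_probe(self, transcript: Transcript) -> str | None:
        # One probe per completed turn; None means the audit is over.
        if len(transcript) < len(self.probes):
            return self.probes[len(transcript)]
        return None

    def flag(self, transcript: Transcript) -> bool:
        return any(self.keyword in reply for _, reply in transcript)


def run_audit(auditor: Auditor, target: Target) -> tuple[Transcript, bool]:
    """Drive the conversation; knows nothing about either implementation."""
    transcript: Transcript = []
    while (probe := auditor.next_probe(transcript)) is not None:
        transcript.append((probe, target.respond(probe)))
    return transcript, auditor.flag(transcript)
```

Swapping the target is then a one-argument change, for example `run_audit(ScriptedAuditor(["hello"]), SomeOtherTarget())`, where `SomeOtherTarget` is any class with a matching `respond` method.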

GitHub’s Spec-Kit: Spec-Driven Coding as Default AI Workflow

GitHub’s Spec-Kit brings spec-driven workflows to AI-assisted development by enforcing structure before any code is generated. Instead of throwing a single, loosely defined prompt at a coding agent, teams move through a staged flow: Specify, Plan, Tasks, and Implement. The Specify CLI and templates capture the product scenario, while the planning and task-breakdown steps produce explicit technical direction and assignable work units. Spec-Kit’s slash commands cover constitution, spec writing, planning, task decomposition, issue conversion, and implementation, with optional clarification, analysis, and checklist steps that act as review gates. These checkpoints let organizations keep familiar engineering governance while using AI for implementation. Task lists can be turned into trackable issues, creating an auditable bridge from intent to code. Early traction, in the form of tens of thousands of stars and thousands of forks, suggests developers are willing to trade some speed for predictable, inspectable AI workflows.
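The staged flow with review gates can be modeled in a few lines of Python. This is an illustrative sketch only, not Spec-Kit’s CLI or its slash commands: the stage names come from the article, while the `Feature` class and its `submit` and `approve` methods are assumptions made up for the example. It shows the core rule that a later stage cannot start until every earlier stage has been reviewed.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    SPECIFY = 1
    PLAN = 2
    TASKS = 3
    IMPLEMENT = 4


@dataclass
class Feature:
    """Hypothetical in-memory record of one feature moving through the stages."""

    name: str
    artifacts: dict = field(default_factory=dict)  # Stage -> artifact text
    approved: set = field(default_factory=set)     # stages that passed review

    def submit(self, stage: Stage, text: str) -> None:
        # Gate: every earlier stage must already be approved.
        for earlier in Stage:
            if earlier.value < stage.value and earlier not in self.approved:
                raise RuntimeError(
                    f"{stage.name} is blocked until {earlier.name} is approved"
                )
        self.artifacts[stage] = text
        # A new or revised artifact needs a fresh review.
        self.approved.discard(stage)

    def approve(self, stage: Stage) -> None:
        if stage not in self.artifacts:
            raise RuntimeError(f"nothing submitted for {stage.name}")
        self.approved.add(stage)
```

In use, `Feature("login").submit(Stage.PLAN, "...")` raises until the Specify artifact has been submitted and approved, which is the kind of checkpoint the review gates provide.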

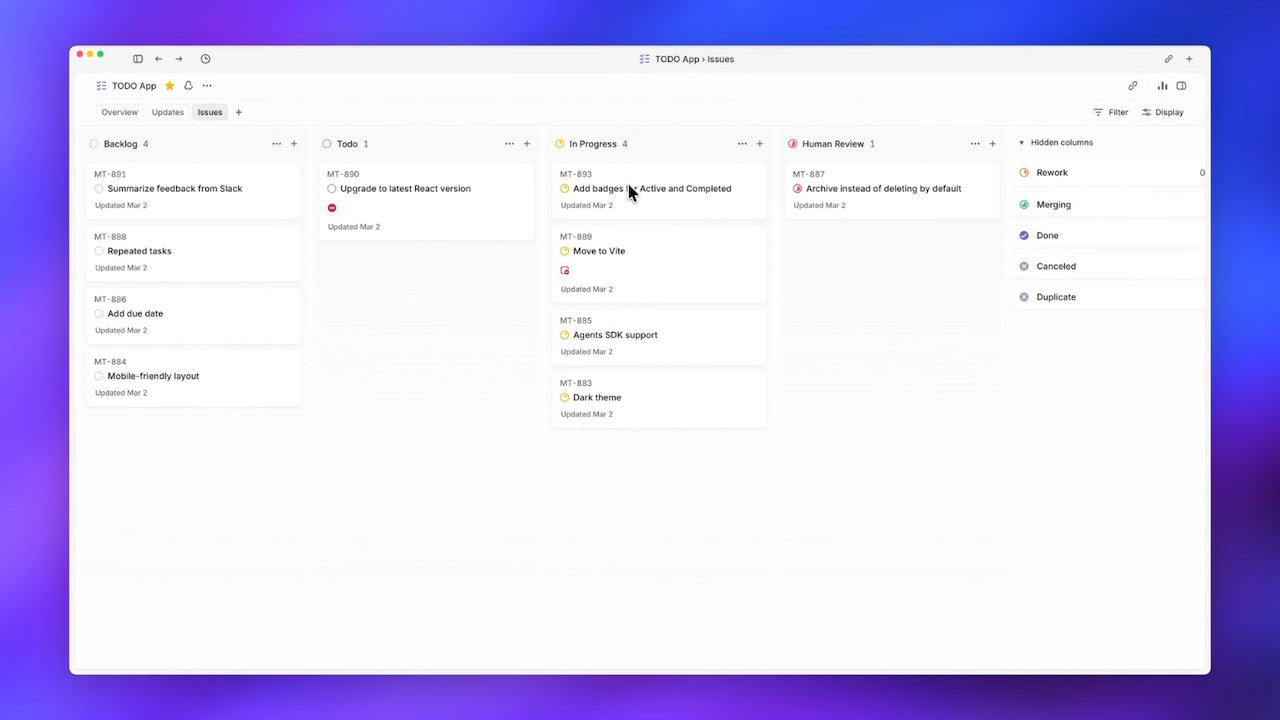

OpenAI’s Symphony: AI Agent Orchestration from Ticket to Merge

OpenAI’s Symphony spec focuses on AI agent orchestration, letting Codex-based agents autonomously pull work items and drive them to completion. The open-source reference implementation, written in Elixir, treats a ticketing system such as Linear as a state machine. Each open ticket gets its own Codex agent and dedicated workspace, and the agent keeps running until the task is finished and the related pull request is merged. If an agent crashes mid-task, Symphony respawns it. This removes humans from the dispatch loop and addresses a key bottleneck: limited human attention for supervising many parallel agents. OpenAI reports that internal teams using Symphony saw a sixfold increase in merged pull requests over three weeks, a sign of how much automation at the orchestration layer can raise throughput. Outside forks already adapt Symphony to other stacks, signaling early interest in reusable patterns for AI agent orchestration.
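The ticket-as-state-machine idea with crash recovery can be sketched as follows. This is a simplified Python illustration, not Symphony’s Elixir reference or its spec: `Ticket`, `State`, `orchestrate`, and the `max_attempts` cap are all assumptions introduced here, and tickets are processed one after another rather than in parallel to keep the example short.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class State(Enum):
    OPEN = "open"
    IN_PROGRESS = "in_progress"
    MERGED = "merged"


@dataclass
class Ticket:
    id: str
    state: State = State.OPEN
    attempts: int = 0


def orchestrate(
    tickets: list[Ticket],
    run_agent: Callable[[Ticket], None],
    max_attempts: int = 3,  # assumed safety cap, not something the article states
) -> list[Ticket]:
    """Run one agent per ticket and respawn it if it crashes."""
    for ticket in tickets:
        while ticket.state is not State.MERGED and ticket.attempts < max_attempts:
            ticket.state = State.IN_PROGRESS
            ticket.attempts += 1
            try:
                run_agent(ticket)  # stand-in for an agent working to a merged PR
            except Exception:
                ticket.state = State.OPEN  # crash: put the ticket back and retry
                continue
            ticket.state = State.MERGED
    return tickets
```

A real orchestrator would run agents concurrently and read ticket state from the tracker itself; the loop above only shows that no human has to notice a crash and redispatch the work.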

What Developers Should Do Now: Align, Specify, Orchestrate

Taken together, Petri, Spec-Kit, and Symphony point toward an emerging pattern for production AI: align models rigorously, structure the work clearly, then orchestrate agents autonomously within those constraints. Petri 3.0 and its Dish and Bloom extensions focus on realistic AI alignment testing before deployment. Spec-Kit pushes teams to adopt spec-driven coding, baking in plans, tasks, and review checkpoints so that AI-generated code remains traceable and governable. Symphony demonstrates how AI agent orchestration can remove humans from low-value coordination work while keeping execution tied to the ticket system that teams already trust. For developers, the practical takeaway is to start piloting these tools where they naturally fit: Petri for safety evaluations, Spec-Kit for new feature workflows, and Symphony-like patterns for repetitive ticket handling. The trajectory is clear: the future of open-source AI tools is not just smarter models, but smarter, safer, and more structured development pipelines.