From Developer SDK to AI-Powered VR Builder

Meta’s Immersive Web SDK (IWSDK) began as a developer-focused framework for streamlining WebXR development. It abstracted complex tasks such as physics, hand tracking, movement, grab interactions, and spatial UI so teams could focus on creative design rather than engine-level plumbing. The latest update turns IWSDK into an AI-powered VR builder by introducing what Meta calls an “agentic workflow.” Instead of merely suggesting snippets, integrated AI coding assistants such as Claude Code, Cursor, GitHub Copilot, and Codex now take a more active role in constructing entire experiences. This shifts the toolkit from a traditional code library toward something closer to a no-code VR development environment, where prompts and assets guide the build process. By releasing IWSDK as open source under a permissive license, Meta is signaling that it wants the framework to become a central, community-driven foundation for the immersive web.

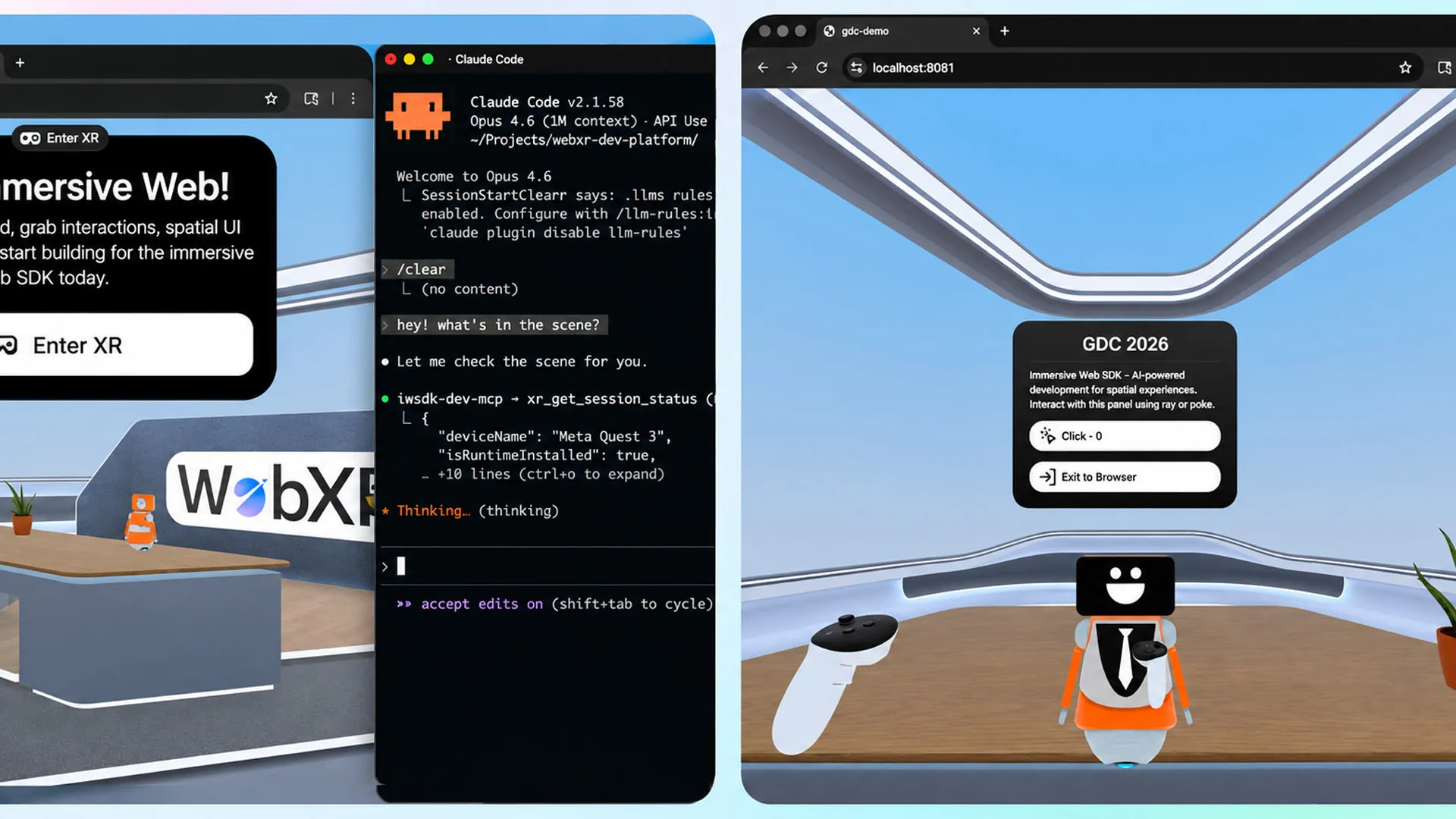

What Agentic AI Workflows Mean for WebXR Experiences

Agentic workflows change how VR projects are produced on the web. Rather than stopping at code generation, the AI iteratively writes, tests, and validates functionality, creating a closed loop that aims for reliability as well as speed. Meta highlights that this loop is crucial for building fully interactive WebXR experiences, not just scaffolding or boilerplate. The company demonstrated the approach by recreating its earlier Project Flowerbed gardening demo, which originally comprised tens of thousands of lines of custom code. Using IWSDK’s AI workflow and pre-existing art assets, the team rebuilt the entire VR application in about 15 hours. The example suggests how far an AI-powered VR builder can compress timelines: complex scenes, interactions, and mechanics can be orchestrated through guided prompts instead of painstaking manual coding, and the result still runs instantly in the browser via WebXR.
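The write, test, and validate cycle described above can be illustrated with a small TypeScript sketch. It is not IWSDK’s API or any assistant’s actual implementation: the names `agenticLoop`, `Check`, `Generator`, and `LoopResult` are hypothetical, the “assistant” is a toy function, and the checks are simple pattern matches standing in for real tests. The point is only the shape of the loop: generate a candidate, run the checks, feed the failures back, and stop when everything passes or the attempt budget runs out.

```typescript
// Hypothetical types for illustration; none of these names come from IWSDK.
interface Check {
  name: string;
  passes: (code: string) => boolean;
}

interface LoopResult {
  code: string;       // last candidate produced
  attempts: number;   // how many candidates were generated
  failures: string[]; // names of checks still failing when the loop stopped
}

// Stand-in for an AI assistant: receives the prompt plus the names of the
// checks that failed on the previous attempt, and returns a new candidate.
type Generator = (prompt: string, failures: string[]) => string;

function agenticLoop(
  prompt: string,
  generate: Generator,
  checks: Check[],
  maxAttempts = 5,
): LoopResult {
  if (maxAttempts < 1) {
    throw new RangeError("maxAttempts must be at least 1");
  }
  let code = "";
  let failures: string[] = [];
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    // Write: ask for a candidate, passing along the previous failures.
    code = generate(prompt, failures);
    // Test and validate: collect the names of every check that fails.
    failures = checks.filter((c) => !c.passes(code)).map((c) => c.name);
    if (failures.length === 0) {
      return { code, attempts: attempt, failures };
    }
  }
  // Budget exhausted: return the last candidate with its remaining failures.
  return { code, attempts: maxAttempts, failures };
}

// Toy generator: only adds a grab handler once failure feedback arrives.
// The generated text is an arbitrary string, not real scene code.
const toyGenerate: Generator = (prompt, failures) =>
  failures.length > 0
    ? `// ${prompt}\nscene.add(plant);\nplant.onGrab(() => {});`
    : `// ${prompt}\nscene.add(plant);`;

const checks: Check[] = [
  { name: "adds plant to scene", passes: (code) => /scene\.add\(plant\)/.test(code) },
  { name: "has grab handler", passes: (code) => /onGrab/.test(code) },
];

const result = agenticLoop("place a grabbable plant", toyGenerate, checks);
console.log(result.attempts, result.failures);
```

In this toy run the first candidate fails the grab-handler check, the failure is fed back, and the second candidate passes. A real workflow would replace the pattern checks with actual builds, tests, or in-browser validation, and would likely cap attempts in the same way to avoid looping indefinitely.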

Democratizing VR Development for Non-Technical Creators

For non-technical creators and small studios, the most meaningful shift is the reduction in coding expertise required to ship immersive content. IWSDK’s AI integration effectively turns natural-language instructions and design intentions into working VR scenes, interactions, and UI systems. This aligns with broader no-code VR development trends, but with an important twist: the agentic AI handles iterative debugging and validation, tasks that usually demand seasoned developers. As a result, storytellers, educators, and independent artists can experiment with WebXR experiences without building a dedicated engineering team. Small studios gain a force multiplier, using AI agents to prototype mechanics or rebuild existing concepts faster than traditional pipelines allow. By lowering the barrier to entry while keeping the tooling web-based and open source, Meta is creating an on-ramp where experimentation costs little in time or technical overhead, inviting a wider range of voices into VR.

The Immersive Web as Meta’s Strategic Platform

Meta’s push behind IWSDK fits a larger strategy: make the immersive web the most accessible gateway into VR. Web-based deployments avoid app store friction and long compile cycles; creators can test directly in a browser and share experiences via a simple URL across desktop and headsets. Meta notes that over one million monthly users already access WebXR content on Quest, indicating a sizable audience for browser-first VR. By enhancing productivity with AI and supporting multiple popular coding assistants, Meta is encouraging developers to treat the web as their primary distribution channel. This ecosystem play benefits Meta’s hardware, but it also strengthens WebXR as a neutral platform where content is not locked into a single app marketplace. If the AI-powered VR builder delivers on its promise, the immersive web could evolve into a shared commons of interactive experiences authored by both professionals and newcomers.