From Grid Limits to Orbit: Why AI Infrastructure Is Leaving Earth

The explosion of AI workloads is colliding with hard limits on Earth’s power grids and land. Hyperscale data centers are straining local utilities, sparking opposition over land use, water consumption, and energy demand. In response, Google and SpaceX are exploring orbital data centers as a radical extension of existing AI infrastructure strategies. Google’s Project Suncatcher envisions solar-powered satellites equipped with Tensor Processing Units, effectively lifting part of its AI cloud into orbit. SpaceX, meanwhile, sees space-based computing as a future growth pillar alongside Starlink. Orbital data centers could tap near-continuous sunlight, avoid terrestrial planning battles, and reduce dependence on fragile grid infrastructure. The move signals a paradigm shift: instead of merely optimizing data halls on Earth, leading firms are asking whether compute itself should migrate off-planet to unlock new power and cooling envelopes. It’s an early-stage bet, but one that reflects mounting pressure on traditional AI infrastructure.

Inside Project Suncatcher and the Google–SpaceX Cloud Partnership

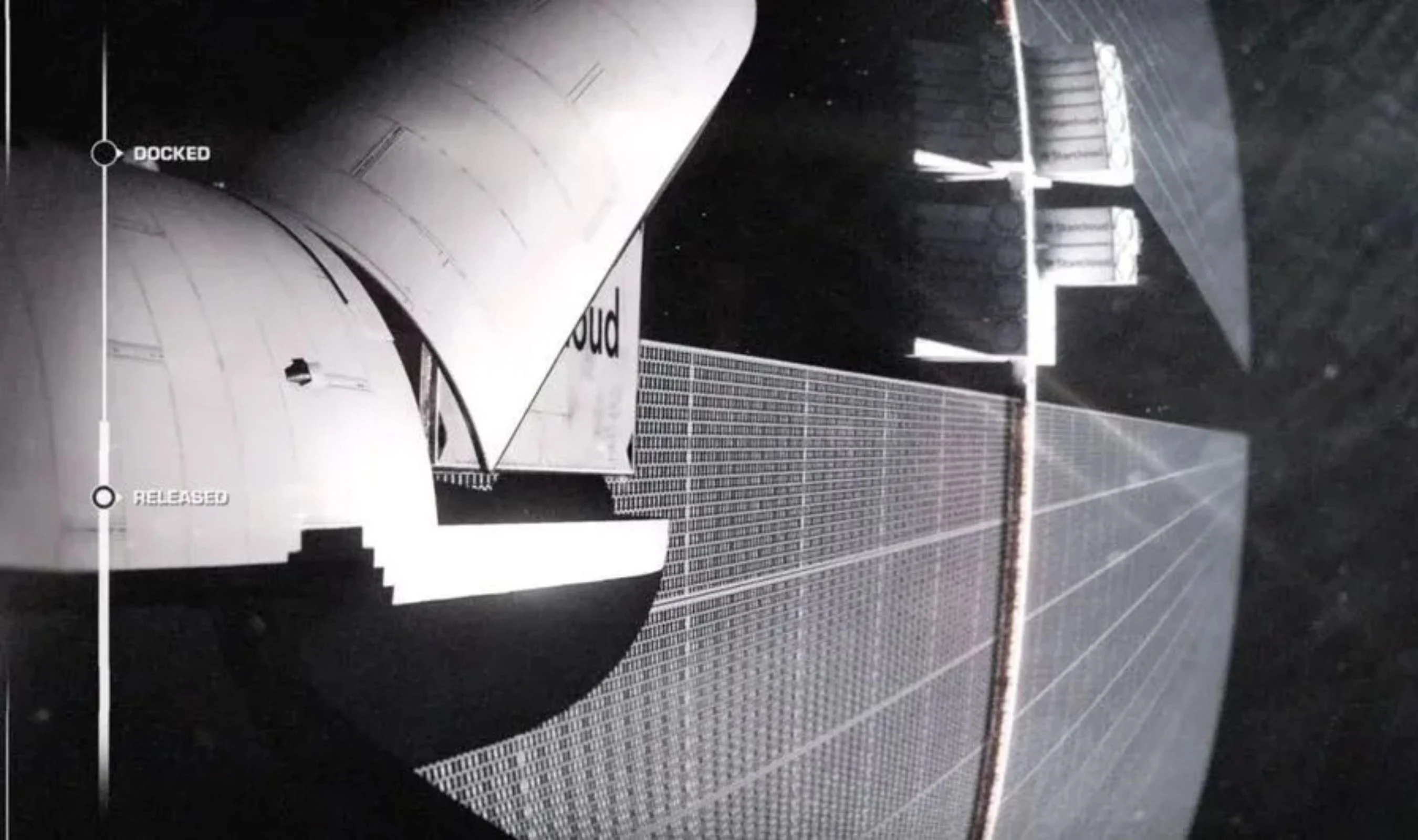

Google has begun turning the orbital data center concept into a structured experiment through Project Suncatcher. Announced as a moonshot initiative, the project plans two prototype satellites, built and operated by Planet and targeted for launch by early 2027. These satellites will host “tiny racks” of Google TPUs, testing whether core AI hardware can operate reliably in low Earth orbit under real radiation, thermal, and networking conditions. Google is in advanced talks with SpaceX to provide the launch backbone for these satellites, building on a relationship that includes Google’s 6.1% stake from an earlier USD 900 million (approx. RM4.14 billion) investment. The emerging Google cloud partnership with SpaceX is strategically significant: it could give SpaceX a marquee customer for its planned IPO while giving Google preferential access to reusable launch capacity. Both companies are also talking with other launch providers, underscoring that the orbital AI cloud is becoming a contested infrastructure frontier rather than a single-vendor play.

The Physics and Engineering Challenges of Space-Based Computing

Orbital data centers promise abundant solar power and physical security, but they face formidable engineering hurdles. Launch costs remain the first constraint: space-based computing only becomes attractive if reusable rockets drive prices low enough to offset satellite construction and replacement cycles. Even if launch becomes cheap, thermal management is a major challenge. Space is cold, but a vacuum has no air to carry heat away by convection, so designers must rely on radiators and finely tuned thermal systems to shed heat from dense AI chips as infrared radiation. Every watt of compute becomes a watt of heat that has to be radiated away. Radiation poses another risk. While Google reports that its Trillium-generation TPUs survived simulated low Earth orbit radiation without damage, long-term commercial operation could still expose systems to bit flips and gradual hardware degradation. Finally, networking and latency models must be rethought. Orbital AI infrastructure will need to integrate with terrestrial fiber and cloud regions, changing how workloads are scheduled between Earth-based and space-based computing clusters.
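To make the thermal constraint concrete, here is a rough back-of-envelope sketch using the Stefan-Boltzmann law, which gives the power an ideal radiator can emit per unit area. Every input is an assumption chosen for illustration, not a Project Suncatcher figure: a hypothetical 1 kW heat load, a radiator surface at 300 K, an emissivity of 0.9, and a sink temperature of 0 K (which ignores sunlight and Earth's infrared heating the panel, so real radiators would need to be larger). The function name is also hypothetical.

```python
# Stefan-Boltzmann constant in W / (m^2 K^4)
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_w, radiator_temp_k, emissivity=0.9,
                     sink_temp_k=0.0, sides=1):
    """Radiator area needed to reject heat_w watts purely by radiation.

    Assumes a uniform surface temperature and a simple grey-body model.
    sides=2 models a flat panel that radiates from both faces.
    """
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than its surroundings")
    # Net radiated power per square metre of emitting surface
    flux_w_per_m2 = emissivity * SIGMA * (radiator_temp_k**4 - sink_temp_k**4)
    return heat_w / (flux_w_per_m2 * sides)


if __name__ == "__main__":
    # Hypothetical 1 kW load; compare a cooler and a hotter radiator surface
    for temp_k in (300.0, 350.0):
        area = radiator_area_m2(1_000.0, temp_k)
        print(f"{temp_k:.0f} K radiator: {area:.2f} m^2 per kW")
```

Because emitted power scales with the fourth power of temperature, running the radiator hotter shrinks it quickly, but chips generally need to stay cool, which is why the thermal design is a trade-off rather than a single number.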

Compute as a Strategic Weapon: Anthropic, xAI, and the New Arms Race

The push into orbital data centers is unfolding against a broader scramble for terrestrial AI infrastructure. Anthropic’s recent agreement to use computing resources from xAI’s Colossus 1 data center in Memphis, now under SpaceX’s control following SpaceX’s acquisition of xAI, highlights how fiercely traditional capacity is already being contested. The deal points to a world where AI firms must secure long-term access to compute, whether through exclusive data center contracts, custom hardware, or unconventional options like space-based clusters. SpaceX is pitching orbital data centers as a future low-cost option for AI computing, even though terrestrial facilities remain cheaper today once satellite and launch costs are included. The emerging pattern is clear: compute itself is becoming a strategic weapon. Companies that lock in power, chips, and launch capacity can run larger, more frequent AI experiments, potentially widening the gap with rivals still constrained by grid connections and local planning bottlenecks.

A New Architecture for Global AI: Latency, Edge, and User Devices

If orbital data centers move from experiment to deployment, they could reshape the architecture of global AI infrastructure. Space-based computing nodes, linked with terrestrial cloud regions and satellite networks like Starlink, would form a multi-layered fabric spanning ground and orbit. Some computation could shift to orbital edge nodes, which could reduce latency for certain globally distributed AI services and alter how data flows are routed. This aligns with Google’s broader strategy, which includes new AI-native devices such as the internally referenced “Googlebook” laptop that integrates tightly with Gemini. In such a model, user hardware becomes a thin extension of a vast, distributed intelligence system across Earth and space. Space-based computing would handle power-hungry training and inference bursts, while terrestrial and device-level layers focus on responsiveness and specialization. The result could be a more resilient, scalable AI infrastructure, but one that concentrates power and capability in the hands of firms able to reach orbit.
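Whether an orbital node actually lowers latency depends on geometry. As a rough, physics-only sketch, the snippet below compares the minimum propagation delay of a ground-to-orbit hop with a terrestrial fiber run. The numbers are assumptions for illustration and do not come from Google or SpaceX: a satellite directly overhead at 550 km, a 1,000 km fiber path, and a fiber refractive index of 1.47. Queuing, routing, and processing delays are ignored, and the function name is hypothetical.

```python
# Speed of light in vacuum, m/s (exact by SI definition)
C_VACUUM_M_PER_S = 299_792_458


def one_way_delay_ms(path_km, refractive_index=1.0):
    """Propagation delay in milliseconds over a straight path.

    refractive_index=1.0 models a radio or laser link through vacuum;
    a value around 1.47 (assumed) models light in optical fiber.
    """
    if path_km < 0 or refractive_index < 1.0:
        raise ValueError("path must be non-negative and index >= 1.0")
    seconds = path_km * 1_000 * refractive_index / C_VACUUM_M_PER_S
    return seconds * 1_000


if __name__ == "__main__":
    ALTITUDE_KM = 550.0    # assumed: satellite directly overhead (best case)
    FIBER_PATH_KM = 1_000.0  # assumed terrestrial route length
    FIBER_INDEX = 1.47     # assumed refractive index of fiber

    orbit_rtt = 2 * one_way_delay_ms(ALTITUDE_KM)
    fiber_rtt = 2 * one_way_delay_ms(FIBER_PATH_KM, FIBER_INDEX)
    print(f"ground <-> orbit round trip: {orbit_rtt:.2f} ms")
    print(f"1,000 km fiber round trip:   {fiber_rtt:.2f} ms")
```

Under these assumptions the overhead hop is only a few milliseconds, so an orbital node can compete with a distant terrestrial region, while a nearby ground data center would still have the shorter path.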