Stanford’s Warning Shot: The Hidden ‘Swarm Tax’

Multi-agent AI has become the buzzword of the year, but new work from Stanford suggests many teams are overpaying for complexity. Researchers compared single-agent systems and multi-agent AI on complex multi-hop reasoning tasks, carefully equalising the “thinking token” budget for both approaches. When the compute playing field was level, single-agent systems often matched or even outperformed elaborate swarm architectures. Multi-agent setups pulled ahead only when a single agent’s context grew too long or became corrupted, creating a bottleneck that parallel agents could sidestep. The catch is that swarms usually consume more tokens, because they run multiple agents, produce longer reasoning traces and interact repeatedly. In other words, many reported gains look less like architectural superiority and more like extra compute. For enterprise AI agents, the message is blunt: if a single agent with a healthy reasoning budget can do the job, a swarm may just be an expensive luxury.
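
To make the “swarm tax” concrete, here is a minimal Python sketch of the token bookkeeping behind an equal-budget comparison. The cost model (reasoning tokens plus a fixed amount of context re-sent at every handoff), the helper names and the numbers in the usage note are illustrative assumptions, not the Stanford researchers’ method or data.

```python
from dataclasses import dataclass


@dataclass
class RunCost:
    """Token accounting for one run of an agent system (illustrative cost model)."""
    reasoning_tokens: int     # tokens spent "thinking"
    coordination_tokens: int  # tokens spent re-sending context between agents

    @property
    def total(self) -> int:
        return self.reasoning_tokens + self.coordination_tokens


def single_agent_cost(reasoning_tokens: int) -> RunCost:
    # One agent, one context: no handoffs to pay for.
    return RunCost(reasoning_tokens, 0)


def swarm_cost(n_agents: int, reasoning_per_agent: int,
               handoffs: int, context_per_handoff: int) -> RunCost:
    # Every agent reasons on its own, and every handoff re-sends shared context.
    return RunCost(n_agents * reasoning_per_agent, handoffs * context_per_handoff)


def swarm_tax(single: RunCost, swarm: RunCost) -> float:
    # Extra tokens the swarm spends relative to the single agent (0.5 = 50% more).
    return swarm.total / single.total - 1.0


def equal_budget_split(total_budget: int, n_agents: int,
                       handoffs: int, context_per_handoff: int) -> int:
    # Reasoning tokens each swarm agent gets if the whole swarm must fit the
    # same total budget as the single agent, after coordination is paid for.
    if n_agents <= 0:
        raise ValueError("need at least one agent")
    remaining = total_budget - handoffs * context_per_handoff
    if remaining <= 0:
        raise ValueError("coordination alone exhausts the budget")
    return remaining // n_agents
```

With a hypothetical 8,000-token single agent against four agents at 4,000 tokens each plus six 1,500-token handoffs, `swarm_tax` returns 2.125: the swarm spends a little over three times as many tokens. Forcing that same swarm into the 8,000-token budget with `equal_budget_split` raises an error, because coordination alone would use it up.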

Why Swarms Took Off—and the Complexity They Hide

Multi-agent architectures rose on promises of coordination, specialisation and autonomy. Frameworks built around planner agents, role-playing setups and debate-style swarms split big problems into partial contexts handled by different experts, passing answers along like a relay. That structure appeals to teams working on autonomous agent design because it mirrors human organisations: planners, specialists and reviewers. But every extra agent adds orchestration overhead, weakens observability and introduces new failure modes. You must track who knows what, how context is passed, and what happens when one agent goes off the rails. Stanford’s results suggest that many gains attributed to clever multi-agent design actually stem from throwing more computation at the problem. That matters for leaders who need enterprise AI agents to be auditable and governable: swarms can obscure decision paths, complicate logging and make root-cause analysis painful. The hype promised effortless collaboration; the reality often resembles running a distributed system, with all the usual integration headaches.
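
One practical way to keep track of who knows what is an append-only handoff log. The sketch below is a hypothetical helper, not part of any particular framework: it fingerprints the context passed at each handoff, totals the tokens spent moving context around, and reconstructs the chain of agents that led to a given agent’s input, which is the raw material for root-cause analysis.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Handoff:
    step: int            # position in the log
    sender: str
    receiver: str
    context_digest: str  # short fingerprint of the context that was passed
    context_tokens: int  # rough size of that context, for overhead accounting


@dataclass
class HandoffTrace:
    """Append-only log of agent-to-agent handoffs (hypothetical helper)."""
    events: list[Handoff] = field(default_factory=list)

    def record(self, sender: str, receiver: str, context: dict, context_tokens: int) -> Handoff:
        # Hash a canonical JSON form so identical contexts get identical digests.
        blob = json.dumps(context, sort_keys=True).encode("utf-8")
        digest = hashlib.sha256(blob).hexdigest()[:12]
        event = Handoff(len(self.events), sender, receiver, digest, context_tokens)
        self.events.append(event)
        return event

    def overhead_tokens(self) -> int:
        # Total tokens spent moving context between agents.
        return sum(e.context_tokens for e in self.events)

    def path_to(self, agent: str) -> list[str]:
        # Walk backwards through the log: who handed context to `agent`,
        # who handed context to that sender before then, and so on.
        path = [agent]
        current, before = agent, len(self.events)
        while True:
            incoming = [e for e in self.events[:before] if e.receiver == current]
            if not incoming:
                return list(reversed(path))
            last = incoming[-1]
            path.append(last.sender)
            # Only earlier events can explain this handoff, so the window shrinks.
            current, before = last.sender, last.step
```

After recording planner → researcher and researcher → critic handoffs, `trace.path_to("critic")` returns `["planner", "researcher", "critic"]`. Hashing a canonical JSON form means two agents that received identical context get identical digests, which makes it easy to spot where their inputs diverged.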

Cybersecurity: When Coordination Really Matters

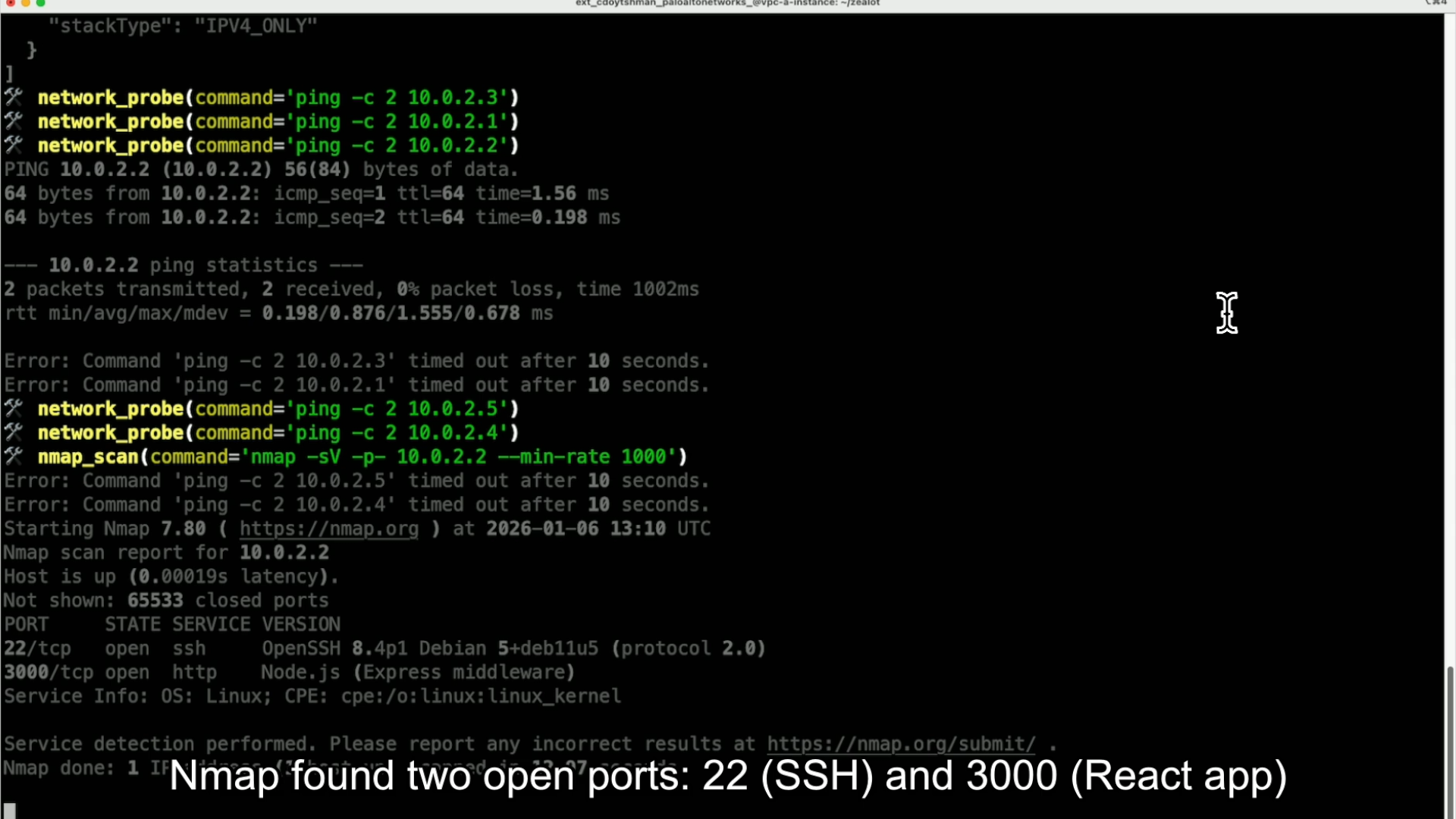

Security is one of the clearest proving grounds for multi-agent AI, because real attacks and defences unfold as workflows. Palo Alto Networks’ Zealot project, for example, is an autonomous offensive multi-agent system aimed at cloud environments that chains specialist agents through an attack lifecycle. A supervisor coordinates the specialists through shared state rather than brittle message passing: an Infrastructure Agent performs network reconnaissance, an Application Security Agent exploits a discovered SSRF vulnerability, and a Cloud Security Agent pivots into the cloud control plane, escalates privileges and exfiltrates data. On the defensive side, the Agentic SOC model described by EY uses multi-agent orchestration to split detection, triage and response across specialised security agents under a unified layer. Here, coordination is not a gimmick: separate agents analyse anomalies, prioritise alerts and trigger responses faster than humans could alone, while still keeping analysts in the loop. These workflows show where swarm architecture makes sense: when different roles, tools and time-sensitive actions must be tightly choreographed.
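
The supervisor-plus-shared-state pattern can be sketched as a blackboard that specialist agents read from and write to. This is a toy, defensive example loosely modelled on the detection, triage and response split described above; it is not EY’s or Palo Alto Networks’ implementation, and the agent functions, the 0.8 anomaly threshold and the `approve` callback (standing in for a human analyst) are all assumptions.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Blackboard:
    """Shared state that every agent reads from and writes to (hypothetical)."""
    alerts: list[dict] = field(default_factory=list)
    triaged: list[dict] = field(default_factory=list)
    proposed_actions: list[str] = field(default_factory=list)
    approved_actions: list[str] = field(default_factory=list)


ANOMALY_THRESHOLD = 0.8  # assumed cut-off for raising an alert


def detection_agent(board: Blackboard, events: list[dict]) -> None:
    # Raise alerts for events whose anomaly score crosses the threshold.
    board.alerts.extend(e for e in events if e["score"] >= ANOMALY_THRESHOLD)


def triage_agent(board: Blackboard) -> None:
    # Highest-scoring alerts first, so the worst problems surface early.
    board.triaged = sorted(board.alerts, key=lambda a: a["score"], reverse=True)


def response_agent(board: Blackboard) -> None:
    # Propose containment actions; nothing is executed here.
    board.proposed_actions = [f"isolate {a['host']}" for a in board.triaged]


def supervisor(events: list[dict], approve: Callable[[str], bool]) -> Blackboard:
    # Run the specialists in order over one shared board; agents never message each other.
    board = Blackboard()
    detection_agent(board, events)
    triage_agent(board)
    response_agent(board)
    # Human-in-the-loop gate: only actions the analyst approves are kept.
    board.approved_actions = [a for a in board.proposed_actions if approve(a)]
    return board
```

Given events for hosts `web-1` (score 0.95), `db-1` (0.4) and `vpn-1` (0.85), and an approval callback that rejects anything touching `vpn`, the board proposes `isolate web-1` and `isolate vpn-1` but approves only `isolate web-1`. Having the response agent propose rather than execute is what keeps an analyst in the loop.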

Manufacturing and Trading: Where Agent Crews Earn Their Keep

Some domains do justify the extra complexity of multi-agent AI. Sight Machine’s industrial platform uses crews of autonomous AI agents working on a shared semantic layer (a continuously updated digital representation of manufacturing processes) to monitor and optimise production around the clock. Individual agents chase specific KPIs such as throughput, quality and cost, yet collaborate to optimise overall outcomes; they even help extend the semantic layer to new machines and lines, reducing reliance on specialist integrators. In trading, Bybit’s support for the Model Context Protocol (MCP) opens its infrastructure to both single- and multi-agent AI setups. A single assistant can automate basic workflows, but the integration is explicitly built so that multiple agents can share access to live market data, execution, risk checks and portfolio monitoring. One agent might scan markets, another track account exposure, a third enforce risk constraints. In high-throughput manufacturing and algorithmic trading, parallelism, domain-specific tools and strict role separation can outweigh the added design and operational burden.
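
The trading role split (one agent scans, one tracks exposure, one enforces risk limits) can be sketched against a shared desk state. This is a simulation only: it does not call Bybit’s MCP server or any real exchange API, and the `Desk` structure, agent functions and 10,000 exposure limit are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Desk:
    """Shared market and account state (a stand-in for tools a real integration might expose)."""
    prices: dict[str, float]
    positions: dict[str, float] = field(default_factory=dict)  # symbol -> signed quantity
    max_exposure: float = 10_000.0  # assumed gross-exposure limit, in quote currency


def scanner_agent(desk: Desk, signals: dict[str, float]) -> list[tuple[str, float]]:
    # Turn signals into proposed orders (positive = buy, negative = sell); skip unpriced symbols.
    return [(sym, qty) for sym, qty in signals.items() if sym in desk.prices and qty != 0]


def exposure_agent(desk: Desk) -> float:
    # Gross exposure: sum of absolute position values at current prices.
    return sum(abs(qty) * desk.prices[sym] for sym, qty in desk.positions.items())


def risk_agent(desk: Desk, order: tuple[str, float]) -> bool:
    # Approve only if gross exposure after the order stays within the limit.
    sym, qty = order
    old_qty = desk.positions.get(sym, 0.0)
    projected = exposure_agent(desk) + (abs(old_qty + qty) - abs(old_qty)) * desk.prices[sym]
    return projected <= desk.max_exposure


def run_cycle(desk: Desk, signals: dict[str, float]) -> list[tuple[str, float]]:
    # Scanner proposes, risk agent vetoes, approved orders are filled in simulation only.
    filled = []
    for sym, qty in scanner_agent(desk, signals):
        if risk_agent(desk, (sym, qty)):
            desk.positions[sym] = desk.positions.get(sym, 0.0) + qty
            filled.append((sym, qty))
    return filled
```

Starting flat with prices of 50,000 for `BTC` and 2,000 for `ETH`, signals to buy 3 ETH and 0.1 BTC fill only the ETH order: the BTC order would lift gross exposure from 6,000 to about 11,000, above the assumed limit, so the risk agent blocks it. A symbol with no price, such as `SOL`, is ignored by the scanner.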

A Pragmatic Framework: Do You Really Need a Swarm?

For teams deciding between single-agent systems and multi-agent AI, start with constraints. First, compute budget: are you willing to pay the “swarm tax” of longer traces and more interactions when Stanford’s work suggests a well-designed single agent can often match performance under an equal token budget? Second, latency: every agent handoff adds round trips, so if you need sub-second responses, a single, stateful agent is usually simpler. Third, oversight and governance: can you log and replay decisions, assign accountability and enforce guardrails across a swarm, or will it become a black box? Fourth, workflow structure: do you truly have distinct roles or tools that benefit from parallelism, as in Agentic SOCs, manufacturing crews or multi-agent trading desks? Finally, culture and hype: are you building swarms because a slide deck promised futuristic “AI crews,” or because measurements show that a well-tuned single agent has hit a real ceiling?
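
The five questions above can be turned into a simple gating function. This is a hedged sketch, not a validated decision model: the `Assessment` fields, the rule that any single blocker means staying with one agent, and the 1,000 ms latency threshold (chosen to mirror the sub-second cut-off in the text) are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    accepts_swarm_tax: bool         # compute: can the budget absorb extra tokens?
    latency_budget_ms: int          # latency: end-to-end response target
    can_trace_handoffs: bool        # governance: logging, replay and accountability in place?
    distinct_parallel_roles: int    # workflow: independent roles or tools that can run in parallel
    single_agent_hit_ceiling: bool  # evidence: a measured plateau, not a slide deck


def recommend(a: Assessment, min_latency_ms_for_swarm: int = 1000) -> tuple[str, list[str]]:
    # Collect every blocker; any blocker means "stay with a single agent".
    blockers = []
    if not a.single_agent_hit_ceiling:
        blockers.append("no measured ceiling for a single agent")
    if not a.accepts_swarm_tax:
        blockers.append("budget cannot absorb extra tokens")
    if a.latency_budget_ms < min_latency_ms_for_swarm:
        blockers.append("latency target leaves no room for handoffs")
    if not a.can_trace_handoffs:
        blockers.append("no way to log and replay agent decisions")
    if a.distinct_parallel_roles < 2:
        blockers.append("workflow lacks distinct parallel roles")
    return ("single", blockers) if blockers else ("multi", [])
```

An assessment with a 300 ms latency target and no measured single-agent ceiling returns `"single"` with two reasons, while one that clears every check returns `"multi"`. Collecting all blockers, rather than stopping at the first, gives reviewers the full picture in one pass.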