From Chatbot to Co‑Worker: What GPT‑5.5 Actually Changes

GPT‑5.5 is being framed less as a conversational toy and more as a practical colleague for heavy digital work. OpenAI says the model is tuned for coding, online research, data analysis, document creation, spreadsheets and hands‑on computer use, with an emphasis on multi‑step, execution‑heavy tasks. Rather than needing constant prompt babysitting, GPT‑5.5 can plan, act, check its own work and iterate until a task is done. The model’s “Thinking” mode is designed to give faster, more concise answers while handling difficult problems, and early evaluations show gains across professional benchmarks, including broad knowledge‑work tests and autonomous computer use. In day‑to‑day terms, that means a user can hand over a vague request like “turn this messy dataset and email thread into a board‑ready report,” and expect GPT‑5.5 to gather context, choose tools and output structured work with fewer follow‑up corrections than earlier models.
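The plan, act, check and iterate cycle described above can be pictured as a simple control loop. The sketch below is only an illustration of that pattern, not how GPT‑5.5 or Codex is implemented: `run_until_done`, `act`, `check` and the toy report sections are all hypothetical names invented for this example.

```python
from typing import Callable, List, Tuple

def run_until_done(
    act: Callable[[List[str]], List[str]],
    check: Callable[[List[str]], List[str]],
    max_rounds: int = 5,
) -> Tuple[List[str], int]:
    """Repeat act -> check until the checker reports no remaining problems."""
    feedback: List[str] = []
    for round_no in range(1, max_rounds + 1):
        result = act(feedback)        # do the work, using the last round's feedback
        feedback = check(result)      # self-review: list whatever is still missing
        if not feedback:
            return result, round_no
    raise RuntimeError(f"still failing after {max_rounds} rounds: {feedback}")

# Toy stand-ins (hypothetical): a "report" is just a list of section titles.
REQUIRED = ("Summary", "Findings", "Next steps")
draft: List[str] = ["Summary"]

def act(feedback: List[str]) -> List[str]:
    draft.extend(feedback)            # add the sections the checker asked for
    return list(draft)

def check(result: List[str]) -> List[str]:
    return [section for section in REQUIRED if section not in result]

report, rounds = run_until_done(act, check)
print(report, rounds)
```

The cap on rounds is the design choice worth noting: an autonomous loop needs a stopping rule other than "the checker is satisfied", otherwise a task that can never pass would run indefinitely.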

Inside GPT‑5.5 Coding: 400K Context and Agentic Workflows in Codex

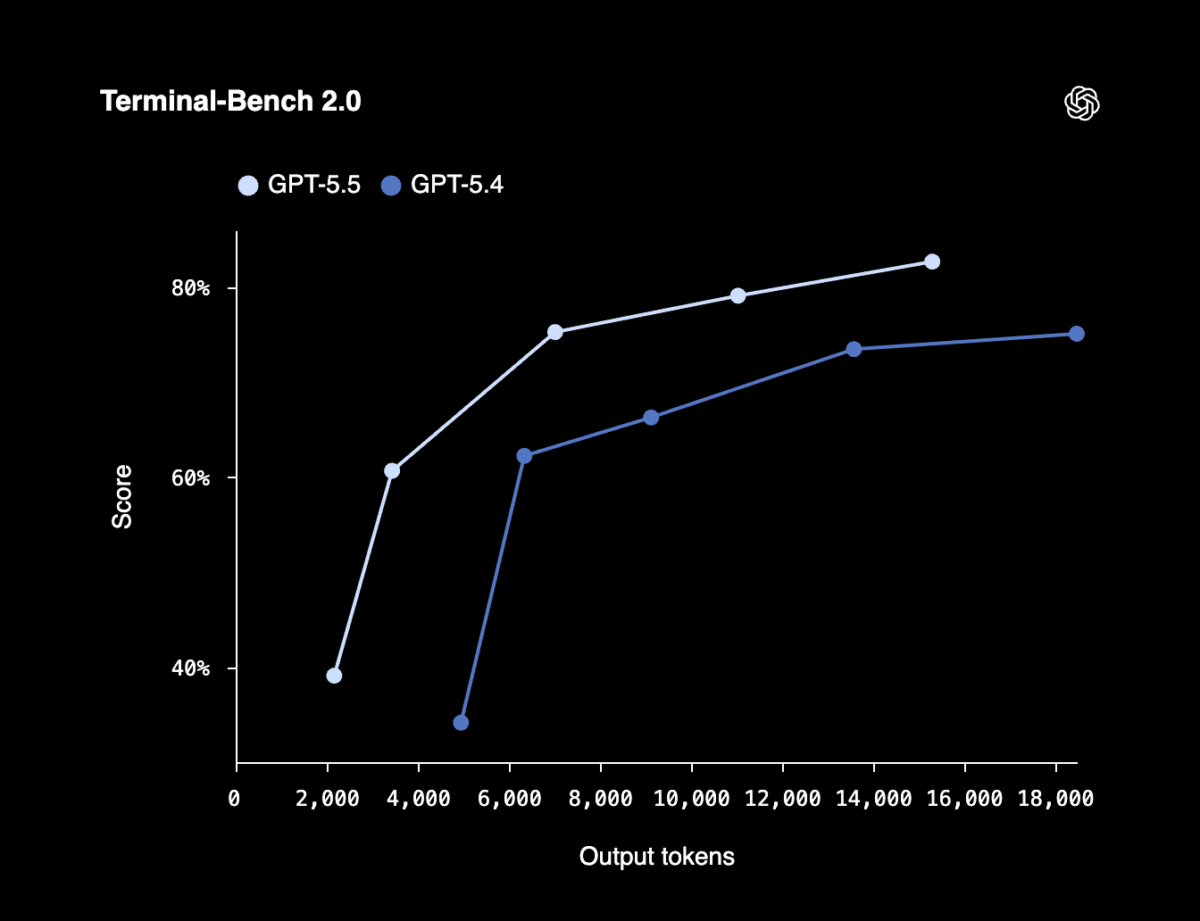

For developers, GPT‑5.5’s biggest leap is as an agentic coding assistant. In Codex, the model ships with a 400K‑token context window, letting it read far more of a repository, documentation, logs and test output in one go. That matters for refactors, debugging and feature work that span dozens of files. On coding benchmarks focused on real workflows, GPT‑5.5 posts state‑of‑the‑art results: 82.7% on Terminal‑Bench 2.0 for complex command‑line tasks, and 58.6% on SWE‑Bench Pro for resolving real GitHub issues in a single pass. OpenAI also reports improvements on long‑horizon engineering tasks that can take humans up to 20 hours. Early Codex testers say GPT‑5.5 stays on task longer, coordinates tools more reliably and can handle jobs like merging large, diverged branches or rewriting system components end‑to‑end from natural‑language prompts, while using fewer tokens and matching GPT‑5.4’s latency.
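To get a feel for what a 400K window means in practice, the rough sketch below estimates which files from a repository would fit in one request. It rests on loud assumptions: the four-characters-per-token ratio is a common rule of thumb rather than a real tokenizer, the 50,000-token reserve for instructions and output is an arbitrary illustrative figure, and `estimate_tokens` and `pack_files` are hypothetical helpers, not part of any OpenAI tooling.

```python
from typing import Dict, List, Tuple

CONTEXT_TOKENS = 400_000   # window size quoted in the article for Codex
CHARS_PER_TOKEN = 4        # assumption: rough heuristic, not a real tokenizer

def estimate_tokens(text: str) -> int:
    """Ceiling of len(text) / CHARS_PER_TOKEN."""
    return -(-len(text) // CHARS_PER_TOKEN)

def pack_files(
    files: Dict[str, str],
    budget: int = CONTEXT_TOKENS,
    reserve: int = 50_000,   # assumption: space kept for the prompt and the reply
) -> Tuple[List[str], List[str]]:
    """Greedily include the smallest files first; return (included, skipped)."""
    available = budget - reserve
    used = 0
    included: List[str] = []
    skipped: List[str] = []
    for name, text in sorted(files.items(), key=lambda kv: len(kv[1])):
        cost = estimate_tokens(text)
        if used + cost <= available:
            included.append(name)
            used += cost
        else:
            skipped.append(name)
    return included, skipped

# Synthetic stand-ins for a source file, a large log and a short README.
repo = {
    "service.py": "x" * 400_000,     # about 100,000 estimated tokens
    "build.log": "x" * 1_200_000,    # about 300,000 estimated tokens
    "README.md": "x" * 40,           # about 10 estimated tokens
}
included, skipped = pack_files(repo)
print(included, skipped)
```

Even under these generous assumptions, a single large log can crowd out everything else, which is why a bigger window reduces, but does not remove, the need to choose what the model reads.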

Nvidia’s Company‑Wide GPT‑5.5 Rollout: Debugging in Hours, Not Days

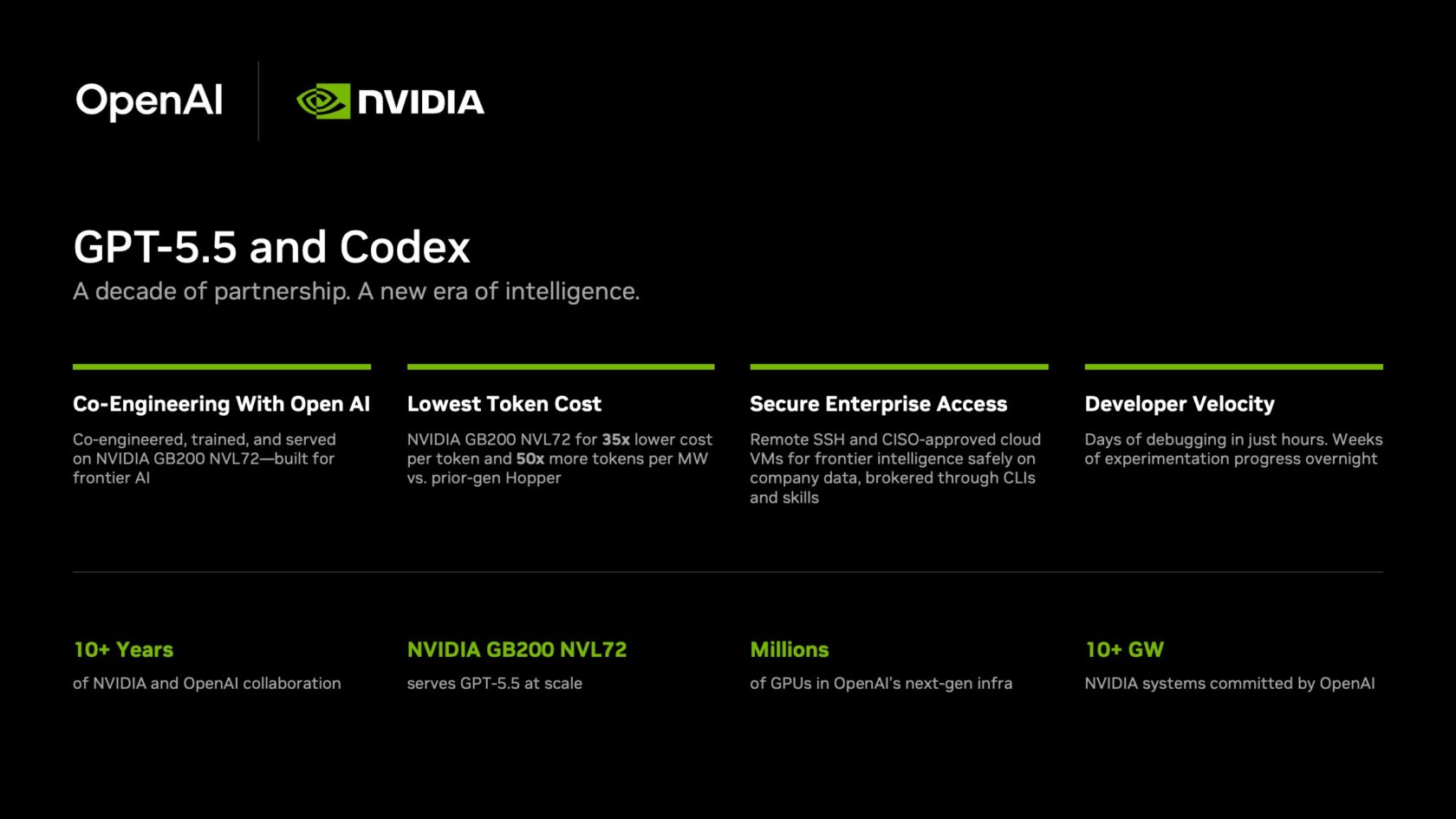

Nvidia offers an early look at GPT‑5.5 in real production work. More than 10,000 employees across engineering, product, legal, marketing, finance, sales, HR and operations are using the GPT‑5.5‑powered Codex app on Nvidia’s GB200 NVL72 systems. Internally, engineers report debugging cycles shrinking from days to hours and experimentation on complex multi‑file codebases dropping from weeks to overnight. Teams are shipping end‑to‑end features directly from natural‑language prompts with higher reliability and fewer wasted iterations than with prior models. Nvidia notes that GB200 NVL72 can deliver sharply lower cost per million tokens and much higher token throughput per megawatt compared with earlier infrastructure, making frontier‑model inference viable at enterprise scale. CEO Jensen Huang has urged all staff to treat Codex as an everyday tool, calling GPT‑5.5 “definitely the next level of AI” and backing the rollout with a dedicated Codex Lab at headquarters.

From Fintech Pilots to Workspace Agents: GPT‑5.5 in Enterprise Workflows

Beyond core developers, GPT‑5.5 is being wired into broader enterprise workflows. Fintech firm F2 is among the early adopters, using the new model for coding and data‑analysis tasks in its financial stack. OpenAI’s Workspace Agents push the idea further: enterprises can design agents that plug directly into Slack, Salesforce, Google Drive, Microsoft apps, Notion, Atlassian Rovo and more. Powered by Codex, these agents can write and run code, call plugins, pull data, generate decks or reports, and continue running in the background on schedules. Instead of one‑off chats, they behave like persistent co‑workers embedded in channels and business systems, handling recurring reporting, reconciliations or workflow hand‑offs across teams. With GPT‑5.5 arriving in platforms such as Microsoft Foundry, the model is quickly becoming a default layer for enterprise work, sitting inside existing tools rather than beside them.

Guardrails, Cybersecurity and Why Enterprises Are Leaning In

As GPT‑5.5 takes on more autonomous, agent‑like work, enterprises are scrutinising safety and cybersecurity. OpenAI positions the model as having stricter safeguards for coding, research and computer‑use scenarios, including better refusal behaviour and more constrained tool use when tasks edge into risky territory. GPT‑5.5 shows improvements on scientific and biosecurity‑related benchmarks, suggesting tighter handling of sensitive domains, and its deployment inside Codex and Workspace Agents is framed around enterprise‑grade controls: role‑based access to tools, workspace‑scoped data and auditable actions. Nvidia’s internal rollout underscores this focus, with Codex deployed on secure infrastructure designed to sit safely inside corporate networks. For CIOs, the pitch is that GPT‑5.5 can take on more of the workflow—running scripts, touching production‑adjacent systems, collating internal documents—without sacrificing oversight. Those enhanced guardrails are becoming a prerequisite as organisations move from experimentation to relying on GPT‑5.5 as an embedded co‑worker.
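The controls named above, role‑based access to tools and auditable actions, follow a familiar pattern that can be sketched in a few lines. This is a generic illustration under stated assumptions, not OpenAI's or Nvidia's actual mechanism: `ToolGate`, `ROLE_TOOLS` and the role and tool names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set

# Hypothetical policy: which tools each role may invoke.
ROLE_TOOLS: Dict[str, Set[str]] = {
    "analyst": {"read_drive", "run_query"},
    "engineer": {"read_drive", "run_query", "run_script"},
}

@dataclass
class ToolGate:
    role_tools: Dict[str, Set[str]]
    audit_log: List[Dict[str, Any]] = field(default_factory=list)

    def call(self, user: str, role: str, tool: str,
             fn: Callable[..., Any], *args: Any) -> Any:
        """Record every attempt, then run fn only if the role permits the tool."""
        allowed = tool in self.role_tools.get(role, set())
        self.audit_log.append(
            {"user": user, "role": role, "tool": tool, "allowed": allowed}
        )
        if not allowed:
            raise PermissionError(f"role '{role}' may not use tool '{tool}'")
        return fn(*args)

gate = ToolGate(ROLE_TOOLS)

# Permitted: analysts may run queries (str.upper stands in for a real tool).
result = gate.call("ana", "analyst", "run_query", str.upper, "select 1")

# Denied: analysts may not run scripts, but the attempt is still logged.
try:
    gate.call("ana", "analyst", "run_script", print, "deploy.sh")
except PermissionError as exc:
    denied = str(exc)

print(result, denied, gate.audit_log)
```

Logging before the permission check is deliberate: refused attempts are often the entries an auditor most wants to see.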