Why Custom GPT Instructions Drift—Even When You Set Clear Rules

Many teams are discovering that custom GPTs often ignore carefully written guidelines. You might specify “no em dashes” or “avoid certain phrases,” only to see those exact patterns reappear in the output. This isn’t always a sign that your instructions are bad or that the model is broken. It usually means your guidance isn’t embedded in a repeatable system. Large language models generate text statistically, so your style rules compete with the patterns the model learned in training, and the model doesn’t carry those rules from one chat or task to the next unless you reintroduce them. When you build a custom GPT once and assume it now fully understands your brand, you’re setting yourself up for instruction drift: the model falls back to generic patterns and occasionally hallucinates. To get reliable AI instruction compliance, you need a process that translates institutional knowledge (how you think, write, and review) into structured prompts that are reinforced every single time.

Turn Institutional Knowledge into Standardized Prompt Frameworks

The real fix for custom GPT consistency is standardization. Before you spin up a new GPT or workflow, capture the rules you already follow: editorial voice, formatting conventions, trusted sources, banned phrases, even what counts as “AI slop.” Treat this like a lightweight style guide for machines. Then convert those standards into a reusable prompt framework with sections for context, audience, objectives, must-include elements, and must-avoid patterns. Attach or reference the same documents for every task, rather than assuming the system remembers prior chats. This repeatable infrastructure makes your knowledge, not a generic default, the baseline for each output. The model still brings creative flexibility, but within well-defined rails. Over time, these frameworks become living documents that you refine as you learn which instructions meaningfully improve quality and which can be simplified or removed.
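As a rough illustration, the framework sections described above could be captured in a small data structure that renders the same prompt layout for every task. This is only a sketch: the `PromptFramework` class, its field names, and the section headings are hypothetical choices, not part of any particular GPT tool.

```python
from dataclasses import dataclass, field


@dataclass
class PromptFramework:
    """Hypothetical container for the framework sections (names are assumptions)."""
    context: str
    audience: str
    objectives: list[str]
    must_include: list[str] = field(default_factory=list)
    must_avoid: list[str] = field(default_factory=list)

    def render(self, task: str) -> str:
        """Build the full prompt text, restating every rule for this task."""
        def bullets(items: list[str]) -> str:
            # An empty list is shown explicitly so the section never disappears.
            return "\n".join(f"- {item}" for item in items) or "- (none)"

        sections = [
            ("CONTEXT", self.context),
            ("AUDIENCE", self.audience),
            ("OBJECTIVES", bullets(self.objectives)),
            ("MUST INCLUDE", bullets(self.must_include)),
            ("MUST AVOID", bullets(self.must_avoid)),
            ("TASK", task),
        ]
        return "\n\n".join(f"## {title}\n{body}" for title, body in sections)


# Example values are placeholders, not recommendations.
brand_framework = PromptFramework(
    context="B2B software company with a plain-spoken editorial voice.",
    audience="Operations managers at mid-sized firms.",
    objectives=["Explain the feature", "Link to the documentation"],
    must_avoid=["em dashes", "unsupported statistics"],
)
prompt = brand_framework.render("Draft a 300-word product update.")
```

Because `render` is called for every task, the rules travel with each request instead of depending on what the system may or may not recall from an earlier chat.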

Building Prompt Libraries for Content Teams and Creators

For content creators, marketers, and communicators, prompt engineering tips work at scale only when you turn them into libraries. Instead of one-off prompts scattered across chats, build a catalog of templates: press releases, blog posts, internal memos, campaign briefs, FAQs, or AI search-optimized copy. Each template should bake in your standards: headline formulas, lede structures, tone guidelines, citation rules, and compliance requirements. Some AI writing tools already encourage this pattern by letting teams lock templates or workflows so every draft starts from the same blueprint. That shared starting point reduces blank-page anxiety and keeps junior contributors aligned with brand voice. As you test these prompts across assets, track which ones yield fewer revisions and better AI instruction compliance. Retire weak templates, promote strong ones, and treat your prompt library as core infrastructure, not a side project.
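One way to picture such a library is a catalog of named templates that all end with the same standards block, plus a simple log of how many revision rounds each template needed. The sketch below uses Python's standard `string.Template`; the template wording, the `SHARED_STANDARDS` text, and the helper names are all illustrative assumptions.

```python
from string import Template

# Standards appended to every prompt (placeholder wording).
SHARED_STANDARDS = (
    "Standards for every draft:\n"
    "- Tone: plain, confident, no hype.\n"
    "- Cite a source for every statistic.\n"
    "- Do not use banned phrases from the style guide."
)

# The catalog: one template per content type.
LIBRARY = {
    "press_release": Template(
        "Write a press release announcing $topic for $audience.\n"
        "Open with a one-sentence lede that states the news."
    ),
    "blog_post": Template(
        "Write a blog post about $topic for $audience.\n"
        "Use a question-style headline and short paragraphs."
    ),
}

# Revision counts per template, used to compare templates over time.
revision_log: dict[str, list[int]] = {}


def build_prompt(kind: str, **fields: str) -> str:
    """Fill the named template and append the shared standards.

    Raises KeyError if the template or one of its placeholders is missing.
    """
    body = LIBRARY[kind].substitute(**fields)
    return f"{body}\n\n{SHARED_STANDARDS}"


def record_revisions(kind: str, count: int) -> None:
    """Log how many revision rounds a draft from this template needed."""
    revision_log.setdefault(kind, []).append(count)


def average_revisions(kind: str) -> float:
    """Average revision rounds for a template; lower suggests a stronger template."""
    counts = revision_log[kind]
    return sum(counts) / len(counts)
```

Failing loudly on a missing placeholder is a deliberate choice here: a half-filled template that silently reaches the model is harder to spot than an error at build time.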

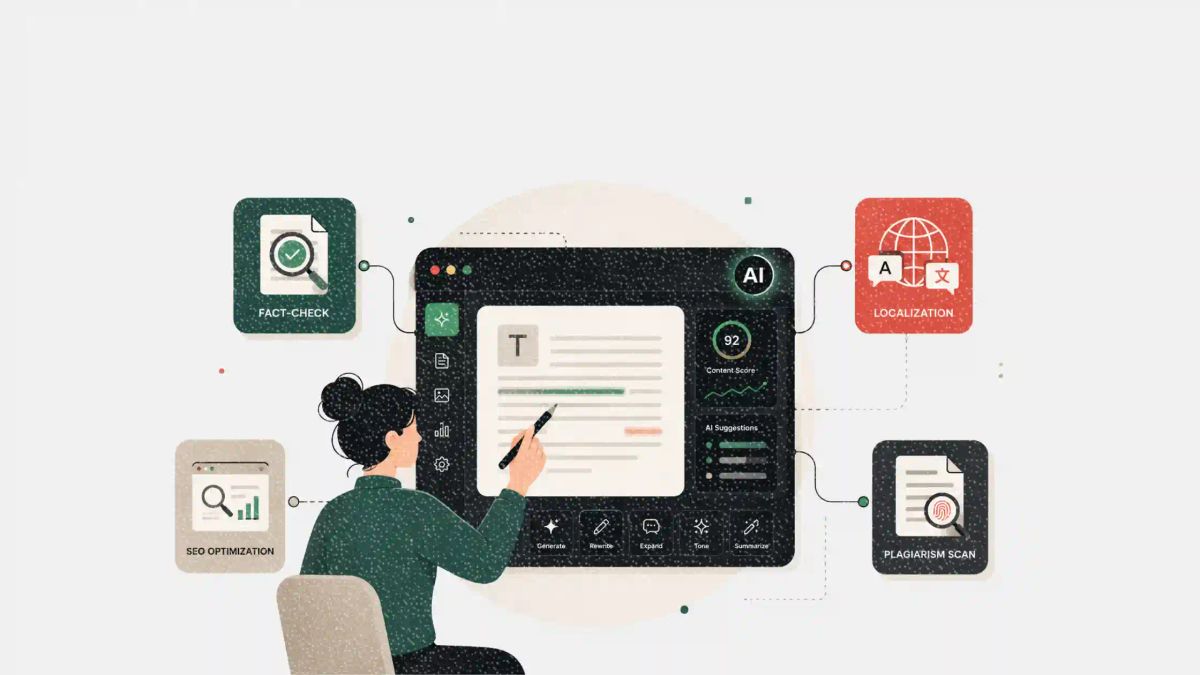

Using Step-by-Step Workflows to Reduce Hallucinations and Errors

Custom GPTs are more reliable when you stop asking them to do everything at once. Break complex tasks into ordered steps: first outline, then draft, then fact-check, and finally localize or repurpose. Clearly label each step and restate only the instructions relevant to it, such as hallucination guidelines, source trust maps, or localization constraints. Some modern tools support this naturally, letting you move from ideation to revision and SEO refinement within a single environment. Others let you attach PDFs or style sheets to each prompt so the system is reminded of your rules every time. This stepwise approach reduces hallucinations by narrowing the model’s focus and giving you checkpoints to correct course. Think of it as talking to a very capable intern: the more you sequence instructions and review intermediate outputs, the more consistent and less chaotic the results become.
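The sequence above can be sketched as a small pipeline in which each step receives only its own rules plus the previous step's output. This is a minimal sketch under stated assumptions: `generate` stands in for whatever model call you use (it is not a real API), and the rule text, step names, and optional `review` checkpoint are hypothetical.

```python
from typing import Callable, Optional

# Rule snippets, keyed so each step can pull in only what it needs (placeholder text).
RULES = {
    "style": "Follow the house style guide; avoid banned phrases.",
    "sources": "Cite only sources on the trusted list; flag unverifiable claims.",
    "localization": "Use the target market's spelling, units, and date formats.",
}

# Ordered steps: (label, instruction, keys of the rules relevant to that step).
STEPS = [
    ("outline", "Produce a bullet outline only.", ["style"]),
    ("draft", "Expand the outline into a full draft.", ["style"]),
    ("fact_check", "Return the draft with unverifiable claims marked [UNVERIFIED].", ["sources"]),
    ("localize", "Adapt the checked draft for the target market.", ["style", "localization"]),
]


def build_step_prompt(name: str, instruction: str, rule_keys: list[str], previous: str) -> str:
    """Label the step, restate only its rules, and pass along the prior output."""
    rules = "\n".join(f"- {RULES[key]}" for key in rule_keys)
    return (
        f"STEP: {name}\n{instruction}\n\n"
        f"RULES FOR THIS STEP:\n{rules}\n\n"
        f"INPUT:\n{previous}"
    )


def run_workflow(
    brief: str,
    generate: Callable[[str], str],
    review: Optional[Callable[[str, str], str]] = None,
) -> list[tuple[str, str]]:
    """Run the steps in order; `review` is an optional human or automated checkpoint."""
    current = brief
    history = []
    for name, instruction, rule_keys in STEPS:
        current = generate(build_step_prompt(name, instruction, rule_keys, current))
        if review is not None:
            # The reviewer may correct the output before the next step sees it.
            current = review(name, current)
        history.append((name, current))
    return history
```

Keeping the history of intermediate outputs makes it easier to see at which step an error entered, rather than discovering it only in the final text.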

Maintaining Long-Term Consistency Across AI-Driven Workflows

Instruction drift becomes most painful when multiple people and projects rely on the same AI workflows. Without shared standards, one teammate’s “brand voice” contradicts another’s, and the custom GPT ping-pongs between styles. To prevent this, treat AI governance like any other editorial process: align on a single source of truth for guidelines, centralize your prompt libraries, and embed them in the tools everyone already uses. Unified platforms that handle ideation, drafting, revision, and localization make it easier to keep rules consistent across channels. Regularly audit outputs for recurring errors, update your banned phrases and “AI tells,” and feed those changes back into the frameworks. Over time, the combination of shared templates, iterative refinement, and disciplined reinforcement turns your custom GPT from a fickle experiment into a dependable collaborator that reflects how your organization actually communicates.
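The audit step can be partly automated. As a minimal sketch, the check below counts banned phrases and “AI tells” across a batch of outputs so that recurring offenders are easy to spot; the `BANNED` list is a made-up example, and matching is plain case-insensitive substring search, so “delve” also matches “delves.”

```python
import re
from collections import Counter

# Example list only; "\u2014" is the em dash character.
BANNED = ["\u2014", "delve", "in today's fast-paced world"]


def audit(text: str, banned: list[str] = BANNED) -> Counter:
    """Count case-insensitive occurrences of each banned phrase in one output."""
    hits = Counter()
    for phrase in banned:
        # re.escape keeps punctuation in a phrase from being read as regex syntax.
        count = len(re.findall(re.escape(phrase), text, flags=re.IGNORECASE))
        if count:
            hits[phrase] = count
    return hits


def audit_batch(outputs: list[str], banned: list[str] = BANNED) -> Counter:
    """Total the hits across many outputs to surface recurring problems."""
    total = Counter()
    for text in outputs:
        total.update(audit(text, banned))
    return total
```

Phrases that keep appearing in `audit_batch` results are candidates for stronger wording in the shared guidelines, which closes the loop between review and the frameworks.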