Vision Pro Is Changing, Not Vanishing

Claims that the Apple Vision Pro is finished stem largely from reports about team reshuffles and a delayed Vision Pro sequel. Some commentary has framed this as Apple abandoning its mixed‑reality headset after a disappointing debut. Yet reporting from multiple outlets indicates something more nuanced. The Vision Products Group has been partially broken up and folded into other organizations, but development work on Vision Pro hardware and visionOS continues. One analysis argues that a team would not learn of its own dissolution from rumors, and contrasts the situation with Apple’s handling of other cancelled projects. Sales estimates of around 600,000 units in the first year hardly match the profile of a product quietly erased from the roadmap. Apple appears to be redistributing talent and focus around a broader spatial computing strategy rather than betting everything on rapid-fire headset sequels.

AI Spatial Intelligence: The Real Foundation of Apple’s Strategy

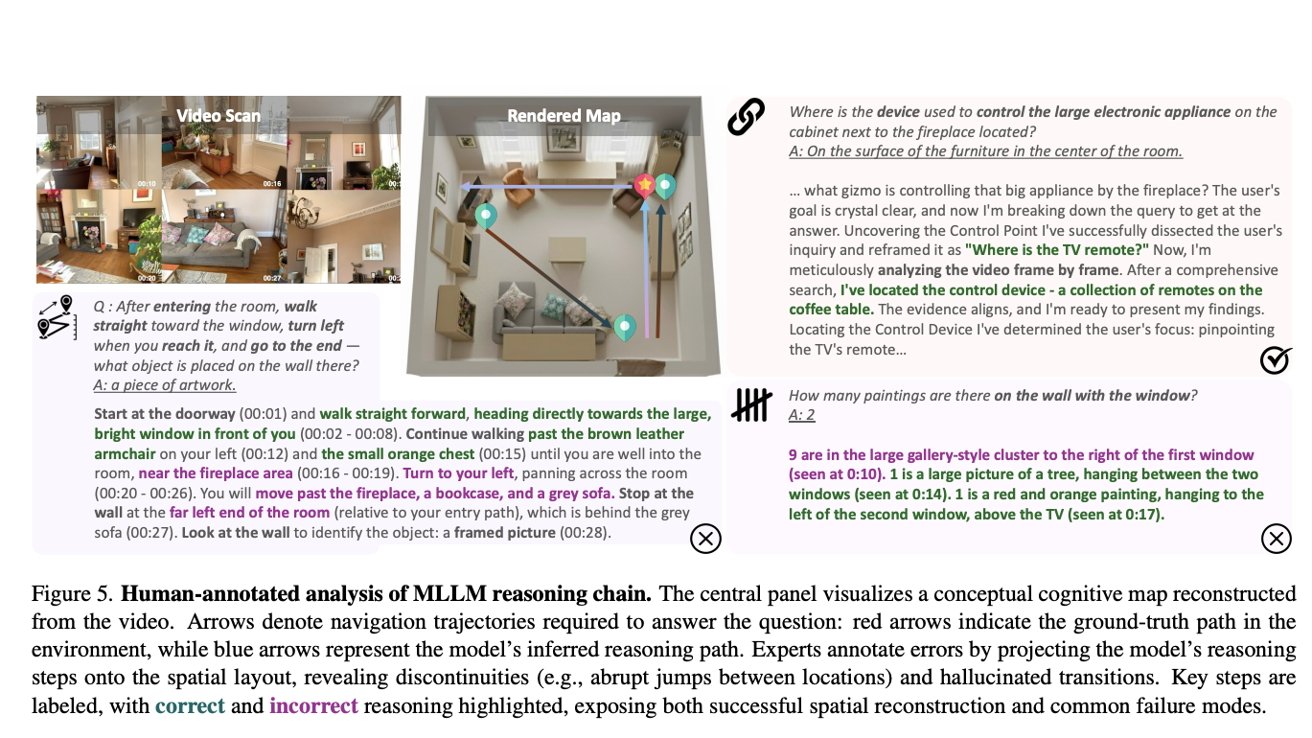

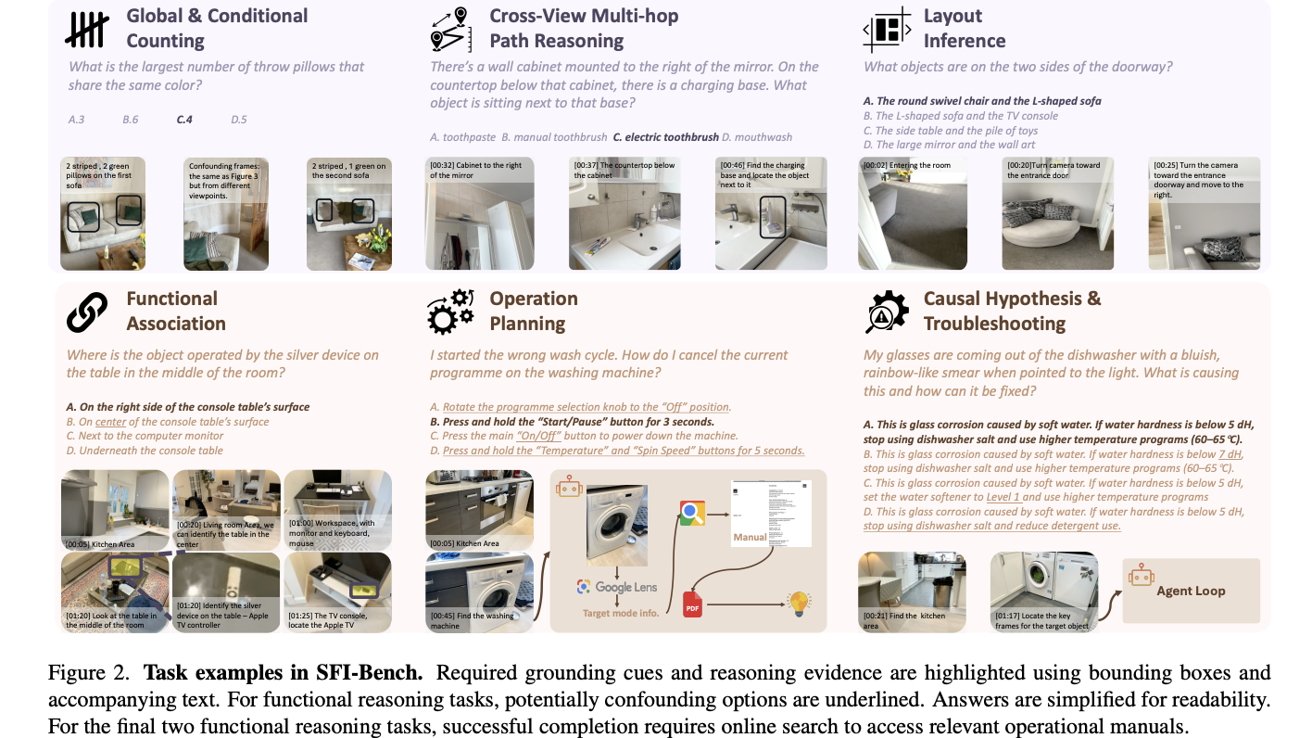

Behind the headlines, Apple is investing heavily in AI systems that understand physical spaces in depth. Recent work from its machine learning teams introduces SFI-Bench, a Spatial-Functional Intelligence Benchmark for multimodal large language models. Rather than just recognizing objects or room layouts, the benchmark tests models on what things are for, how they are operated and how to troubleshoot them. Example tasks include finding the largest set of matching bottles on a shelf, cancelling a washing machine program or identifying a TV remote’s function. The research findings show that even leading models still struggle with spatial memory and logical reasoning, especially when working offline. This line of research points directly to future experiences where a headset, phone or Apple smart glasses can perceive your environment and offer contextual help, not just overlay floating windows.

Accessibility First: Sign Language and 3D Understanding

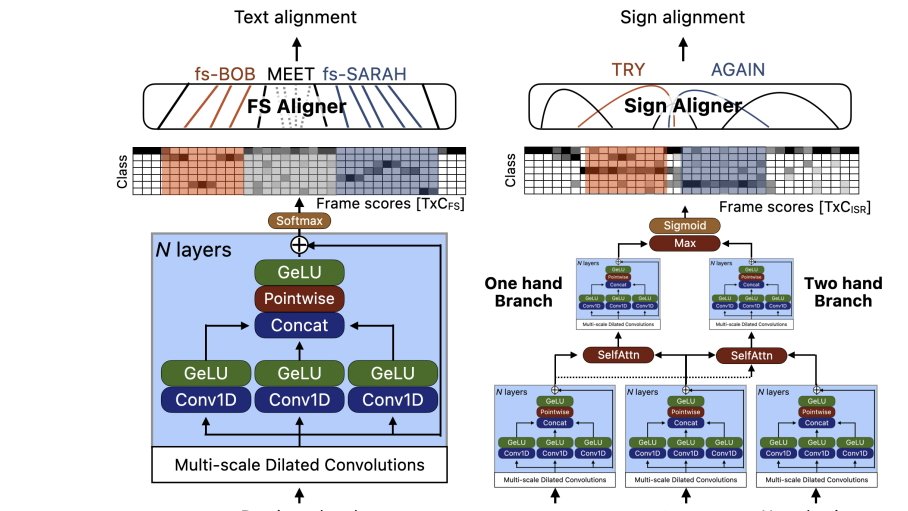

Apple’s spatial computing research is not only about convenience; accessibility is a core pillar. New studies on sign language annotation explore how AI can better interpret complex hand movements and align them with spoken or written language. The work involves neural networks that process temporal sequences and distinguish between one-hand and two-hand signing, improving recognition accuracy in real-world video. Alongside this, research into 3D head modelling and environmental scanning supports more natural, inclusive interfaces: think eye contact that feels right in a virtual meeting, or captions and sign language support that adapt to where people are in a room. These efforts show Apple isn’t simply tweaking headset hardware; it is building the underlying perception stack for a world where spatial computing devices, from Vision Pro to future Apple smart glasses, are more usable for everyone.

Why Smart Glasses Are Taking Priority Over a Vision Pro Sequel

According to recent reporting, Apple does not have a full Vision Pro sequel in active development and is unlikely to ship one for at least two years. Instead, engineering resources are shifting toward making the current headset lighter and smaller over time while prioritizing an entirely new category: Apple smart glasses. Job listings that reference Vision Pro and visionOS are reportedly focused more on glasses hardware and ongoing system maintenance than on a next-generation headset. Internally, Vision Pro work is said to be split into dedicated hardware and software groups, while some executives move to projects like Siri enhancements, AirPods with AI cameras and an AI pendant. That pattern suggests Apple sees Vision Pro as an important but transitional device—an early anchor for visionOS while the company prepares more mainstream, everyday wearables that bring spatial computing out of the living room.

A Longer-Term Vision for Spatial Computing

Taken together, these threads point to a long game rather than a cancelled experiment. Vision Pro’s future role appears to be that of a reference platform and developer testbed for visionOS, spatially aware AI and new interaction models. Meanwhile, Apple’s spatial computing strategy is widening to include wearables that feel closer to regular eyewear than to a bulky headset. Smart glasses, powered by models evaluated against benchmarks like SFI-Bench and informed by sign language research, could offer glanceable information, hands-free assistance and environment-aware guidance without the friction of a full mixed-reality rig. For consumers, the practical takeaway is simple: don’t expect an annual Vision Pro sequel cadence. Expect slower, more deliberate hardware updates paired with rapid progress in software, AI and accessibility, laying the groundwork for a time when spatial computing is as ordinary as pulling a phone from your pocket.