The Illusion of Speed: AI Productivity Gains Without Proof

Engineering leaders increasingly hear that AI coding tools are making teams faster, yet very few can prove it. Vendors highlight eye-catching statistics: controlled trials where developers complete tasks 55% faster, forecasts of 25–30% productivity gains in coming years, and surveys where only a third of teams report high productivity improvements. These numbers describe different things (isolated tasks, future expectations, and current perceptions), yet they are often treated as equivalent. Meanwhile, finance leaders are asked to budget against this noisy mix of evidence, and executives attribute headcount decisions or delivery wins to AI without clear traceability. In this fog, teams risk mistaking localized speed-ups or anecdotal success for sustainable, organization-wide performance gains. The core problem is not the tools themselves; it is the lack of standardized engineering metrics for AI-assisted work that can distinguish real improvement from optimism, hype, and misattribution.

Why DORA Metrics Still Matter in the AI Era

As AI-assisted development spreads, some practitioners argue that DORA metrics are outdated. Yet Google Cloud’s DORA team takes the opposite stance: they position DORA as the backbone for AI productivity measurement. Their updated ROI of AI-Assisted Software Development report describes AI as an amplifier of existing systems, not a standalone transformation. Delivery metrics such as deployment frequency, lead time, change failure rate, and time to restore become the canonical way to observe whether AI-driven changes are improving or destabilizing software delivery. Research cited by DORA shows that AI adoption correlates with higher throughput but also lower delivery stability, a trade-off that only becomes visible when teams track these metrics consistently. Rather than replacing DORA, AI makes its disciplined, outcome-focused measurement even more critical, turning abstract claims about productivity into observable impacts on flow, quality, and reliability.
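To make the four delivery metrics concrete, here is a minimal sketch of how they might be computed from a list of deployment records. The `Deployment` record, its field names, and the choice of medians are assumptions made for illustration; they are not DORA's official definitions or tooling, and the sample data is invented.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when the change reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments: list[Deployment], period_days: int) -> dict:
    """Compute the four delivery metrics over a window of `period_days`."""
    if not deployments or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")

    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    restore_times = [
        d.restored_at - d.deployed_at for d in failures if d.restored_at is not None
    ]

    return {
        "deployments_per_day": len(deployments) / period_days,
        "median_lead_time_hours": median(lt.total_seconds() for lt in lead_times) / 3600,
        "change_failure_rate": len(failures) / len(deployments),
        # None when no failed deployment has a recorded restore time
        "median_time_to_restore_hours": (
            median(rt.total_seconds() for rt in restore_times) / 3600
            if restore_times
            else None
        ),
    }


# Invented sample data: four deployments in one week, one of which failed.
start = datetime(2025, 1, 1, 9, 0)
sample = [
    Deployment(start, start + timedelta(hours=4)),
    Deployment(start, start + timedelta(hours=10), failed=True,
               restored_at=start + timedelta(hours=12)),
    Deployment(start + timedelta(days=1), start + timedelta(days=1, hours=6)),
    Deployment(start + timedelta(days=2), start + timedelta(days=2, hours=8)),
]
metrics = dora_metrics(sample, period_days=7)
print(metrics)
```

Running the same function over a pre-adoption window and a post-adoption window is one simple way to see whether throughput and stability move together or in opposite directions.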

From Perception to Evidence: New Metrics Like Engineering Throughput Value

To close the gap between perceived and actual productivity, some platforms are introducing code-centric metrics. Navigara’s Engineering Throughput Value (ETV) is one such attempt: a per-commit measure designed to test AI-era performance claims directly against the codebase. ETV scores each team’s commits against its own pre-AI baseline, providing a before-and-after view grounded in real development activity rather than surveys or forecasts. This approach seeks to address a familiar pattern: AI being credited for productivity improvements that cannot be tied to specific workflow changes or measurable outcomes. By analyzing commit-level behavior across large open-source projects from major technology companies, Navigara argues that organizations can gain a more objective understanding of how AI actually changes throughput. While ETV does not replace broader frameworks like DORA, it complements them by adding a fine-grained lens on everyday engineering work.

The J-Curve and the Need for Strong Engineering Foundations

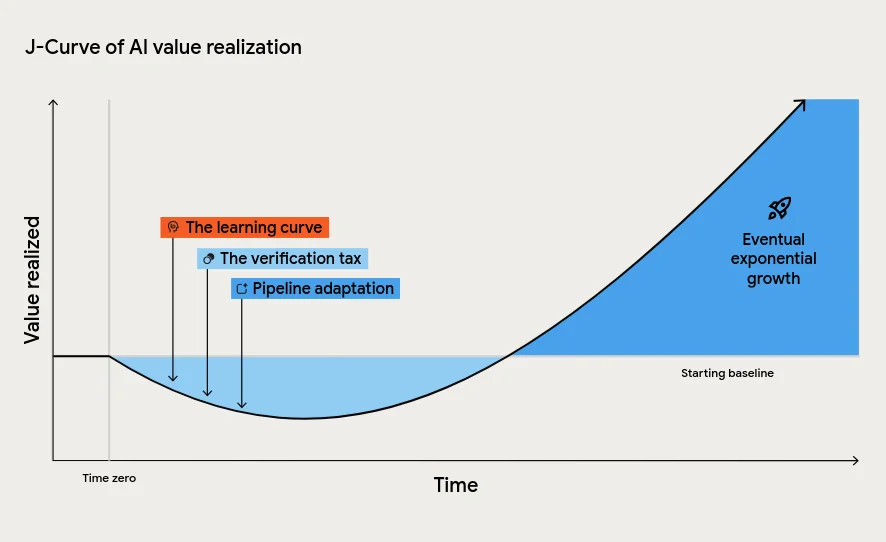

DORA’s latest report warns leaders not to misread the early impact of AI on engineering performance. Most organizations will encounter a “J-Curve” of value realization: productivity dips before it rises. This temporary decline is driven by the learning curve as teams change habits, the verification tax of reviewing AI-generated code, and the pressure on downstream processes like testing and approvals when code volume increases. Without strong foundations—robust internal platforms, disciplined version control, and AI-accessible internal data—these stresses compound into chaos rather than improvement. The report even factors in an instability tax, where increased change failure rates translate into downtime costs, to emphasize that more code shipped faster is not automatically better. DORA’s message is clear: AI will magnify whatever system you already have. Investing in continuous integration, automated testing, and small-batch work is essential to convert AI’s raw potential into reliable software development ROI.
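The instability tax can be illustrated with back-of-the-envelope arithmetic. The sketch below assumes a simple model in which downtime cost equals failed deployments times hours to restore times cost per hour of downtime. This formula, the function name, and every figure are hypothetical and are not taken from the DORA report.

```python
def instability_tax(
    deployments: int,
    change_failure_rate: float,
    hours_to_restore: float,
    downtime_cost_per_hour: float,
) -> float:
    """Estimated downtime cost for a period (assumed model, not DORA's)."""
    failed_deployments = deployments * change_failure_rate
    return failed_deployments * hours_to_restore * downtime_cost_per_hour


# Hypothetical quarter before and after AI adoption, for illustration only:
# more deployments ship, but a larger share of them fail.
before = instability_tax(100, 0.10, 2.0, 5_000.0)
after = instability_tax(150, 0.15, 2.0, 5_000.0)
extra_cost = after - before
print(f"before: {before:,.0f}  after: {after:,.0f}  extra: {extra_cost:,.0f}")
```

In this invented scenario, deployments rise by half while the estimated downtime cost more than doubles, which is the kind of trade-off that stays hidden when only output volume is tracked.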

Standardized Metrics as the Bridge Between AI Hype and Real ROI

For engineering leaders, the path to trustworthy AI productivity gains runs through standardized metrics, not anecdotes. Combining frameworks like DORA with code-level measures such as Engineering Throughput Value allows organizations to observe both macro outcomes and micro behavior. This dual lens helps distinguish between short-term bursts of activity and sustained improvements in flow, quality, and stability. It also supports more credible financial conversations, linking changes in delivery metrics to non-financial outcomes like developer experience and then to cost savings or revenue impact. Crucially, these metrics expose trade-offs: increased throughput that harms stability, or localized AI wins that get lost in downstream bottlenecks. In an environment where AI can be blamed for layoffs or credited for wins without evidence, disciplined measurement becomes a governance tool. Teams that treat AI as one variable in a measured system, rather than a magic solution, will be best positioned to realize its true return on investment.