From Manual Audits to Frontier AI Models in Security Workflows

Security teams have long relied on a mix of manual reviews, static analysis tools, and traditional scanners to uncover flaws in complex systems. Frontier AI models are now reshaping this workflow by acting as always-on research partners that can reason over massive codebases, infrastructure configurations, and telemetry data. Instead of spot-checking selected components, AI-driven bug detection can continuously comb through foundational software and R&D environments, surfacing subtle logic errors, risky integrations, and neglected attack paths. This shift is particularly important for organizations that already meet formal audit standards such as SOC 2 Type II or ISO 27001 yet still carry large backlogs of lower-priority issues. With advanced models embedded in development and security pipelines, the bottleneck moves from finding vulnerabilities to deciding which of the many newly discovered weaknesses to remediate first, forcing enterprises to rethink how they rank and address risk.

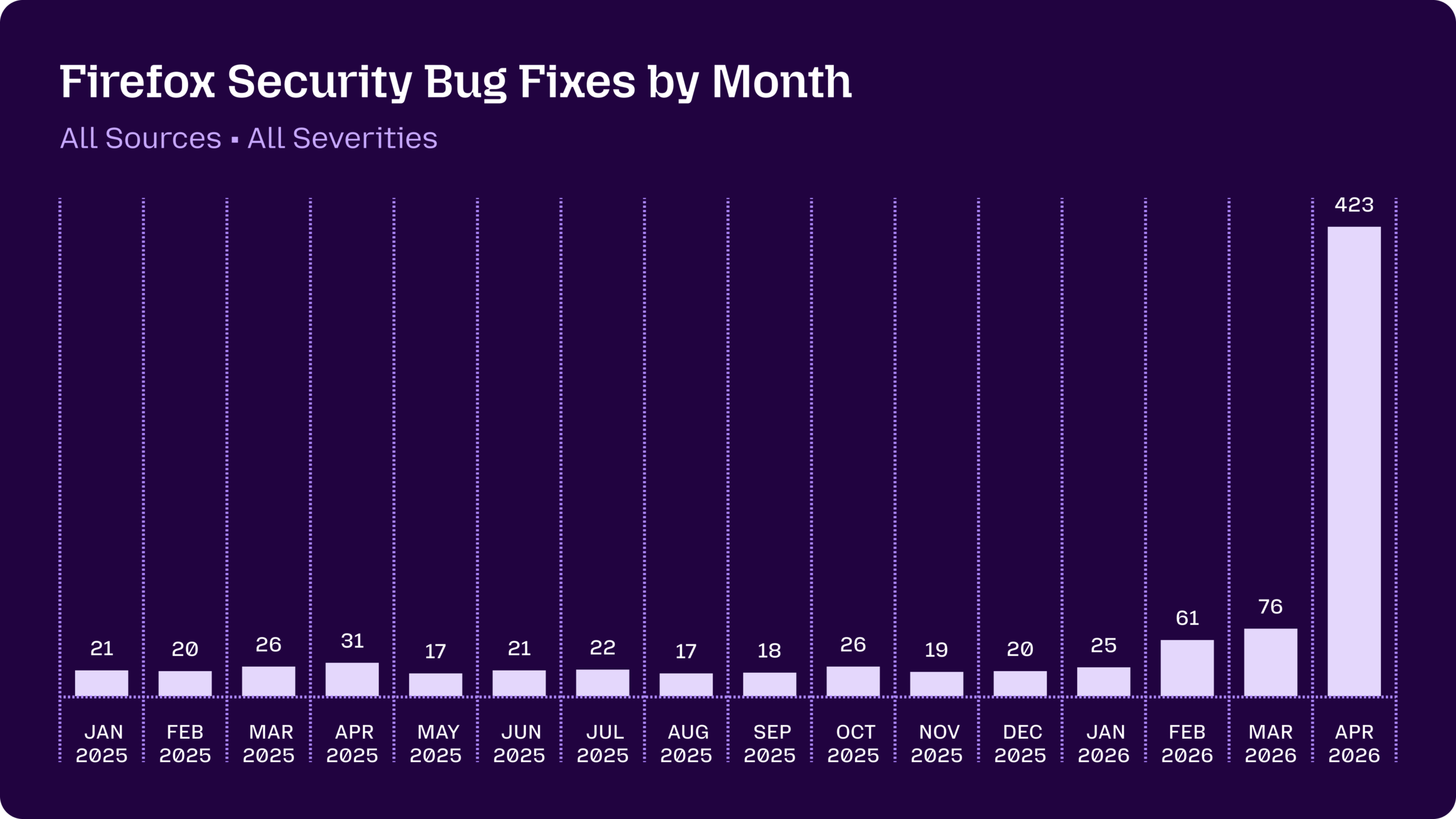

Mozilla and Claude Mythos: A Case Study in Accelerated Bug Discovery

Mozilla’s recent experience with Claude Mythos Preview illustrates the scale of change frontier AI models can bring. For much of 2025, Firefox security fixes hovered in the teens and twenties each month. When Anthropic’s Claude Opus 4.6 scanned the Firefox codebase over a two-week period in January 2026, it uncovered 22 vulnerabilities, 14 of them high-severity. The real inflection point came once Mozilla joined Anthropic’s Project Glasswing and gained early access to Claude Mythos Preview. In April, Firefox patched 423 security bugs, 271 of them surfaced by Mythos alone. Only three issues warranted standalone CVEs; the rest involved lower-severity flaws, defense-in-depth hardening, and fixes in long-dormant code paths unlikely to be audited manually. This shows how AI-driven bug detection can rapidly exhaust the “long tail” of vulnerabilities that traditional teams and tools often overlook, radically compressing the timeline for hardening widely used software.

Competitive Advantage and the New Security Access Divide

Anthropic’s Project Glasswing and similar programs are creating a new access-driven divide in cybersecurity R&D. Glasswing partners receive Claude Mythos Preview to secure foundational layers of the global technology stack, from cloud platforms and operating systems to browsers, chips, and security infrastructure. The partner roster effectively maps which components will be hardened first: major cloud providers, OS maintainers, chipmakers, browser vendors, and networking and endpoint-security firms. OpenAI’s Trusted Access for Cyber follows a comparable model, giving verified defenders access to more capable, more permissive cyber-focused systems. For organizations inside these vetted programs, frontier AI models provide a head start in vulnerability discovery and remediation, potentially translating into more resilient services and stronger R&D security practices. Those on the outside risk being left with comparatively weaker tooling while attackers increasingly gain access to similarly advanced, less restricted models from other providers that are willing to sell broadly.

Low-Severity Flaws, Exploit Chaining, and R&D Attack Surfaces

Frontier AI models are also changing how defenders think about severity. Historically, medium- and low-priority issues often languished in backlogs while teams focused on critical CVEs. But systems like Claude Mythos and emerging GPT-based cyber models excel at chaining multiple modest weaknesses into high-impact attack paths. In a research-heavy environment, that might mean linking a medium-severity bug in a vendor portal with a misconfigured LIMS integration to reach sensitive experimental data. Each flaw alone might score around the middle of a standard 0–10 severity scale such as CVSS and fail to trigger urgent remediation, yet together they provide a direct route to intellectual property and regulated information. Early testers report that frontier AI models can discover and chain such paths at a speed and scale no human team can match, reinforcing calls for organizations to reassess backlogs and treat clusters of low-severity vulnerabilities as potential critical risks.
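
To make the chaining argument concrete, here is a minimal Python sketch of how a team might search its own backlog for such paths. It is an illustration, not a description of how Claude Mythos or any other model works. It assumes each finding can be recorded as a step from one attacker position to another. The `Finding` fields, the finding IDs, the position names, the scores, and the 7.0 urgency cutoff are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One backlog item, modeled as a step an attacker could take (hypothetical schema)."""
    id: str
    score: float  # severity on a 0-10 scale
    src: str      # position the attacker must already hold
    dst: str      # position this finding lets the attacker reach


def find_chains(findings, entry, target, max_len=4):
    """Return every loop-free chain of findings leading from entry to target."""
    by_src = {}
    for f in findings:
        by_src.setdefault(f.src, []).append(f)

    chains = []

    def walk(node, path, seen):
        if node == target:
            chains.append(list(path))
            return
        if len(path) == max_len:
            return
        for f in by_src.get(node, []):
            if f.dst not in seen:  # never revisit a position
                path.append(f)
                walk(f.dst, path, seen | {f.dst})
                path.pop()

    walk(entry, [], {entry})
    return chains


def hidden_chains(findings, entry, target, urgent_at=7.0):
    """Chains built only from findings that would not look urgent on their own."""
    return [
        chain
        for chain in find_chains(findings, entry, target)
        if all(f.score < urgent_at for f in chain)
    ]


# Hypothetical backlog mirroring the vendor-portal and LIMS example above.
findings = [
    Finding("VP-1", 5.3, "internet", "vendor_portal"),
    Finding("LIMS-7", 4.8, "vendor_portal", "experimental_data"),
    Finding("WEB-2", 3.1, "internet", "marketing_site"),
]

for chain in hidden_chains(findings, "internet", "experimental_data"):
    print(" -> ".join(f.id for f in chain))  # prints "VP-1 -> LIMS-7"
```

Neither finding in the reported chain would cross the assumed cutoff alone, which is why the pair would sit in a backlog; ranking by reachable target rather than by individual score is one way to surface such clusters.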

Beyond Code: Human Risk, Social Engineering, and the Next Phase of Defense

The same capabilities that make Claude Mythos powerful for code analysis also raise concerns beyond traditional software vulnerabilities. Anthropic’s own documentation notes that non-experts have used Mythos Preview to generate complete, working exploits in short order. Experts warn that similarly capable models can automate large-scale, multi-channel social engineering: crafting convincing emails, text messages, phone scripts, and professional-looking social profiles that unfold slowly and strategically over time. This turns the “human attack surface” into a first-class security concern, especially for executives and researchers with access to sensitive R&D data. Defending against such threats requires more than patching code; it demands structured human risk management programs that treat psychology, behavior, and training as core control surfaces. For now, access to the most capable models is restricted to vetted defenders, but as other providers release comparable systems more broadly, enterprises will need AI-augmented defenses that protect both systems and people.