AI voice clarity: when cloned speech outperforms humans

New research suggests that AI voice clarity is no longer a futuristic promise but a present reality. A study published in The Journal of the Acoustical Society of America found that AI-cloned voices can be more intelligible than natural human speech, especially in noisy environments. Led by Patti Adank of University College London and Han Wang of the University of Roehampton, the team compared cloned voices with original human recordings under challenging listening conditions. Surprisingly, listeners reported up to a 20% intelligibility boost for voice clones, even among elderly participants and people using cochlear implant simulations. Unlike traditional text-to-speech systems that require hours of studio audio, modern voice cloning can reproduce a distinct vocal fingerprint from roughly ten seconds of speech. That makes it far easier to deploy personalized, consistent voices across assistants, accessibility tools and customer service channels—while also raising expectations for how clear synthetic voice vs human speech should sound in everyday use.

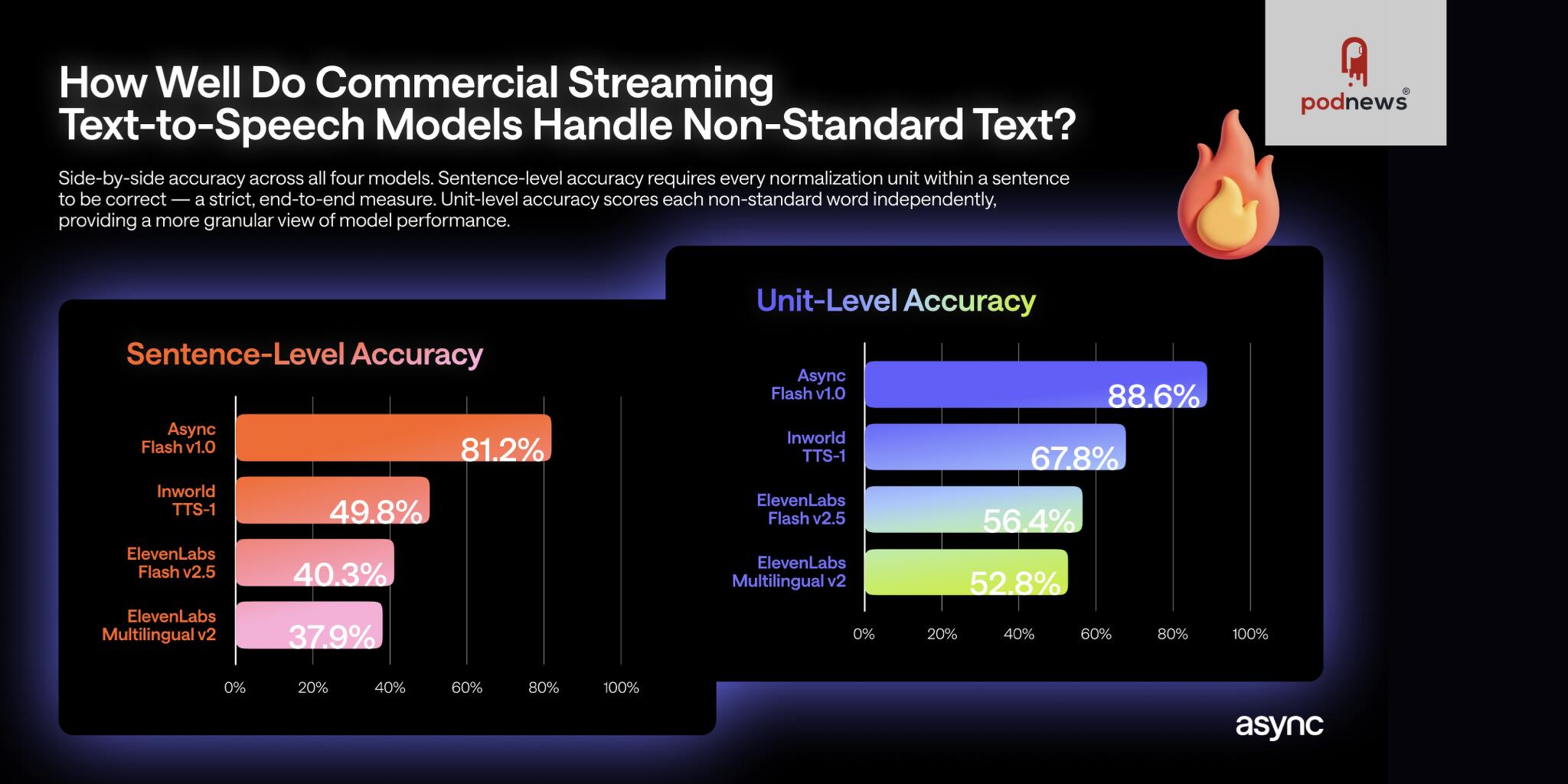

The hidden weakness: text to speech benchmark exposes number-handling failures

Clarity alone does not guarantee reliability. Async’s new open text to speech benchmark focuses on a notoriously fragile area for voice agents: non-standard text such as dates, currencies, phone numbers and other numerical content. Testing more than 1,000 sentences and 2,200 non-standard items like “03/15/2024” or “$4.99” across 31 categories, the benchmark evaluated leading streaming TTS systems exactly as they run in production voice agents, without any preprocessing. The results reveal significant failure rates, with many systems mishandling numerical formats over 60% of the time in some categories. Critically, these normalization failures rarely show up in dashboards, because latency and audio validity remain normal. A model update can improve perceived naturalness while quietly breaking phone number or price pronunciation. Async Flash v1.0 topped the benchmark, but the broader findings highlight a major gap: even advanced streaming TTS numbers pipelines often mis-speak the very details users care about most.

Voice agent accuracy meets speed and cost: what the latest benchmarks say

Against this backdrop, performance benchmarks from Voice.ai show how quickly core text-to-speech capabilities are advancing. In an expert analysis that compared nine platforms on voice quality, real-time performance and enterprise readiness, Voice.ai achieved a leading position on the speed–quality Pareto frontier, with a mean time to first byte of 96 ms and the lowest cost per million characters among real-time-viable providers. The platform scored a 3.44 mean opinion score for voice quality, while also delivering rapid voice cloning from about ten seconds of audio and support for fully autonomous voice agents that can handle calls, routing and scheduling at scale. For enterprises, these numbers signal that raw audio performance—latency, naturalness, throughput and price—has become intensely competitive. The next differentiator is likely to be voice agent accuracy on fine-grained content, particularly streaming TTS numbers and other structured data that can make or break user trust.

Opportunities and risks for everyday users

For everyday users, these benchmarks paint a nuanced picture. On the positive side, clearer synthetic voices promise better accessibility for people with hearing difficulties, more understandable announcements in noisy spaces and smoother interactions with customer support bots. Voice cloning allows services to offer familiar, consistent voices across devices without repeated recording sessions. Yet the Async benchmark shows that voice agent accuracy is still fragile where it matters: mispronounced payment confirmations, callback numbers or scheduled dates can generate confusion, compliance issues and real harm. Because such failures do not necessarily affect latency or audio quality, they can slip into production unnoticed. Combined with increasingly natural-sounding voices from providers like Voice.ai, this creates an uncomfortable paradox: users may trust what they hear more just as the underlying systems remain error-prone on critical details, and scammers may exploit that realism to deliver persuasive but misleading synthetic messages.

How enterprises should read the new voice AI benchmarks

For teams choosing or designing voice AI, the message is clear: do not evaluate systems on sound quality alone. Benchmarks around AI voice clarity, latency and cost are necessary but incomplete. Enterprises should demand text to speech benchmark data specifically covering dates, currencies, phone numbers and identifiers, ideally using their own domain-specific phrases and streaming interfaces. Any deployment of customer-facing voice agents should treat normalization as a first-class concern, with explicit tests, regression monitoring and fallbacks when a model cannot confidently read structured inputs. Developers may need to combine robust text preprocessing with providers that demonstrate strong streaming TTS numbers performance, rather than relying solely on end-to-end models. Ultimately, the most successful systems will pair near-human naturalness with rigorous accuracy on the small but crucial details that drive trust—so that synthetic voice vs human is no longer a trade-off between sounding good and being correct.