AI images have turned video calls and profiles into weapons

The trust we place in faces on screen is becoming a major vulnerability. A recent crypto incident shows how far AI image scams have come: a founder joined what looked like a routine Microsoft Teams call with an industry contact he had previously met on video. The face and voice matched his memory, and two other supposed colleagues were also on the call, yet the entire session was a social‑engineering attack that ended with a fake software update command intended to infect his laptop. Microsoft and Google’s Mandiant unit have separately documented campaigns that mimic familiar Teams and Zoom workflows, sometimes using deepfake‑style executive videos to convince victims to run malicious commands or install fake apps. Video calls, once treated as a final layer of authentication, can now be fully fabricated or manipulated using generative AI, so even “familiar” faces and voices are no longer reliable proof of identity.

From GPT Image 2 misuse to conflict disinformation

More powerful image models are supercharging disinformation. Within days of OpenAI’s photorealistic GPT Image 2 release, researchers confirmed its first documented use in a coordinated influence operation, showing that the gap between launch and weaponisation is now measured in days. Meta’s latest adversarial threat reporting describes criminal and state‑linked networks industrialising generative AI to mass‑produce fake personas and propaganda at a pace detection tools struggle to match. NewsGuard has tracked an unprecedented surge of AI-generated imagery around the Iran conflict, while investigators such as Bellingcat and Cyfluence have flagged AI images and videos being used in election campaigns and to fabricate protests that never occurred. Earlier models gave themselves away with warped hands, messy text and inconsistent lighting. GPT Image 2’s near-perfect text rendering and photorealistic faces erase many of those visual “tells”, making forged documents, fake screenshots and staged news photos look disturbingly credible in social feeds and group chats.

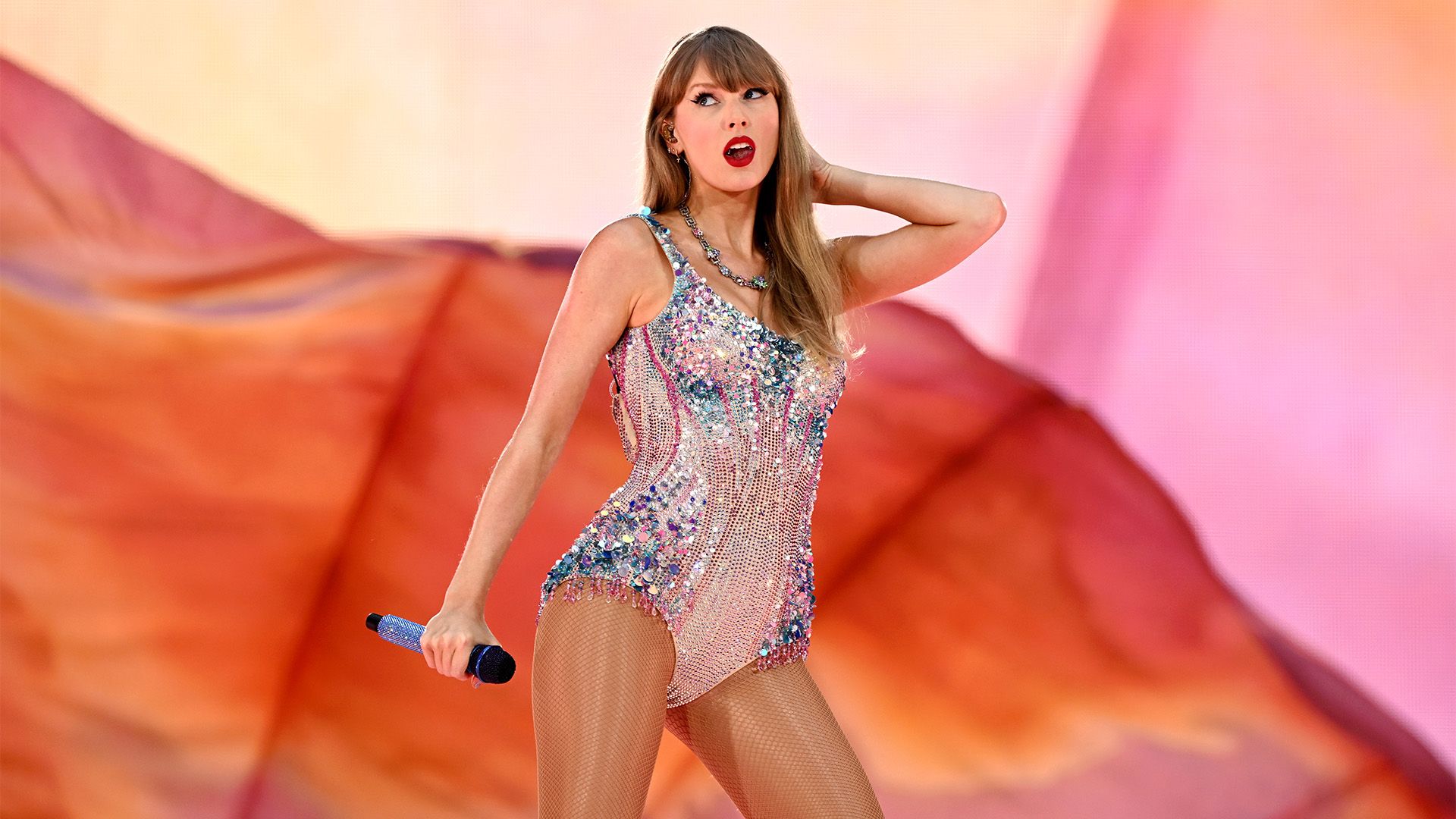

Why celebrities like Taylor Swift are early deepfake targets

High‑profile figures are becoming test cases for the social impact of synthetic media. Taylor Swift’s likeness has already appeared in countless deepfakes, from explicit imagery to fabricated political messages. In response, she has moved to trademark her voice and stage image, filing applications that cover specific photos from her tour and audio clips in which she introduces herself. Legal experts say this marks a shift from protecting creative works to protecting personal identity itself. Other celebrities, including actor Matthew McConaughey, have pursued similar strategies to fence off their voice and image from AI misuse. These cases matter beyond celebrity culture: they highlight how anyone’s photos, videos or audio can be captured, cloned and repurposed without consent. For Malaysian users, the Swift story is a warning that deepfakes are no longer fringe curiosities; they are becoming mainstream tools for harassment, political manipulation and scams that exploit our instinct to trust familiar faces.

Practical deepfake detection tips for Malaysian users

Even as AI improves, many fakes still leave traces if you know where to look. Start with the visuals: check for inconsistent lighting or shadows, skin that looks overly smooth or plastic, and fingers, ears or jewellery that appear distorted or duplicated. Text on signs, T‑shirts or documents is a key tell: AI often produces lettering that looks almost right but includes warped characters or inconsistent spacing. In video, watch the mouth closely: do lip movements truly match the audio, especially on tricky sounds, or do they feel slightly out of sync? Listen for strange pacing, flat emotion or abrupt changes in tone. Context also matters. Is the account new, with few genuine interactions? Is the image or video too perfectly aligned with a political agenda, an investment opportunity or a shocking rumour? When something feels off, run a reverse image search, verify through official channels and avoid acting on a single piece of media.

What platforms, regulators and everyday users can do next

Platforms and regulators are racing to catch up with AI image scams and celebrity deepfakes, experimenting with watermarking, stricter content policies and takedown pathways. Meta’s threat reporting shows social networks are actively removing coordinated influence operations that rely on synthetic media, while legal moves by public figures like Taylor Swift signal growing pressure for clearer identity and likeness protections. But everyday users remain the first line of defence. In Malaysia, that means building simple habits: treat unexpected video calls, especially about money or crypto, as unverified until confirmed through a separate channel; never paste commands into Terminal or Command Prompt just because a meeting pop‑up tells you to; and enable multi‑factor authentication on important accounts and wallets. When you encounter suspected fakes, use in‑app reporting tools, warn friends and colleagues, and wait for verification before sharing. In an era of frictionless fakery, a few seconds of scepticism can prevent a very real loss.