From Static Tiles to Conversationally Built Widgets

Android 17 marks a turning point for widgets by weaving Gemini AI features directly into the home screen experience. Instead of relying on prebuilt tiles designed by developers, users can now describe what they want in plain language and have Gemini assemble a working widget in response. A request like “Create a panel showing today’s meetings, weather, and a quick note field” becomes a dynamic, interactive interface without the user writing any code. This AI-driven approach breaks the old model, in which customization depended on technical skill or niche apps. It also makes Android’s interface more adaptable, as widgets can evolve alongside users’ needs rather than remaining static components. In practice, this turns the home screen into a canvas for custom widget generation, where conversation replaces configuration menus and scripting.

Gemini Inside: Generating Workflows and Widgets Alike

The same Android AI integration that powers home screen widgets also extends into everyday browsing. Gemini can carry out complex tasks, such as booking workflows, directly inside Chrome on Android, reducing the number of manual steps typically required. A user might outline a goal, such as booking a table, reserving tickets, or completing a purchase, and Gemini can guide or automate much of the process. This blurs the boundary between app, browser, and assistant, positioning Gemini as an orchestration layer for tasks that once demanded multiple taps and context switches. The logic that interprets natural language in Chrome is closely related to the engine that translates a widget request into layout, data sources, and actions. Together, they showcase how Gemini AI features move beyond chat to become a design and execution tool for the broader Android experience.
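
To make the idea of translating a request into layout, data sources, and actions more concrete, here is a deliberately simplified Python sketch. It is not how Gemini or Android works: a real system would rely on a language model rather than keyword matching, and every name here (`COMPONENT_CATALOG`, `WidgetSpec`, `build_widget_spec`, and the component labels) is hypothetical and invented for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical catalog mapping request keywords to widget building blocks.
# None of these names come from Android or Gemini; they are illustrative only.
COMPONENT_CATALOG = {
    "meetings": {"layout": "list", "source": "calendar", "action": "open_event"},
    "weather": {"layout": "card", "source": "weather", "action": "open_forecast"},
    "note": {"layout": "text_field", "source": "local_notes", "action": "save_note"},
}


@dataclass
class WidgetSpec:
    """A toy widget description: what to draw, where data comes from, what taps do."""
    layout: list = field(default_factory=list)
    data_sources: list = field(default_factory=list)
    actions: list = field(default_factory=list)


def build_widget_spec(request: str) -> WidgetSpec:
    """Build a spec from a plain-language request using naive keyword matching."""
    text = request.lower()
    # Keep components in the order the user mentioned them.
    found = sorted((text.find(keyword), keyword)
                   for keyword in COMPONENT_CATALOG if keyword in text)
    spec = WidgetSpec()
    for _, keyword in found:
        component = COMPONENT_CATALOG[keyword]
        spec.layout.append(component["layout"])
        spec.data_sources.append(component["source"])
        spec.actions.append(component["action"])
    return spec


if __name__ == "__main__":
    request = "Create a panel showing today's meetings, weather, and a quick note field"
    print(build_widget_spec(request))
```

The point of the sketch is the shape of the output rather than the matching logic: one request yields three parallel lists, which mirror the layout, data sources, and actions described above.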

Democratizing App-Like Experiences Without Code

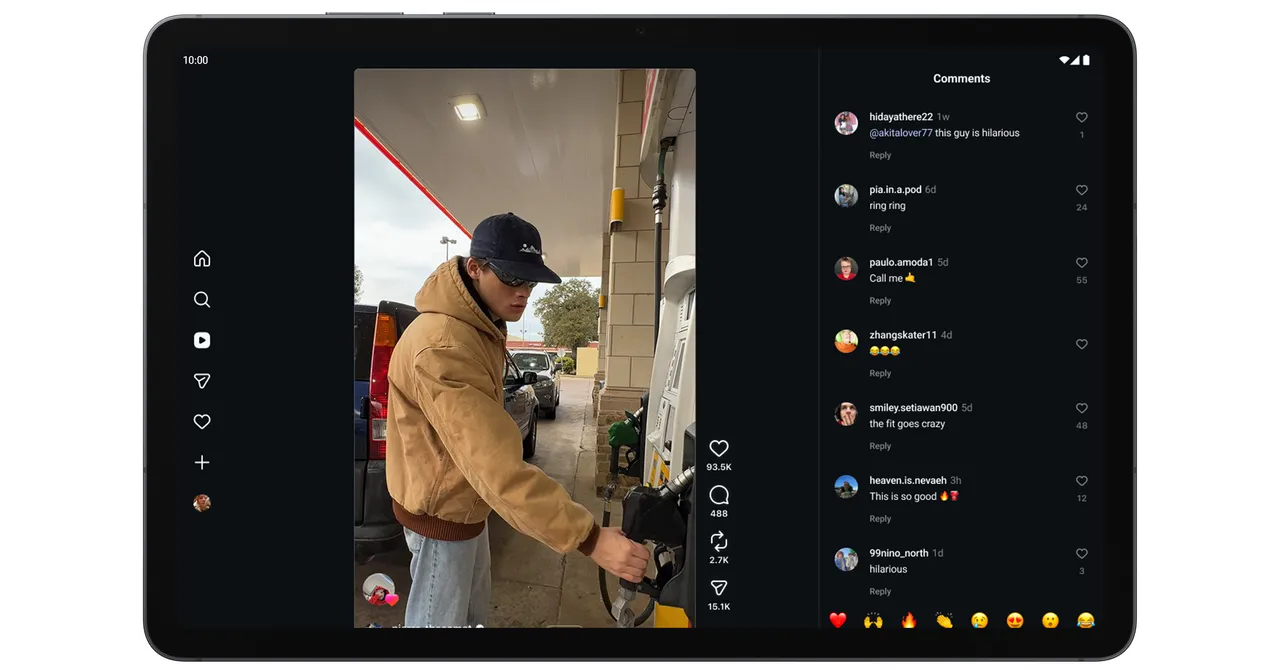

Traditionally, building a widget or lightweight app-like experience required design skills, familiarity with APIs, and code, barriers that kept customization in the hands of developers and power users. With Android 17’s custom widget generation, Gemini takes on the role of both designer and integrator, allowing mainstream users to assemble interfaces that reflect their workflows. Someone planning a trip could ask for a single widget aggregating flight details, hotel reservations, packing lists, and maps. A student might request a study dashboard that combines deadlines, reading progress, and reminders. Rather than editing layouts or connecting services manually, users describe outcomes and let the system handle implementation details. This reimagines the home screen as a personalized control center, where Android AI integration adapts to individual habits and preferences, and where new experiences emerge from dialogue rather than development tools.

A Smarter, More Personal Android Ecosystem

Gemini’s deeper presence in Android 17 signals Google’s broader shift toward an operating system that learns, adapts, and builds with the user. Widgets are an early, visible expression of that ambition: each conversationally created panel is both a product of AI and a reflection of personal context. As Gemini gains awareness of a user’s routines, preferred apps, and typical tasks, the system can propose or refine widgets proactively, turning Android from a static platform into a living workspace. The ability to generate interfaces and execute workflows from natural language also sets the stage for more modular, AI-assembled experiences that span phones, tablets, and other form factors. In this vision, Android AI integration is less about a single assistant app and more about infusing intelligence into every layer of interaction, from the browser tab to the home screen tile.