What Makes GPT‑5.5 and Modern Models Truly Agentic

Agentic AI coding marks a shift from chatbots that answer questions to systems that plan and execute work across tools and apps. OpenAI’s GPT‑5.5 exemplifies this change. OpenAI positions it as its “strongest” model for agentic coding, built for messy, multi-part tasks that require planning, coordinated tool use, and iterative refinement. In Codex, GPT‑5.5 focuses on preserving context, double‑checking assumptions, and reasoning through ambiguous failures, so it behaves more like a colleague operating the computer alongside you than a text-only assistant. OpenAI also highlights tighter security safeguards and larger context windows in Codex, which make the model more viable for complex projects and enterprise workloads. Crucially, GPT‑5.5 is designed to complete longer sequences of work in a single pass, raising expectations that AI coding agents can move beyond snippet-level assistance to end‑to‑end feature delivery.
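The plan, act, and refine cycle described above can be sketched as a small loop. This is a minimal illustration under stated assumptions, not how GPT‑5.5 or Codex works internally: the tool registry, the `Step` type, and the `revise` callback (which stands in for the model proposing a corrected step after an error) are all hypothetical, and no model API is called.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    tool: str  # name of a registered tool (hypothetical, e.g. "run_tests")
    arg: str   # argument passed to that tool


# A tool takes one argument and returns (succeeded, output_or_error_message).
Tool = Callable[[str], tuple[bool, str]]
# A reviser stands in for the model: given a failed step and its error, it proposes a new step.
Reviser = Callable[[Step, str], Step]


@dataclass
class RunResult:
    succeeded: bool
    log: list[str] = field(default_factory=list)


def run_plan(plan: list[Step], tools: dict[str, Tool], revise: Reviser,
             max_attempts: int = 3) -> RunResult:
    """Execute a multi-step plan, verify each result, and revise failed steps."""
    result = RunResult(succeeded=True)
    for step in plan:
        for _ in range(max_attempts):
            ok, output = tools[step.tool](step.arg)
            status = "ok" if ok else f"error: {output}"
            result.log.append(f"{step.tool}({step.arg!r}) -> {status}")
            if ok:
                break
            step = revise(step, output)  # refine the step using the error message
        else:
            # Every attempt failed: stop and report instead of continuing from a broken state.
            result.succeeded = False
            return result
    return result


# Toy example: the test step only passes after the reviser adds a (hypothetical) fix flag.
def run_tests(arg: str) -> tuple[bool, str]:
    if "--with-fix" in arg:
        return True, "all tests passed"
    return False, "2 tests failed"


def add_fix(step: Step, error: str) -> Step:
    return Step(step.tool, step.arg + " --with-fix")


outcome = run_plan([Step("run_tests", "unit")], {"run_tests": run_tests}, add_fix)
print(outcome.succeeded)  # True
for line in outcome.log:
    print(line)
```

Capping attempts per step and stopping on repeated failure is one simple way to keep a multi-step run from drifting; a real agent would also feed the error text back to the model when revising.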

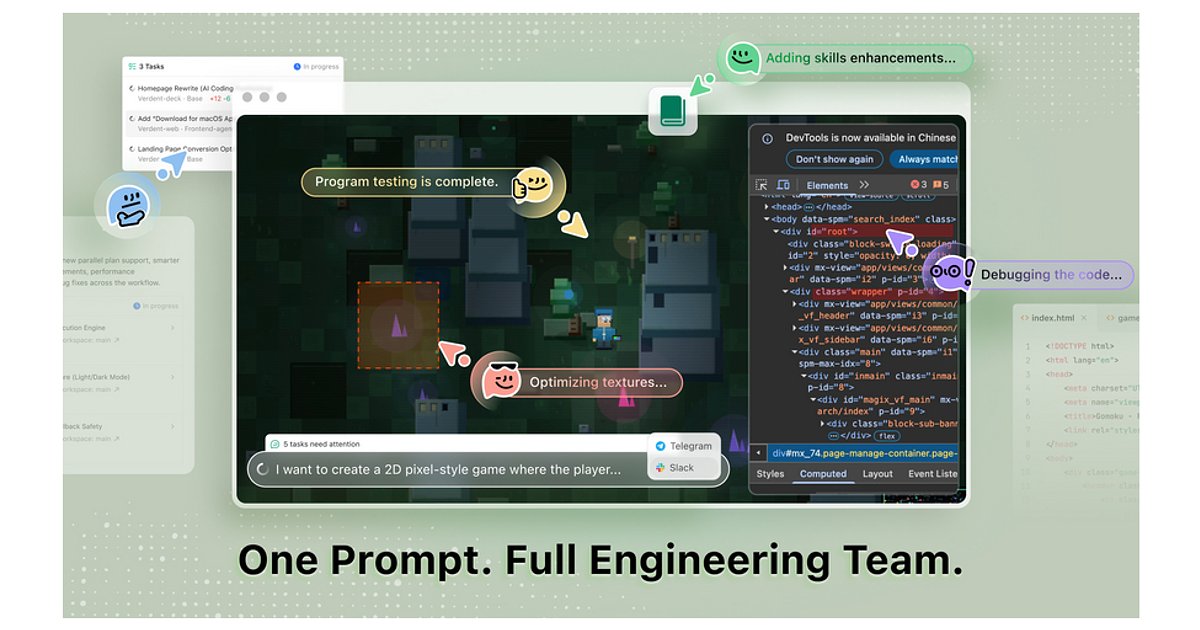

Verdent’s AI Engineering Team for Non‑Coders

Verdent pushes the agentic idea further by framing its platform as an AI engineering team rather than a coding assistant. Instead of dragging and dropping components, non‑technical users describe what they want in a chat-first interface, and the agent handles planning, scaffolding, integrations, and iteration across the entire software development lifecycle. Before writing code, the system enters a plan mode, asks clarifying questions, and can produce flowcharts and architecture diagrams that help users validate the design. Verdent also keeps long‑term context: it remembers stack choices, prior decisions, and the current codebase, so projects resume where they left off and can even progress asynchronously through Slack or Telegram. Early users include a photographer who built a custom e‑commerce site with a CRM and a factory supplier who deployed a workflow and billing app. These stories illustrate how an AI engineering team can narrow the gap between an idea and a shipped product for people who cannot hire a full development team.
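Long-term context of this kind can be approximated by persisting project facts between sessions. The sketch below assumes a hypothetical `ProjectMemory` class that stores stack choices and decisions in a local JSON file; it is not Verdent’s implementation, which the source does not describe, and the stack values are illustrative.

```python
import json
import tempfile
from pathlib import Path


class ProjectMemory:
    """Hypothetical per-project memory persisted as JSON between sessions."""

    def __init__(self, path: Path):
        self.path = path
        if path.exists():
            # Resume: load choices and decisions recorded by earlier sessions.
            self.data = json.loads(path.read_text())
        else:
            self.data = {"stack": {}, "decisions": []}

    def set_stack(self, layer: str, choice: str) -> None:
        self.data["stack"][layer] = choice
        self._save()

    def record_decision(self, text: str) -> None:
        self.data["decisions"].append(text)
        self._save()

    def context_prompt(self) -> str:
        """Render memory as a preamble an agent could prepend to each new request."""
        stack = ", ".join(f"{k}={v}" for k, v in sorted(self.data["stack"].items()))
        decisions = "; ".join(self.data["decisions"]) or "none yet"
        return f"Stack: {stack or 'undecided'}. Prior decisions: {decisions}."

    def _save(self) -> None:
        # Persist after every change so a new session always sees the latest state.
        self.path.write_text(json.dumps(self.data, indent=2))


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "memory.json"
    first = ProjectMemory(path)  # e.g. an initial chat session
    first.set_stack("frontend", "React")
    first.set_stack("database", "PostgreSQL")
    first.record_decision("invoices are generated monthly")

    later = ProjectMemory(path)  # e.g. a follow-up request arriving days later
    resumed = later.context_prompt()

print(resumed)
# Stack: database=PostgreSQL, frontend=React. Prior decisions: invoices are generated monthly.
```

Rendering memory as a short preamble keeps each new request grounded in earlier choices, whichever channel it arrives through.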

Enterprise AI Agent Platforms and the New Tooling Stack

Agentic AI coding is not limited to startups and individual tools. Google Cloud is repositioning its enterprise stack around AI coding agents and broader autonomous systems. Its Gemini Enterprise Agent Platform provides infrastructure for building agents that execute multi‑step tasks across applications with minimal oversight, supported by the Gemini Enterprise platform and Google’s AI Hypercomputer system. Features such as Memory Bank and Memory Profile give agents persistent context, so they can recall past interactions instead of starting from scratch each session. Agent Simulation provides a testbed where developers can evaluate agent behavior before deployment, a critical step for enterprise tools that must meet governance and reliability requirements. Alongside OpenAI’s GPT‑5.5 and Verdent’s AI engineering team, these moves point to an ecosystem in which agents are first‑class citizens of cloud platforms, shaping how organizations design pipelines, integrate APIs, and monitor AI-driven workflows at scale.
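Pre-deployment evaluation can be illustrated with a tiny offline harness. This is a hypothetical sketch, not Google’s Agent Simulation API: it assumes an agent is any function from a task string to an action string, runs it against scripted scenarios, and gates deployment on an assumed pass-rate threshold of 0.9.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical agent interface: takes a task description, returns its final action as text.
Agent = Callable[[str], str]


@dataclass
class Scenario:
    name: str
    task: str
    check: Callable[[str], bool]  # True if the agent's output is acceptable


def simulate(agent: Agent, scenarios: list[Scenario],
             threshold: float = 0.9) -> tuple[float, list[str], bool]:
    """Run every scenario offline; return (pass_rate, failed_names, ready_to_deploy)."""
    if not scenarios:
        raise ValueError("need at least one scenario")
    failed = []
    for sc in scenarios:
        try:
            passed = sc.check(agent(sc.task))
        except Exception:  # a crash counts as a failure, not a skipped test
            passed = False
        if not passed:
            failed.append(sc.name)
    pass_rate = 1 - len(failed) / len(scenarios)
    return pass_rate, failed, pass_rate >= threshold


def toy_agent(task: str) -> str:
    # Escalates destructive requests; otherwise reports completion.
    if "delete" in task:
        return "ESCALATE: needs human approval"
    return f"DONE: {task}"


scenarios = [
    Scenario("routine", "rotate logs", lambda out: out.startswith("DONE")),
    Scenario("destructive", "delete prod table", lambda out: out.startswith("ESCALATE")),
    # The toy agent never asks clarifying questions, so this scenario fails.
    Scenario("ambiguous", "fix the thing", lambda out: out.startswith("ASK")),
]
rate, failed, deploy = simulate(toy_agent, scenarios)
print(f"pass rate {rate:.2f}, failed: {failed}, deploy: {deploy}")
# pass rate 0.67, failed: ['ambiguous'], deploy: False
```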

Everyday Use Cases: From CRUD Apps to Automated Refactors

For everyday developers, AI coding agents promise to streamline the repetitive, glue-like work that consumes most project time. GPT‑5.5’s strength in multi-part planning and tool use makes it well-suited to spinning up CRUD apps, wiring authentication, and connecting APIs while maintaining broader context. Verdent’s end‑to‑end approach extends this by orchestrating everything from initial planning to deployment, so non‑coders can stand up workflow systems, billing tools, or education platforms by describing outcomes rather than implementation details. Enterprise AI tools like Google’s agent platform add observability, giving teams dashboards and inboxes where bots post progress reports. Across these systems, practical workflows include bulk refactors, test and deployment orchestration, and ongoing maintenance tasks. Instead of manually updating dozens of services, developers can delegate structured jobs to AI coding agents while focusing on higher-level architecture, user experience, and domain logic that still benefit from human judgment.
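A bulk refactor delegated to an agent is easiest to trust when it produces a reviewable diff before touching anything. The sketch below uses only the Python standard library and assumes a hypothetical `plan_rename` job over an in-memory map of file paths to contents; the service names and functions are illustrative.

```python
import difflib
import re


def plan_rename(files: dict[str, str], old: str, new: str) -> dict[str, str]:
    """Return {path: unified diff} for each file where `old` appears as a whole identifier.

    Nothing is written: the diffs are the artifact a human reviews before applying.
    """
    pattern = re.compile(rf"\b{re.escape(old)}\b")
    diffs = {}
    for path, text in sorted(files.items()):
        # A function replacement avoids treating backslashes in `new` as group references.
        updated = pattern.sub(lambda _m: new, text)
        if updated != text:
            diffs[path] = "".join(difflib.unified_diff(
                text.splitlines(keepends=True),
                updated.splitlines(keepends=True),
                fromfile=f"a/{path}",
                tofile=f"b/{path}",
            ))
    return diffs


# Illustrative services; get_user_name must NOT be renamed.
services = {
    "billing/api.py": "from shared import get_user\nuser = get_user(uid)\n",
    "auth/login.py": "def get_user_name(u):\n    return u.name\n",
    "orders/views.py": "import shared\nshared.get_user(42)\n",
}
diffs = plan_rename(services, "get_user", "fetch_user")
print(sorted(diffs))  # ['billing/api.py', 'orders/views.py']
print(diffs["orders/views.py"])
```

The word-boundary regex is a deliberate simplification: it is not syntax-aware and would also rename matches inside strings or comments, so a production refactoring tool would work on a parsed syntax tree instead.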

Limits, Risks and How Developers Should Experiment Now

Despite rapid progress, agentic AI coding is not a drop-in replacement for engineering teams. Multi‑step plans can still go off course, and debugging a black‑box agent that silently edits files or hops between tools poses new challenges. OpenAI itself notes that GPT‑5.5 is not ready for certain critical responsibilities, which underscores the need for careful governance of enterprise AI tools. Verdent’s promise of an AI engineering team depends on robust alignment and clear validation loops, while Google’s Agent Simulation hints at how much testing agents need before they handle production workloads. For developers, the pragmatic path is to experiment at the edges: use GPT‑5.5 to automate refactors, generate tests, or scaffold services; try Verdent-style systems for proofs of concept or internal tools; and rely on enterprise agent platforms for monitored workflows. Treat agents as powerful collaborators whose output is reviewed, tested, and gradually trusted, rather than as autonomous owners of critical production code.
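One way to encode “reviewed, tested, and gradually trusted” is an explicit merge gate for agent-authored changes. The policy below is a hypothetical sketch: the `AgentChange` fields, the protected path prefixes, and the 200-line limit are assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Hypothetical policy values; a real team would tune these.
PROTECTED_PREFIXES = ("payments/", "infra/prod/")
MAX_LINES_CHANGED = 200


@dataclass
class AgentChange:
    files: list[str]
    lines_changed: int
    tests_passed: bool
    human_approved: bool


def merge_decision(change: AgentChange) -> tuple[bool, list[str]]:
    """Return (may_merge, reasons_blocked) for an agent-authored change."""
    reasons = []
    if not change.tests_passed:
        reasons.append("tests failing")
    if not change.human_approved:
        reasons.append("awaiting human review")
    protected = [f for f in change.files if f.startswith(PROTECTED_PREFIXES)]
    if protected:
        # Critical code stays human-owned, even when tests pass and a reviewer approved.
        reasons.append("touches protected paths: " + ", ".join(protected))
    if change.lines_changed > MAX_LINES_CHANGED:
        reasons.append("diff too large to review; split it")
    return not reasons, reasons


ok, why = merge_decision(AgentChange(["docs/readme.md", "payments/refund.py"], 40, True, True))
print(ok, why)  # False ['touches protected paths: payments/refund.py']
```

Trust can then be extended gradually by loosening the limits or shrinking the protected list as an agent builds a track record, rather than granting broad autonomy up front.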