Why Equinox Offers a Lightweight AI Workflow in JAX

Equinox is a lightweight neural network library built directly on top of JAX. Instead of hiding JAX behind a large framework, it leans into core JAX concepts, especially PyTrees. In Equinox, a model is just a PyTree: a nested collection of arrays and Python objects that JAX understands. This design makes parameter handling explicit yet concise, and it integrates naturally with transformations such as jit, grad, vmap, and pmap. For practitioners, this PyTree model design means you can treat your neural network like any other piece of data. You can copy it, serialize it, or transform it without special container classes. Equinox adds a thin layer of convenience in the form of eqx.Module, filtered transformations, and utilities for handling stateful layers. The result is a JAX neural networks toolkit that feels closer to writing plain Python, while still enabling a scalable, production-ready, lightweight AI workflow.

Designing a PyTree Model with eqx.Module

The core building block in an Equinox JAX tutorial is eqx.Module. You define a model by subclassing eqx.Module and declaring fields for weights, biases, and configuration. Any array-like field becomes part of the trainable parameters, while non-array fields act as static metadata. This clear separation allows JAX to distinguish what should be differentiated and what should remain constant. Filtered transforms are where this really pays off. By using utilities such as eqx.filter_grad and eqx.filter_jit, you can apply JAX transformations only to parameter fields, leaving configuration and other Python objects untouched. Stateful layers, such as those with running statistics, are handled explicitly by separating parameter and state fields, which keeps the training logic transparent. Altogether, this pattern encourages clean, testable code where every parameter and non-parameter is visible, instead of being hidden inside opaque framework abstractions.

An End-to-End Equinox Training Workflow in JAX

A practical equinox training guide begins with dataset preparation. You typically load data into JAX arrays, split into training and validation sets, and determine hyperparameters like batch size, epochs, and steps per epoch. Inside the training loop, you shuffle data each epoch using jax.random.permutation, then iterate over mini-batches and call a train_step function that returns the updated model, optimizer state, and loss. Using JAX transformations, train_step is often wrapped with eqx.filter_jit and eqx.filter_grad to accelerate computation while differentiating only over parameters. After each epoch, you run an evaluate function on the validation set to track performance, logging train and validation losses for later visualization. This end-to-end pattern—from random key management to loss tracking—encourages reproducible experiments. When training finishes, you can serialize the model with eqx.tree_serialise_leaves, reload it into a compatible skeleton, and verify that all parameter arrays match, enabling confident deployment or further experimentation.

Experiment Faster: Serialization, Variants, and Practical Debugging

Equinox makes experimentation more practical by embracing simple serialization and modular design. With eqx.tree_serialise_leaves, you can store model weights to disk, then later reconstruct them by instantiating a compatible model skeleton and calling eqx.tree_deserialise_leaves. This keeps architectures and parameters decoupled, so you can swap variants—such as changing depth or width—while reusing existing training utilities. Because models are PyTrees, it is straightforward to track different model variants in a single codebase: each variant is just another eqx.Module. You can write concise training functions that accept any compatible module, making it easy to A/B test architectures or hyperparameters. Debugging remains approachable: print or log PyTree leaves, inspect shapes, or disable jit on filtered transforms to step through code. Profiling is equally direct, as you rely on standard JAX tooling to analyze where time is spent, helping you scale experiments without overwhelming boilerplate.

Integrating Equinox Models into Broader AI Workflows

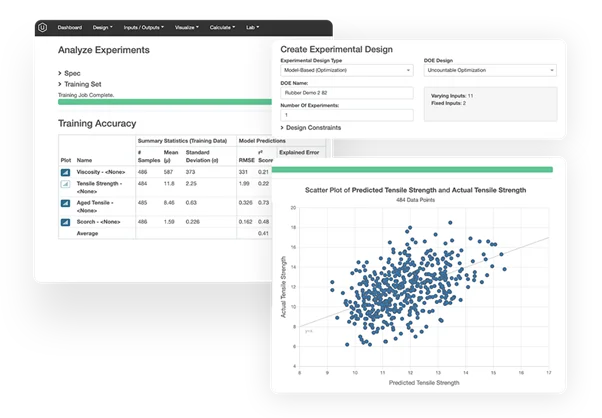

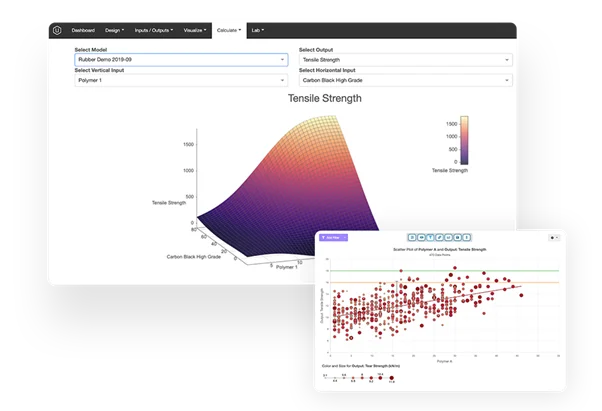

Equinox fits naturally into broader AI workflows that combine data platforms, experiment management, and deployment pipelines. In environments where teams depend on structured experimental data and predictive modeling, having models that are simple to serialize and reload is crucial for repeatable research and production use. Because Equinox models are plain JAX functions and PyTrees, they can be exported, wrapped in serving APIs, or embedded inside larger systems with minimal glue code. This design also complements platforms that focus on end-to-end product development and data utilization, where predictive models sit at the core of decision-making. By keeping models explicit and lightweight, Equinox helps practitioners maintain a clear line from raw data through training and evaluation to deployment. As you scale from small prototypes to more complex pipelines, this clarity reduces technical debt, simplifies collaboration between teams, and ensures that your JAX neural networks remain understandable, testable, and ready for integration into real-world workflows.