From Traditional Data Centers to AI Factories

Conventional enterprise storage was built around transactional databases, virtual machines, and file shares, where durability and steady IOPS dominated design choices. AI factories flip those assumptions. Modern AI workloads, especially large-scale training and continuous inference, operate as data pipelines rather than discrete applications. Instead of periodic reads and writes, GPUs demand sustained, high-throughput access to massive datasets, model checkpoints, and context caches. As organizations move into the era of agentic AI, where models plan, reason, and retain longer context, storage becomes a primary enabler of intelligence rather than a background utility. This forces a rethink of storage architecture across enterprise AI infrastructure: incrementally scaling legacy SAN or NAS is no longer enough. Storage must now be co-designed with compute and networking so that every watt, rack unit, and PCIe lane contributes to keeping GPUs fully utilized.

Agentic AI and the New Demand Curve for Storage

The shift from simple inference to agentic AI fundamentally changes storage access patterns. Agentic workflows require models to maintain larger context windows, persist intermediate reasoning steps, and revisit historical data on demand. This translates into far more reads and writes to nearline storage, plus expanded capacity for long-lived context and knowledge bases. The result is a demand curve that grows not only in capacity but also in bandwidth and concurrency. An AI factory may need to support model training, evaluation, and multi-tenant inference at the same time, each with different access patterns. Traditional storage metrics such as IOPS per LUN or backup window length become less relevant. Instead, architects focus on end-to-end pipeline throughput, tail latency to GPUs, and how rapidly data can be staged, cached, and recycled. In this environment, storage system design becomes a critical differentiator for both AI performance and cost efficiency.
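
As a rough illustration of why pipeline throughput matters more than per-LUN IOPS, the sketch below estimates the aggregate read bandwidth needed to keep a group of GPUs fed, and the utilization ceiling if storage delivers less. The function names and every figure in the example (GPU count, samples per second, sample size, delivered bandwidth) are hypothetical placeholders, not measurements from any real system.

```python
def required_read_gb_per_s(num_gpus, samples_per_sec_per_gpu, bytes_per_sample):
    """Aggregate read bandwidth (GB/s) needed so no GPU waits on input data."""
    total_bytes_per_sec = num_gpus * samples_per_sec_per_gpu * bytes_per_sample
    return total_bytes_per_sec / 1e9


def gpu_utilization_ceiling(delivered_gb_per_s, required_gb_per_s):
    """Upper bound on GPU utilization when storage is the only bottleneck."""
    if required_gb_per_s <= 0:
        return 1.0
    return min(1.0, delivered_gb_per_s / required_gb_per_s)


# Hypothetical cluster: 1024 GPUs, 2000 samples/s each, 150 kB per sample.
needed = required_read_gb_per_s(1024, 2000, 150_000)

# Hypothetical storage tier that sustains only 200 GB/s of reads.
ceiling = gpu_utilization_ceiling(200.0, needed)

print(f"required: {needed:.1f} GB/s, utilization ceiling: {ceiling:.0%}")
```

Under these assumed numbers the cluster needs roughly 307 GB/s of sustained reads, so a tier delivering 200 GB/s would cap GPU utilization at about 65% regardless of how many IOPS it advertises.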

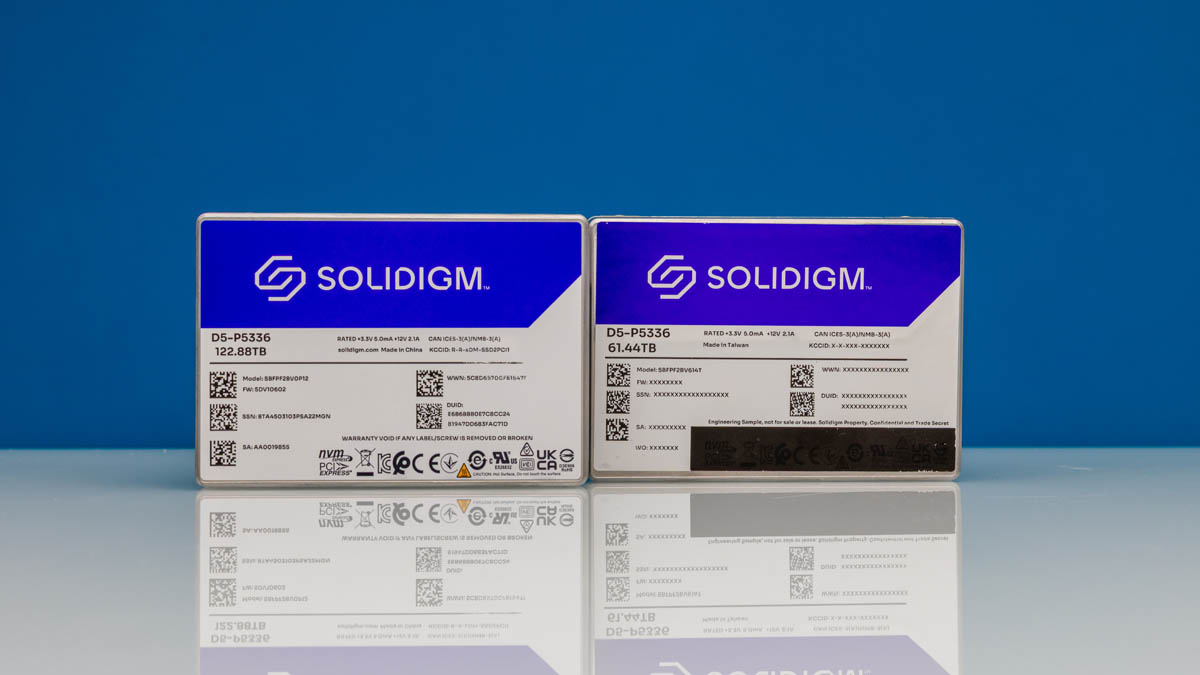

The Rise of a Middle Tier: Flash Between HBM and NAS

AI factories increasingly rely on a three-tier model: ultra-fast on-package memory such as HBM, a high-performance flash tier, and large-capacity networked storage. HBM remains irreplaceable for tensor operations but is extremely costly per terabyte, while traditional network-attached storage offers scale but cannot feed GPUs quickly enough. This gap is driving a new middle tier of AI factory storage based on high-capacity flash SSDs. Entire wafers of NAND now populate single drives, enabling tens of terabytes per device and, in aggregate, exabyte-scale clusters. Because many AI data artifacts (such as preprocessed corpora or intermediate embeddings) can be regenerated, this tier does not always require strict, legacy-grade durability. That relaxation allows more aggressive trade-offs in favor of density and performance. As AI storage architecture matures, this middle tier becomes the operational heartbeat: it stages training data, hosts model artifacts, and buffers KV caches between cold archives and hot GPU memory.

KV Caches as a Distinct Storage Tier

Key-value (KV) caches are emerging as a distinct, high-performance layer inside AI factories. When large language models and other generative systems reuse context, KV caches avoid recomputing attention states, sharply reducing GPU cycles for repeated queries or long-running sessions. Unlike conventional enterprise storage, where data loss is unacceptable, KV cache contents are ephemeral and recomputable: a lost entry costs GPU time to rebuild, not data. This difference relaxes resilience requirements and opens the door to specialized storage system design focused on bandwidth and latency. Architecturally, KV caches sit between GPU memory and flash tiers, serving as a working set for active sequences and prompts. Performance planning shifts from traditional RAID rebuild times to metrics like tokens per second, cache hit rate, and end-to-end response time. Organizations that treat KV caches as first-class storage, not just an implementation detail, can significantly boost AI factory efficiency without proportionally increasing GPU count or power consumption.
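
The idea that KV cache entries are recomputable, and that hit rate is the metric to watch, can be sketched with a toy cache. This is a minimal illustration assuming a simple least-recently-used eviction policy keyed by prompt prefix; the class `KVCacheTier`, the `fake_prefill` stand-in, and the prefix strings are all hypothetical, and a real serving stack would store tensors and use its own eviction logic.

```python
from collections import OrderedDict


class KVCacheTier:
    """Toy LRU cache; values stand in for per-prefix attention KV state."""

    def __init__(self, capacity_entries):
        self.capacity = capacity_entries
        self.entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def get_or_compute(self, prefix, compute_fn):
        if prefix in self.entries:
            # Hit: reuse the cached state and mark it most recently used.
            self.entries.move_to_end(prefix)
            self.hits += 1
            return self.entries[prefix]
        # Miss: nothing is lost, the state is simply recomputed.
        self.misses += 1
        state = compute_fn(prefix)
        self.entries[prefix] = state
        if len(self.entries) > self.capacity:
            self.entries.popitem(last=False)  # evict least recently used
        return state

    @property
    def hit_rate(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def fake_prefill(prefix):
    # Hypothetical placeholder for the expensive GPU prefill pass.
    return f"kv-state({prefix})"


cache = KVCacheTier(capacity_entries=2)
for prefix in ["sys+docA", "sys+docB", "sys+docA", "sys+docC", "sys+docB"]:
    cache.get_or_compute(prefix, fake_prefill)

print(f"hits={cache.hits} misses={cache.misses} hit_rate={cache.hit_rate:.2f}")
```

With room for only two entries, the final request for `sys+docB` misses because that prefix was evicted earlier, and the state is rebuilt rather than restored. Growing the cache tier converts such misses into hits, which is the trade of storage capacity for GPU cycles described above.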

Extreme Co-Design and Planning for Exabyte-Scale AI Factories

As AI factories scale toward gigawatt-class data centers, storage cannot be planned in isolation. Vendors are embracing “extreme co-design,” aligning SSD form factors, thermals, and power delivery with GPU racks and high-speed networking. Innovations like liquid-cooled SSDs illustrate how tightly storage is being integrated into the thermal and power budgets of AI infrastructure. Forward-looking estimates suggest that a single large AI factory could require tens of exabytes of flash to operate efficiently, driven by ever-growing models, datasets, and agentic contexts. For enterprise AI infrastructure teams, capacity planning now means modeling the data produced across the model lifecycle, the concurrency of training and inference, and the growth in KV cache requirements. Success will depend on balancing raw capacity, bandwidth, and energy efficiency while respecting space and power limits. Organizations that adopt co-designed, tiered AI factory storage today will be better positioned to scale with the next wave of AI workloads.
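
To make the KV cache part of that capacity planning concrete, the sketch below applies the usual sizing rule for a standard transformer decoder: each token stores one key and one value vector per layer per KV head. The model shape, session count, and context length are hypothetical inputs chosen for illustration, and the calculation ignores compression, quantization, and prefix sharing, all of which would lower the total.

```python
def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_element):
    """KV cache bytes per token: key + value, for every layer and KV head."""
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_element


def kv_cache_total_bytes(per_token_bytes, tokens_per_session, concurrent_sessions):
    """Total KV cache footprint if every session keeps its full context."""
    return per_token_bytes * tokens_per_session * concurrent_sessions


# Hypothetical model shape: 80 layers, 8 KV heads, head dimension 128, 16-bit values.
per_token = kv_bytes_per_token(num_layers=80, num_kv_heads=8,
                               head_dim=128, bytes_per_element=2)

# Hypothetical load: 10,000 concurrent sessions, 32,000 tokens of context each.
total = kv_cache_total_bytes(per_token, tokens_per_session=32_000,
                             concurrent_sessions=10_000)

print(f"{per_token / 1024:.0f} KiB per token, {total / 1e12:.1f} TB in total")
```

Under these assumptions a single token costs 320 KiB of cache and the fleet needs roughly 105 TB for context alone, far more than fits in HBM, which is why longer agentic contexts and higher concurrency push KV state out toward the flash tier.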