Mythos AI Security: Marketing Narrative vs. Measurable Results

Mythos sits at the center of a fast-rising narrative about frontier AI models reshaping vulnerability discovery. Anthropic has framed Mythos as so capable at bug hunting that a broad public release would be risky, and early partners in Project Glasswing are being positioned as beneficiaries of this new power. Yet across high-profile trials, the story is more nuanced than the marketing implies. Reports emphasize Mythos’s ability to scan complex codebases and uncover previously missed flaws, but the public record of confirmed, high-impact vulnerabilities is thin. For defenders, this raises a familiar question: is AI-driven vulnerability discovery genuinely changing the threat landscape, or mainly reshaping expectations and sales decks? Organizations considering Mythos-tier systems for their security workflows must weigh glossy claims against the still-limited evidence of transformative, real-world security outcomes.

cURL’s Low-Severity Find Highlights Limits of AI Bug Hunting

The clearest public test case for Mythos in open source is cURL, a widely used data transfer tool with a long security history. Its creator, Daniel Stenberg, participated in Anthropic’s Project Glasswing to evaluate Mythos as a vulnerability discovery tool. Rather than getting direct access, he received a single report generated by someone else with Mythos access, listing five supposed “confirmed security vulnerabilities.” After several hours of review, Stenberg and the cURL security team concluded that three items were false positives and a fourth was just a regular bug. Only one issue qualified as a real vulnerability, and it was low severity, slated for disclosure alongside an upcoming release and not expected to be life-threatening. Stenberg described Mythos’s bug-hunting performance as “primarily marketing,” arguing that a tool hyped as too dangerous to release publicly should have delivered more than one modest flaw in such a widely scrutinized codebase.

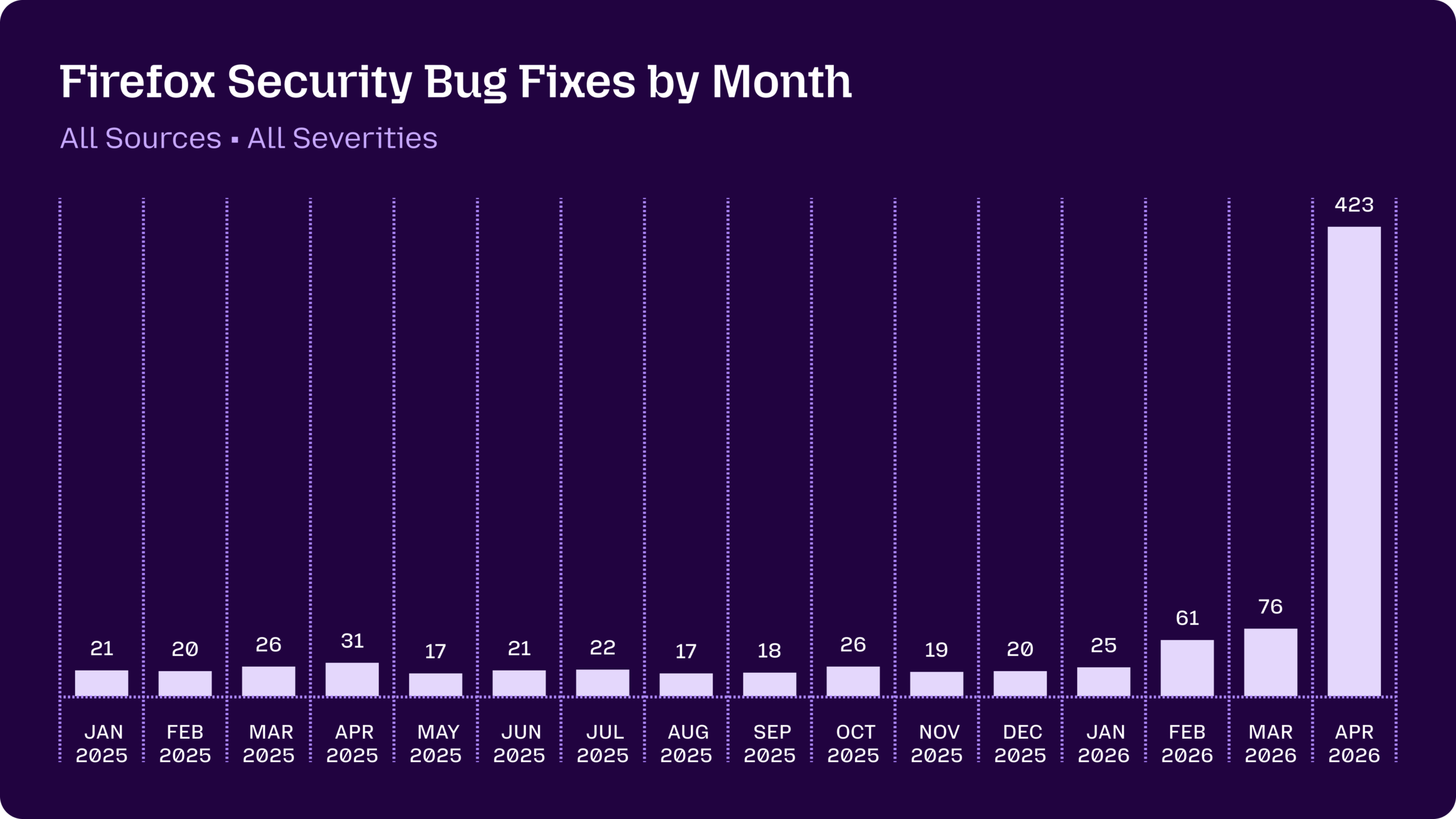

Firefox’s Bug Surge: Mythos Power or Better AI Middleware?

Mozilla’s Firefox security team offers a contrasting, more optimistic data point, but even there Mythos is only part of the story. Historically, Firefox fixed security bugs at a rate in the teens to mid-twenties per month, with 31 issues patched last April. After deploying Anthropic’s Opus 4.6 model in January, Mozilla logged 22 new vulnerabilities in two weeks, 14 of them high severity. The real spike came later: in April, Firefox shipped fixes for 423 security bugs, with Mythos Preview credited for surfacing 271. Yet only three of those warranted standalone CVEs; the rest were lower-severity hardening issues and bugs in obscure code paths. Firefox engineers stress that the breakthrough was as much about improved agentic harnesses (middleware that steers models and filters noise) as about Mythos itself. Their experience suggests that gains in AI bug hunting may hinge less on a single frontier model and more on how organizations integrate and orchestrate these systems.

Unclear Evidence Around the macOS Security Bypass

Mythos’s reputation leapt again when security researchers claimed it had enabled a novel macOS security bypass. According to their account, Mythos chained two separate macOS bugs into an escalation exploit that corrupted memory and gained access to protected parts of a device. The Wall Street Journal reported that the team was impressed enough to drive to Apple’s headquarters to brief engineers in person. Yet many details remain deliberately vague, pending Apple’s review and potential patching of the flaws. It is not fully clear where Mythos’s contribution stopped: did it independently discover both bugs, propose the chain, or simply assist human researchers? Apple has acknowledged it is validating the findings but has not confirmed the exploit’s specifics. Until technical write-ups or patches are public, this macOS security bypass functions more as a suggestive anecdote than as definitive proof of Mythos’s unique offensive capabilities.

Frontier AI Models, Access Control, and Industry Skepticism

Across these case studies, Mythos illustrates both the promise and the peril of frontier AI models in cybersecurity. On one hand, tools that can scan massive codebases and surface hundreds of issues could help organizations tackle long-ignored vulnerability backlogs, particularly in R&D environments spanning source code, cloud infrastructure, and scientific systems. On the other hand, concentrated access to powerful models raises questions about who can weaponize them and how much real advantage they offer over existing security practices. The gap between the marketing around Mythos and its documented outcomes (one low-severity cURL bug, a Firefox bug surge dominated by non-CVE hardening, and an unverified macOS exploit) has fueled skepticism among practitioners. For now, Mythos looks less like an autonomous security breakthrough and more like a sophisticated assistant whose impact depends heavily on human oversight, careful access control, and the quality of the surrounding tooling.