From Autocomplete to AI Teammates: What Agentic Really Means

Agentic AI tools go well beyond classic autocomplete. Where yesterday’s AI coding assistants suggested the next line, today’s “AI software engineer” systems plan, execute, and iterate on whole tasks. Cognition’s Devin is a leading example: it turns a natural-language request into a structured plan, works inside its own editor and command-line environment, and continuously tests and validates its own code, positioning itself as a digital colleague rather than a simple helper. At Google, internal Gemini-powered agentic workflows already generate the majority of new code, with human engineers increasingly acting as reviewers and orchestrators instead of line-by-line authors. This shift aligns with the rise of “vibe coding,” where developers guide the AI with intent and context while the system handles much of the implementation. For PC enthusiasts, the key change is that AI is starting to behave less like a smart IDE plugin and more like a semi-autonomous teammate embedded across the development workflow.
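
To make the plan, execute, and validate cycle concrete, here is a minimal Python sketch of such a loop. It is an illustration only and not how Devin or any specific product is built: `run_agent` and the `plan`, `execute`, and `validate` callables are hypothetical names that stand in for a model call, a sandboxed editor or shell, and a test runner.

```python
from typing import Callable, List, Tuple

# All names here are hypothetical placeholders for illustration.
Plan = Callable[[str], List[str]]                # task -> ordered steps
Execute = Callable[[str, str], str]              # (step, feedback) -> result
Validate = Callable[[str, str], Tuple[bool, str]]  # (step, result) -> (ok, feedback)


def run_agent(
    task: str,
    plan: Plan,
    execute: Execute,
    validate: Validate,
    max_attempts: int = 3,
) -> List[Tuple[str, int, bool]]:
    """Plan a task, then execute each step, retrying with validator feedback."""
    log: List[Tuple[str, int, bool]] = []
    for step in plan(task):
        feedback = ""
        for attempt in range(1, max_attempts + 1):
            result = execute(step, feedback)
            ok, feedback = validate(step, result)
            log.append((step, attempt, ok))
            if ok:
                break
        else:
            # No attempt passed validation: stop and hand back to a human.
            raise RuntimeError(f"step {step!r} failed after {max_attempts} attempts")
    return log


# Stub components so the loop can be run without any model or sandbox.
def demo_plan(task: str) -> List[str]:
    return ["write function", "add tests"]


def demo_execute(step: str, feedback: str) -> str:
    return "fixed" if feedback else "draft"


def demo_validate(step: str, result: str) -> Tuple[bool, str]:
    return result == "fixed", "tests failed"
```

The part that separates this from autocomplete is the inner retry loop: the validator's feedback is fed into the next attempt, and the loop gives up and raises once the attempt budget is spent so a human can step in.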

Agents Across the SDLC: Devin, CodeRabbit, mabl, Moderne and More

Agentic AI tools are spreading across every phase of the software development lifecycle (SDLC). Devin aims for end-to-end automation, from feasibility planning and implementation to self-checking for bugs and vulnerabilities inside a full development environment. CodeRabbit extends this idea into collaboration: its Slack agent follows work across planning, coding, testing, deployment, and maintenance, pulling context from GitHub, GitLab, Jira, Notion, monitoring stacks, and cloud platforms so one agent can track decisions and fixes over time. mabl tackles the quality gap created by fast AI coding agents with an “agentic testing platform” that independently enforces team standards and delivers what it calls Active Coverage, rather than letting agents grade their own work. Moderne, built on the OpenRewrite project, focuses on giving coding agents deterministic, governed infrastructure so they can reason accurately across massive codebases. As these systems mature, the AI teammate no longer just writes functions; it coordinates tickets, tests, refactors, and long-running maintenance tasks around your code.

Security Moves Into the Agent Loop: Mythos and DevSecOps Upgrades

As the volume of AI-generated code explodes, security is being pushed closer to the point of generation. Microsoft is integrating Anthropic’s Claude Mythos Preview directly into its Security Development Lifecycle so vulnerabilities are detected and fixed earlier, drawing on Mythos’s ability to write and analyze code and uncover large numbers of significant flaws across operating systems and applications. More broadly, DevSecOps experts describe a shift from reactive validation to continuous, intelligent enforcement: security tooling is being wired into AI coding assistants and agents, providing policies, secure coding patterns, and secrets checks as code is produced, not afterwards. Large language models are increasingly used for vulnerability scanning and even for proposing remediation, with fixes surfaced through IDEs and pull requests. The result is a more machine-mediated pipeline where security teams govern AI behavior as much as human behavior. For developers on personal machines, this means more of your linting, SAST-style checks, and remediation suggestions will be triggered automatically as agents generate and modify code.
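
As a rough illustration of a secrets check that runs while code is being produced, the Python sketch below scans a generated snippet before it is accepted. It makes several assumptions: the two regular expressions are a deliberately tiny rule set, and `scan_generated_code`, `gate`, and `Finding` are hypothetical names, not part of any vendor's tooling. Real scanners use far larger rule sets and entropy checks.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical, deliberately small rule set for illustration only.
SECRET_PATTERNS = {
    # AWS access key IDs follow the well-known "AKIA" + 16 characters shape.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A quoted literal of 8+ characters assigned to a credential-like name.
    "hardcoded_credential": re.compile(
        r"(?i)\b(?:password|secret|api_key|token)\s*=\s*['\"][^'\"]{8,}['\"]"
    ),
}


@dataclass(frozen=True)
class Finding:
    rule: str
    line_number: int
    line: str


def scan_generated_code(source: str) -> List[Finding]:
    """Return one finding per (line, rule) match in the generated source."""
    findings: List[Finding] = []
    for number, line in enumerate(source.splitlines(), start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append(Finding(rule, number, line.strip()))
    return findings


def gate(source: str) -> bool:
    """Accept agent output only when the scan finds nothing."""
    return not scan_generated_code(source)
```

In an agent loop, a failed `gate` call would send the findings back to the agent as feedback instead of letting the snippet reach a commit or pull request.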

Enterprises Standardize on Agents: Codex, Gemini and Changing Developer Expectations

Enterprise adoption is turning agentic AI tools from optional add-ons into standard infrastructure. OpenAI’s Codex is now used weekly by millions of developers, and companies like Virgin Atlantic, Ramp, Notion, Cisco, and Rakuten rely on it across testing, code review, feature development, and incident response. Cognizant is embedding Codex throughout its global software engineering organization so that code generation, refactoring, testing, and documentation become AI-accelerated by default. At Google, as noted above, internal Gemini models generate most new code, and they also handle large-scale migrations far faster than human-only efforts. Consulting and services giants such as Infosys, Accenture, and PwC, and automotive suppliers like Valeo, are following similar trajectories with Codex- or Gemini-style systems integrated across large engineering teams. For individual developers and PC tinkerers, this enterprise normalization signals a new baseline: future job descriptions will increasingly assume fluency with AI coding assistants, comfort in supervising agents, and the ability to design workflows where human judgment steers automated execution.

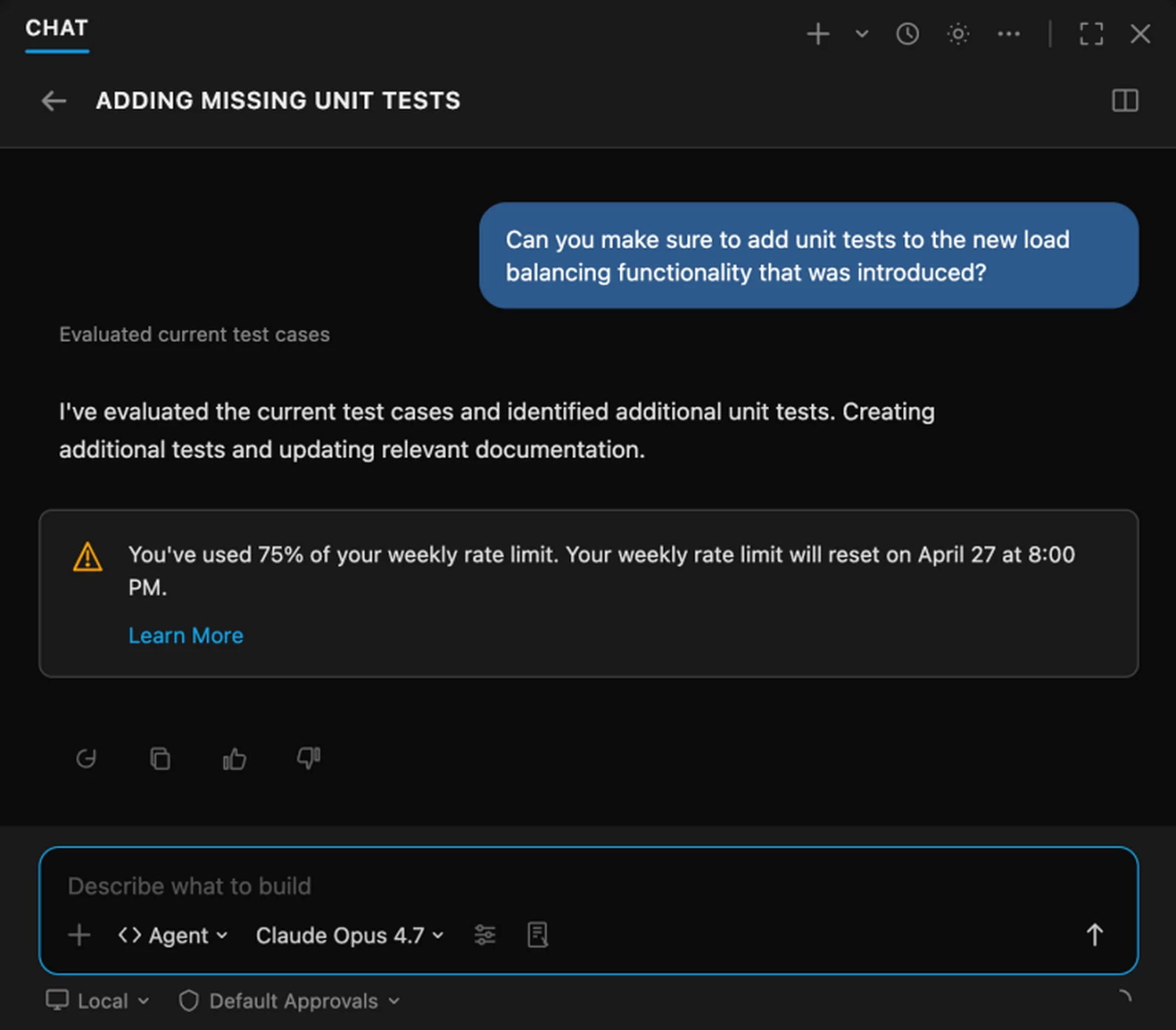

GitHub Copilot Limits, Local Rigs, and How to Experiment Safely

The infrastructure strain from agentic AI is already visible. GitHub has tightened its Copilot individual plans, suspending some new subscriptions and introducing stricter usage controls because parallel, long-running agent tasks can consume more compute than current pricing models can sustain. Pro+ tiers now carry higher usage limits, with token-based caps enforced directly in VS Code and the Copilot CLI. This highlights a tension for PC developers: heavier AI workflows often live in the cloud, but local hardware still matters for running smaller models, CI pipelines, and realistic test environments. The opportunities include offloading mundane builds, refactors, and regression tests to AI while you focus on architecture, edge cases, and hardware-aware performance work. The risks include over-reliance on opaque agents, data leakage in prompts, and the loss of hands-on skills. The safest way to experiment is to start with non-sensitive projects, mix cloud agents with local tools, and treat AI outputs as proposals that you inspect, benchmark, and secure, just as you would a junior teammate’s work.
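
One way to treat an agent's output as a proposal is to check it against code you already trust before timing it. The Python sketch below is a minimal version of that idea, assuming you have a trusted reference implementation and a handful of sample inputs. `review_proposal` and the example functions are hypothetical, and the timing is a rough local measurement, not a rigorous benchmark.

```python
import timeit
from typing import Any, Callable, Dict, Sequence


def review_proposal(
    reference: Callable[[Any], Any],
    proposal: Callable[[Any], Any],
    cases: Sequence[Any],
    repeats: int = 200,
) -> Dict[str, Any]:
    """Reject a proposal on any behavioral mismatch; otherwise report speedup."""
    mismatches = [case for case in cases if reference(case) != proposal(case)]
    if mismatches:
        return {"accepted": False, "mismatches": mismatches, "speedup": None}
    ref_time = timeit.timeit(lambda: [reference(c) for c in cases], number=repeats)
    new_time = timeit.timeit(lambda: [proposal(c) for c in cases], number=repeats)
    speedup = ref_time / new_time if new_time > 0 else float("inf")
    return {"accepted": True, "mismatches": [], "speedup": speedup}


def sum_squares_reference(n: int) -> int:
    """Trusted hand-written version: sum of i*i for i in range(n)."""
    return sum(i * i for i in range(n))


def sum_squares_proposed(n: int) -> int:
    """Stand-in for an agent's rewrite using the closed-form formula."""
    return (n - 1) * n * (2 * n - 1) // 6


def sum_squares_buggy(n: int) -> int:
    """Stand-in for a plausible but wrong rewrite (off by one in the range)."""
    return n * (n + 1) * (2 * n + 1) // 6
```

Calling `review_proposal(sum_squares_reference, sum_squares_buggy, [0, 1, 5, 50])` rejects the off-by-one rewrite before any timing runs. A speedup number only means something once behavior matches, which is why the correctness check comes first.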