From Overbuilt Stacks to Connected Enterprise ML Systems

Many enterprises are racing to adopt AI, but their machine learning lifecycle management often grows in a fragmented, tool-first way. Teams stack multiple platforms across data, models, orchestration, applications, and governance without a unifying architecture. Early pilots succeed because models run in isolation, yet complexity explodes once those models are deployed across products, teams, and regions. Overlapping tools, brittle integrations, and opaque dependencies turn the stack into an operational burden. What’s missing is not more technology, but a coherent model governance architecture that shows how each layer contributes to outcomes and how components interact. As agent-based AI systems and retrieval-augmented generation (RAG) expand, orchestration and data consistency become more critical than individual models. Without a structural view of the entire enterprise ML system, organizations struggle to monitor performance, manage cost, and safely scale AI beyond experimentation.

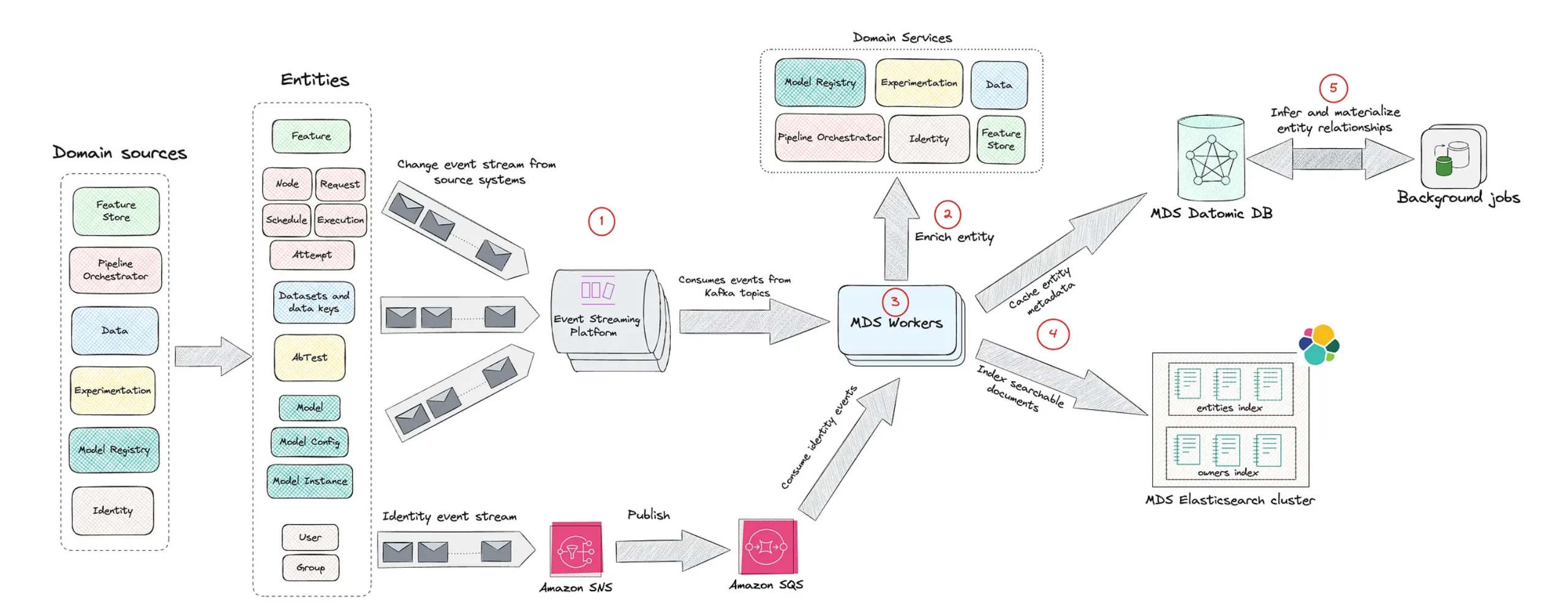

What Netflix’s Model Lifecycle Graph Actually Does

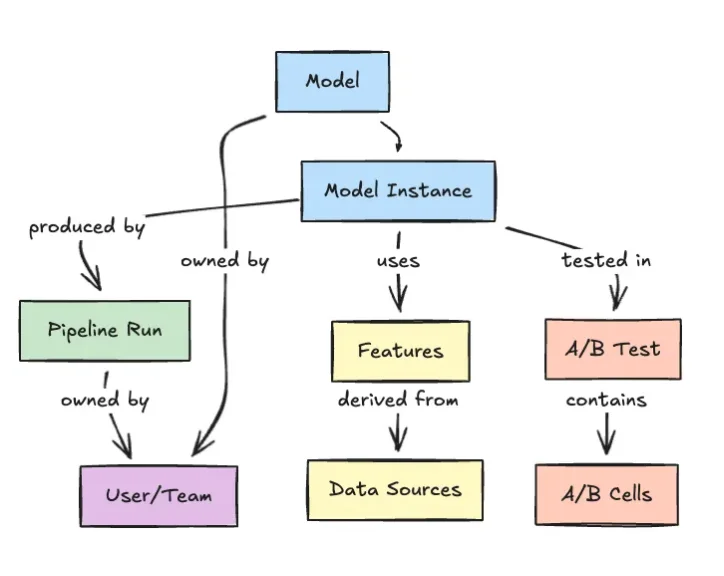

Netflix’s Model Lifecycle Graph rethinks how large-scale ML platforms are represented. Instead of treating pipelines as linear stages, Netflix models datasets, features, models, evaluations, workflows, and production services as interconnected nodes in a graph. Each edge captures a dependency or relationship: which datasets feed which features, which models consume those features, how evaluations are run, and where models are deployed. This graph-centric view turns ML metadata into first-class infrastructure. Engineers can traverse lineage chains to see where a model originated, what upstream data it relies on, and how changes will ripple through downstream systems. The same structure boosts discoverability by helping teams find reusable assets and understand how existing models are assembled. As ML portfolios grow across multiple teams, the graph becomes the map that keeps the system navigable instead of a tangle of isolated workflows.
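To make the graph idea concrete, here is a minimal sketch of assets as typed nodes and dependencies as directed edges, with an upstream traversal that answers "where did this come from?". It is an illustration only, not Netflix's implementation: the `LifecycleGraph` class, its methods, and every asset name are hypothetical.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field


@dataclass
class LifecycleGraph:
    """Toy lifecycle graph: typed nodes plus directed 'feeds' edges."""
    kinds: dict = field(default_factory=dict)  # asset name -> asset kind
    upstream: dict = field(default_factory=lambda: defaultdict(set))
    downstream: dict = field(default_factory=lambda: defaultdict(set))

    def add_node(self, name, kind):
        self.kinds[name] = kind

    def add_edge(self, src, dst):
        # An edge src -> dst means "src feeds dst".
        self.downstream[src].add(dst)
        self.upstream[dst].add(src)

    def lineage(self, name):
        """Return every asset that `name` depends on, directly or transitively."""
        seen, queue = set(), deque([name])
        while queue:
            current = queue.popleft()
            for parent in self.upstream.get(current, ()):
                if parent not in seen:
                    seen.add(parent)
                    queue.append(parent)
        return seen

    def find(self, kind):
        """Discoverability helper: list all assets of a given kind."""
        return sorted(n for n, k in self.kinds.items() if k == kind)


# Hypothetical assets, invented for this example.
graph = LifecycleGraph()
for asset, kind in [
    ("watch_events", "dataset"),
    ("watch_features", "feature"),
    ("ranker_v2", "model"),
    ("ranker_v2_offline_eval", "evaluation"),
    ("recs_service", "service"),
]:
    graph.add_node(asset, kind)

graph.add_edge("watch_events", "watch_features")
graph.add_edge("watch_features", "ranker_v2")
graph.add_edge("ranker_v2", "ranker_v2_offline_eval")
graph.add_edge("ranker_v2", "recs_service")

print(graph.lineage("recs_service"))
print(graph.find("model"))
```

Storing both `upstream` and `downstream` adjacency is a deliberate choice in this sketch: lineage questions walk one direction and impact questions walk the other, so keeping both makes each traversal a simple breadth-first search.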

Why Data Lineage Tracking Is a Safety Mechanism, Not a Nice-to-Have

In complex enterprise ML systems, failures rarely stem from a single broken model. They emerge from untracked dependencies and invisible data changes. Without robust data lineage tracking, teams can’t reliably answer basic questions: Which upstream dataset changed? Which features depend on it? Which production services could be impacted? Modern architectures like data mesh, data fabric, and ELT-based pipelines multiply these dependencies, as datasets and features evolve independently across domains. Netflix’s graph-based approach tackles this head-on by encoding lineage as traversable graph relationships. That lets teams perform impact analysis before a change, monitor propagation after deployment, and quickly diagnose issues when metrics drift. In practice, data lineage becomes a safety mechanism for model governance architecture: it reduces the risk of silent failures, prevents inconsistent behavior across applications, and preserves trust in AI-driven decisions at scale.
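The three questions above map onto a single downstream traversal. The sketch below assumes lineage is available as a plain adjacency map; the `FEEDS` data, the `kind:name` naming convention, and both function names are hypothetical and chosen only for illustration.

```python
from collections import deque

# Hypothetical lineage edges: each key feeds every asset in its list.
FEEDS = {
    "dataset:watch_events": ["feature:watch_time_7d", "feature:genre_affinity"],
    "feature:watch_time_7d": ["model:ranker_v2"],
    "feature:genre_affinity": ["model:ranker_v2", "model:row_selector"],
    "model:ranker_v2": ["service:homepage"],
    "model:row_selector": ["service:homepage", "service:search"],
    "dataset:billing": ["feature:plan_tier"],
    "feature:plan_tier": ["model:churn"],
    "model:churn": ["service:retention_email"],
}


def impacted_assets(feeds, changed):
    """Return every asset reachable downstream of `changed` (breadth-first)."""
    seen, queue = set(), deque([changed])
    while queue:
        node = queue.popleft()
        for child in feeds.get(node, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def impacted_services(feeds, changed):
    """Narrow the impact set to production services only."""
    return sorted(
        asset for asset in impacted_assets(feeds, changed)
        if asset.startswith("service:")
    )


print(impacted_services(FEEDS, "dataset:watch_events"))
# ['service:homepage', 'service:search']
```

Run before a schema or logic change, a check like this tells the owning team which services to notify; run after an incident, the same traversal in reverse narrows down which upstream asset to inspect first.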

Integrating Governance and Orchestration Into the ML Graph

Governance is often bolted on as a separate layer, but Netflix’s Model Lifecycle Graph suggests it should be embedded in the same fabric as data and models. By modeling workflows, evaluations, and production services alongside datasets and models, the graph naturally supports governance policies: who owns a dataset, which models are approved, what evaluations must run before deployment, and how models are routed into applications. This aligns with emerging views that orchestration matters more than any single model. In an orchestration-led architecture, decisions about data flows, model selection, and fallback mechanisms sit at the center of machine learning lifecycle management. When those decisions are encoded in a shared graph, teams gain unified observability across experimentation and production. The result is an enterprise ML system where governance, cost control, and reliability are enforced through structure, not manual processes.
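One way to picture governance enforced "through structure" is a deployment gate that reads its answers from graph metadata instead of a manual checklist. The sketch below is an assumption-laden illustration: the `Asset` fields, the `REQUIRED_EVALS` policy, and the rule that every upstream asset needs an owner are invented here, not taken from Netflix's platform.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Asset:
    name: str
    kind: str
    owner: Optional[str] = None
    approved: bool = False
    passed_evals: set = field(default_factory=set)
    inputs: list = field(default_factory=list)  # names of direct upstream assets


# Hypothetical policy: evaluations a model must pass before deployment.
REQUIRED_EVALS = {"offline_accuracy", "bias_audit"}


def deployment_violations(catalog, model_name):
    """Return human-readable policy violations; an empty list means deployable."""
    violations = []
    model = catalog[model_name]
    if not model.approved:
        violations.append(f"{model.name}: not approved")
    missing = REQUIRED_EVALS - model.passed_evals
    if missing:
        violations.append(f"{model.name}: missing evaluations {sorted(missing)}")

    # Walk the model's upstream lineage and require an owner on every asset.
    stack, seen = list(model.inputs), set()
    while stack:
        name = stack.pop()
        if name in seen:
            continue
        seen.add(name)
        asset = catalog[name]
        if asset.owner is None:
            violations.append(f"{asset.name}: no owner")
        stack.extend(asset.inputs)
    return violations


# Hypothetical catalog entries.
catalog = {
    "watch_events": Asset("watch_events", "dataset"),
    "watch_features": Asset(
        "watch_features", "feature", owner="personalization", inputs=["watch_events"]
    ),
    "ranker_v2": Asset(
        "ranker_v2", "model", owner="personalization", approved=True,
        passed_evals={"offline_accuracy"}, inputs=["watch_features"],
    ),
}

for problem in deployment_violations(catalog, "ranker_v2"):
    print(problem)
```

Because the gate traverses lineage, a missing owner two hops upstream blocks the deployment just as a missing evaluation on the model itself would, which is the practical meaning of embedding governance in the same graph as data and models.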

How Enterprises Can Adapt Netflix’s Graph Mindset

Most organizations won’t replicate Netflix’s platform exactly, but they can adopt the same graph mindset. Start by treating ML assets (datasets, features, models, evaluations, and workflows) as entities in a shared catalog rather than artifacts buried in separate tools. Capture relationships explicitly: which pipelines generate which datasets, which models consume them, and where outputs are served. Integrate monitoring signals, data drift detection, and production readiness checks into this structure so that operational health is visible along each lineage path. As agent-based systems and RAG use cases expand, this integrated view becomes essential for safe scaling. Instead of adding yet another dashboard, focus on building a metadata-centric backbone that connects existing tools. The goal is the same one Netflix is pursuing: a connected model lifecycle graph that keeps enterprise AI transparent, governable, and reusable as it grows.
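As a starting point, health signals from existing monitoring tools can be attached to catalog entries and rolled up along each lineage path. The following sketch assumes a three-level status scale and a hypothetical RAG-style service; the `UPSTREAM` and `HEALTH` data, the `lineage_health` function, and the choice to treat unmonitored assets as `"warn"` are all illustrative assumptions.

```python
SEVERITY = {"ok": 0, "warn": 1, "fail": 2}

# Hypothetical lineage: each key lists its direct upstream assets.
UPSTREAM = {
    "service:support_bot": ["model:answer_llm", "index:kb_vectors"],
    "index:kb_vectors": ["dataset:kb_articles"],
    "model:answer_llm": [],
    "dataset:kb_articles": [],
}

# Hypothetical latest status per asset, as reported by existing monitors.
HEALTH = {
    "service:support_bot": "ok",
    "model:answer_llm": "ok",
    "index:kb_vectors": "warn",     # e.g. drift detected in embeddings
    "dataset:kb_articles": "fail",  # e.g. freshness check failed
}


def lineage_health(upstream, health, node):
    """Return (worst status, non-ok assets) for `node` and all of its ancestors."""
    worst, culprits = "ok", []
    stack, seen = [node], set()
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        # Assumption: an asset with no reported status is treated as "warn".
        status = health.get(current, "warn")
        if status != "ok":
            culprits.append((current, status))
        if SEVERITY[status] > SEVERITY[worst]:
            worst = status
        stack.extend(upstream.get(current, []))
    return worst, sorted(culprits)


print(lineage_health(UPSTREAM, HEALTH, "service:support_bot"))
# ('fail', [('dataset:kb_articles', 'fail'), ('index:kb_vectors', 'warn')])
```

In this example the service itself reports `ok`, yet its lineage path does not, which is the kind of hidden upstream problem a per-tool dashboard tends to miss.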