From Buzzword to Bedside: What Multimodal Healthcare AI Really Means

Multimodal healthcare AI describes systems that learn from several kinds of medical data at once: genomic variants, radiology imaging, clinical notes, structured EHR fields and continuous wearable health data. In practice, a model predicting cancer recurrence does not just read a pathology slide or a genome file; it fuses molecular drivers from genomics, anatomical context from imaging, symptom trajectories hidden in clinical notes, and physiology patterns from wearables. The motivation is straightforward: each modality is incomplete on its own, especially in messy clinical environments where up to 80% of data is unstructured text and images. Single-modality models often break down under real-world conditions, where inputs are noisy and frequently missing. By contrast, a multimodal system can preserve modality-specific signal while remaining robust when one stream is unavailable, so early detection workflows, sepsis risk prediction and precision oncology tools can operate on a richer, more resilient view of the patient journey.

Inside the Lakehouse: Databricks’ Production Architecture for Hospital AI

Moving from research prototypes to live clinical systems hinges less on model cleverness and more on production architecture. The Databricks lakehouse pattern lands each modality (genomics, imaging metadata and embeddings, text-derived entities from clinical notes, and streaming wearable health data) in governed Delta tables. Unity Catalog enforces data classification, fine-grained access controls, audit logs, lineage and controlled sharing, so every feature used by a large model for clinical notes or by a genomics-imaging fusion pipeline can be traced back to its source datasets. Reproducibility is handled through dataset versioning, time travel and MLflow-based experiment tracking. Lakehouse and Lakeflow pipelines orchestrate ETL, feature generation and model serving in one environment, so teams do not have to maintain a separate stack per modality. This reduces fragile point-to-point integrations and unnecessary copies of sensitive data, creating a single multimodal substrate that can support both batch training and low-latency inference in regulated hospital settings.
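To make the reproducibility idea concrete, here is a minimal conceptual sketch in plain Python. It does not use the Delta Lake or MLflow APIs; `VersionedTable`, `record_training_run` and the `wearable_features` table name are hypothetical stand-ins that only illustrate why pinning a table version alongside a training run lets the exact training data be read back later.

```python
from dataclasses import dataclass, field


@dataclass
class VersionedTable:
    """Toy append-only table: each commit produces a new, immutable version."""
    _snapshots: list = field(default_factory=lambda: [[]])  # version 0 is empty

    def commit(self, rows):
        """Append rows and return the new version number."""
        self._snapshots.append(self._snapshots[-1] + list(rows))
        return len(self._snapshots) - 1

    def as_of(self, version):
        """Read the table exactly as it was at `version` (time travel)."""
        if not 0 <= version < len(self._snapshots):
            raise ValueError(f"unknown version {version}")
        return list(self._snapshots[version])


def record_training_run(table_name, table, version):
    """Store the pinned version next to the run so the data can be rebuilt."""
    rows = table.as_of(version)
    return {"table": table_name, "version": version, "n_rows": len(rows)}


features = VersionedTable()
v1 = features.commit([{"patient_id": 1, "hr_mean": 72}])
run = record_training_run("wearable_features", features, v1)
v2 = features.commit([{"patient_id": 2, "hr_mean": 88}])  # later ingestion

# The run still resolves to the data it was trained on, not the latest table.
training_rows = features.as_of(run["version"])
```

In a real lakehouse the snapshotting would be done by the table format and the run metadata by the experiment tracker; the point of the sketch is only that the version identifier travels with the model.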

Fusion Strategies: How Models Actually Combine Genomics, Imaging and Wearables

Under the hood, multimodal healthcare AI depends on how different signals are fused. Early fusion simply concatenates raw or lightly processed features before training; it can work for small, tightly controlled cohorts but struggles with high-dimensional genomics and large feature sets. Intermediate fusion encodes each modality (say, genomics, imaging and structured EHR) into a separate latent representation and then merges the representations, which makes it well suited to combining genomics with imaging. Late fusion trains per-modality models and combines their predictions, a practical approach when modalities are often missing at prediction time. Attention-based fusion goes further, learning dynamic weights over modalities and time, which suits longitudinal wearables combined with repeated imaging or notes. In clinical environments, the choice is not academic: fusion strategies shape robustness, interpretability and safety. Late or attention-based schemes can degrade gracefully when data is missing, while modular encoders simplify auditing and validation of each modality's contribution before deployment.
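The graceful-degradation point can be sketched in a few lines of NumPy. This is an illustrative sketch, not a production fusion layer: the function names, the modality keys and the fixed attention scores are assumptions, and in a trained model the scores would come from learned parameters rather than being passed in by hand.

```python
import numpy as np


def late_fusion(probs, weights=None):
    """Weighted average of per-modality probabilities; None marks a missing modality."""
    available = {m: p for m, p in probs.items() if p is not None}
    if not available:
        raise ValueError("no modality available")
    if weights is None:
        weights = {m: 1.0 for m in available}
    total = sum(weights[m] for m in available)
    # Renormalise over the modalities that are actually present.
    return sum(weights[m] * p for m, p in available.items()) / total


def attention_fusion(embeddings, scores, mask):
    """Masked softmax over modality scores, then a weighted sum of embeddings.

    embeddings: (M, D) array, scores: (M,) array, mask: (M,) boolean array
    where False means the modality is missing for this patient.
    """
    if not mask.any():
        raise ValueError("no modality available")
    embeddings = np.where(mask[:, None], embeddings, 0.0)  # ignore missing rows
    masked = np.where(mask, scores, -np.inf)
    masked = masked - masked[mask].max()  # subtract max for numerical stability
    w = np.exp(masked)                    # exp(-inf) is 0 for missing modalities
    w = w / w.sum()
    return w @ embeddings, w


# Imaging is unavailable, so only genomics and wearables contribute.
risk = late_fusion({"genomics": 0.8, "imaging": None, "wearables": 0.4})

emb = np.array([[1.0, 0.0], [0.0, 1.0], [5.0, 5.0]])
fused, attn = attention_fusion(
    emb,
    scores=np.array([0.0, 0.0, 10.0]),
    mask=np.array([True, True, False]),
)
```

Both functions renormalise over whatever is present, so a missing stream changes the weighting instead of breaking the prediction. The returned weights also give a simple per-modality contribution to inspect during validation.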

From Lab Demo to Ward Round: Governance, Audit Trails and Triage Assistants

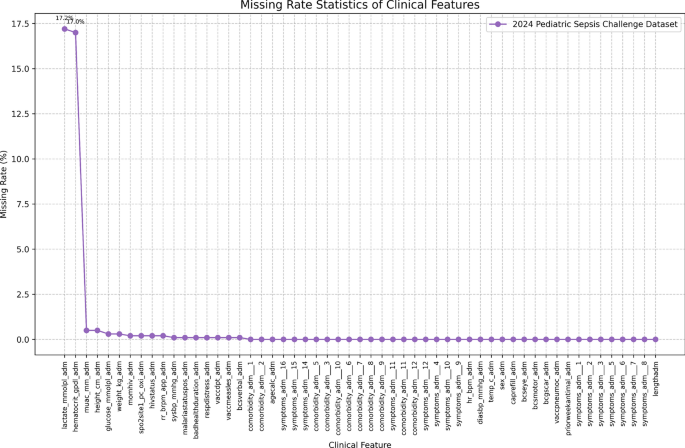

Production multimodal stacks are being wired into workflows that range from early sepsis prediction to oncology decision support. Research on explainable predictors for early sepsis and ICU risk models shows the value of combining structured vitals, lab biomarkers and clinical narratives, but real deployment requires auditable pipelines and strict access control. With Unity Catalog-style governance, hospitals can track who accessed which patient features, enforce row- and column-level protections for PHI, and reconstruct the exact dataset used to train or update a model. This is critical when multimodal decision support systems recommend treatment pathways or flag deteriorating patients. Future multimodal assistants could summarize clinical notes, cross-reference imaging and genomics, and contextualize signals from wearable health data to support triage decisions. Because lineage is preserved, clinicians and regulators can review how a recommendation was generated, reducing the gap between cutting-edge models and the documentation expectations of regulated care environments.
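As a rough illustration of what row- and column-level protection plus an access trail amounts to, the sketch below filters rows by ward, masks identifying columns, and appends an audit record on every read. It is plain Python, not Unity Catalog syntax; `PHI_COLUMNS`, `read_patient_features`, the `ward` field and the sample rows are all hypothetical.

```python
from datetime import datetime, timezone

PHI_COLUMNS = {"name", "mrn"}  # hypothetical set of identifying columns


def read_patient_features(user, wards, rows, audit_log, can_see_phi=False):
    """Return the rows a user may see, mask PHI columns, and log the access."""
    # Row-level protection: only rows from wards the user is assigned to.
    visible = [r for r in rows if r["ward"] in wards]
    # Column-level protection: mask identifying fields unless explicitly allowed.
    result = [
        {k: ("***" if k in PHI_COLUMNS and not can_see_phi else v)
         for k, v in r.items()}
        for r in visible
    ]
    # Audit trail: who read which patients, when, and whether PHI was unmasked.
    audit_log.append({
        "user": user,
        "time": datetime.now(timezone.utc).isoformat(),
        "patient_ids": [r["patient_id"] for r in visible],
        "phi_unmasked": can_see_phi,
    })
    return result


rows = [
    {"patient_id": 1, "ward": "icu", "name": "A", "mrn": "x1", "lactate": 2.1},
    {"patient_id": 2, "ward": "oncology", "name": "B", "mrn": "x2", "lactate": 1.0},
]
audit_log = []
seen = read_patient_features("clinician_a", {"icu"}, rows, audit_log)
```

A governed platform enforces these rules at the catalog layer rather than in application code, which is what makes the resulting audit trail trustworthy for review.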

From Hospital Stack to Consumer Wearables: Opportunities and Limits

The same production architecture underpinning hospital multimodal systems is poised to spill into consumer health. As wearables stream heart rate, sleep, activity and emerging biosignals, lakehouse-style platforms can join this wearable data with imaging summaries, lab results and even genomic risk markers, enabling longitudinal monitoring and early warning systems that echo precision medicine workflows. For tech-savvy patients, future assistants might translate the outputs of genomics-imaging fusion models and model-generated summaries of clinical notes into everyday language, helping them understand risk and treatment trade-offs at home. Yet the risks remain significant. Bias can creep in when some groups have less imaging or genomics data, or when wearable adoption is uneven. Interoperability across hospital systems and device vendors is still fragile, and evaluating multimodal model behavior is harder than benchmarking single-task classifiers. Robust governance, transparent fusion designs and conservative clinical validation will be essential as these stacks move closer to consumer devices and home diagnostics.