From Sora to a Fragmented AI Video Generator Landscape

After the hype cycle around Sora-style tools, text-to-video has not settled into a single winning platform. Instead, the underlying capability is diffusing into three environments: social networks, creator-focused workflows and professional production tools. Analysts now see Grok capturing the largest observed share of AI video traffic, with Runway, Google’s Veo and Flow, Kling and a long tail of smaller platforms sharing the rest. No unified market or dominant standard has emerged, so creators have to pick a toolset based on their use case rather than brand prestige. For indie filmmakers and YouTubers, this fragmentation is both a headache and an opportunity. There is no Hollywood-grade “one-button movie” yet, but there are many specialised AI video generators that can slot into existing editing pipelines, especially for short, eye-catching clips and concept pieces.

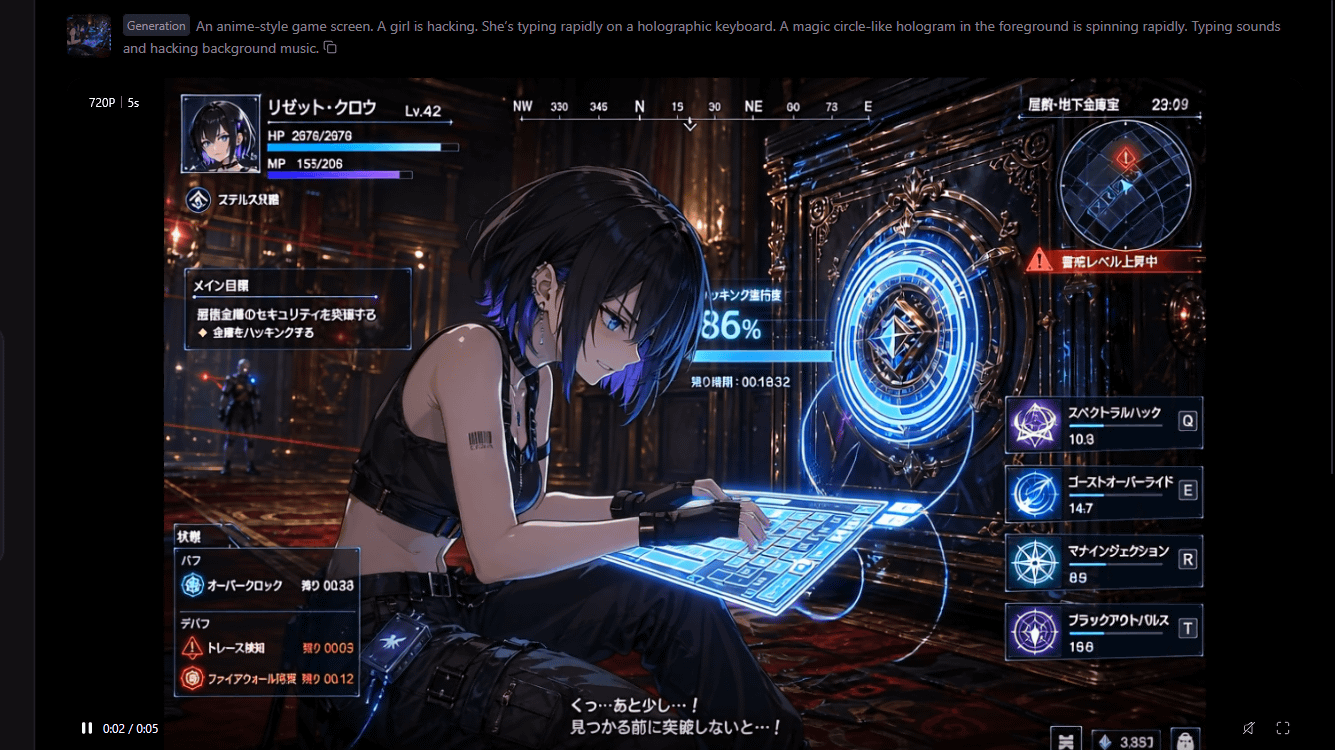

HappyHorse 1.0 Review: A New Contender with Anime and Japanese Dialogue

HappyHorse 1.0 has quickly become one of the most talked-about AI video generators, not only for its performance but also for its accessibility. Launched via an official site that lets users sign in with a Google account, it supports prompts for both live-action and anime-style footage and can output Japanese dialogue along with audio such as typing sounds and background music. In benchmarking on Artificial Analysis, HappyHorse 1.0 ranked at the top in three categories: silent video from text, video with sound from text, and video from images, outperforming models from Google and xAI. In practical use, creators can upload an image as the first frame, add a textual description and select resolution and duration. A 5-second, 720p clip was reported to render in about 90 seconds. That suggests the system, while powerful, is currently optimised for short, high-impact sequences rather than full episodes or long-form films.
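To put the reported render time in perspective, the short Python sketch below works out how many seconds of rendering each second of footage costs, then extrapolates to a longer total. Treat it as back-of-envelope arithmetic only: the function names are illustrative, and the linear scaling is an assumption rather than something the report states. Real render times may vary with resolution, server load and clip length.

```python
def render_ratio(clip_seconds: float, render_seconds: float) -> float:
    """Seconds of rendering needed per second of finished footage."""
    if clip_seconds <= 0:
        raise ValueError("clip_seconds must be positive")
    return render_seconds / clip_seconds


def estimate_render_minutes(target_seconds: float, ratio: float) -> float:
    """Naive linear extrapolation (an assumption, not a measured result)."""
    return target_seconds * ratio / 60


# Reported figures: a 5-second, 720p clip rendered in about 90 seconds.
ratio = render_ratio(5, 90)
print(ratio)  # 18.0 seconds of rendering per second of video

# Rough cost of 60 seconds of footage built from short clips, in minutes.
print(estimate_render_minutes(60, ratio))  # 18.0
```

At roughly 18 seconds of rendering per second of output, a minute of footage assembled from short clips would take about 18 minutes of generation before any retries, which is one reason these tools suit short sequences better than long-form work.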

Runway, Kling and Open Workflows: Pipelines, Not Magic Buttons

Runway and Kling represent the more professional end of today’s ecosystem, aimed at creators who already understand editing timelines, compositing and shot design. Rather than replacing an NLE (non-linear editor), they sit alongside tools like Premiere or DaVinci as generators of short clips, transitions and stylised shots. In parallel, OpenAI’s Image Generation 2.0 adds another piece of the puzzle. It can produce complex layouts, multi-panel comic pages and cohesive image sequences with consistent style, colour and perspective. That makes it well suited to storyboards, animatics or visual lookbooks, which can then be fed into video models as reference frames. The real power today lies in stitching these tools together: still-image generation for boards and keyframes, text-to-video for motion studies, and conventional editing to assemble, time and refine everything into a coherent piece that still feels authored rather than auto-generated.

Capabilities and Limits: Length, Motion, Text and Control

Across HappyHorse 1.0, Runway, Kling and similar systems, the sweet spot remains short clips measured in seconds, not minutes. Tools handle simple character movement, basic camera moves and atmospheric effects well, but consistency across many shots is still fragile. Models can drift on character details between clips, making it hard to maintain continuity for long narratives without heavy curation. Text legibility inside the frame (on signs, phones or UI elements) remains hit-and-miss, which is why creators often composite real text in post. Control is improving: HappyHorse lets you specify an initial image frame; Image Generation 2.0 lets you plan multi-panel sequences with layout-aware prompts; Runway and Kling offer various modes for image-to-video and text-to-video. Still, these tools behave more like highly opinionated collaborators than obedient cameras. Expect to iterate, discard failures and rely on traditional editing and VFX skills to polish usable results.

What This Means for Malaysian Indies and Southeast Asian Creators

For now, Hollywood and major streamers largely sit on the outside, watching as AI video seeps into three zones: social content, creator workflows and specialised production tools. That leaves surprising room for Malaysian and Southeast Asian indie creators to experiment without waiting for studio approval. YouTubers can use an AI video generator like HappyHorse 1.0 to create anime-style channel intros, VTuber‑adjacent avatars or short story beats with Japanese dialogue. Filmmakers can pair Image Generation 2.0 storyboards with Runway or Kling clips to assemble concept trailers, pitch animatics or proof‑of‑concept scenes. Music creators can build lyric videos by mixing AI‑generated visual sequences with conventional motion graphics, while live‑action teams can drop in occasional AI‑generated shots for dream sequences, holographic interfaces or establishing shots. The realistic opportunity today is hybrid: AI for fast, cheap visual experimentation; human craft for structure, pacing and emotional clarity.