Meta Pushes WebXR Development Beyond Traditional Coding

Meta has rolled out a major update to its open-source Immersive Web SDK (IWSDK), sharpening its focus on AI-powered VR creation for the web. IWSDK is built around WebXR, the browser standard that lets VR experiences run directly inside a web page instead of a native app. That means creators can share immersive content via a simple URL, and users can jump in instantly on compatible headsets or desktops without app store friction or long downloads. Originally unveiled at Meta Connect, the framework already handled the heavy lifting for physics, hand tracking, locomotion, grab interactions, and spatial UI. The latest update extends that mission from simplifying low-level engineering to rethinking how VR is built altogether. By weaving AI agents into the workflow, Meta is positioning IWSDK as a kind of no-code VR builder that bridges the gap between complex WebXR development and designers who think more in ideas than in syntax.
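For readers curious what "compatible headsets or desktops" means in practice, a page typically asks the browser whether an immersive session is available before offering a VR entry point. The sketch below uses the standard WebXR call `isSessionSupported("immersive-vr")`; the names `XRSystemLike`, `EntryMode`, and `chooseEntryMode` are illustrative assumptions and are not part of IWSDK, which may handle this step differently.

```typescript
// Minimal structural type covering the one WebXR Device API method used here.
// In a browser, `navigator.xr` satisfies this shape when WebXR is available.
interface XRSystemLike {
  isSessionSupported(
    mode: "inline" | "immersive-vr" | "immersive-ar"
  ): Promise<boolean>;
}

// Hypothetical label for how the page should present the experience.
type EntryMode = "immersive-vr" | "flat-fallback";

// Decide how to present the experience. `xr` is passed in explicitly
// (rather than read from `navigator`) so the function also runs outside a browser.
async function chooseEntryMode(
  xr: XRSystemLike | undefined
): Promise<EntryMode> {
  if (!xr) {
    return "flat-fallback"; // the browser exposes no WebXR support at all
  }
  try {
    const supported = await xr.isSessionSupported("immersive-vr");
    return supported ? "immersive-vr" : "flat-fallback";
  } catch {
    return "flat-fallback"; // the query itself was rejected, e.g. by a security policy
  }
}
```

In a page, the argument would be `navigator.xr`; a result of `"flat-fallback"` would mean showing the scene as ordinary on-screen 3D rather than offering headset entry.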

Inside the Agentic AI Workflow: From Prompt to Playable VR

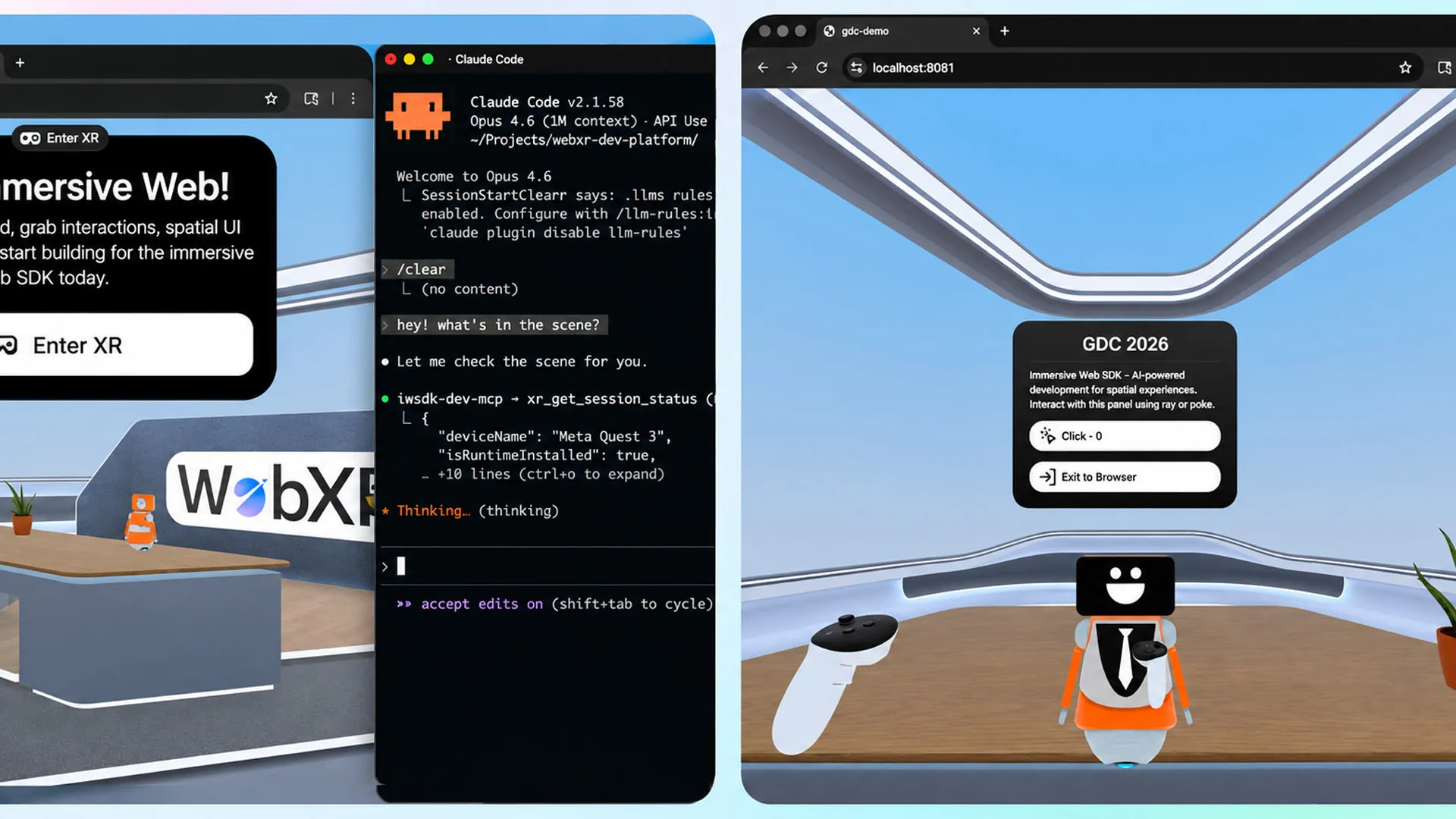

The headline feature of the update is an “agentic workflow” that integrates AI coding assistants such as Claude Code, Cursor, GitHub Copilot, and Codex directly into the IWSDK pipeline. Agentic here means the AI does more than spit out code snippets on request. It iteratively generates, tests, and validates the code, closing the loop between idea, implementation, and debugging. Meta emphasizes that this workflow is designed for full, interactive applications rather than minor edits or boilerplate generation. In practice, creators describe the VR experience they want, rely on IWSDK’s abstractions for interaction and physics, and let the AI agents assemble the project step by step. Because the content targets WebXR, each iteration can be tested immediately in the browser. This feedback-rich loop turns what used to be a lengthy development cycle into a rapid, AI-assisted conversation about design and behavior.
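The generate, test, and validate cycle described above can be pictured as a simple loop. The following is a minimal sketch under stated assumptions, not Meta's implementation: `Agent`, `Validator`, `CheckResult`, `LoopOutcome`, and `runAgenticLoop` are hypothetical names invented for illustration, and a real assistant would call a model and run the project in a browser rather than use in-memory stubs.

```typescript
// All names below are hypothetical; none come from IWSDK or any AI coding tool.
interface CheckResult {
  passed: boolean;
  feedback: string; // what went wrong, fed back into the next attempt
}

interface Agent {
  // Produce a new code candidate from the prompt plus all feedback so far.
  generate(prompt: string, feedback: string[]): string;
}

type Validator = (code: string) => CheckResult;

interface LoopOutcome {
  code: string;
  iterations: number;
  accepted: boolean;
}

// Generate a candidate, validate it, and retry with accumulated feedback
// until validation passes or the iteration budget runs out.
function runAgenticLoop(
  agent: Agent,
  validate: Validator,
  prompt: string,
  maxIterations = 5
): LoopOutcome {
  const feedback: string[] = [];
  let code = "";
  for (let i = 1; i <= maxIterations; i++) {
    code = agent.generate(prompt, feedback);
    const result = validate(code);
    if (result.passed) {
      return { code, iterations: i, accepted: true };
    }
    feedback.push(result.feedback);
  }
  // Budget exhausted: return the last candidate, marked as not accepted.
  return { code, iterations: Math.max(maxIterations, 0), accepted: false };
}
```

The iteration cap is a design choice in this sketch: it keeps a loop that never converges from running indefinitely and hands the last attempt back to a human instead.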

Project Flowerbed Shows What AI-Built WebXR Can Do

To showcase the new capabilities, Meta revisited its 2022 VR gardening demo, Project Flowerbed, which originally relied on tens of thousands of lines of hand-written code. Using existing art assets but rebuilding the logic through IWSDK’s AI workflow, Meta says the experience was recreated in only 15 hours. The company presents this as proof that the system is capable of more than prototyping or cosmetic tweaks: it can deliver a complete, interactive, web-based VR experience driven by WebXR. For teams used to months of development and debugging, compressing that effort into hours reframes what’s possible on tight timelines or limited budgets. It also highlights how higher-level IWSDK components, such as ready-made interaction patterns and spatial UI, combine with AI agents to remove much of the friction that previously made advanced WebXR development a specialist pursuit.

Lowering Barriers for Non-Technical Creators and Small Teams

By turning IWSDK into a kind of no-code VR builder, Meta is directly targeting designers, artists, and small studios that may lack deep engineering resources. Instead of wrestling with low-level APIs and complex build systems, these creators can focus on narrative, interaction design, and visual style, while AI handles scaffolding, wiring, and iteration. The web-native deployment model reinforces this accessibility: experiences can be tested instantly in a browser, shared via a single link, and accessed across desktop and VR headsets without traditional distribution hurdles. Meta notes that WebXR content on its Quest platform already draws more than one million monthly users, suggesting a growing audience for web-based VR experiences. For educators, marketers, or indie storytellers, the combination of open-source tooling, AI-powered VR creation, and frictionless web delivery could dramatically lower the threshold for experimenting with immersive media.

An Open-Source Foundation for the Immersive Web

Crucially, IWSDK remains an open-source project under an MIT license, with source code available on GitHub for anyone to inspect, extend, or fork. That openness aligns with Meta’s stated goal of making immersive web development more accessible, while still giving advanced developers room to customize or contribute. Because the framework abstracts common VR tasks (movement, hand interactions, physics, spatial UI), it doubles as both a learning environment and a production-ready toolkit. The new agentic AI layer builds on this foundation rather than replacing it, offering a productivity boost without locking creators into a closed ecosystem. As WebXR matures and more browsers and devices support it, a widely available, AI-augmented SDK could become a de facto starting point for web-based VR experiences. Meta’s latest update signals that the future of immersive content creation may be as much about prompting and iterating as it is about traditional coding.