Why AI Productivity Claims Need Proof, Not Hype

Engineering leaders are hearing bold claims about the ROI of AI coding tools: 55% faster task completion in trials, 25–30% projected productivity gains in forecasts, and mixed self-reported benefits from teams. These figures describe different things (controlled experiments, future predictions, and current sentiment), so they can’t be used interchangeably. Recent research also shows that AI adoption can correlate with higher throughput but lower stability, and in some contexts with slower completion times for experienced engineers. The net effect is a widening measurement gap: leaders feel pressure to justify AI investments but lack rigorous productivity and velocity metrics that connect AI usage to real outcomes. To move beyond anecdotes, organisations need a repeatable way to validate whether AI is accelerating delivery, degrading reliability, or simply shifting work around. That starts with grounding AI evaluation in DORA metrics and commit-level analysis, not in marketing promises.

Use DORA Metrics as the Spine of Your AI Measurement Strategy

DORA’s framework gives engineering leaders a common language for measuring software delivery performance: deployment frequency, lead time for changes, change failure rate, and time to restore service. DORA’s latest report on the ROI of AI-assisted development extends this by mapping how AI-driven improvements in these metrics can translate into business value. Crucially, it treats AI as an amplifier of existing systems. If your version control practices, internal platform, and workflow clarity are weak, AI may create local speed-ups that vanish in downstream chaos. If your foundations are strong, AI can enhance throughput while maintaining or even improving quality. DORA recommends tracing value flow from technical capabilities through delivery metrics into non-financial outcomes like developer and user experience, and finally into cost savings and revenue. For leaders, this means measuring the ROI of AI coding tools by how they move DORA metrics over time, not by how much code they generate.
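
To make the four metrics concrete, here is a minimal Python sketch that computes them from a list of deployment records. The `Deployment` fields, the `dora_metrics` function, and the sample data are hypothetical names chosen for illustration; real definitions (for example, what counts as a failed change) vary by organisation and tooling.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape; adapt to whatever your CI/CD system exports.
    committed_at: datetime                  # first commit of the change
    deployed_at: datetime                   # when the change reached production
    failed: bool = False                    # change caused a production failure
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments: list[Deployment], window_days: int) -> dict:
    """Summarise the four DORA metrics over one observation window."""
    if not deployments:
        raise ValueError("need at least one deployment")
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    restore_times = [
        d.restored_at - d.deployed_at for d in failures if d.restored_at is not None
    ]
    return {
        "deployment_frequency_per_day": len(deployments) / window_days,
        "median_lead_time_hours": median(lt.total_seconds() for lt in lead_times) / 3600,
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore_hours": (
            median(rt.total_seconds() for rt in restore_times) / 3600
            if restore_times
            else None
        ),
    }


# Illustrative data only: four deployments in a two-day window, one of which failed.
t0 = datetime(2024, 1, 1)
sample = [
    Deployment(t0, t0 + timedelta(hours=10)),
    Deployment(t0, t0 + timedelta(hours=20), failed=True,
               restored_at=t0 + timedelta(hours=22)),
    Deployment(t0, t0 + timedelta(hours=30)),
    Deployment(t0, t0 + timedelta(hours=40)),
]
metrics = dora_metrics(sample, window_days=2)
```

Running the same function over a pre-adoption window and a post-adoption window gives a like-for-like comparison that does not depend on how much code was generated.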

Build Strong Engineering Foundations Before Scaling AI

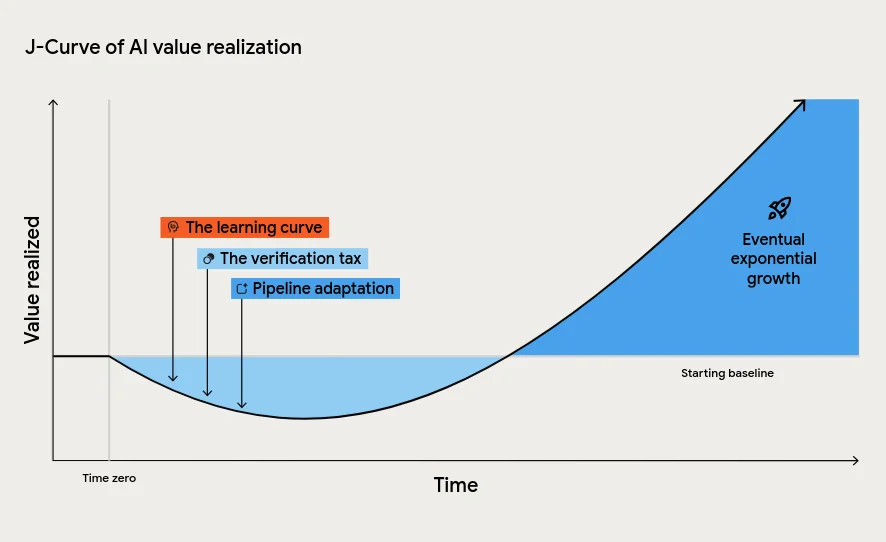

DORA’s research emphasises that AI will not fix broken systems; it magnifies what already exists. High-performing teams with robust CI/CD, automated testing, and small-batch delivery see AI accelerate an already healthy pipeline. Struggling teams, by contrast, often experience more instability as AI increases code volume without improving verification, release, and operations practices. The report describes a J-curve of value realisation: most organisations face an initial productivity dip as they absorb the learning curve, pay a verification tax to review AI-generated code, and adapt downstream processes. Leaders who misread this dip as failure and pull the plug prematurely forfeit long-term gains. Instead, leaders should invest in platform quality, clear workflows, and AI-accessible internal data while deliberately managing the instability tax. Only when these foundations are in place can you trust that measured improvements in engineering velocity represent sustainable gains, not fragile spikes.
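
One way to avoid misreading the J-curve is to describe the dip explicitly instead of judging adoption on a single snapshot. The sketch below is an illustrative assumption, not a method from the DORA report: it takes a pre-adoption baseline for any higher-is-better metric (weekly merged changes, say) and reports how deep the dip was and when the metric returned to baseline. The function name and sample values are hypothetical.

```python
from typing import Optional


def j_curve_summary(baseline: float, weekly_values: list[float]) -> dict:
    """Describe the dip and recovery of a higher-is-better metric after adoption."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    if not weekly_values:
        raise ValueError("need at least one weekly value")

    # Index of the lowest weekly value (the bottom of the curve).
    trough_index = min(range(len(weekly_values)), key=weekly_values.__getitem__)
    trough = weekly_values[trough_index]

    # First week at or after the trough where the metric is back at baseline.
    # If the metric never dipped, this is simply the trough week itself.
    recovery_index: Optional[int] = next(
        (i for i in range(trough_index, len(weekly_values))
         if weekly_values[i] >= baseline),
        None,
    )
    return {
        "dip_depth": max(0.0, (baseline - trough) / baseline),  # 0.25 means 25% below baseline
        "trough_week": trough_index + 1,                        # weeks are 1-indexed
        "recovery_week": None if recovery_index is None else recovery_index + 1,
    }


# Illustrative numbers only: baseline of 20 merged changes per week before adoption.
summary = j_curve_summary(20, [18, 15, 16, 19, 21, 24])
```

A `recovery_week` of `None` means the metric has not yet returned to baseline in the observed data, which is a prompt to look at verification and downstream processes before drawing conclusions about the tool.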

Combine DORA Metrics with Commit-Level Throughput Value

Traditional DORA metrics give a robust view of delivery performance, but they can miss what’s happening inside the codebase itself. New approaches like Engineering Throughput Value (ETV) aim to close this gap by scoring every commit against a pre-AI baseline. Instead of relying on surveys or generic benchmarks, teams compare their own post-AI behaviour to their own history. This helps distinguish genuine throughput gains from AI-washing: claims of productivity improvements that can’t be traced to specific workflow changes or measurable outcomes. ETV-style analysis can reveal whether AI assistance is increasing meaningful contributions or simply inflating churn and rework. For engineering leaders, combining ETV with DORA’s four key metrics provides a more complete picture: commit-level value shows what engineers are doing differently, while delivery metrics show how that translates into deployment speed and reliability. Together, they form a stronger foundation for assessing the ROI of AI coding tools.
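
The idea of comparing a team against its own pre-AI history can be sketched in a few lines of Python. This is not the ETV scoring formula, which is not described here; it substitutes a deliberately simple stand-in, the share of changed lines that rewrite recently written code, to show how raw volume and volume net of rework can diverge. The `Commit` fields and function names are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Commit:
    # Hypothetical fields; how "reworked" lines are detected is left to your tooling.
    lines_changed: int   # total lines added or modified
    lines_reworked: int  # of those, lines that rewrite recently written code


def rework_ratio(commits: list[Commit]) -> float:
    """Share of all changed lines that were rework."""
    total = sum(c.lines_changed for c in commits)
    if total == 0:
        raise ValueError("commits contain no changed lines")
    return sum(c.lines_reworked for c in commits) / total


def compare_to_baseline(pre_ai: list[Commit], post_ai: list[Commit]) -> dict:
    """Compare post-AI commits with the team's own pre-AI baseline."""
    base_volume = mean([c.lines_changed for c in pre_ai])
    new_volume = mean([c.lines_changed for c in post_ai])
    base_net = mean([c.lines_changed - c.lines_reworked for c in pre_ai])
    new_net = mean([c.lines_changed - c.lines_reworked for c in post_ai])
    return {
        "volume_change": (new_volume - base_volume) / base_volume,
        "net_volume_change": (new_net - base_net) / base_net,
        "rework_ratio_before": rework_ratio(pre_ai),
        "rework_ratio_after": rework_ratio(post_ai),
    }


# Illustrative data only.
pre_ai = [Commit(100, 10), Commit(200, 20)]
post_ai = [Commit(300, 90), Commit(300, 60)]
result = compare_to_baseline(pre_ai, post_ai)
```

In this made-up example, raw volume per commit doubles, but the rework ratio rises from 10% to 25%, so volume net of rework grows by only about two thirds. That gap between the headline number and the net number is the kind of signal a commit-level baseline is meant to surface.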

Look Beyond Cycle Time: Measuring Sustained ROI

Shorter cycle times after adopting AI coding tools are encouraging, but they don’t tell the whole story. DORA’s latest ROI model demonstrates that AI can simultaneously increase individual effectiveness and worsen system stability, leading to more incidents or rollbacks. The model even accounts for negative financial impacts when change failure rates tick up, highlighting that not all throughput gains are beneficial. Leaders should therefore track a balanced set of productivity metrics: DORA’s delivery measures, defect rates, incident duration, verification effort, and developer experience indicators such as cognitive load and satisfaction. They should also pay close attention to how quickly the organisation moves through the J-curve of adoption. The goal is not a temporary burst of speed, but a sustained improvement in engineering velocity that aligns with business value. Measure AI by the bottlenecks it removes and the stability it preserves, not just the code it helps produce.
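
A balanced view can be enforced mechanically by refusing to report a gain when a stability metric has regressed. The following Python sketch assumes a small set of metrics, a direction of improvement for each, and a 5% tolerance; all three are illustrative choices, not part of DORA’s model.

```python
# Direction in which each metric improves: +1 means higher is better, -1 means lower is better.
# Metric names and the default tolerance are illustrative assumptions.
DIRECTION = {
    "deployment_frequency": +1,
    "lead_time_hours": -1,
    "change_failure_rate": -1,
    "time_to_restore_hours": -1,
    "developer_satisfaction": +1,
}


def relative_improvement(name: str, before: float, after: float) -> float:
    """Signed relative change, where positive always means 'got better'."""
    if before == 0:
        raise ValueError(f"baseline for {name} must be non-zero")
    return DIRECTION[name] * (after - before) / before


def assess(before: dict, after: dict, tolerance: float = 0.05) -> dict:
    """Classify a before/after comparison across all tracked metrics."""
    changes = {
        name: relative_improvement(name, before[name], after[name])
        for name in DIRECTION
    }
    improved = sorted(n for n, c in changes.items() if c > tolerance)
    regressed = sorted(n for n, c in changes.items() if c < -tolerance)
    if improved and regressed:
        verdict = "mixed"
    elif regressed:
        verdict = "regressed"
    elif improved:
        verdict = "improved"
    else:
        verdict = "no material change"
    return {"verdict": verdict, "improved": improved, "regressed": regressed}


# Illustrative data only: faster delivery, but a higher change failure rate.
before = {"deployment_frequency": 1.0, "lead_time_hours": 48.0,
          "change_failure_rate": 0.10, "time_to_restore_hours": 4.0,
          "developer_satisfaction": 3.5}
after = {"deployment_frequency": 1.5, "lead_time_hours": 36.0,
         "change_failure_rate": 0.15, "time_to_restore_hours": 4.0,
         "developer_satisfaction": 3.6}
report = assess(before, after)
```

Here the comparison comes back as "mixed": deployment frequency and lead time improve, but the change failure rate regresses, which is the pattern of higher throughput with lower stability described above.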