From General-Purpose Workhorses to AI-Oriented CPUs

For years, CPUs have been designed as flexible, general-purpose processors, while GPUs dominated highly parallel AI workloads. That balance is now shifting. Arm CEO Rene Haas argues that the rise of “agentic AI” (networks of AI agents handling diverse, ongoing tasks) will push CPU core scaling into a new era. Instead of modest core-count increases each generation, future server CPUs are expected to prioritize very high core counts built for parallel workloads. The idea is not just more of the same cores, but many more energy-efficient, AI-optimized ones working in concert. As AI agents proliferate across data centers and edge devices, CPUs must handle thousands of concurrent micro-tasks, orchestration logic, and lighter-weight inference. This forces chip makers to rethink traditional CPU design philosophy and build processors that natively embrace massive parallelism rather than treating it as a niche GPU domain.

Why Extreme Core Counts Are Becoming Inevitable

Modern GPUs already pack huge numbers of cores, but they are hitting physical limits. Haas notes that flagship AI accelerators, such as Nvidia’s latest generations, are approaching the reticle limit: the maximum die area a lithography scanner can expose in a single shot, roughly 26 mm by 33 mm, or about 858 mm². CPUs, by contrast, still have room to add cores within this constraint, especially when they use modular chiplet designs and efficiency-optimized cores. Arm expects future server CPUs to double or even quadruple their core counts compared to today’s designs. In this vision, CPUs evolve from dozens to hundreds of cores, each tuned for low-power parallel execution. Such platforms suit AI agents that need many concurrent threads of execution rather than a few ultra-powerful cores, creating a compute fabric that better matches the parallel structure of modern AI workloads.

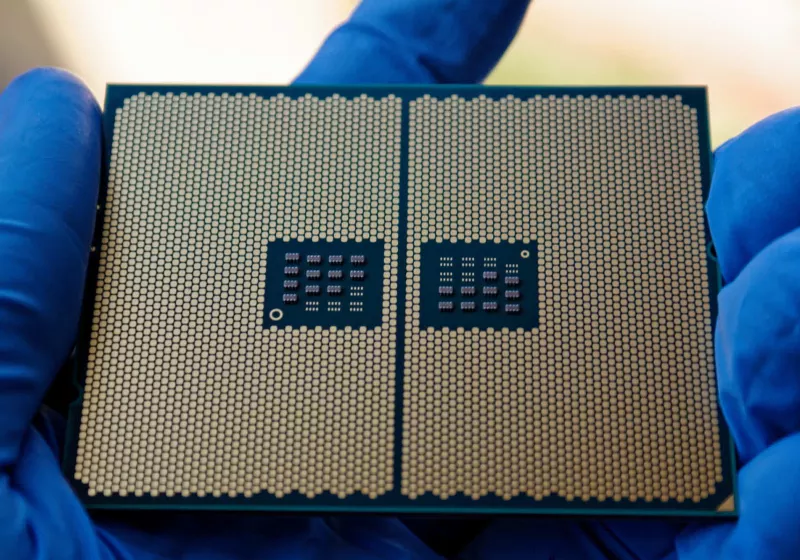

Concrete Steps Toward Hundreds of CPU Cores

Extreme CPU core scaling is not a distant theory; it is already appearing in commercial and near-term products. Arm’s recently introduced AGI CPU supports up to 126 cores, signaling how far its architecture can stretch while maintaining power efficiency. In the x86 camp, Intel has demonstrated Xeon designs with as many as 288 efficiency cores, while AMD is expected to reach up to 256 cores in future EPYC processors, with simultaneous multithreading raising the hardware thread count further. Haas envisions this trajectory continuing toward 256-core and eventually 512-core CPUs, creating processors composed of hundreds of small “brains” working together. This shift redefines what a CPU is for AI workloads: no longer just the control hub feeding data to GPUs, but a high-density parallel engine in its own right, capable of running fleets of AI agents, microservices, and inference tasks simultaneously across data center and edge deployments.

Architectural Implications for Data Center and Edge AI

As CPUs adopt extreme core count configurations, system architecture across data centers and edge environments will change significantly. Today’s model typically treats CPUs as orchestration units and GPUs as the heavy parallel engines. With aggressive CPU core scaling, more of the AI stack can execute directly on CPU clusters, reducing data movement between CPU and GPU memory. Agentic AI systems, which may spawn thousands of lightweight processes, can map naturally onto hundreds of CPU cores, improving responsiveness and isolation. Edge devices, powered by efficient Arm-based CPUs, can run multiple local agents without relying as heavily on cloud GPUs. This evolution will blur the traditional CPU–GPU divide: GPUs will remain key for dense matrix operations, but AI systems will increasingly rely on CPU fabrics as scalable, parallel backbones. The result is a more balanced, heterogeneous compute landscape tuned for AI-first workloads.
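
To make the idea of mapping thousands of lightweight agent tasks onto hundreds of cores more concrete, here is a minimal back-of-the-envelope sketch in Python. It is a toy model, not a description of any real scheduler or of Arm's, Intel's, or AMD's hardware: the function name `schedule_agents`, the task costs, and the 64- and 128-core data points are all illustrative assumptions (only the 256- and 512-core figures come from the article). It assigns each task to the least-loaded core and reports how long the whole batch would take.

```python
import heapq
import random


def schedule_agents(task_costs, num_cores):
    """Greedily assign each task to the currently least-loaded core.

    Hypothetical helper for illustration only. Returns the per-core
    load list; max(loads) is the time at which the batch finishes.
    """
    if num_cores < 1:
        raise ValueError("num_cores must be at least 1")
    # Min-heap of (accumulated_load, core_id): least-loaded core on top.
    heap = [(0.0, core) for core in range(num_cores)]
    heapq.heapify(heap)
    loads = [0.0] * num_cores
    # Placing the longest tasks first usually balances the cores better.
    for cost in sorted(task_costs, reverse=True):
        load, core = heapq.heappop(heap)
        load += cost
        loads[core] = load
        heapq.heappush(heap, (load, core))
    return loads


if __name__ == "__main__":
    rng = random.Random(0)
    # Assumed workload: 4,000 agent micro-tasks costing 1 to 20 ms each.
    tasks = [rng.uniform(1.0, 20.0) for _ in range(4000)]
    for cores in (64, 128, 256, 512):
        makespan = max(schedule_agents(tasks, cores))
        print(f"{cores:4d} cores: batch finishes in about {makespan:.1f} ms")
```

Under these assumptions, batch completion time falls roughly in proportion to core count as long as there are many more tasks than cores, which is the regime the agentic AI argument depends on. The model ignores memory bandwidth, cache contention, and inter-core communication, all of which would limit scaling on real hardware.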