Why Build a Local AI Coding Assistant at All?

A local AI coding assistant is essentially a private pair programmer that lives entirely on your machine. Instead of sending your code to a cloud service, you run the model yourself, so prompts, source files, and responses stay on-device. That matters for teams working with sensitive or proprietary code, where sending snippets to external servers is a non-starter. A local setup also removes recurring subscription costs. Once the model is downloaded, your hardware is the only limit on usage, with no API quotas or surprise bills. And because inference runs locally, you keep full AI assistance when offline, whether on a plane or in a network-restricted environment. Cloud tools evolve quickly and offer more polished experiences. A local assistant trades some of that convenience for privacy, control, and long-term flexibility, which makes it especially appealing for regulated industries and cautious engineering teams.

Inside the OpenCode + Ollama + Qwen3-Coder Stack

OpenCode, Ollama, and Qwen3-Coder combine into a free local AI coding stack that behaves much like a cloud assistant but runs entirely on your computer. OpenCode is the front end: an open-source coding assistant that can run in your terminal, in your IDE, or as a desktop app. It can navigate your project tree, read and write files, run commands, and work with Git through a text-based interface. Ollama is the model manager. It downloads, versions, and runs large language models with simple commands, and it serves them to clients such as OpenCode over a local HTTP API. Qwen3-Coder is the coding brain, a model tuned for code generation, completion, and repair. It is notable for its native 256K-token context window, which lets it reason over very large files or even a small project at once. Together, they form a local AI coding assistant with strong code understanding, full privacy, and offline code generation.

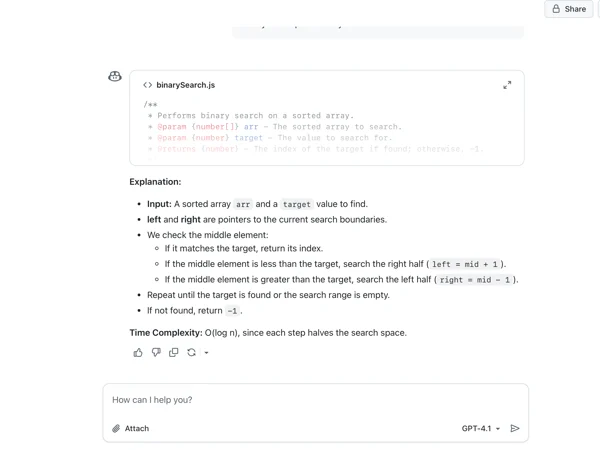

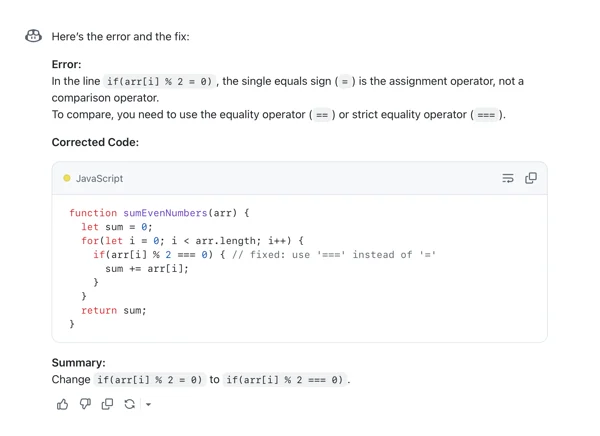
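To make the division of labor concrete, here is a minimal Python sketch of the layer OpenCode sits on: a client sending a prompt to Ollama's HTTP API, which runs Qwen3-Coder locally. It assumes Ollama is listening on its default address (`http://localhost:11434`) and that the model was pulled under the tag `qwen3-coder`. The `num_ctx` value, the system prompt, and the helper names are illustrative choices. OpenCode's own requests may be shaped differently.

```python
import json
import urllib.request

# Assumptions (not from the article): Ollama's default local address and the
# "qwen3-coder" model tag; adjust both to match your installation.
OLLAMA_URL = "http://localhost:11434/api/chat"
MODEL = "qwen3-coder"


def build_chat_request(prompt: str, num_ctx: int = 32768) -> dict:
    """Build a non-streaming /api/chat payload for a single user prompt.

    num_ctx sets the context window Ollama allocates for this request;
    the default here is an illustrative value, not a recommendation.
    """
    return {
        "model": MODEL,
        "messages": [
            {"role": "system", "content": "You are a concise coding assistant."},
            {"role": "user", "content": prompt},
        ],
        "stream": False,
        "options": {"num_ctx": num_ctx},
    }


def send_chat_request(payload: dict, url: str = OLLAMA_URL, timeout: float = 300.0) -> str:
    """POST the payload to a running Ollama server and return the reply text."""
    data = json.dumps(payload).encode("utf-8")
    req = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}, method="POST"
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    # A non-streaming /api/chat response carries the reply under message.content.
    return body["message"]["content"]
```

With the server running and the model pulled, `send_chat_request(build_chat_request("Write a Python function that reverses a linked list"))` would return the model's answer as a string. The only network hop is to localhost.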

What Developers Expect: Copilot, ChatGPT and the Local Alternative

GitHub Copilot and ChatGPT have shaped expectations for AI pair programming tools. Copilot excels at inline autocompletion and real-time suggestions inside your IDE, speeding up active development with context-aware snippets as you type. ChatGPT, by contrast, shines as a conversational partner: it explains code, debugs errors, and generates full scripts or components from natural-language prompts, often walking through the logic step by step. A local OpenCode + Ollama + Qwen3-Coder setup aims to blend both modes. It offers in-editor assistance through OpenCode's IDE integration, plus chat-style help for refactors, documentation, and debugging. It may lack some of the polish and multi-model choice that Copilot and ChatGPT offer, but the workflow of asking questions, getting code, refining, and iterating feels familiar. The main difference is that all of this happens locally, with your project context staying on your machine.
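The ask, refine, iterate loop maps onto a simple client-side pattern. Ollama's `/api/chat` endpoint does not remember earlier calls, so a chat client keeps the conversation itself and resends it on every turn. The Python sketch below shows that bookkeeping. `ChatSession` and the injected `transport` callable are hypothetical names, and the `qwen3-coder` model tag is an assumption.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Message = Dict[str, str]


@dataclass
class ChatSession:
    """Client-side conversation state for a stateless chat endpoint."""

    model: str = "qwen3-coder"  # assumed Ollama model tag
    messages: List[Message] = field(default_factory=list)

    def ask(self, prompt: str, transport: Callable[[dict], str]) -> str:
        """Send one user turn plus all earlier turns, then record the reply."""
        self.messages.append({"role": "user", "content": prompt})
        payload = {
            "model": self.model,
            "messages": list(self.messages),  # snapshot of the full history
            "stream": False,
        }
        reply = transport(payload)
        self.messages.append({"role": "assistant", "content": reply})
        return reply
```

In practice `transport` would wrap an HTTP POST to the local Ollama server. Injecting it keeps the history logic testable without a running model. Because the full history is resent each turn, long sessions steadily consume the context window.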

Latency, Accuracy, Context and Language Support

In day-to-day development, performance and quality matter as much as privacy. A local Qwen3-Coder assistant run through Ollama can feel responsive because there is no network round-trip, especially for short prompts and small refactors. Actual throughput, though, depends heavily on your GPU and memory, and a large model on modest hardware can be noticeably slower than a cloud service. Its 256K-token context window is a major advantage: you can feed in entire files or a small project, enabling wide-scope refactors and consistent naming across modules. To use that window, you have to raise Ollama's `num_ctx` setting, which defaults to a far smaller value, and a larger window consumes more memory. Cloud tools like GitHub Copilot and ChatGPT benefit from access to multiple cutting-edge models and continuous optimization. This can translate into stronger accuracy across a broad range of languages and patterns, and both support most mainstream languages and stacks. A local Qwen3-Coder setup is strongest where it can see and reason about your entire codebase and tests at once. Cloud tools tend to be better at niche frameworks, fast-moving ecosystems, and tasks that use multimodal inputs or external tools.
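You can check both the latency and the context claims on your own machine. The Python sketch below assumes a finished, non-streaming Ollama response. Its timing fields (`eval_count`, `eval_duration`, `prompt_eval_count`, `prompt_eval_duration`, `total_duration`) report durations in nanoseconds. The four-characters-per-token estimate is a rough rule of thumb, not Qwen3-Coder's actual tokenizer. The 4,096-token output reserve is an arbitrary illustrative default, and the function names are hypothetical.

```python
from typing import Any, Dict, Iterable

NS_PER_S = 1e9  # Ollama reports durations in nanoseconds


def estimate_tokens(text: str) -> int:
    """Rough token estimate using ~4 characters per token (rule of thumb only)."""
    return (len(text) + 3) // 4  # round up


def fits_in_context(texts: Iterable[str], num_ctx: int, reserve_for_output: int = 4096) -> bool:
    """Guess whether the prompt texts fit, leaving room for the model's answer."""
    needed = sum(estimate_tokens(t) for t in texts)
    return needed + reserve_for_output <= num_ctx


def generation_stats(response: Dict[str, Any]) -> Dict[str, float]:
    """Derive throughput from the timing fields of a finished Ollama response."""
    stats: Dict[str, float] = {}
    if response.get("eval_duration"):
        # eval_* fields cover the tokens the model generated.
        seconds = response["eval_duration"] / NS_PER_S
        stats["output_tokens_per_s"] = response.get("eval_count", 0) / seconds
    if response.get("prompt_eval_duration"):
        # prompt_eval_* fields cover processing of the input prompt.
        seconds = response["prompt_eval_duration"] / NS_PER_S
        stats["prompt_tokens_per_s"] = response.get("prompt_eval_count", 0) / seconds
    if "total_duration" in response:
        stats["total_s"] = response["total_duration"] / NS_PER_S
    return stats
```

Running `generation_stats` on real responses gives output and prompt tokens per second, which helps when comparing model sizes or quantizations on the same hardware. `fits_in_context` is only a pre-flight estimate. Exact counts would need the model's own tokenizer.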

Workflows, Privacy Benefits and Key Trade-offs

In practical workflows, you might connect OpenCode to your IDE, then route its prompts through Ollama to Qwen3-Coder. From there, you can ask for inline refactors, unit test generation, or help repairing broken functions, all without your code leaving the device. This is particularly compelling for teams with strict privacy or compliance rules: no source files need to be transmitted to third-party servers, and you maintain full control over which models run and how they’re updated. The trade-offs are real, though. Local models are constrained by your hardware and may lag behind the latest commercial models in raw capability and convenience. You also rely more on community support and DIY configuration instead of polished, vendor-managed experiences. For greenfield design, learning a new framework, or complex research tasks, it can still make sense to fall back to cloud assistants while keeping the most sensitive code paths strictly local.
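The hybrid approach described above can be written down as an explicit policy. This Python sketch is hypothetical throughout: the glob patterns, the `prefer_cloud` flag, and the function names are illustrative and are not part of OpenCode or Ollama. Files that match a sensitive pattern always go to the local backend. The policy also refuses to proceed if the supposedly local endpoint is not a loopback address, so a misconfigured URL cannot silently ship sensitive code off the machine.

```python
import fnmatch
import ipaddress
from typing import Sequence
from urllib.parse import urlparse

# Hypothetical policy: glob patterns for code that must never leave the machine.
SENSITIVE_PATTERNS = ("src/billing/*", "*.pem", "config/secrets/*")


def is_loopback_url(url: str) -> bool:
    """True if the URL points at localhost or a loopback IP such as 127.0.0.1 or ::1."""
    host = urlparse(url).hostname  # lowercased, brackets stripped from IPv6
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        return ipaddress.ip_address(host).is_loopback
    except ValueError:
        return False  # any other hostname is treated as non-local


def choose_backend(path: str, local_url: str, prefer_cloud: bool,
                   patterns: Sequence[str] = SENSITIVE_PATTERNS) -> str:
    """Return "local" or "cloud"; sensitive paths are always forced to "local"."""
    sensitive = any(fnmatch.fnmatch(path, p) for p in patterns)
    backend = "cloud" if prefer_cloud and not sensitive else "local"
    # Refuse to send anything "local" to an endpoint that is not on this machine.
    if backend == "local" and not is_loopback_url(local_url):
        raise ValueError(f"local endpoint is not a loopback address: {local_url}")
    return backend
```

`fnmatch` patterns let `*` match `/` as well, so `src/billing/*` also covers nested files under that directory.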