What Is Gemini Intelligence on Android?

Gemini Intelligence is Google’s new AI layer for Android, built into the operating system and designed to make your smartphone far more proactive. Instead of waiting for you to open an app and type a prompt, Gemini Intelligence can read context from your screen, understand what you are trying to achieve, and coordinate multiple apps to get it done. Google frames this as a shift from simple voice assistants to full AI automation on smartphones, where the system can anticipate needs and assist across apps, devices, and services. It connects with existing Android AI features such as Personal Intelligence, which, once you opt in, lets Gemini use information from apps like Gmail, Photos, YouTube, and Search. The goal is to turn your phone into a proactive AI assistant that quietly prepares the next step for you while keeping you in final control of what actually happens.

How Proactive AI Automation Works on Your Phone

The signature capability of Gemini Intelligence is app automation. You can long-press the power button and ask Gemini to handle multi-step tasks that normally require jumping between different apps. Early examples focus on everyday food, grocery, and rideshare apps. Gemini can create a shopping cart from a grocery list, reorder a favorite meal, book a ride, or even find and book a tour based on a photo of a brochure. While these Android AI features operate proactively across apps, Google still keeps humans in the loop: you can watch progress through live notifications, and purchases or bookings require your confirmation before they complete. This approach makes Gemini Intelligence feel less like a chatbot and more like a background agent that prepares everything for you, then waits for your approval before committing to any action.
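
To make the "prepare, then wait for approval" pattern concrete, here is a minimal Python sketch. It is not Google’s implementation, and `Step`, `run_task`, `notify`, and `confirm` are hypothetical names chosen for illustration. Preparatory steps run freely and report progress (standing in for live notifications), while any step marked as committing, such as placing an order, runs only if the user approves it.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    """One hypothetical step in a multi-app task."""
    description: str
    commits: bool = False  # True for purchases or bookings that need approval


def run_task(steps: List[Step],
             notify: Callable[[str], None],
             confirm: Callable[[Step], bool]) -> List[str]:
    """Run preparatory steps, but stop at any committing step the user declines."""
    completed: List[str] = []
    for step in steps:
        # Committing steps only proceed if the user explicitly approves them.
        if step.commits and not confirm(step):
            notify(f"Cancelled before: {step.description}")
            break
        notify(f"Done: {step.description}")  # stands in for a live notification
        completed.append(step.description)
    return completed


if __name__ == "__main__":
    steps = [
        Step("Open the grocery app"),
        Step("Add items from the list to the cart"),
        Step("Place the order", commits=True),
    ]
    updates: List[str] = []
    # Simulate a user who declines the purchase.
    done = run_task(steps, notify=updates.append, confirm=lambda s: False)
    print(done)         # ['Open the grocery app', 'Add items from the list to the cart']
    print(updates[-1])  # Cancelled before: Place the order
```

The design choice worth noting is that the approval check sits inside the loop, immediately before the committing step, so everything up to that point can be prepared in the background without any money changing hands.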

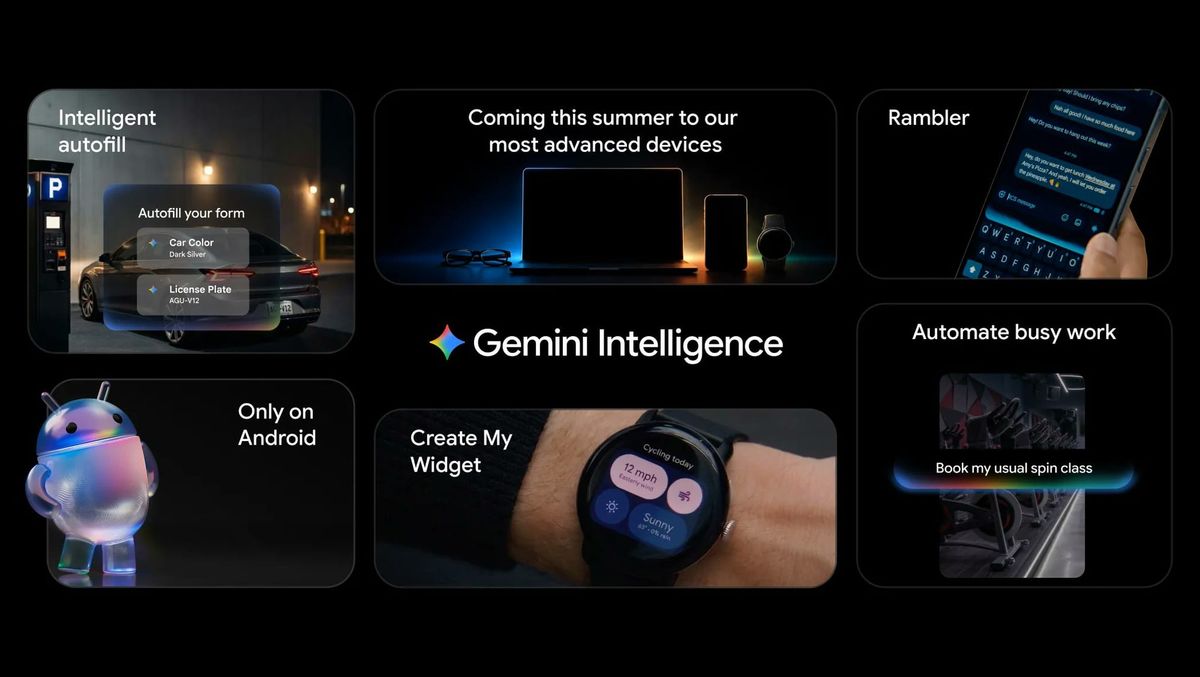

Beyond Chat: Chrome, Widgets, and Smarter Typing

Gemini Intelligence goes beyond voice requests and chat windows by weaving AI automation into Android tools you already use. Gemini in Chrome, built on Gemini 3.1, is coming to select devices to help summarize web pages, answer questions about what you are reading, and connect with Google apps; for eligible subscribers, it can also run auto browse tasks such as reserving parking or updating an order. On the keyboard side, Rambler in Gboard turns natural speech into polished written text, even when you mix multiple languages in a single message. Another feature, Create My Widget, lets you describe the widget you want (for example, a weather widget focused on rain and wind, or a meal-prep dashboard) and have Android generate it for your phone or watch. Together, these tools move your phone from a reactive assistant to a proactive one that shapes apps and content around your needs.

Privacy, Control, and Security for AI Automation

Because Gemini Intelligence is embedded so deeply in Android, Google emphasizes privacy and control as core design principles. You choose which apps Gemini automation can work with, and every purchase still requires your confirmation. A new view in Android’s Privacy Dashboard will show which AI assistants were active and which apps they accessed in the last 24 hours, giving you better visibility into how AI automation operates behind the scenes. Google also highlights a stack of security technologies supporting these Android AI features, including Private Compute Core, Private AI Compute, protected KVM (pKVM), and defenses against prompt-injection attacks that try to manipulate AI behavior. Connecting Gemini to your personal data from Google apps remains opt-in, and Gemini’s integration with Autofill is designed to handle more complex forms while limiting data sharing. The result is an AI-first Android experience that still keeps users in charge of their information.
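
The Privacy Dashboard view described here boils down to a time-window summary: which assistants touched which apps in the past 24 hours. The Python sketch below illustrates that idea only; `AccessEvent` and `recent_ai_access` are hypothetical names, and this is not the Android API or how the dashboard is actually built.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List, Set


@dataclass
class AccessEvent:
    """Hypothetical record of an AI assistant accessing an app."""
    assistant: str
    app: str
    timestamp: datetime


def recent_ai_access(events: List[AccessEvent],
                     now: datetime,
                     window: timedelta = timedelta(hours=24)) -> Dict[str, Set[str]]:
    """Map each assistant to the apps it accessed within `window` before `now`."""
    cutoff = now - window
    summary: Dict[str, Set[str]] = {}
    for event in events:
        # Keep only events inside the look-back window (inclusive bounds).
        if cutoff <= event.timestamp <= now:
            summary.setdefault(event.assistant, set()).add(event.app)
    return summary


if __name__ == "__main__":
    now = datetime(2025, 7, 1, 12, 0)  # arbitrary example time
    events = [
        AccessEvent("Gemini", "Gmail", now - timedelta(hours=2)),
        AccessEvent("Gemini", "Photos", now - timedelta(hours=30)),  # outside window
    ]
    print(recent_ai_access(events, now))  # {'Gemini': {'Gmail'}}
```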

Which Devices Get Gemini Intelligence First?

Gemini Intelligence will debut on the latest Samsung Galaxy and Google Pixel phones starting this summer, putting those devices at the front of Google’s shift toward proactive AI assistants. Later in the year, the experience will expand to more Android devices, including not just phones but also watches, cars, glasses, and laptops. That means the same automation that books a ride on your phone could eventually coordinate navigation in your car or surface relevant information on your wearable. Gemini in Chrome will arrive at the end of June on select U.S. devices running Android 12 or higher with at least 4GB of RAM and English (US) as the system language, initially offering auto browse to AI Pro and AI Ultra subscribers. Over time, this broader ecosystem could turn Android into a platform where AI agents move seamlessly across every screen you use.
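
The Gemini in Chrome launch requirements amount to a simple checklist. The Python sketch below encodes them as stated above, assuming a hypothetical `DeviceInfo` record and helper names that are not part of any Google API. Because the rollout targets "select" devices, passing these checks should be read as a minimum bar, not a guarantee of availability.

```python
from dataclasses import dataclass


@dataclass
class DeviceInfo:
    """Hypothetical snapshot of the device properties named in the launch criteria."""
    country: str          # country code, e.g. "US"
    android_version: int  # major Android release, e.g. 14
    ram_gb: float         # installed RAM in gigabytes
    system_language: str  # language tag, e.g. "en-US"
    plan: str = "free"    # hypothetical subscription label: "free", "AI Pro", "AI Ultra"


def meets_chrome_launch_criteria(d: DeviceInfo) -> bool:
    """Stated minimums only; 'select devices' means passing is not a guarantee."""
    return (d.country == "US"
            and d.android_version >= 12
            and d.ram_gb >= 4
            and d.system_language == "en-US")


def auto_browse_available(d: DeviceInfo) -> bool:
    """Auto browse is initially limited to AI Pro and AI Ultra subscribers."""
    return meets_chrome_launch_criteria(d) and d.plan in {"AI Pro", "AI Ultra"}


if __name__ == "__main__":
    phone = DeviceInfo("US", 14, 8, "en-US")
    print(meets_chrome_launch_criteria(phone))  # True
    print(auto_browse_available(phone))         # False (no AI Pro or AI Ultra plan)
```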