From Static Arrow to Contextual Agent

For more than half a century, the mouse cursor has been a passive arrow: it tracks coordinates, not meaning. Google DeepMind’s Magic Pointer challenges that assumption by integrating Gemini directly into the cursor, so that the pointer understands what is on screen and what the user likely wants to do. Instead of living in a separate chatbot window, Gemini follows the pointer into whatever app the user is working in. The cursor already signals intent (what you are reading, selecting, or about to click), but historically the system could not interpret that intent. Magic Pointer reframes the cursor as a contextual pointer that combines on-screen visuals, interface elements, and short voice commands, laying the groundwork for a new interaction layer on top of traditional point-and-click.

How Gemini Cursor Control Works in Practice

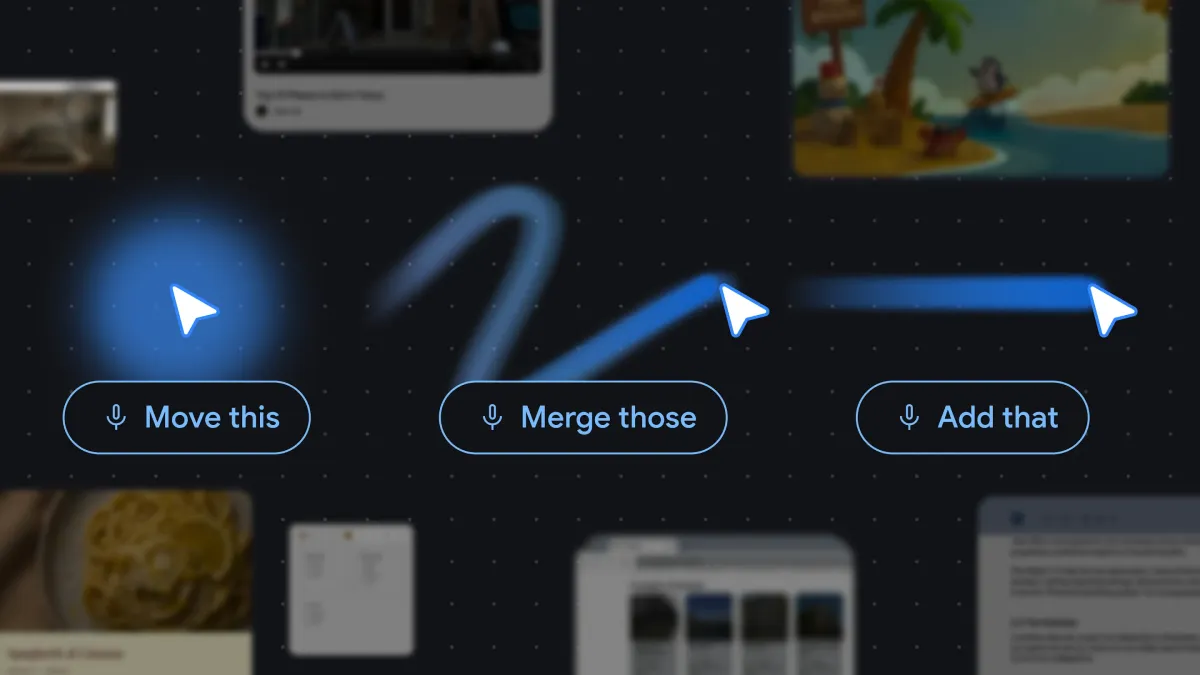

Magic Pointer’s core innovation is letting users point, speak, and get help without crafting elaborate prompts. Hover over a table of statistics in Chrome and the pointer can suggest converting it into a pie chart; highlight a recipe and ask to “double these ingredients,” and Gemini treats the text as an actionable entity rather than static pixels. In one demo, a user hovers over a crab and says “move this here,” and the system infers what “this” and “here” refer to, then rearranges the elements accordingly. The cursor is paired with the microphone, so short phrases like “fix this” or “what does this mean?” can be grounded in the exact interface element under the pointer. By fusing semantic understanding with pointer position, Magic Pointer keeps AI assistance inside the flow of work.
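
The grounding step described above can be pictured with a small sketch. It is not DeepMind's implementation, which has not been published in this form; every name here (`Element`, `element_under_pointer`, `ground_command`, `DEICTIC_WORDS`) is hypothetical, and the sketch assumes the screen is already available as a list of labeled rectangles. It only shows the basic idea of pairing a short spoken phrase with whatever sits under the pointer.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Element:
    """A hypothetical on-screen element with a bounding box in pixels."""
    name: str
    kind: str
    x: int
    y: int
    width: int
    height: int

    def contains(self, px: int, py: int) -> bool:
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)


def element_under_pointer(elements: Sequence[Element],
                          px: int, py: int) -> Optional[Element]:
    """Return the smallest element containing the pointer, or None."""
    hits = [e for e in elements if e.contains(px, py)]
    if not hits:
        return None
    # Prefer the most specific (smallest-area) element under the pointer.
    return min(hits, key=lambda e: e.width * e.height)


# Assumed shorthand vocabulary; a real system would rely on a language model.
DEICTIC_WORDS = {"this", "that", "these", "those"}


def ground_command(utterance: str, elements: Sequence[Element],
                   px: int, py: int) -> dict:
    """Attach the element under the pointer to a short spoken phrase."""
    target = element_under_pointer(elements, px, py)
    words = {w.strip("?!.,") for w in utterance.lower().split()}
    return {
        "utterance": utterance,
        "uses_deixis": bool(words & DEICTIC_WORDS),
        "target": target.name if target else None,
    }


if __name__ == "__main__":
    screen = [
        Element("page", "document", 0, 0, 1000, 800),
        Element("stats_table", "table", 100, 100, 400, 200),
    ]
    print(ground_command("fix this", screen, 150, 150))
```

In this toy version, “this” resolves to the table because it is the smallest rectangle under the pointer; resolving a second reference such as “here” would need a second pointer position, which the sketch leaves out.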

Redesigning Desktop Interaction Around Context

DeepMind’s researchers describe Magic Pointer as a fundamental rethinking of desktop interaction rather than a mere shortcut. Their principles center on maintaining user flow, supporting “show and tell” instead of verbose prompts, and embracing natural shorthand like “this” and “that.” AI no longer waits behind a chat icon; it sits at the operating layer, watching context and acting inside current tasks. A user might point at a date and request a calendar entry, or hover over an image and ask for an edit, all without changing windows. Compared with the 1970s cursor, which only understood X/Y coordinates, the 2026-style contextual mouse pointer combines visual semantics, app state and user intent. This shift brings AI much closer to Doug Engelbart’s vision of more natural human-computer cooperation, turning the cursor from a static selector into an active collaborator across the desktop.

Privacy, Latency and Trust as Adoption Gatekeepers

For Magic Pointer to move from demo to mainstream, privacy, speed, and accuracy will be decisive. Because the system effectively “watches” what is under the cursor and listens for commands, users will want clear guarantees about how on-screen content and voice snippets are processed and stored. Latency is another critical factor: the traditional cursor feels instant, so any noticeable delay from Gemini could break the illusion of seamless control. Accuracy might matter even more. A slightly off summary is tolerable, but a misinterpreted “delete this” inside email or work tools is far riskier. DeepMind acknowledges that the model must understand not only pixels but also which actions are allowed in each context. The success of Magic Pointer will ultimately hinge on whether it can deliver reliable help without compromising trust or slowing down everyday workflows.
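
One way to picture the "which actions are allowed in each context" requirement is a simple gate in front of every action. The sketch below is purely illustrative: the action table, the `DESTRUCTIVE` set, the confidence threshold, and the `decide` function are all assumptions made for this example, not anything Google has described.

```python
from enum import Enum


class Decision(Enum):
    RUN = "run"          # safe to execute immediately
    CONFIRM = "confirm"  # ask the user before executing
    REJECT = "reject"    # action is not valid for this element


# Hypothetical mapping from element kind to the actions it supports.
ALLOWED_ACTIONS = {
    "table": {"summarize", "chart"},
    "email": {"summarize", "reply", "delete"},
}

# Hypothetical set of actions that are hard to undo.
DESTRUCTIVE = {"delete"}


def decide(kind: str, action: str, confidence: float,
           threshold: float = 0.9) -> Decision:
    """Gate an interpreted command before it touches user data."""
    if action not in ALLOWED_ACTIONS.get(kind, set()):
        return Decision.REJECT
    # Destructive or low-confidence interpretations always need a confirmation.
    if action in DESTRUCTIVE or confidence < threshold:
        return Decision.CONFIRM
    return Decision.RUN


if __name__ == "__main__":
    print(decide("email", "delete", 0.99))
    print(decide("table", "chart", 0.95))
```

The design choice in this sketch is that a misheard “delete this” can never run silently: even at high confidence it falls into the confirmation path, while harmless actions such as charting a table proceed without interruption.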

Implications for Chrome, Laptops and Beyond

Google’s experimental demos position Magic Pointer as a central feature for Chrome and its Googlebook laptop concept, hinting at how deeply this AI layer could be woven into future devices. Inside Chrome, a contextual mouse pointer could turn any web page into an interactive workspace: summarizing PDFs, restructuring tables, or generating email-ready bullet points directly from on-screen content. On laptops, embedding Gemini at the cursor level suggests a future where the line between operating system and assistant blurs, with AI quietly mediating most interactions. If successful, this model could influence other platforms to treat the cursor—or its equivalent—as an always-on AI agent rather than a mere pointer. In that scenario, pointing and speaking might become as fundamental as clicking and typing, redefining how people navigate software across browsers, desktops, and potentially other operating systems.