Why Real-Time Voice Models Matter for Developers

OpenAI’s latest real-time voice models mark a shift from simple call-and-response bots to fully fledged voice agents. Instead of one model trying to do everything, OpenAI now offers a split stack tuned for reasoning, translation, and transcription workloads. This design is built for live systems that must keep talking, handle interruptions, and still call tools or APIs without losing context. For voice AI development, that means less custom orchestration code just to keep a conversation coherent across long calls, pauses, and tool hops. Developers can choose where they want deep reasoning and where low-latency streaming is enough. The result is a more modular approach: capture speech quickly, translate when needed, and reserve heavier models for decision-heavy turns. Whether you are building customer support flows, live assistants, or media tools, these real-time voice models give you a more controllable foundation for conversational experiences.

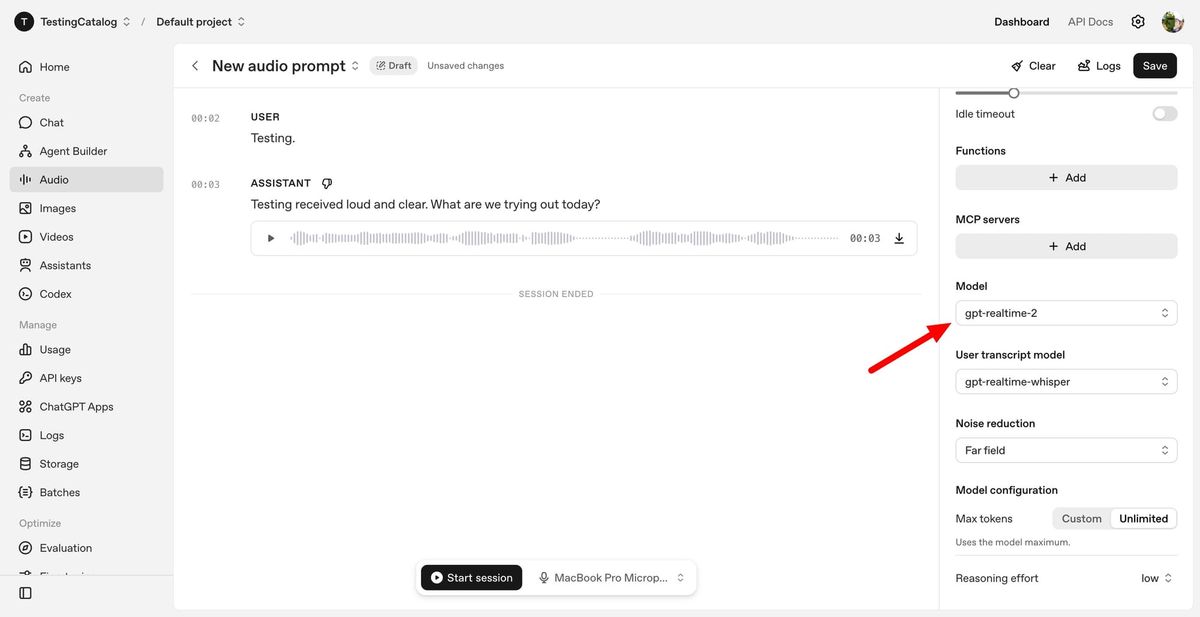

GPT-Realtime-2: GPT-5-Class Reasoning for Live Voice Agents

GPT-Realtime-2 is the centerpiece of OpenAI’s new real-time voice models, bringing GPT-5-class reasoning to spoken conversations. It is designed to act as the primary agentic voice model, handling complex requests, managing context over time, and using tools in parallel while continuing to talk. Developers can enable short spoken preambles like “let me check that” while the model performs background actions, creating more natural conversational pacing. A key upgrade is the expanded 128K context window, up from 32K in the previous generation, allowing for longer and more coherent sessions without constant resets or manual state compression. Reasoning effort is tunable from minimal to xhigh, letting teams trade off latency against depth of thinking depending on the task. GPT-Realtime-2 also improves audio intelligence, instruction adherence, and interruption handling, making it ideal for live assistants, call flows, and tool-using voice agents built on the Realtime API.

GPT-Realtime-Translate: Building Live Multilingual Experiences

GPT-Realtime-Translate focuses on live multilingual conversations, giving developers a dedicated live translation API for speech. It accepts spoken input in more than 70 languages and can output translated speech in 13 languages, keeping pace with fast speakers and mid-conversation topic shifts. Instead of bolting translation onto a general-purpose assistant, teams can plug this model directly into their voice pipelines wherever translation is needed. The model is engineered to handle regional accents, domain-specific vocabulary, and rapid context changes, making it suitable for customer support, cross-border sales, education platforms, events, and media localization. Because translation is separated from the reasoning tier, you can keep latency under control while still routing complex decisions to a more powerful model when necessary. For developers, this separation means cleaner architectures: capture and translate speech in one lane, then optionally feed the result into GPT-Realtime-2 or other tools for deeper reasoning or workflow automation.

GPT-Realtime-Whisper: Streaming Transcription for Voice Workflows

GPT-Realtime-Whisper fills the transcription lane with low-latency streaming speech-to-text. It listens as people talk and produces text in real time, which is ideal for live captions, meeting notes, and voice-driven workflows such as documentation or customer support logging. Because transcription is separated from the heavier reasoning tier, developers do not need to pay the performance or complexity cost of a large agentic model just to capture accurate text. You can pipe audio into GPT-Realtime-Whisper, stream the resulting text to your application, and selectively invoke GPT-Realtime-2 only when deeper reasoning or tool use is required. This separation also aids debugging: if transcription remains solid while reasoning slows or fails, you can tune or swap the reasoning model without touching the speech capture layer. Overall, GPT-Realtime-Whisper makes streaming transcription a first-class, flexible component in modern voice AI development pipelines.

Integrating the Realtime API into Your Voice Apps

All three models—GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper—are exposed via OpenAI’s Realtime API, giving developers a common interface for building voice-first applications. A typical architecture starts with GPT-Realtime-Whisper for streaming transcription, optionally routes text through GPT-Realtime-Translate for multilingual scenarios, and then hands off to GPT-Realtime-2 when the conversation needs reasoning, tool calls, or long-term context. Because the stack is split, you can independently scale or tune each lane based on your workload’s priorities: speed for transcription, accuracy for translation, and depth for reasoning. This approach is especially useful as voice interfaces expand across devices like phones, in-car systems, and desktops, where latency and reliability are critical. By treating reasoning, translation, and transcription as modular services, you can build more robust voice agents, reduce custom orchestration logic, and adapt quickly as OpenAI updates each model tier in the Realtime API.