From Bard to Gemini Intelligence: The Rise of Agentic AI

Google’s transformation from Bard to Gemini marked a shift from a standalone chatbot to a broader family of large language models. The latest step in that evolution is “Gemini Intelligence,” unveiled during The Android Show: I/O Edition as an explicitly agentic AI assistant rather than a purely conversational tool. Instead of only answering questions, Gemini is being designed to carry out multi-step tasks, interpret context from images and documents, and complete actions across apps. This aligns with the broader trend toward agentic AI models that can plan, coordinate, and execute on user goals. Google is positioning these features as an upgrade to existing integrations, such as ordering rides or food, by enabling deeper automation, like finding information in Gmail or notes and acting on it. The result is a gradual pivot from a chatbot to an autonomous AI assistant that lives inside Android and Google services.

Gemini Intelligence on Android: From Widgets to Autofill

On Android, Gemini Intelligence is being framed as a system-level upgrade that turns Gemini into a hands-on helper. It can interpret on-screen content, create custom widgets, and carry out tasks like managing bookings or ordering items directly from notes, emails, or photos. For instance, users can show Gemini a grocery list and have it construct a shopping cart, or snap a picture of a travel brochure and ask the Gemini AI agent to find a matching tour. A major enhancement is smarter autofill: Gemini can tap into Personal Intelligence to insert secure details—such as passport information—through explicit user interaction. Google stresses that this is strictly opt-in, with controls to disconnect Gemini Intelligence at any time. Alongside this, a feature dubbed “Rambler” cleans up dictated speech, turning messy spoken prompts into polished text. Collectively, these Google Gemini features edge the system closer to an autonomous AI assistant embedded across Android workflows.

Remy: A 24/7 Personal Agent for Gemini

Internally, Google is testing Remy, a new AI personal agent meant to make Gemini more proactive. Described as a “24/7 personal agent,” Remy is designed to act on a user’s behalf in both work and daily tasks, going beyond traditional, reactive chat. Rather than waiting for one-off commands, it aims to monitor what is most relevant to users across connected services and handle complex tasks while learning their preferences. This builds on existing capabilities like Agent Mode and the current connected-app ecosystem, which spans Google Workspace tools, media services, messaging, smart-home controls, and Android utilities. The design philosophy behind Remy reflects Google’s research guidance for AI agents: well-defined human controllers, carefully scoped powers, observable actions, and transparent logging. Memory and personalization are also central: Remy’s preference learning puts a spotlight on Gemini’s Privacy Hub, where users can review saved information, control personalization, and limit what data the assistant can use over time.

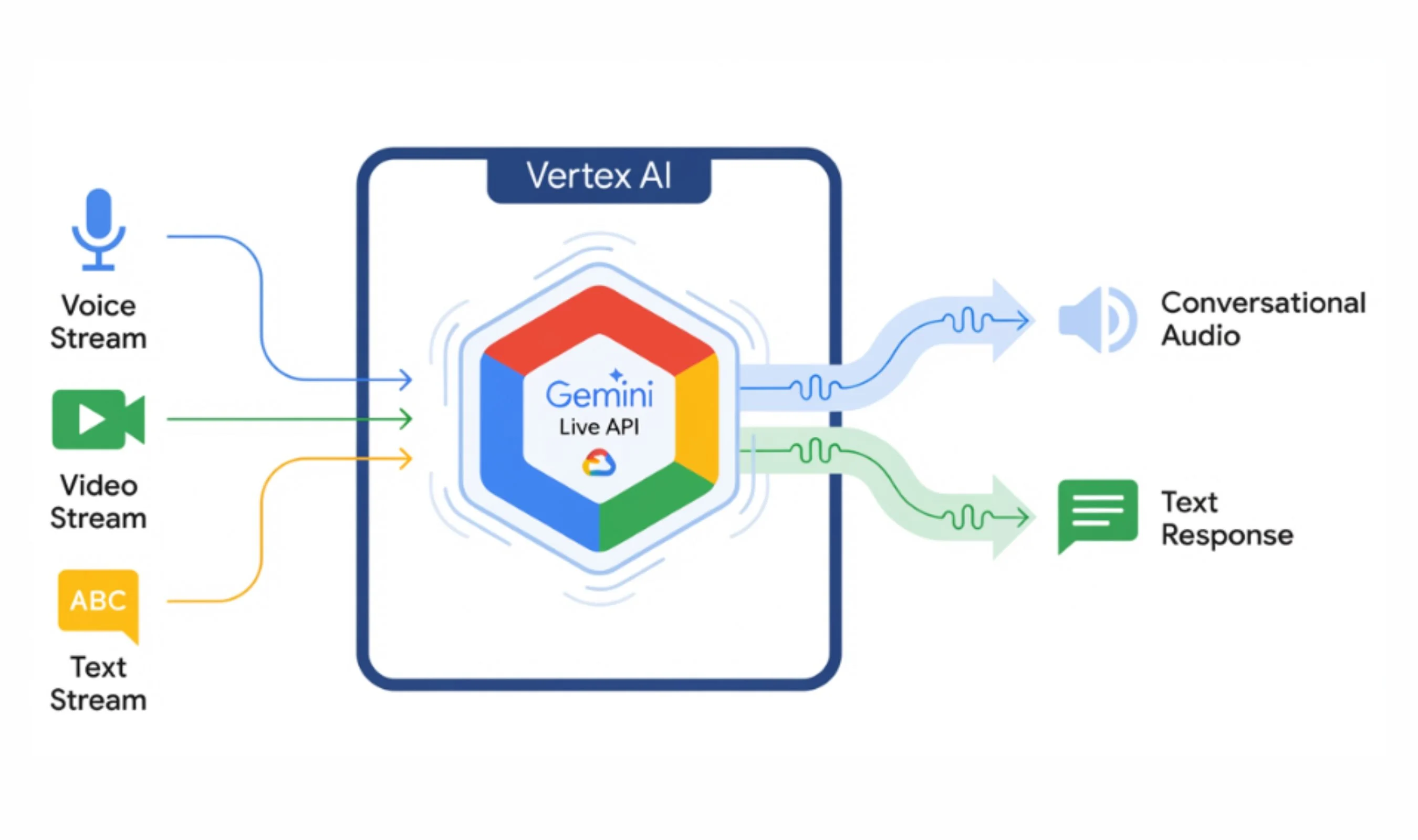

Hidden Gemini Live Models and the ‘Thinking’ Variant

Under the hood, Google is preparing a new generation of Gemini Live models that hint at deeper reasoning and autonomy. A hidden selector discovered in the Google app reveals seven audio-to-audio (A2A) model options for Gemini Live, including variants labeled “Thinking,” a personalization-focused “P13n,” and codenames like “Nitrogen” and “Capybara.” Early testing shows these models behave differently: some can access live location for weather, while the personalization variant remembers user details and reuses them naturally in later conversation, something the current default Gemini Live model declines to do. Notably, the “Capybara” model identifies itself as “Gemini 3.1 Pro,” suggesting a higher capability tier than the Flash model Gemini Live typically runs on. Because the model list is delivered from Google’s servers rather than bundled in the app, the company can swap or expand these options quickly without shipping app updates. Together, these experimental models signal a push toward making Gemini Live a more context-aware, persistent, and adaptable assistant.

Balancing Proactive Assistance with User Control

As Gemini shifts toward an agent that can plan and act, Google is putting visible emphasis on user control and transparency. The Gemini Privacy Hub serves as the central place where people can inspect and delete Gemini Apps Activity, manage auto-delete settings, and decide whether their data helps improve Google AI. It also governs access to connected apps and personal information saved via Personal Intelligence. For higher-impact actions—such as sending messages, creating calendar events, controlling smart devices, or accessing sensitive documents—Gemini’s permissions are designed around least-privilege principles, granting only what is needed for a given task and making actions observable and auditable. Remy’s preference-learning and the new Live models’ memory capabilities heighten the importance of clear consent and reversible choices. In practice, this means Gemini is evolving into a proactive assistant that watches and works for users, but within boundaries they can see, understand, and change at any time.
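To make the least-privilege idea more concrete, here is a minimal Python sketch of a per-task permission gate with an audit log. It is purely illustrative: Google has not published how Gemini enforces permissions, and every name below (`TaskGrant`, `AgentPermissionGate`, the `HIGH_IMPACT` action set, the `user_confirms` callback) is hypothetical. The sketch assumes that each task receives its own narrow set of allowed actions, that higher-impact actions additionally require explicit user confirmation, and that every decision, allowed or denied, is logged so the user can review it afterward.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

# Hypothetical high-impact action names, mirroring the examples in the text;
# these are NOT Gemini's real permission identifiers.
HIGH_IMPACT = {"send_message", "create_event", "control_device", "read_sensitive_doc"}


@dataclass(frozen=True)
class TaskGrant:
    """Actions granted for a single task only (least privilege)."""
    task: str
    allowed_actions: frozenset


@dataclass
class AuditEntry:
    timestamp: str
    task: str
    action: str
    outcome: str


@dataclass
class AgentPermissionGate:
    log: list = field(default_factory=list)

    def request(self, grant: TaskGrant, action: str,
                user_confirms: Callable[[str], bool]) -> bool:
        """Decide whether the agent may perform `action`, and record the decision."""
        if action not in grant.allowed_actions:
            outcome = "denied: outside task scope"
        elif action in HIGH_IMPACT and not user_confirms(action):
            outcome = "denied: user declined"
        else:
            outcome = "allowed"
        # Every decision is logged, so the user can audit what the agent tried to do.
        self.log.append(AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            task=grant.task,
            action=action,
            outcome=outcome,
        ))
        return outcome == "allowed"


# Usage: a hypothetical dinner-booking task may search and add a calendar event, nothing else.
gate = AgentPermissionGate()
grant = TaskGrant("book dinner", frozenset({"search_restaurants", "create_event"}))

searched = gate.request(grant, "search_restaurants", user_confirms=lambda a: False)  # low impact, in scope
booked = gate.request(grant, "create_event", user_confirms=lambda a: True)  # high impact, confirmed
messaged = gate.request(grant, "send_message", user_confirms=lambda a: True)  # never granted

for entry in gate.log:
    print(entry.action, "->", entry.outcome)
```

Logging denials as well as approvals is a deliberate choice in this sketch: an auditable record should show what the agent attempted, not only what it was permitted to do.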