Vibe coding explained: shipping from vibes, not specs

Vibe coding in one scene: you chat with an AI, describe a feature in plain language, accept whatever code it proposes, and move on to the next idea. There is little up-front architecture, few written design docs, and almost no manual typing beyond prompts. As How-To Geek notes, many developers skip planning entirely and jump straight to implementation with tools like GitHub Copilot, only to discover half-baked products and broken features later. The AI may choose inefficient patterns, such as N+1 database queries (one extra query per row) instead of a single join, simply because it was never steered otherwise. Game and app developers now routinely prototype this way, building projects one prompt at a time. It feels fast and creatively liberating, especially for those with some programming background, but the lack of structure plants the seeds for trouble as the codebase grows.
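To make the N+1 pattern concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `players` and `scores` tables, their contents, and the function names are hypothetical and chosen only for illustration. Both functions compute the same per-player totals, but the first issues one query per player while the second does the work in a single join.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a tiny in-memory database (hypothetical schema, illustration only)."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE players (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE scores (
            id INTEGER PRIMARY KEY,
            player_id INTEGER,
            points INTEGER
        );
        INSERT INTO players VALUES (1, 'ada'), (2, 'lin'), (3, 'sam');
        INSERT INTO scores (player_id, points) VALUES (1, 10), (1, 5), (2, 7);
        """
    )
    return conn


def totals_n_plus_one(conn: sqlite3.Connection) -> tuple[dict[str, int], int]:
    """N+1 pattern: one query for the player list, then one query per player."""
    queries = 1
    totals = {}
    players = conn.execute("SELECT id, name FROM players ORDER BY id").fetchall()
    for player_id, name in players:
        row = conn.execute(
            "SELECT COALESCE(SUM(points), 0) FROM scores WHERE player_id = ?",
            (player_id,),
        ).fetchone()
        queries += 1
        totals[name] = row[0]
    return totals, queries


def totals_joined(conn: sqlite3.Connection) -> tuple[dict[str, int], int]:
    """Same result from a single query that joins and aggregates."""
    rows = conn.execute(
        """
        SELECT p.name, COALESCE(SUM(s.points), 0)
        FROM players AS p
        LEFT JOIN scores AS s ON s.player_id = p.id
        GROUP BY p.id, p.name
        """
    ).fetchall()
    return dict(rows), 1
```

With three players, the first version runs four queries and the second runs one. The gap grows linearly with the number of rows, which is why the pattern tends to go unnoticed in a small prototype.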

From speed to ‘vibe debt’: when AI-assisted code collapses

Vibe debt is the technical debt left behind by AI-first, prompt-driven development: code that works in the moment but resists testing, maintenance, and scaling. In game projects, a weekend prototype built entirely through natural language instructions can quickly turn into a tangle of conflicting logic and mystery functions with names like "thing2" that neither you nor the AI fully understand. One source describes how what runs fine at 500 lines often collapses at 5,000 lines, as duplicated logic, ad hoc state management, and inconsistent patterns accumulate. Traditional debugging tools assume a human author with coherent intent; they are less effective when large chunks were stitched together by a model “working from vibes rather than a structured plan.” The result is a new class of AI-generated technical debt: not just messy code, but lost intent that makes it hard to reason about what the system was ever supposed to do.
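As a small illustration of how duplicated logic can hide behind unrelated names, the sketch below uses Python's standard `ast` module to group functions whose parameters and bodies are structurally identical. The sample source and the function names in it (`apply_damage`, `thing2`, `heal`) are hypothetical, and the check is deliberately naive: it only catches exact duplicates, not near-copies with renamed variables.

```python
import ast
from collections import defaultdict

# Hypothetical sample of vibe-coded game logic, for illustration only.
SAMPLE = '''
def apply_damage(hp, amount):
    return max(0, hp - amount)

def thing2(hp, amount):
    return max(0, hp - amount)

def heal(hp, amount):
    return hp + amount
'''


def find_duplicate_functions(source: str) -> list[list[str]]:
    """Return groups of function names whose signature and body match exactly."""
    tree = ast.parse(source)
    groups = defaultdict(list)
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            # ast.dump omits line numbers by default, so identical code
            # at different positions produces the same key.
            key = (
                ast.dump(node.args),
                "".join(ast.dump(stmt) for stmt in node.body),
            )
            groups[key].append(node.name)
    return [sorted(names) for names in groups.values() if len(names) > 1]
```

Calling `find_duplicate_functions(SAMPLE)` groups `apply_damage` and `thing2` together and leaves `heal` out. A report like this does not recover the lost intent, but it can point a human or an agent at the places where two names probably mean the same thing.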

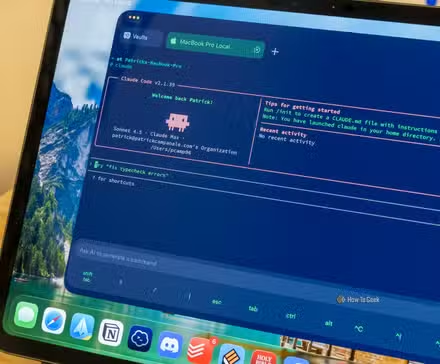

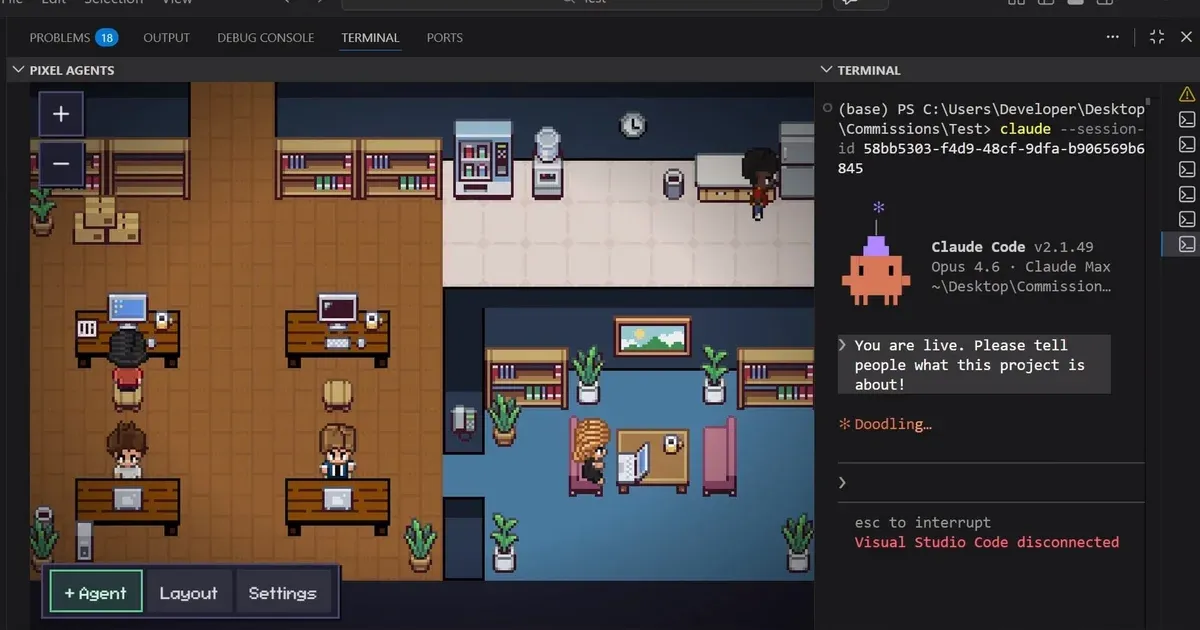

Cursor vs Claude Code and the rise of agentic coding assistants

To deal with vibe debt, AI platforms are shifting from autocomplete-style helpers toward agentic coding assistants that can inspect and reshape whole projects. Cursor 3’s new Agents Window lets developers describe a task and have an agent execute it end to end, mirroring the experience of Anthropic’s Claude Code terminal agent. Tools such as Cursor, Replit, and GitHub Copilot Workspace are being tuned specifically to debug and refactor vibe-coded projects: they read full repository context, try to infer the original intent, and propose structured fixes instead of waiting for perfectly phrased bug reports. Early tests on real-world codebases like HTTPie use difficult, security-sensitive bugs to benchmark how well these agents reason over large contexts. The direction is clear: the same systems that let you ship fast via chat are now expected to untangle the resulting mess without forcing you to manually shepherd every single change.

More features or better fit? Industry pushback on bloated agents

Not everyone believes the answer is simply piling more capabilities into coding agents. Veteran open-source developer Mario Zechner argues that tools like Claude Code risk feeling like opaque spaceships: developers may use only a small fraction of the features while hidden “dark matter” behavior silently edits context and code. His alternative is a minimalist terminal agent with just four tools (read, write, edit, and bash) that emphasizes control and malleability over breadth. The broader point is that agents must adapt to each team’s workflow and constraints rather than dictate a mysterious process. In today’s experimental landscape, no one knows what the ideal programming agent looks like, so the ability to self-modify and rapidly test new workflows matters more than exhaustive feature lists. For managing AI-generated technical debt, predictable, inspectable behavior may be more valuable than yet another automated refactor button buried in a complex UI.
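To show how small a four-tool surface can be, here is a hypothetical Python sketch of a dispatcher exposing read, write, edit, and bash. It is not Zechner's implementation or any real agent's API: the function signatures, the `{"tool": ..., "args": ...}` call shape, and the rule that an edit must match exactly once are all assumptions made for illustration. The `bash` tool also assumes a `bash` executable is available on the PATH.

```python
import subprocess
from pathlib import Path


def read(path: str) -> str:
    """Return the full contents of a text file."""
    return Path(path).read_text(encoding="utf-8")


def write(path: str, content: str) -> str:
    """Create or overwrite a text file."""
    Path(path).write_text(content, encoding="utf-8")
    return f"wrote {len(content)} characters to {path}"


def edit(path: str, old: str, new: str) -> str:
    """Replace one exact occurrence of `old`; refuse ambiguous or missing matches."""
    text = Path(path).read_text(encoding="utf-8")
    if text.count(old) != 1:
        raise ValueError("old text must match exactly once")
    Path(path).write_text(text.replace(old, new), encoding="utf-8")
    return f"edited {path}"


def bash(command: str, timeout: float = 30.0) -> str:
    """Run a shell command and return its combined output (assumes bash exists)."""
    result = subprocess.run(
        ["bash", "-c", command],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    return result.stdout + result.stderr


# The entire tool surface is visible in one dictionary.
TOOLS = {"read": read, "write": write, "edit": edit, "bash": bash}


def dispatch(call: dict) -> str:
    """Route a tool call of the assumed form {"tool": name, "args": {...}}."""
    name = call["tool"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call["args"])
```

Because every action the agent can take passes through `dispatch`, logging or vetoing a call is a one-line change, which is the kind of inspectability the minimalist argument values.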

Staying fast without drowning in vibe debt

The next evolution of AI coding is not just faster creation, but systematic repair. Developers can harness vibe coding without being buried in vibe debt by adding a bit of discipline around how agents are used. First, plan in prompts before coding: ask the AI for a detailed implementation plan, review it, and only then have it generate code. This plan-first workflow is reported to yield better results than jumping straight to implementation. Second, schedule periodic refactoring sessions where agents like Cursor, Claude Code, or Copilot Workspace are explicitly tasked with simplifying modules, improving naming, and aligning patterns across the codebase. Finally, treat agents as structured reviewers: have them lint, type-check, and critique their own output once they “think” they are done, rather than accepting the first pass. The goal is a feedback loop where AI not only accelerates development, but also continually hardens the code it helped create.
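The "structured reviewer" step can be sketched as a simple gate that runs a set of checks and reports which ones failed, so the output can be fed back to the agent before its work is accepted. The `run_checks` helper and the check names below are hypothetical. In a real project the commands would be whatever linter, type checker, and test runner the team already uses; the example substitutes trivial Python one-liners so it stays self-contained.

```python
import subprocess
import sys


def run_checks(checks: dict[str, list[str]]) -> dict[str, str]:
    """Run each check command and return the output of the ones that failed.

    `checks` maps a label to an argv list. An empty result means every
    command exited with status 0.
    """
    failures = {}
    for name, argv in checks.items():
        result = subprocess.run(argv, capture_output=True, text=True)
        if result.returncode != 0:
            failures[name] = (result.stdout + result.stderr).strip()
    return failures


if __name__ == "__main__":
    # Stand-in commands: one that passes and one that fails on purpose.
    demo_checks = {
        "lint": [sys.executable, "-c", "pass"],
        "tests": [
            sys.executable,
            "-c",
            "import sys; print('1 test failed'); sys.exit(1)",
        ],
    }
    for label, output in run_checks(demo_checks).items():
        print(f"{label} failed: {output}")
```

Only failing checks appear in the result, which keeps the follow-up prompt short: the agent sees exactly what broke instead of a full log of everything that passed.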