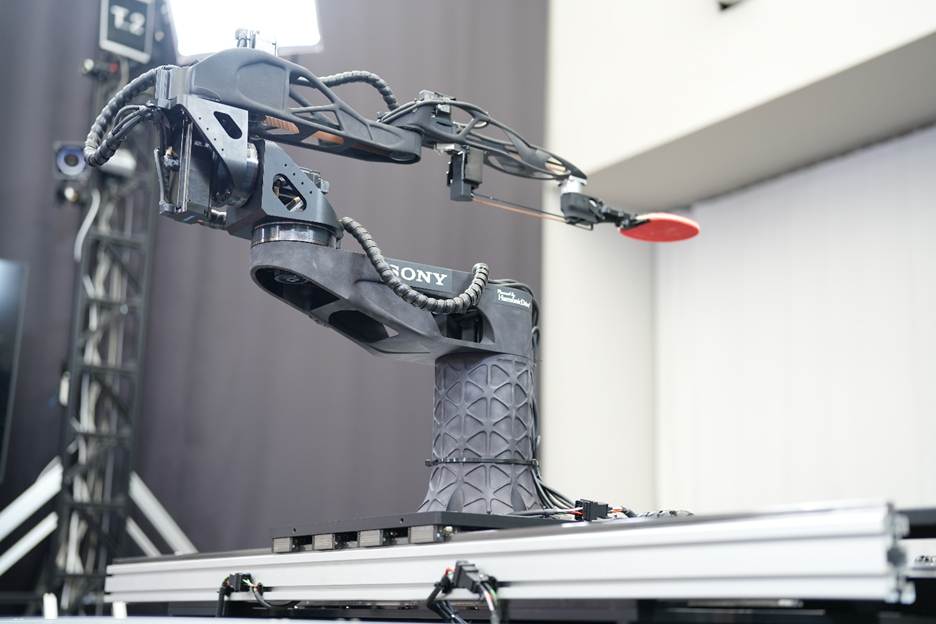

Ace: The Sony AI Robot That Can Rally With the Best

Sony AI’s Ace robot is not a scripted machine; it is a physical embodiment of advanced reinforcement learning. Designed to compete with human table tennis players, Ace is described by Sony AI as the first robot to reach human expert-level performance in a competitive physical sport. Instead of being painstakingly programmed for every possible shot, Ace learned through thousands of hours of simulated table tennis. The system tries random motions, receives a reward signal when its returns land successfully or carry more speed and spin, and gradually discovers winning strategies. Technically, the feat is formidable: nine high-speed cameras triangulate the ball in 3D at 200 Hz, while specialized vision systems estimate its extreme spin in real time. Deep reinforcement learning policies then turn this perception into safe, collision-free motions. Beating elite players is impressive in itself, but as a proof of concept Ace also shows how Sony can train AI agents that respond to humans at real-world speeds.
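The trial-and-reward loop described above can be illustrated with a toy example. The sketch below is not Sony AI's method: it swaps deep reinforcement learning for a much simpler epsilon-greedy bandit, and the paddle angles, the `simulate_return` physics, and every parameter value are hypothetical assumptions chosen only to show how random tries plus a reward signal converge on shots that work.

```python
import random

def simulate_return(angle_deg, rng):
    """Hypothetical toy physics: a return lands most reliably near 30 degrees."""
    p_land = max(0.0, 1.0 - abs(angle_deg - 30) / 30.0)
    return 1.0 if rng.random() < p_land else 0.0  # reward 1 if the ball lands

def train(angles, episodes=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy learning of the average reward for each paddle angle."""
    rng = random.Random(seed)
    value = {a: 0.0 for a in angles}  # running average reward per angle
    count = {a: 0 for a in angles}    # how often each angle has been tried
    for _ in range(episodes):
        if rng.random() < epsilon:
            angle = rng.choice(angles)                 # explore: random motion
        else:
            angle = max(angles, key=lambda a: value[a])  # exploit: best so far
        reward = simulate_return(angle, rng)
        count[angle] += 1
        # Incremental mean update: nudge the estimate toward the new reward.
        value[angle] += (reward - value[angle]) / count[angle]
    return value

angles = [0, 10, 20, 30, 40, 50, 60]
values = train(angles)
for angle in angles:
    print(f"{angle:>2} degrees: estimated landing rate {values[angle]:.2f}")
```

In this toy setup the agent is never told which angle is good; it only sees whether each attempt earned a reward. A real system like Ace would replace the lookup table with neural-network policies and the single angle with full arm trajectories.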

From Gran Turismo Sophy to Smarter Game Opponents

Ace builds on Sony AI’s earlier milestone: Gran Turismo Sophy, a racing agent honed in the virtual world before tackling human drivers. Sony’s researchers use games as benchmarks because performance can be directly compared to human players and complex environments can be safely simulated. The same reinforcement learning techniques that let Ace read spin and timing could drive the next wave of AI in gaming. Imagine PlayStation opponents that learn to counter your racing lines, fighting combos, or stealth routes with the same adaptability Ace shows at the table. Instead of relying on scripted behavior trees, non-player characters could be trained in large-scale simulations to react fluidly to player tactics at high speed. For players, that could mean AI rivals that feel less predictable, more human, and capable of genuinely surprising comebacks, a clear step beyond today’s difficulty presets.

Adaptive Game Difficulty and Personal Coaching on PlayStation

Ace doesn’t just hit the ball back; it learns what works against skilled opponents. Translated into games, this mindset points toward adaptive game difficulty that constantly calibrates itself to each player. An AI system similar to Ace could track how quickly you react, where you make mistakes, and which strategies you prefer. Over time, it could subtly adjust enemy aggression, puzzle hints, or racing AI pace to keep you in a sweet spot between boredom and frustration. The same data could power in-game coaching tools, especially in sports titles. A tennis, football, or racing game might review your last match, highlight recurring errors, and suggest targeted drills, mirroring how Ace’s own learning is guided by reward signals. Done well, this would boost accessibility, making challenging genres approachable for newcomers while still pushing experts to refine their skills.
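To make the "sweet spot" idea concrete, here is a minimal sketch of a difficulty tuner. It is purely illustrative and does not describe any PlayStation feature: the `DifficultyTuner` class, the 50 percent target win rate, the ten-match window, and the step size are all assumptions. The tuner simply raises the difficulty when the player's recent win rate is above target and lowers it when the rate falls below.

```python
from collections import deque

class DifficultyTuner:
    """Hypothetical controller that steers difficulty toward a target win rate."""

    def __init__(self, target=0.5, window=10, step=0.05, level=0.5):
        self.target = target                  # desired player win rate
        self.results = deque(maxlen=window)   # most recent match outcomes
        self.step = step                      # size of each adjustment
        self.level = level                    # 0.0 = easiest, 1.0 = hardest

    def record(self, player_won):
        """Log one match result and return the updated difficulty level."""
        self.results.append(1.0 if player_won else 0.0)
        win_rate = sum(self.results) / len(self.results)
        if win_rate > self.target:
            self.level += self.step   # player is cruising: make it harder
        elif win_rate < self.target:
            self.level -= self.step   # player is struggling: ease off
        self.level = min(1.0, max(0.0, self.level))  # keep within bounds
        return self.level

# A player on a winning streak sees the difficulty climb to the maximum.
streak = DifficultyTuner()
for _ in range(20):
    streak.record(True)

# A player who keeps losing sees it drop to the minimum.
slump = DifficultyTuner()
for _ in range(20):
    slump.record(False)

print(streak.level, slump.level)
```

A learned system would go much further, for example by modelling reaction times or preferred strategies, but even this simple feedback loop shows how difficulty can follow the player rather than a fixed preset.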

Motion-Based Play: Lessons From Camera-Driven Gaming

Ace’s core strength is interpreting fast-moving physical action and responding in real time, which resonates with the broader trend of motion-based gaming. Devices like the Nex Playground connect to a TV via HDMI and use a built-in camera to detect full-body motion, turning the living room into a controller-free arcade. With titles ranging from Fruit Ninja to branded experiences featuring characters like Barbie and Teenage Mutant Ninja Turtles, the Nex Playground keeps kids and adults moving instead of sitting. Sony already has a history of experimenting with camera and motion interfaces, and its new robotics work suggests how much richer these experiences could become. An AI system with Ace-like perception could track nuanced gestures, adapt sports drills to a player’s ability, or dynamically adjust minigames to keep a family engaged. The line between training a robot and training a player could blur, with both learning from the same vision-driven AI foundations.

Opportunities, Risks, and What PlayStation Fans Should Expect

AI in gaming is becoming a strategic battleground, and Sony’s work on Ace and Gran Turismo Sophy positions it strongly for the next wave of PlayStation technology. In theory, reinforcement learning could enable opponents that learn from each individual’s style, offering huge benefits for accessibility and long-term engagement. Players who struggle could get gentler, more supportive AI that adapts around their needs, while competitive users could face ever-tougher opponents that evolve with them. However, there are risks: AI that improves too aggressively could feel unfair, and systems that model players closely may raise concerns about transparency and control. In the near term, fans should expect incremental features (smarter NPC behaviors, more responsive difficulty curves, and richer motion tracking) rather than fully self-learning enemies in every title. But Ace’s victory at the table hints that the underlying technology is already well on its way.