From Developer Framework to No-Code VR Creation Platform

Meta has updated its open-source Immersive Web SDK (IWSDK), shifting it from a developer-focused framework into a far more accessible AI-assisted VR toolkit. Originally unveiled at Meta Connect to streamline WebXR development by handling common systems such as physics, hand tracking, locomotion, grab interactions, and spatial UI, IWSDK was designed to let creators concentrate on concept and content rather than engine-level details. The latest release layers agentic AI workflows on top of these abstractions, making WebXR development tools increasingly usable by non-engineers. Instead of manually wiring up every interaction, creators describe what they want, and AI coding assistants translate those goals into working browser-based VR. The result is a toolkit that blurs the line between traditional coding and no-code VR creation, expanding who can realistically build immersive web experiences that run across headsets and desktop browsers.

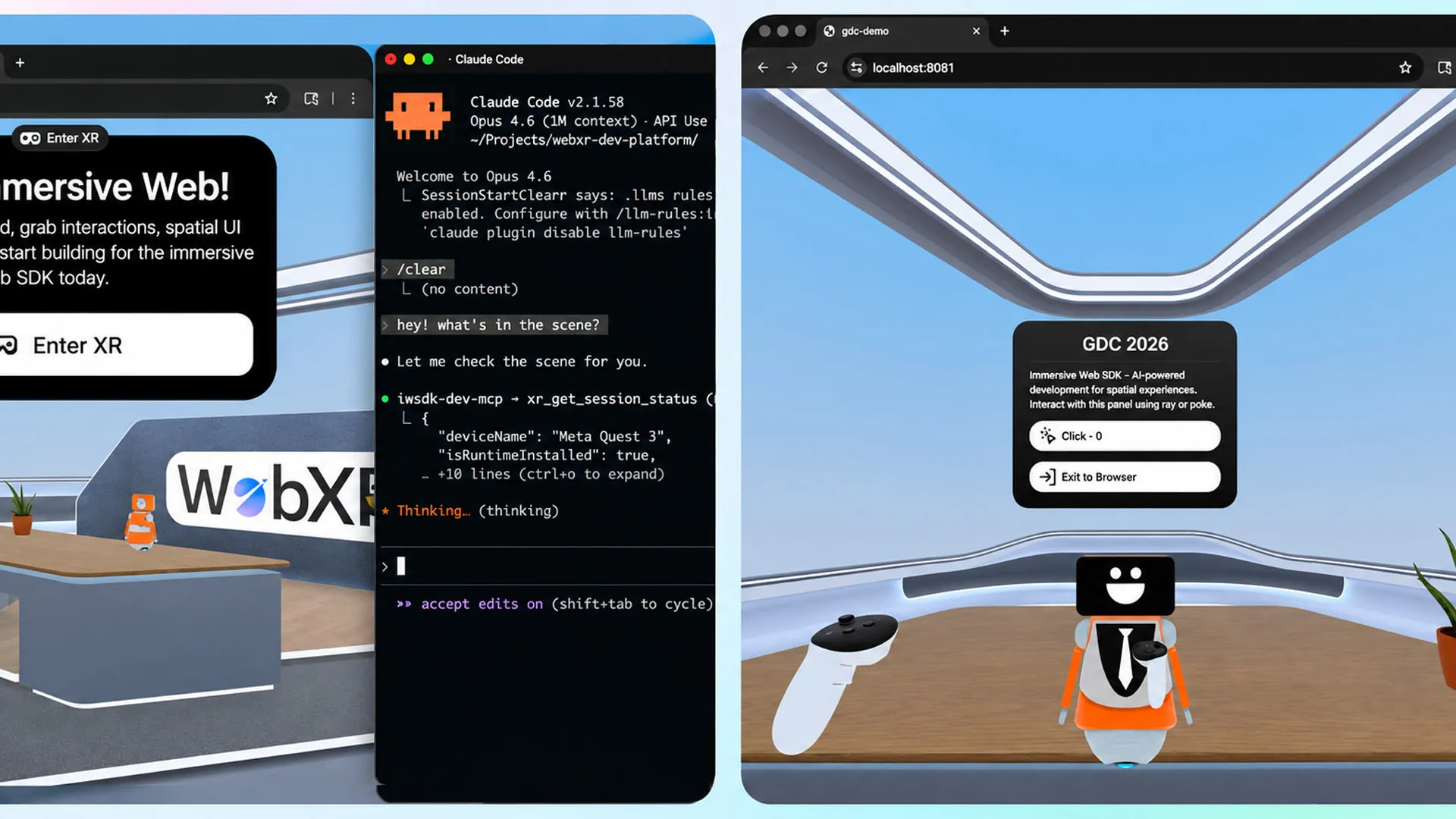

Inside Meta’s Agentic AI Workflow for WebXR

Meta’s new agentic workflow goes beyond one-off code suggestions to create a closed-loop development cycle for immersive web experiences. Integrated assistants such as Claude Code, Cursor, GitHub Copilot, and Codex don’t just generate scripts; they iteratively test and validate them against IWSDK’s components. As a result, the AI can refine behaviors like physics responses or hand interactions until they function reliably in a WebXR scene, without a human painstakingly debugging every line. Meta emphasizes that this is not about fixing typos or spitting out boilerplate snippets, but about orchestrating full interactive VR experiences in the browser. For teams used to traditional engines, that repositions IWSDK as a semi-autonomous collaborator: one that understands the SDK’s patterns, runs experiments, and converges on working features. In practice, this elevates the tooling from a passive code helper into an active agent in the VR creation pipeline.
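The closed-loop cycle described above (generate, validate, feed the failure back, try again) can be sketched in a few lines. This is a minimal illustration only: `refineUntilValid`, `ValidationResult`, and `LoopOutcome` are hypothetical names invented here, and the `generate` and `validate` callbacks stand in for whatever an assistant and a test harness would actually do. Nothing below is IWSDK's or any assistant's real API, and a real agent would run these steps asynchronously.

```typescript
// Hypothetical types for illustration; not part of IWSDK or any assistant's API.
interface ValidationResult {
  passed: boolean;
  feedback: string; // e.g. failing test output or a runtime error message
}

interface LoopOutcome {
  code: string;
  attempts: number;
  passed: boolean;
}

// Repeatedly generate code for a goal, validate it, and pass the failure
// feedback into the next generation step until it passes or attempts run out.
function refineUntilValid(
  goal: string,
  generate: (goal: string, feedback: string | null) => string,
  validate: (code: string) => ValidationResult,
  maxAttempts: number = 5
): LoopOutcome {
  let feedback: string | null = null;
  let code = "";
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    code = generate(goal, feedback);
    const result = validate(code);
    if (result.passed) {
      return { code, attempts: attempt, passed: true };
    }
    feedback = result.feedback; // the next attempt sees why this one failed
  }
  return { code, attempts: maxAttempts, passed: false };
}
```

The attempt cap is a design choice in this sketch: it keeps a loop that never converges from running indefinitely and returns the last candidate so a human can inspect it.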

Rebuilding ‘Project Flowerbed’ Shows What AI-Driven WebXR Can Do

To illustrate the impact of agentic AI workflows, Meta revisited its 2022 gardening demo, Project Flowerbed, which originally consisted of tens of thousands of lines of custom code. Using IWSDK’s AI-powered pipeline and the existing art assets, Meta reports that the entire application was rebuilt in just 15 hours. That compressed timeline underlines a key point: WebXR development tools enhanced by AI are now capable of assembling complex, interactive VR scenes, not just trivial prototypes. The demo includes core interactions such as planting and manipulating virtual plants in a rich environment, behaviors that typically demand substantial engineering effort. By offloading much of that work to the AI, designers and small studios can focus on pacing, aesthetics, and narrative instead of wrestling with low-level logic. For non-technical creators, this example signals that they can aim for sophisticated immersive web experiences without hiring a large engineering team.

Lowering Barriers for Creators, Designers, and Small Studios

The combination of no-code VR creation patterns and agentic AI makes IWSDK particularly appealing to independent creators and small studios. Many teams with strong visual or narrative skills struggle to enter VR because they lack deep engineering capacity. IWSDK abstracts away common systems (movement, grabbing, spatial UI), while the AI handles code integration and validation. This lets designers iterate on ideas through natural language or higher-level prompts instead of editing source files directly. Because the experiences are web-based, they can be tested immediately in a browser, cutting down on compile times and complex build processes. This rapid loop favors experimentation and quick prototyping, a critical advantage for teams with limited time and resources. As more non-technical creators gain the ability to prototype and ship WebXR projects, the diversity of immersive experiences is likely to grow beyond what traditional, code-heavy pipelines typically support.

Web Deployment and Meta’s Push to Grow the VR Ecosystem

Meta’s strategy around IWSDK clearly extends beyond tooling convenience to ecosystem expansion. By leaning on WebXR, the company enables VR experiences that launch directly from a URL, bypassing app store submission, downloads, and install friction. Experiences can be accessed on both VR headsets and desktop browsers, and Meta notes that over one million monthly users already consume WebXR content on Quest. This browser-first approach pairs effectively with AI-assisted workflows: creators can iterate quickly, then share a simple link for user testing or public release. The open-source nature of IWSDK, released under an MIT license and hosted on GitHub, further encourages community contributions and third-party extensions. Together, these choices position the AI-assisted VR toolkit not just as another Meta product, but as a foundational layer that could attract a broader, more diverse WebXR developer base around Meta’s hardware and platforms.
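As a rough illustration of the URL-first launch path described above, a page can ask the browser whether immersive VR is available and fall back to an ordinary desktop view when it is not. The sketch below relies on the standard WebXR call `navigator.xr.isSessionSupported`; the `XRSystemLike` interface, the `LaunchMode` type, and the `pickLaunchMode` helper are hypothetical names introduced here so the logic can run outside a browser, and they are not part of IWSDK.

```typescript
// Minimal stand-in for the part of the WebXR XRSystem interface used below.
// Hypothetical name; in a browser the real object is navigator.xr.
interface XRSystemLike {
  isSessionSupported(mode: string): Promise<boolean>;
}

type LaunchMode = "immersive-vr" | "inline";

// Decide how the same URL should present itself: an immersive session on a
// headset browser, or an inline (flat-screen) view on a desktop browser.
async function pickLaunchMode(xr: XRSystemLike | undefined): Promise<LaunchMode> {
  if (!xr) {
    return "inline"; // browser has no WebXR support at all
  }
  try {
    const supported = await xr.isSessionSupported("immersive-vr");
    return supported ? "immersive-vr" : "inline";
  } catch (_err) {
    return "inline"; // treat a rejected query (e.g. blocked by policy) as unsupported
  }
}

// In a browser this might be called as:
//   pickLaunchMode((navigator as any).xr).then((mode) => { /* show the matching UI */ });
```

Passing the XR object in as a parameter, rather than reading `navigator.xr` inside the function, is simply a choice made for this sketch so that the fallback logic can be exercised with a stub.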