From Chatbots to Autonomous Task Execution

AI agents are rapidly shifting from conversational helpers into systems that handle end-to-end work with minimal human input. Instead of merely answering questions, new agentic AI toolkits are designed for autonomous task execution, including planning, coding, and deployment. This marks a new phase of hands-off automation, where users describe goals in natural language and the AI orchestrates the rest. The appeal is clear: non-technical users can tap into complex capabilities such as code generation, content creation, and workflow management without learning programming or toolchains. Behind the scenes, these agents coordinate models, APIs, and data sources to deliver results that used to require entire teams. Meta’s upcoming Hatch agent and Hugging Face’s agentic toolkit for Reachy Mini robots are early signals of this shift, each showing how agent-driven code generation can move beyond chat into persistent, goal-driven behavior.

Meta’s Hatch Agent: Socially Grounded Automation

Meta’s Hatch agent is being built as a consumer-grade autonomous assistant that lives inside the social platforms people already use. Code traces show Hatch preparing for a waitlisted launch, with a broad skill set spanning image and video generation, shopping flows, learning sessions, research workloads, and even scheduled tasks and file generation. Unlike traditional chatbots, Hatch is designed for persistent, goal-oriented behavior, aligning with Meta’s vision of agents that work day and night toward user objectives. A key twist is social grounding: Hatch is expected to reach deeper into Instagram and Facebook than previous Meta AI surfaces, turning feed exploration, creator discovery, and shopping research into agent-driven workflows. Internal testing is reportedly targeting mock environments that resemble popular marketplaces and forums, signaling a focus on tool use and real-world task execution rather than simple conversation.

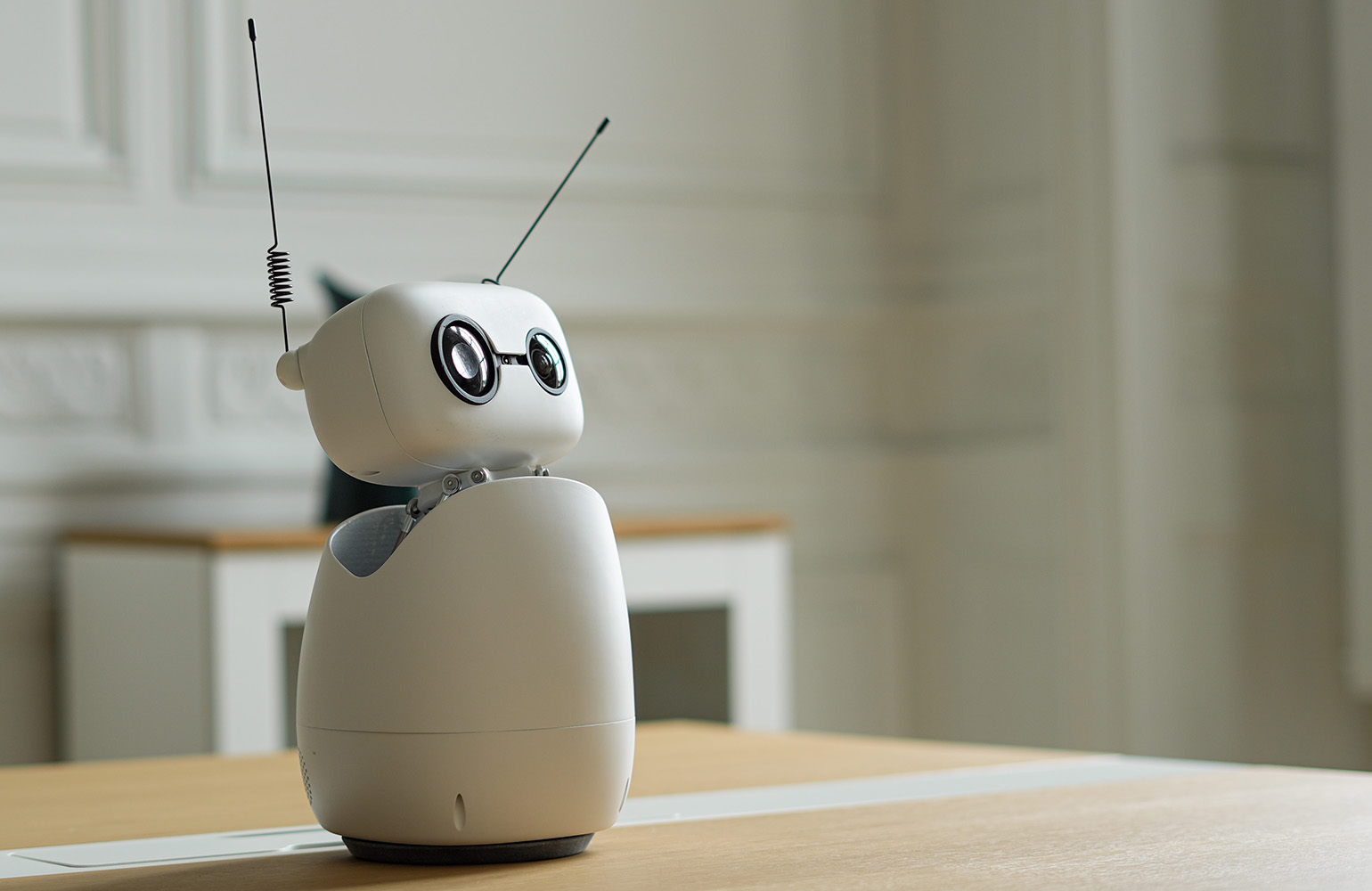

Hugging Face’s Agentic AI Toolkit for Reachy Mini

Hugging Face’s new agentic AI toolkit for the Reachy Mini desktop robot shows what happens when agent-driven code generation is wired directly into hardware. Users describe the behavior they want in plain English, and an AI agent writes, tests, and ships the code to the robot in under an hour, without requiring a single line of manual programming. The agent effectively replaces robotics expertise, while the open-source, compact Reachy Mini provides an affordable hardware platform, and the cloud flow removes lengthy integration work. This combination collapses traditional barriers in robotics, enabling anyone to create working robot apps through an agentic AI toolkit. Every app lives on the Hugging Face Hub, with one-click installation and a browser-based simulator so people can experiment even without owning the robot, reinforcing how autonomous task execution is becoming part of everyday tools.

Hands-Off Automation in the Real World

The practical impact of these tools can already be seen in early Reachy Mini apps. A 78-year-old non-developer built a voice-controlled AI co-facilitator that sits on his desk during online CEO peer groups. By describing what he needed in natural language, he had an AI agent generate a full application that listens for cues, responds with personality, manages facilitation modes, and tracks dozens of participants by name. Other apps include language tutors, game companions, productivity helpers that discourage phone distraction, and even live race commentators. Each example highlights hands-off automation: the agent writes and deploys code while users focus on their goals. This real-world usage underscores how autonomous task execution is moving from experimental demos into practical tools that adapt to personal workflows, signaling a future where defining behavior in English is enough to create sophisticated, interactive systems.

Democratizing Automation Beyond Developers

Both Meta and Hugging Face point toward a world where automation is defined by intent rather than technical skill. Meta’s socially grounded Hatch agent aims to turn everyday browsing, shopping, and learning into automated workflows that operate inside familiar feeds. Hugging Face’s Reachy Mini ecosystem shows how an agentic AI toolkit can let anyone build robot behaviors, fork existing apps, and iterate with one-click deployment. Together, they signal a shift from chat interfaces toward task-execution agents that minimize human intervention once goals are set. Crucially, these systems lower the barriers that once kept non-technical users on the sidelines. Expertise is encapsulated in the agents, integration is simplified, and code becomes a behind-the-scenes artifact. As these platforms mature, hands-off automation is likely to extend from robots and social feeds into a wide range of everyday tools, reshaping how people delegate work to AI.