From Developer Framework to No-Code VR Development Platform

Meta’s Immersive Web SDK (IWSDK) began as an open-source framework for building VR experiences on the web with WebXR. It bundled complex systems, such as physics, hand tracking, motion, grab interactions and spatial UI, into reusable modules so developers could focus on creative design rather than low-level engineering. The latest update pushes IWSDK toward no-code VR development by weaving AI into the toolchain. Instead of hand-writing thousands of lines of JavaScript and WebGL code, creators can describe the experience they want, then rely on AI-powered development tools to generate, refine and integrate the underlying code. This repositioning of IWSDK, from a helper library to an AI-assisted creation environment, signals Meta’s intention to make WebXR experiences accessible to artists, designers and indie teams who may lack traditional programming expertise but have strong ideas for immersive content.

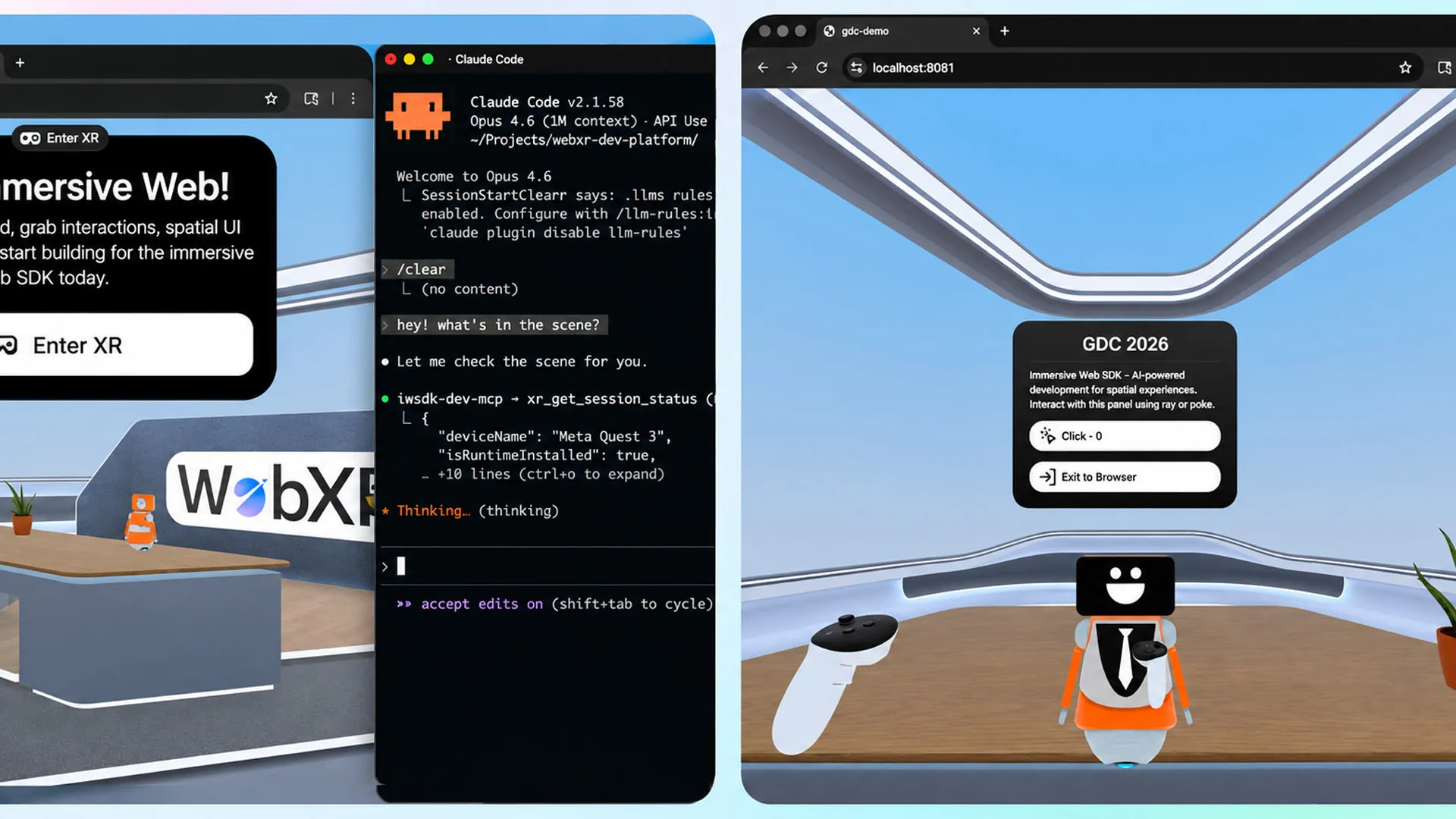

Inside the Agentic Workflow: AI That Builds, Tests and Refines VR

The core of Meta’s update is an “agentic workflow” that connects IWSDK with AI coding assistants like Claude Code, Cursor, GitHub Copilot and Codex. Rather than just producing code snippets, these agents operate in a closed loop: they generate code, run it, observe what breaks or behaves unexpectedly, then modify and retest until the experience works. Meta emphasizes that this loop is essential for reliable, production-ready WebXR experiences. In practice, a creator can specify interactions, environments or UI behaviors in natural language, then let the AI orchestrate IWSDK’s components to implement them. The workflow also leverages WebXR’s instant browser deployment, allowing rapid iteration without long compile times. Because everything runs via a URL, creators can test and share prototypes across desktop and VR headsets easily, making the entire build–test–deploy cycle far more approachable for non-programmers.
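The closed loop described above (generate, run, observe, revise, retest) can be illustrated with a minimal TypeScript sketch. This is not IWSDK's or any assistant's actual API: the `Agent` and `Runner` types, the `agenticLoop` function, and the stub implementations are all hypothetical names invented for illustration, and the "run" step is reduced to a simple string check where a real workflow would load the scene in a browser.

```typescript
// Hypothetical shapes: none of these names come from IWSDK or any coding assistant.
interface RunReport {
  passed: boolean;
  errors: string[];
}

interface Agent {
  // Produce a first draft from a natural-language description.
  generate(prompt: string): string;
  // Produce a revised draft from the previous draft and the observed errors.
  revise(code: string, errors: string[]): string;
}

type Runner = (code: string) => RunReport;

interface LoopResult {
  code: string;
  iterations: number;
  passed: boolean;
}

// Generate once, then run and revise until the run passes or the budget is spent.
function agenticLoop(
  prompt: string,
  agent: Agent,
  run: Runner,
  maxIterations: number = 5
): LoopResult {
  let code = agent.generate(prompt);
  for (let i = 1; i <= maxIterations; i++) {
    const report = run(code);
    if (report.passed) {
      return { code, iterations: i, passed: true };
    }
    if (i < maxIterations) {
      code = agent.revise(code, report.errors);
    }
  }
  return { code, iterations: maxIterations, passed: false };
}

// Stub agent (assumption): the first draft forgets grab support, the revision adds it.
const stubAgent: Agent = {
  generate: (prompt) => "// " + prompt + "\ncreateScene();",
  revise: (code, errors) =>
    code + errors.map((e) => "\n// fix: " + e + "\nenableGrab();").join(""),
};

// Stub runner (assumption): "passes" only when the draft enables grabbing.
const stubRunner: Runner = (code) =>
  code.indexOf("enableGrab()") !== -1
    ? { passed: true, errors: [] }
    : { passed: false, errors: ["grab interaction missing"] };

const demo = agenticLoop(
  "a garden where plants can be picked up",
  stubAgent,
  stubRunner
);
```

With these stubs, the first draft fails the check, the agent revises it once, and the second run passes. The iteration cap is a design choice worth noting: without it, an agent that never converges would loop indefinitely.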

Rebuilding Project Flowerbed: A Case Study in AI-Assisted WebXR

To showcase what the agentic workflow can achieve, Meta revisited its 2022 VR gardening demo, Project Flowerbed. The original project was a full-featured VR experience backed by tens of thousands of lines of custom code. Using the updated Immersive Web SDK, Meta fed existing art assets into the AI workflow and tasked it with recreating the entire application as a WebXR experience. According to the company, the system rebuilt Project Flowerbed in just 15 hours, transforming what had been a major engineering effort into a short AI-guided session. Meta stresses that this isn’t about trivial tasks like fixing typos or generating boilerplate code. The result was a complete, interactive VR application running on the web, produced through AI-assisted orchestration of IWSDK’s systems—an example meant to convince creators that large-scale, feature-rich experiences are now within reach without traditional coding.

Democratizing WebXR for Indie Creators and Non-Programmers

By embedding AI agents into IWSDK, Meta is effectively lowering the barrier to entry for immersive content creation. Designers, educators, and indie storytellers who previously relied on developers—or abandoned VR ideas entirely—can now experiment with no-code VR development. They can outline gameplay, interactions or learning scenarios in natural language while the AI configures physics, hand interactions and spatial UI through IWSDK. This democratization aligns with wider no-code and low-code trends, but is tailored to the specific challenges of WebXR experiences, where performance, interaction fidelity and multi-device deployment matter. Meta notes that over one million monthly users already access WebXR content on Quest, underscoring the potential audience for creator-made experiences. By making its toolkit open-source under an MIT license, Meta further invites community experimentation, positioning IWSDK as a shared foundation rather than a closed proprietary stack.

Meta’s Strategic Play in AI-Powered Development Tools

The revamped Immersive Web SDK positions Meta as a key player in AI-powered development tools for the immersive web. While many no-code platforms focus on 2D apps or simple web pages, IWSDK targets fully interactive VR environments that run directly in a browser. The agentic workflow helps Meta address a critical bottleneck: the scarcity of experienced WebXR engineers relative to the growing demand for immersive content. By letting AI handle both code generation and validation, Meta promises a large productivity boost for developers while also welcoming non-coders into the pipeline. This dual appeal could make IWSDK a competitive option in the broader no-code development movement, especially for creators who want the reach of URLs instead of app-store distribution. If an ecosystem grows around this open-source framework, Meta’s tools could become a default starting point for WebXR creators across skill levels.