AI’s New Bottleneck: Memory, Not Just Compute

Enterprises racing to deploy larger models and more complex agentic systems are discovering that GPUs alone cannot sustain AI at scale. The real constraint is AI memory infrastructure: limited DRAM capacity, fragmented context storage, and inefficient data movement between compute and memory tiers. High-bandwidth memory close to GPUs delivers sub-microsecond access but is costly and capacity-bound, while traditional storage has the scale but not the latency that responsive AI inference requires. This mismatch creates a persistent AI inference bottleneck, in which models repeatedly regenerate context, waste energy, and underutilize expensive accelerators. The emerging response is multi-layered: denser DDR5 RDIMM technology to expand server memory, interconnects like Compute Express Link (CXL) that disaggregate and pool memory, and new petabyte-scale memory layers that keep AI context hot and shared across clusters. Together, these innovations aim to decouple AI performance growth from proportional growth in power and hardware.

Micron’s 256GB DDR5 RDIMMs: Denser, Faster, and More Efficient

At the server node level, Micron is pushing DDR5 RDIMM technology to new density and efficiency levels. The company has begun sampling 256GB DDR5 RDIMMs built on its 1-gamma (1γ) process, delivering transfer rates up to 9,200 megatransfers per second (MT/s), over 40 percent above current high-volume memory hardware. By combining 1-gamma DRAM with advanced 3D stacking and through-silicon via (TSV) packaging, Micron fits twice the capacity into a single registered DIMM. Crucially for AI infrastructure, replacing two 128GB RDIMMs with a single 256GB module can cut operating power by more than 40 percent, easing both thermal and energy constraints in dense AI servers. This kind of node-level memory expansion helps data center architects feed proliferating CPU cores and AI agents with higher bandwidth and capacity without scaling power draw linearly, and it sets a new baseline for memory in next-generation AI racks.
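As a rough sanity check on the speed figure, the short sketch below takes the module's peak rate as 9,200 MT/s and compares it with a 6,400 MT/s baseline. The baseline and the 64-bit (8-byte) data path per DIMM are assumptions made here for illustration, not figures from the article, and the result is a theoretical peak rather than a measured throughput.

```python
# Back-of-the-envelope check of the DIMM speed figure.
# Assumptions (not from the article): a 6,400 MT/s baseline and a
# 64-bit (8-byte) data path per DIMM, ignoring ECC bits.

BYTES_PER_TRANSFER = 8  # 64 data bits moved per transfer (assumed)


def peak_bandwidth_gb_s(mega_transfers_per_s: float) -> float:
    """Theoretical peak bandwidth of one DIMM in GB/s."""
    return mega_transfers_per_s * 1e6 * BYTES_PER_TRANSFER / 1e9


def speedup_percent(new_mt_s: float, baseline_mt_s: float) -> float:
    """Relative increase in transfer rate, in percent."""
    return (new_mt_s / baseline_mt_s - 1.0) * 100.0


new_rate = 9200.0       # MT/s, peak rate of the 256GB module
baseline_rate = 6400.0  # MT/s, assumed high-volume baseline

print(f"peak bandwidth: {peak_bandwidth_gb_s(new_rate):.1f} GB/s")     # 73.6 GB/s
print(f"speedup: {speedup_percent(new_rate, baseline_rate):.2f} %")    # 43.75 %
```

Under these assumptions the rate increase works out to about 44 percent, which is consistent with the "over 40 percent" claim.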

CXL and Memory ‘Godboxes’: Disaggregated RAM for AI Clusters

Beyond individual servers, architectural change is arriving via Compute Express Link (CXL) and so-called memory ‘godboxes’. CXL defines a cache-coherent interface, built on PCIe, that lets CPUs, memory devices, accelerators, and other peripherals share a common fabric. Early specs enabled CXL-based memory expansion modules that appear to the OS like memory attached to another CPU socket. With CXL 2.0, memory can be pooled behind switches and dynamically allocated across servers, but only one machine at a time can use a given slice. The real shift comes with CXL 3.0, which introduces larger fabric topologies and true memory sharing across systems. In this model, a centralized ‘godbox’ exposes shared, fungible memory to multiple AI nodes, allowing them to work on the same or near-identical data without duplicating it in local RAM. The fabric adds latency, but the penalty approaches that of a NUMA hop, and in exchange it brings large gains in flexibility, bandwidth, and utilization for AI memory infrastructure.
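The difference between exclusive pooling and true sharing can be illustrated with a toy model. The sketch below is not a CXL API: `MemorySlice`, `MemoryPool`, and the host names are hypothetical, and the model ignores coherency, switching, and latency entirely. It only captures the ownership rule described above, where a pooled slice has one owner at a time while a shared slice can be attached by several hosts.

```python
from dataclasses import dataclass, field


@dataclass
class MemorySlice:
    """One region of fabric-attached memory (toy model, not a CXL API)."""
    size_gb: int
    owners: set = field(default_factory=set)


class MemoryPool:
    """Toy 'godbox': shared=False mimics exclusive pooling,
    shared=True mimics multi-host sharing."""

    def __init__(self, slice_sizes_gb, shared):
        self.slices = [MemorySlice(size) for size in slice_sizes_gb]
        self.shared = shared

    def attach(self, host, index):
        """Try to map slice `index` into `host`; return True on success."""
        target = self.slices[index]
        if target.owners and not self.shared and host not in target.owners:
            return False  # exclusive mode: another host already owns this slice
        target.owners.add(host)
        return True

    def detach(self, host, index):
        """Release the slice so that it can be reallocated."""
        self.slices[index].owners.discard(host)


# Exclusive pooling: one owner per slice at a time.
pooled = MemoryPool([256, 256], shared=False)
print(pooled.attach("node-a", 0))  # True
print(pooled.attach("node-b", 0))  # False, node-a holds the slice
pooled.detach("node-a", 0)
print(pooled.attach("node-b", 0))  # True, the slice was released

# Sharing: several hosts map the same slice, so the data is stored once.
shared = MemoryPool([256], shared=True)
shared.attach("node-a", 0)
shared.attach("node-b", 0)
print(sorted(shared.slices[0].owners))  # ['node-a', 'node-b']
```

In the shared case both nodes reference a single 256GB slice instead of each holding a private copy, which is the deduplication benefit the godbox model is after.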

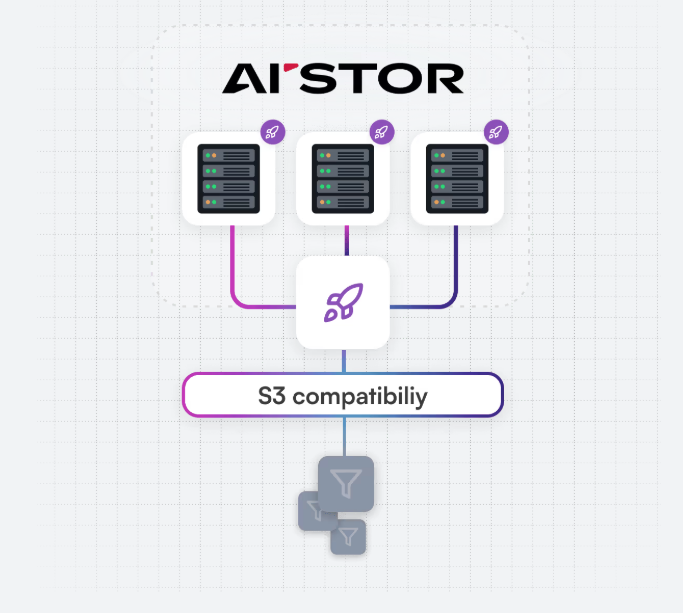

MinIO MemKV: Petabyte-Scale Context Memory for AI Inference

To tackle context persistence at cluster scale, MinIO’s MemKV introduces a petabyte-scale memory tier for AI inference. Traditional setups rely on limited GPU-adjacent DRAM or HBM, forcing systems to drop context between inference steps and recompute it later. This recompute tax grows dramatically with cluster size. MemKV is designed as a shared, persistent context store with microsecond retrieval, tuned for long-context and multi-step AI workloads. Running on platforms such as NVIDIA BlueField-4 STX and integrated with NVIDIA’s networking stack, it provides a common pool of context for entire GPU clusters. In internal benchmarks with 128 GPUs and 128K-token windows, MinIO reports GPU utilization rising from around 50 percent to over 90 percent, along with improved time-to-first-token. By bridging the gap between fast but small memory tiers and slower but massive storage, MemKV creates a new petabyte-scale memory layer tailored to AI inference bottlenecks.
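The recompute tax can be made concrete with a minimal sketch of a shared context cache. This is not MemKV's API or design: `ContextStore`, `get_or_compute`, and `fake_prefill` are hypothetical names, the store is an in-process dictionary rather than a networked tier, and the "context state" is a stand-in list of numbers. The point is only that a lookup keyed by the token prefix lets a second request reuse stored state instead of rebuilding it.

```python
import hashlib


class ContextStore:
    """Toy shared context cache keyed by a hash of the token prefix.
    Hypothetical illustration; not the MemKV interface."""

    def __init__(self):
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(tokens):
        # A stable digest of the token sequence identifies the context.
        return hashlib.sha256(repr(list(tokens)).encode("utf-8")).hexdigest()

    def get_or_compute(self, tokens, compute):
        """Return stored state for `tokens`, computing it only on a miss."""
        key = self._key(tokens)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        self.misses += 1
        state = compute(tokens)  # the expensive step the cache avoids
        self._store[key] = state
        return state


def fake_prefill(tokens):
    """Stand-in for the expensive prefill pass that builds context state."""
    return [t * 0.5 for t in tokens]


store = ContextStore()
prompt = [101, 2023, 2003, 102]
first = store.get_or_compute(prompt, fake_prefill)   # miss: state is computed
second = store.get_or_compute(prompt, fake_prefill)  # hit: state is reused
print(store.misses, store.hits)  # 1 1
```

In a real cluster the store would sit behind the network and serve many GPUs, so a hit on one node could reuse state produced on another; this sketch does not model that.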

From Isolated DIMMs to Memory Fabrics: A New AI Infrastructure Stack

Taken together, these developments signal a broader shift in AI infrastructure design. Micron’s high-density DDR5 RDIMMs push more capacity and bandwidth into each server socket, optimizing local memory footprints and power profiles. CXL-based memory godboxes extend this by turning memory into a shared, fabric-attached resource that can be dynamically pooled and deduplicated across nodes, rather than statically stranded in individual machines. Above that, systems like MinIO MemKV add a petabyte-scale memory tier that keeps AI context hot and accessible at microsecond latencies for entire GPU clusters. This emerging stack recasts memory as a first-class design axis, on par with compute acceleration, networking, and storage. For enterprises deploying demanding AI workloads, efficient scaling will increasingly depend on how well they orchestrate these layered memory innovations to reduce recompute, improve utilization, and decouple performance growth from power and hardware bloat.