From Operating System Feature to Always-There AI Layer

Gemini’s latest moves show that mobile platforms are shifting from app-first to AI-first experiences. On Android 17, Google’s model is no longer just a separate chatbot; it becomes a pervasive layer that understands context across the interface. Instead of opening a dedicated assistant app or copying text between windows, users increasingly interact with an assistant that sits on top of whatever they are already doing. This reflects a broader trend: assistants are evolving from voice-controlled search boxes into default problem-solvers embedded in the system. At the same time, Gemini can now answer questions on Apple’s CarPlay through Google Maps, within the familiar dashboard layout, expanding the role of AI in the car beyond what traditional voice assistants typically handle. Together, these changes mark a pivot toward phones and cars that feel guided by AI rather than just powered by apps.

Gemini on Android 17: Widgets, Chrome Help, and In-App Intelligence

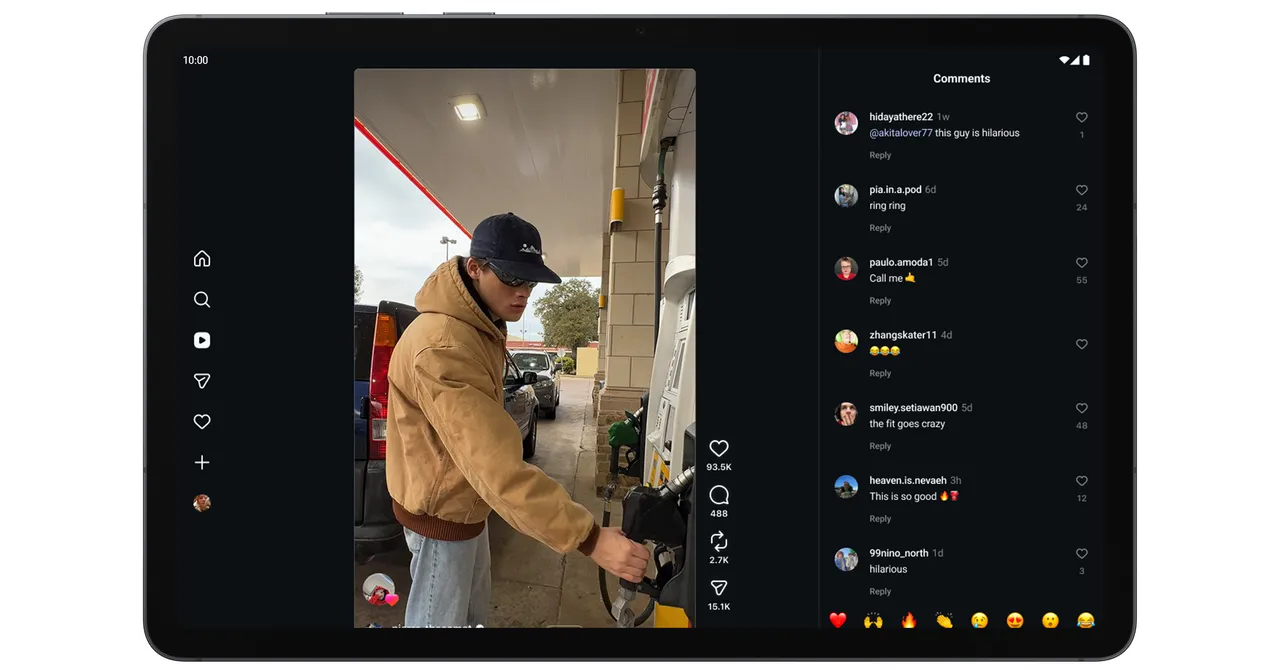

Android 17 bakes Gemini directly into everyday actions, starting with AI widget generation that assembles home-screen widgets from natural-language instructions. Instead of manually configuring layouts and options, users describe what they need and let Gemini create a widget that surfaces the right information or shortcuts. Inside apps, Gemini can offer contextual help, such as stepping in during a Chrome browsing session to help complete a form or a booking, rather than forcing a switch to a separate assistant window. The result is an Android environment where AI quietly removes friction points, from organizing information on the home screen to finishing tedious online tasks. For users, Gemini’s Android 17 features blur the boundary between system UI and assistant: the model becomes a built-in co-pilot that understands where you are, what you are doing, and what you are trying to accomplish.

Gemini CarPlay Integration: A New Kind of In-Car Co-Pilot

On Apple’s platforms, Gemini arrives in a different but equally significant way: through Google Maps on CarPlay. Instead of relying solely on Siri or the standard Maps voice interface, drivers can now turn to Gemini for questions that go beyond basic navigation prompts. The assistant sits alongside the familiar CarPlay dashboard, answering more complex queries and helping interpret information while the Google Maps app remains in focus. This integration shows that AI help can coexist with platform-native tools, giving drivers another option when default assistants fall short. It also reinforces the idea that the car display is evolving from a simple mirroring surface into an intelligent cockpit, where navigation, recommendations, and real-time information are filtered through an AI layer designed to keep attention on the road and reduce the need to reach for a phone.

Competing Ecosystems, Shared Shift Toward AI-First Mobile Use

Google and Apple may compete on platforms, but Gemini’s spread across Android 17 and CarPlay highlights a shared direction: assistants as ubiquitous problem-solvers. On phones, AI-generated widgets and in-app assistance cut down on context switching, making tasks like navigation, shopping, and productivity feel more continuous. In the car, Gemini complements existing tools, stepping in where traditional voice interfaces struggle with nuance or context. For users, this means less time thinking about which app to open and more time simply stating a goal ("book this," "find that," "explain this") and letting the system route the request. As these integrations deepen, the mobile experience shifts from tapping through screens to collaborating with an AI that spans lock screens, browsers, and dashboards, turning the entire ecosystem into one cohesive assistance surface.