Why Google’s Smart Glasses Timeline Matters

Google is targeting a consumer smart glasses launch window around May 2026, turning what once felt like distant science fiction into a near-term product decision. Project Aura demos, highlighted by tech outlets such as CNET and TechCrunch, showcase lightweight, camera-equipped glasses designed for everyday use rather than lab-only experiments. The timing is critical: 2026 is shaping up as an inflection year for augmented reality, with multiple vendors racing to move from research prototypes to consumer-ready devices. For buyers, that means smart glasses are poised to shift from novelty gadgets to a serious alternative to phone screens for navigation, communication, and quick-glance information. The looming launch compresses timelines for consumers, developers, and regulators alike, forcing all of them to confront questions about privacy, ergonomics, and how comfortable we are with wearing our next screen all day long.

Android XR: Google’s Biggest Platform Advantage

The standout strategic move behind Google’s 2026 smart glasses is tight integration with the Android XR platform. Unlike isolated, single-purpose wearables, these glasses plug directly into Google’s broader Android ecosystem, giving developers a familiar toolkit for building spatial, camera-aware apps. According to reports, Android XR roadmaps are already in developers’ hands, and Gemini hooks are planned as well. This combination promises faster app rollouts and more consistent experiences across phones, tablets, and wearables. For consumers, Android XR integration means that existing services such as navigation, messaging, and productivity tools can evolve into ambient overlays instead of starting from scratch. It also gives Google a significant edge over rivals whose glasses rely on custom or closed software stacks, easing the classic chicken-and-egg problem in which hardware launches without useful apps. In practical terms, Android XR turns smart glasses into an extension of Android rather than a separate gadget category.

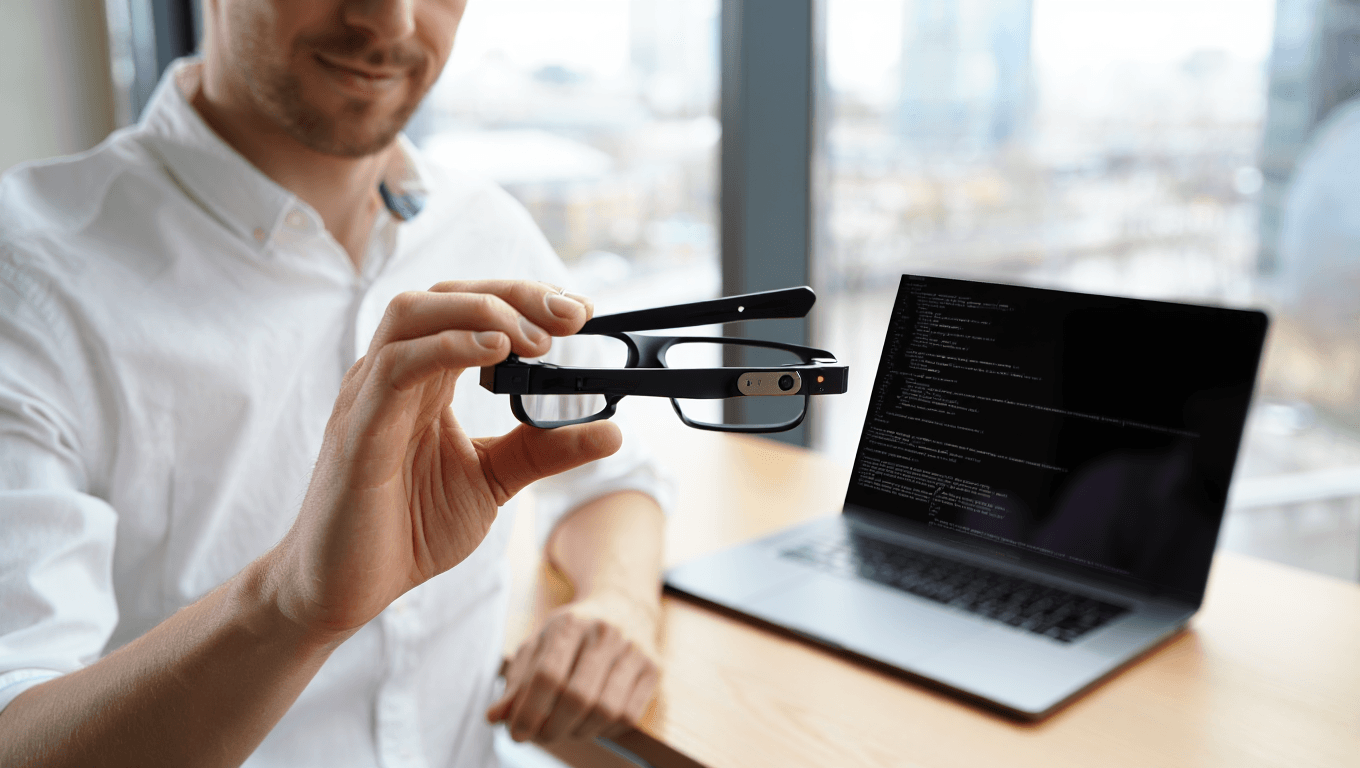

From Enterprise Experiments to Everyday Wear

Earlier smart glasses often focused on industrial or enterprise scenarios, but Google’s current prototypes signal a pivot toward practical consumer wear. Reports describe lightweight designs, day-long wearability, and waveguide displays with fields of view around 60°, all tuned for commuting, in-home use, and remote work rather than factory floors. Project Aura demos emphasize features such as navigation overlays, glanceable notifications, and AR search, underscoring an intent to replace many phone-based micro-tasks with hands-free experiences. This positions the upcoming launch as a mainstream consumer wearables play, not a niche pilot. At the same time, continuous sensing and on-board cameras raise red flags for privacy advocates, who warn about always-on recording and biometric data collection. The tension between convenience and surveillance will shape how quickly everyday users embrace these devices, and whether early adopters see them as liberating tools or intrusive gadgets.

How 2026 Could Redefine the Consumer Wearables Market

The 2026 wave of AR devices, led by Google’s smart glasses and challengers such as Snap, could reshape the broader consumer wearables landscape. Hardware is finally converging on comfortable glasses form factors, while Android XR and similar platforms give developers clear roadmaps for immersive, always-available apps. Component makers report fields of view near 60°, which suggests overlays that are more immersive yet less obtrusive than those of earlier smart glasses. If buyers respond positively, smart glasses may begin to erode reliance on smartphones for quick interactions, turning wearables into the primary interface for navigation, meetings, and short-form content. This shift pressures rivals to choose between aligning with Android XR or betting on their own ecosystems. It also accelerates debates around regulation, battery and heat safety, and public etiquette for recording in shared spaces. In short, 2026 could be the year AR steps out of the lab and onto your face.