Why AI Is Forcing CPUs to Rethink Core Count Scaling

For years, GPUs have dominated highly parallel tasks, but that dominance is starting to be challenged. According to Arm CEO Rene Haas, the rise of “agentic AI,” in which swarms of AI agents handle complex, ongoing tasks, will demand far more CPU cores than current designs provide. Modern CPUs already use multi-core layouts, yet they still lag GPUs in raw parallel throughput. AI workloads change the equation: instead of a few heavy, monolithic applications, there are now many concurrent agents, each needing its own slice of compute. That pattern favors CPU core count scaling, where hundreds of lightweight cores handle diverse jobs simultaneously. As AI systems expand from single assistants to orchestras of agents, CPUs are expected to evolve from a handful of powerful cores into dense clusters of smaller, more efficient ones, built specifically to keep these AI-driven workflows fed and responsive.

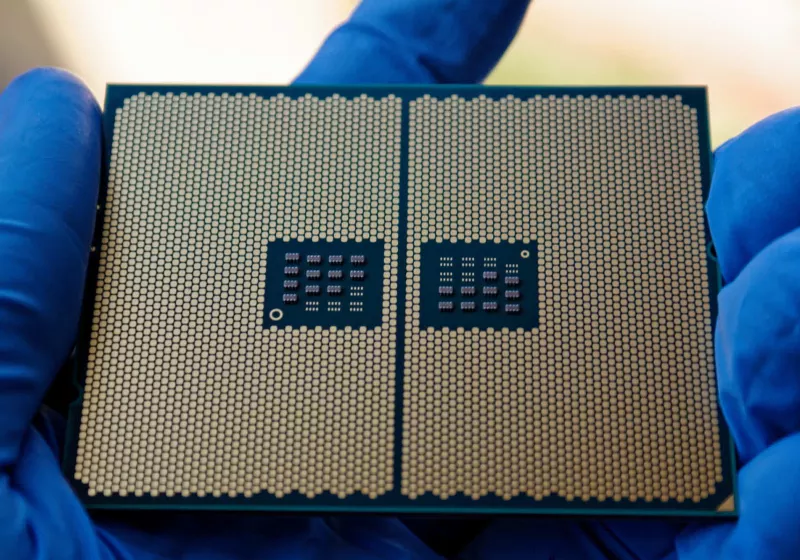

From Dozens to Hundreds: The New AI Processor Architecture

Haas argues that CPUs will eventually surpass GPUs in total compute cores as AI agents proliferate. Today’s GPU-based accelerators, like Nvidia’s latest architectures, are approaching the reticle limit, the maximum die size that current lithography equipment can print in a single exposure. CPUs, however, still have headroom to grow, which opens the door to AI-oriented processor designs with far higher core densities. Arm’s recently launched AGI CPU already scales up to 126 cores, while Intel’s server-class Xeon designs can pack up to 288 efficiency-focused x86 cores. AMD is expected to reach as many as 256 cores in future Zen 6-based Epyc processors, with simultaneous multithreading exposing additional hardware threads on top of that core count. Looking ahead, Haas envisions 256-core and even 512-core CPUs becoming mainstream in servers, leveraging Arm’s power efficiency to keep energy budgets plausible while massively increasing multi-core CPU performance for AI-centric data centers.

How Extreme Core Counts Will Change Everyday Computing

The move toward extreme core counts won’t be limited to cloud data centers. As AI agents become embedded in productivity suites, creative tools, and operating systems, consumer and professional machines will need to adapt. Instead of a single virtual assistant, you may have dozens of background agents scheduling tasks, summarizing documents, optimizing workflows, or monitoring systems. That environment rewards processors that can juggle many small tasks at once. For users, this means that raw single-core speed becomes less important than how well software can exploit multi-core CPU performance. OS schedulers, browsers, and everyday apps will need to distribute jobs efficiently across dozens of cores. Laptops, desktops, and workstations will increasingly be marketed not just by clock speed, but by how well they run AI workloads in parallel without draining battery life or throttling under sustained load.
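
To make the point about single-core speed versus parallel software concrete, here is a minimal sketch based on Amdahl's law, a standard textbook model that the article itself does not cite. The function name `amdahl_speedup` and the sample parallel fractions are illustrative assumptions, not measurements of any real workload or chip.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup predicted by Amdahl's law.

    parallel_fraction: share of the work (0..1) that can run in parallel.
    cores: number of cores available to the parallel share.
    """
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial share never shrinks; only the parallel share is divided by cores.
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Illustrative parallel fractions (assumptions, not benchmark data).
    for p in (0.50, 0.90, 0.99):
        row = ", ".join(
            f"{n} cores: {amdahl_speedup(p, n):.1f}x" for n in (8, 64, 256)
        )
        print(f"parallel fraction {p:.2f} -> {row}")
```

Under this idealized model, a program that is 90% parallel gains less than 10x even on 256 cores, which is one way to see why software structure matters more as core counts climb.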

Optimizing AI Workloads: From Software Design to Toolchains

AI workload optimization will become a core skill for developers and IT teams. With CPUs moving toward hundreds of cores, poorly parallelized software will leave enormous performance on the table. AI frameworks, runtime schedulers, and compilers will need to be redesigned to distribute agent tasks across many threads, minimize contention, and keep cache and memory usage efficient. This goes beyond training large models on GPUs; it’s about orchestrating inference and agent logic on CPUs at scale. Microservice-style architectures, event-driven systems, and fine-grained task queues are likely to become standard patterns for AI-heavy applications. For enterprises, success will hinge on choosing platforms that can exploit CPU core count scaling, from Arm-based servers to x86 clusters, and on tuning their AI stacks so that hundreds of cores stay busy, rather than idle, when fleets of AI agents are running.
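
The fine-grained task-queue pattern mentioned above might look roughly like the following sketch, which fans many small agent tasks out over a worker pool sized to the machine's core count. The names `run_agent_task` and `run_agents` are hypothetical, and the task body is a placeholder computation rather than real agent logic. A thread pool is used here for portability; in CPython, CPU-bound work would usually need `ProcessPoolExecutor` instead to actually occupy multiple cores.

```python
import os
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Dict, Iterable, Optional, Tuple


def run_agent_task(task_id: int) -> Tuple[int, int]:
    """Hypothetical stand-in for one agent's small unit of work."""
    return task_id, sum(i * i for i in range(task_id * 1000))


def run_agents(task_ids: Iterable[int], max_workers: Optional[int] = None) -> Dict[int, int]:
    """Fan tasks out over a pool sized to the core count and gather results."""
    workers = max_workers or os.cpu_count() or 1
    results: Dict[int, int] = {}
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(run_agent_task, t) for t in task_ids]
        # Results are merged only in the calling thread, so the workers
        # share no mutable state and need no locks.
        for future in as_completed(futures):
            task_id, value = future.result()
            results[task_id] = value
    return results


if __name__ == "__main__":
    finished = run_agents(range(1, 33))
    print(f"completed {len(finished)} agent tasks")
```

Keeping each task independent and collecting results in one place is a simple way to limit contention; a real system would also have to consider memory locality and task granularity, which this sketch ignores.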

The Market Shift: AI-Capable CPUs Redefine Design Priorities

The growing demand for AI-capable processors is already reshaping CPU design priorities. Haas expects future server CPUs to ship with up to four times as many cores as current designs, driven by the need to host vast numbers of AI agents. While GPUs will remain crucial for training and high-throughput inference, CPUs are poised to reclaim importance as the orchestration layer where AI logic, control flow, and general-purpose tasks run side by side. This shift could unlock a new business opportunity for chip makers worth more than $100 billion by 2030, as data centers and enterprises upgrade infrastructure to handle agentic AI workloads. For both consumers and professionals, the practical takeaway is clear: the next wave of CPUs will emphasize efficiency, scalability, and parallelism, and the software ecosystem will need to evolve quickly to fully leverage this new multi-core reality.